A few months ago, one of our users sent us a screenshot of an n8n workflow with 14 nodes, a half-dozen sticky notes, and a subject line that just said: "Help." They'd followed a popular n8n web scraping tutorial, got a beautiful 10-row demo working on a test site, and then tried to scrape real competitor pricing across 200 product pages. The result? A broken pagination loop, a 403 error wall, and a silent scheduler that stopped firing after the first Tuesday.

That gap — between the demo and the pipeline — is where most n8n scraping projects go to die. I've spent years building and working in automation, and I can tell you: the scraping part is rarely the hard part. It's everything after the first successful scrape that trips people up. Pagination, scheduling, anti-bot handling, data cleaning, export, and — the big one — maintenance when the site changes its layout for the third time this quarter. This guide covers the full pipeline, from your first HTTP Request node to a recurring, production-ready n8n web scraping workflow. And where n8n's DIY approach hits a wall, I'll show you where AI-powered tools like Thunderbit can save you hours (or days) of frustration.

What Is n8n Web Scraping (and Why Most Tutorials Only Scratch the Surface)

n8n is an open-source, low-code workflow automation platform. Think of it as a visual canvas where you connect "nodes" — each one does a specific job (fetch a web page, parse HTML, send a Slack message, write to Google Sheets) — and chain them together into automated workflows. No heavy coding required, though you can drop in JavaScript when you need it.

"n8n web scraping" means using n8n's built-in HTTP Request and HTML nodes (plus community nodes) to fetch, parse, and process website data inside these automated workflows. The core is two steps: Fetch (the HTTP Request node grabs the raw HTML from a URL) and Parse (the HTML node uses CSS selectors to extract the data points you care about — product names, prices, emails, whatever).

The platform is massive: as of April 2026, n8n has , over 230,000 active users, 9,166+ community workflow templates, and ships a new minor release roughly every week. It raised in March 2025. There's a lot of momentum here.

But there's a gap nobody talks about. The most popular n8n scraping tutorial on dev.to (by Lakshay Nasa, published under the "Extract by Zyte" org) promised pagination in "Part 2." Part 2 did arrive — and the author's own verdict was: "N8N gives us a default Pagination Mode inside the HTTP Request node under Options, and while it sounds convenient, it didn't behave reliably in my experience for typical web scraping use cases." The author ended up routing pagination through a paid third-party API. Meanwhile, n8n forum users keep citing "pagination, throttling, login" as the point where n8n scraping "gets complex easily." This guide is built to fill that gap.

Why n8n Web Scraping Matters for Sales, Ops, and Ecommerce Teams

n8n web scraping isn't a developer hobby. It's a business tool. The sits at roughly $1–1.3 billion in 2025 and is forecast to reach $2–2.3 billion by 2030. Dynamic pricing alone is used by about , and now rely on alternative data — much of it scraped from the web. McKinsey reports that dynamic pricing delivers for adopters.

Here's where n8n's real strength shines: it's not just about getting data. It's about what happens next. n8n lets you chain scraping with downstream actions — CRM updates, Slack alerts, spreadsheet exports, AI analysis — in a single workflow.

| Use Case | Who Benefits | What You Scrape | Business Outcome |
|---|---|---|---|
| Lead generation | Sales teams | Business directories, contact pages | Fill CRM with qualified leads |
| Competitor price monitoring | Ecommerce ops | Product listing pages | Adjust pricing in real time |
| Real estate listing tracking | Real estate agents | Zillow, Realtor, local MLS sites | Spot new listings before competitors |
| Market research | Marketing teams | Review sites, forums, news | Identify trends and customer sentiment |
| Vendor/SKU stock monitoring | Supply chain ops | Supplier product pages | Avoid stockouts, optimize purchasing |

The data shows the ROI is real: plan to increase AI investment in 2025, and automated lead nurturing has been shown to in nine months. If your team is still copy-pasting from websites into spreadsheets, you're leaving money on the table.

Your n8n Web Scraping Toolbox: Core Nodes and Available Solutions

Before you build anything, you need to know what's in the toolbox. Here are the essential n8n nodes for web scraping:

- HTTP Request node: Fetches raw HTML from any URL. Works like a browser making a page request, but returns the code instead of rendering it. Supports GET/POST, headers, batching, and (theoretically) built-in pagination.

- HTML node (formerly "HTML Extract"): Parses HTML using CSS selectors to pull out specific data — titles, prices, links, images, whatever you need.

- Code node: Lets you write JavaScript snippets for data cleaning, URL normalization, deduplication, and custom logic.

- Edit Fields (Set) node: Restructures or renames data fields for downstream nodes.

- Split Out node: Breaks arrays into individual items for processing.

- Convert to File node: Exports structured data to CSV, JSON, etc.

- Loop Over Items node: Iterates through lists (critical for pagination — more on this below).

- Schedule Trigger: Fires your workflow on a cron schedule.

- Error Trigger: Alerts you when a workflow fails (essential for production).

For advanced scraping — sites with JavaScript rendering or heavy anti-bot protection — you'll need community nodes:

| Approach | Best For | Skill Level | Handles JS-Rendered Sites | Anti-Bot Handling |
|---|---|---|---|---|
| n8n HTTP Request + HTML nodes | Static sites, APIs | Beginner–Intermediate | No | Manual (headers, proxies) |
| n8n + ScrapeNinja/Firecrawl community node | Dynamic/protected sites | Intermediate | Yes | Built-in (proxy rotation, CAPTCHA) |
| n8n + Headless Browser (Puppeteer) | Complex JS interactions | Advanced | Yes | Partial (depends on setup) |
| Thunderbit (AI Web Scraper) | Any site, non-technical users | Beginner | Yes (Browser or Cloud mode) | Built-in (inherits browser session or cloud handling) |

There is no native headless-browser node in n8n as of v2.15.1. Every JS-rendering scrape requires either a community node or an external API.

A quick word about Thunderbit: it's an AI-powered web scraper our team built. You click "AI Suggest Fields," then "Scrape," and get structured data, with no CSS selectors, no node configuration, and no maintenance. I'll show you where it fits (and where n8n is the better choice) throughout this guide.

Step-by-Step: Build Your First n8n Web Scraping Workflow

With the toolbox covered, here's how to build a working n8n web scraper from scratch. I'll use a product listing page as the example — the kind of thing you'd actually scrape for price monitoring or competitor research.

Before You Start:

- Difficulty: Beginner–Intermediate

- Time Required: ~20–30 minutes

- What You'll Need: n8n (self-hosted or Cloud), a target URL, Chrome browser (for finding CSS selectors)

Step 1: Create a New Workflow and Add a Manual Trigger

Open n8n, click "New Workflow," and name it something descriptive — like "Competitor Price Scraper." Drag in a Manual Trigger node. (We'll upgrade to a scheduled trigger later.)

You should see a single node on your canvas, ready to fire when you click "Test Workflow."

Step 2: Fetch the Page with the HTTP Request Node

Add an HTTP Request node and connect it to the Manual Trigger. Set the method to GET and enter your target URL (e.g., https://example.com/products).

Now, the critical step most tutorials skip: add a realistic User-Agent header. By default, n8n sends axios/xx as its user agent — which is instantly recognizable as a bot. Under "Headers," add:

| Header Name | Value |
|---|---|
| User-Agent | `Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36` |
| Accept | `text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8` |

If you're scraping multiple URLs, enable Batching (under Options) and set a wait time of 1–3 seconds between requests. This helps avoid triggering rate limits.

Run the node. You should see raw HTML in the output panel.
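For readers who think in code, the request this node sends can be approximated in plain JavaScript. The sketch below is not n8n's internals: `buildRequestOptions` and `randomDelayMs` are hypothetical helper names, and the 1–3 second default simply mirrors the batching wait suggested above.

```javascript
// Browser-like headers, mirroring the table in this step.
const BROWSER_HEADERS = {
  'User-Agent':
    'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 ' +
    '(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36',
  'Accept': 'text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8',
};

// Hypothetical helper: an options object you could pass to fetch(url, options).
function buildRequestOptions(extraHeaders = {}) {
  return { method: 'GET', headers: { ...BROWSER_HEADERS, ...extraHeaders } };
}

// Hypothetical helper: a wait in the range [minMs, maxMs) between requests.
// `rng` is injectable so the function can be checked deterministically.
function randomDelayMs(minMs = 1000, maxMs = 3000, rng = Math.random) {
  return Math.floor(minMs + rng() * (maxMs - minMs));
}
```

Randomizing the delay, rather than waiting a fixed interval, makes the request pattern look a little less mechanical, though it does nothing about fingerprinting.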

Step 3: Parse the Data with the HTML Node

Connect an HTML node to the HTTP Request output. Set the operation to Extract HTML Content.

To find the right CSS selectors, open your target page in Chrome, right-click the data you want (e.g., a product title), and choose "Inspect." In the Elements panel, right-click the highlighted HTML element and select "Copy → Copy selector."

Configure your extraction values like this:

| Key | CSS Selector | Return Value |
|---|---|---|
| product_name | .product-title | Text |
| price | .price-current | Text |
| url | .product-link | Attribute: href |

Execute the node. You should see a table of structured data — product names, prices, and URLs — in the output.

Step 4: Clean and Normalize with the Code Node

Raw scraped data is messy. Prices come with extra whitespace, URLs might be relative, and text fields have trailing newlines. Add a Code node and connect it to the HTML node.

Here's a simple JavaScript snippet to clean things up:

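    // Code node, "Run Once for All Items" mode: `items` holds every incoming row.
    return items.map(item => {
      const d = item.json;
      return {
        json: {
          // Strip stray whitespace and newlines from the title.
          product_name: (d.product_name || '').trim(),
          // Keep digits and the decimal point only, e.g. "$ 29.99\n" becomes 29.99.
          price: parseFloat((d.price || '').replace(/[^0-9.]/g, '')),
          // Prefix relative links with the site origin; leave absolute URLs alone.
          url: d.url && d.url.startsWith('http') ? d.url : `https://example.com${d.url || ''}`
        }
      };
    });

This step is essential for production-quality data. Skip it and your spreadsheet will be full of "$ 29.99\n" entries.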
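To sanity-check the cleaning logic outside n8n, the same transformation can be written as a plain function and run in Node. This is a sketch: `cleanRow` and the `baseUrl` parameter are names introduced here for illustration, and returning `null` for an unparseable price is a design choice, not something n8n requires.

```javascript
// Hypothetical standalone version of the Code node logic above.
function cleanRow(row, baseUrl = 'https://example.com') {
  const rawPrice = String(row.price || '').replace(/[^0-9.]/g, '');
  const price = parseFloat(rawPrice);
  const url = row.url || '';
  return {
    product_name: String(row.product_name || '').trim(),
    // parseFloat returns NaN for empty input; store null instead.
    price: Number.isNaN(price) ? null : price,
    // Leave absolute URLs alone; prefix relative ones with the site origin.
    url: url.startsWith('http') ? url : `${baseUrl}${url}`,
  };
}

const sample = { product_name: '  Blue Widget\n', price: '$ 29.99\n', url: '/p/42' };
console.log(cleanRow(sample));
```

Note that a European-style price such as `1.299,99` would be misread by this regex; locale-aware parsing is out of scope for the sketch.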

Step 5: Export to Google Sheets, Airtable, or CSV

Connect a Google Sheets node (or Airtable, or Convert to File for CSV). Authenticate with your Google account, select your spreadsheet and sheet, and map the fields from the Code node output to your column headers.

Run the full workflow. You should see clean, structured data land in your spreadsheet.

Side note: Thunderbit exports to Google Sheets, Airtable, Notion, and Excel with zero node setup. If you don't need the full workflow chain and just want the data, that's a useful shortcut.

The Part Every n8n Web Scraping Tutorial Skips: Complete Pagination Workflows

Pagination is the #1 gap in n8n scraping content — and the #1 source of frustration in the n8n community forums.

There are two main pagination patterns:

- Click-based / URL-increment pagination: pages like ?page=1, ?page=2, etc.

- Infinite scroll: content loads as you scroll down (think Twitter, Instagram, or many modern product catalogs).

Click-Based Pagination in n8n (URL Incrementing with Loop Nodes)

The built-in Pagination option under the HTTP Request node's Options menu sounds convenient. In practice, it's unreliable. The most popular n8n scraping tutorial author (Lakshay Nasa) tried it and wrote: "it didn't behave reliably in my experience." Forum users report it , , and failing to detect the last page.

The reliable approach: build the URL list explicitly in a Code node, then iterate with Loop Over Items.

Here's how:

- Add a Code node that generates your page URLs:

      const base = 'https://example.com/products';
      const totalPages = 10; // or detect dynamically
      return Array.from({ length: totalPages }, (_, i) => ({
        json: { url: `${base}?page=${i + 1}` }
      }));

- Connect a Loop Over Items node to iterate through the list.

- Inside the loop, add your HTTP Request node (set the URL to {{ $json.url }}), then the HTML node for parsing.

- Add a Wait node (1–3 seconds, randomized) inside the loop to avoid 429 rate limits.

- After the loop, aggregate results and export to Google Sheets or CSV.

The full chain: Code (build URLs) → Loop Over Items → HTTP Request → HTML → Wait → (loop back) → Aggregate → Export.
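The hard-coded page count can often be derived instead. One approach, sketched here under the assumption that the first page exposes pagination links whose `href` values contain `?page=N`, is to extract those links with the HTML node and take the largest page number. `detectTotalPages` and `buildPageUrls` are hypothetical names.

```javascript
// Given href strings scraped from pagination links, return the highest page number.
function detectTotalPages(hrefs, param = 'page') {
  const pages = hrefs
    .map(href => {
      // A dummy base lets URL parse relative links such as "?page=3".
      const value = new URL(href, 'https://example.com').searchParams.get(param);
      return Number.parseInt(value, 10);
    })
    .filter(Number.isInteger);
  // Fall back to a single page when no pagination links were found.
  return pages.length ? Math.max(...pages) : 1;
}

function buildPageUrls(base, totalPages) {
  return Array.from({ length: totalPages }, (_, i) => `${base}?page=${i + 1}`);
}

const total = detectTotalPages(['?page=2', '/products?page=12', '/about']);
console.log(buildPageUrls('https://example.com/products', total).length);
```

Inside an n8n Code node you would wrap each URL as `{ json: { url } }` before handing the list to Loop Over Items.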

One gotcha: the Loop Over Items node has a where nested loops silently skip items. If you're paginating and enriching subpages, test carefully — the "done" count may not match your input count.
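A cheap guard against silently skipped items is to compare the URLs you queued with the URLs that actually produced results. The sketch below assumes each result row carries the `source_url` it came from, a field you would have to add yourself; `findMissingUrls` is a hypothetical helper.

```javascript
// Return every queued URL that has no matching result row.
function findMissingUrls(queuedUrls, results) {
  const seen = new Set(results.map(r => r.source_url));
  return queuedUrls.filter(url => !seen.has(url));
}

const queued = [
  'https://example.com/products?page=1',
  'https://example.com/products?page=2',
];
const results = [
  { source_url: 'https://example.com/products?page=1', product_name: 'Blue Widget' },
];

const missing = findMissingUrls(queued, results);
if (missing.length > 0) {
  console.warn(`Loop skipped ${missing.length} page(s):`, missing);
}
```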

Infinite Scroll Pagination: Why n8n's Built-In Nodes Struggle

Infinite scroll pages load content via JavaScript as you scroll. The HTTP Request node fetches only the initial HTML — it can't execute JavaScript or trigger scroll events. You have two options:

- Use a headless browser community node (e.g., or ) to render the page and simulate scrolling.

- Use a scraping API (ScrapeNinja, Firecrawl, ZenRows) with JS rendering enabled.

Both add significant complexity. You're looking at 30–60+ minutes of setup per site, plus ongoing maintenance.

How Thunderbit Handles Pagination Without Configuration

I'm biased, but the contrast is stark:

| Capability | n8n (DIY Workflow) | Thunderbit |
|---|---|---|
| Click-based pagination | Manual loop node setup, URL incrementing | Automatic: detects and follows pagination |
| Infinite scroll pages | Requires headless browser + community node | Built-in support, no config needed |
| Setup effort | 30–60 min per site | 2 clicks |
| Pages per batch | Sequential (one at a time) | 50 pages simultaneously (Cloud Scraping) |

If you're scraping 200 product pages across 10 paginated listings, n8n will take you a full afternoon. Thunderbit will take about two minutes. That's not a knock on n8n — it's just a different tool for a different job.

Set It and Forget It: Cron-Triggered n8n Web Scraping Pipelines

One-off scraping is useful, but the real power of n8n web scraping is recurring, automated data collection. Surprisingly, almost no n8n scraping tutorial covers the Schedule Trigger for scraping — even though it's one of the most requested features in the community.

Building a Daily Price Monitoring Pipeline

Replace your Manual Trigger with a Schedule Trigger node. You can use the n8n UI ("Every day at 8:00 AM") or a cron expression (0 8 * * *).
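If cron syntax is unfamiliar, the five fields read left to right as minute, hour, day of month, month, and day of week. The sketch below only labels the fields of a standard five-field expression; it is not a cron parser, and `describeCron` is a hypothetical name.

```javascript
// Split a five-field cron expression into named parts.
function describeCron(expression) {
  const fields = expression.trim().split(/\s+/);
  if (fields.length !== 5) {
    throw new Error(`Expected 5 fields, got ${fields.length}`);
  }
  const [minute, hour, dayOfMonth, month, dayOfWeek] = fields;
  return { minute, hour, dayOfMonth, month, dayOfWeek };
}

// Daily at 08:00: minute 0, hour 8, every day, every month, every weekday.
console.log(describeCron('0 8 * * *'));
// Mondays at 09:00: same shape, with day of week set to 1.
console.log(describeCron('0 9 * * 1'));
```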

The full workflow chain:

- Schedule Trigger (daily at 8 AM)

- Code node (generate paginated URLs)

- Loop Over Items → HTTP Request → HTML → Wait (scrape all pages)

- Code node (clean data, normalize prices)

- Google Sheets (append new rows)

- IF node (did any price drop below threshold?)

- Slack (send alert if yes)
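The IF step in this chain needs a number to compare against. One way to produce it, sketched here as a plain function you could adapt for a Code node, is to join today's rows with the previous run by URL and compute the percentage change. `findPriceDrops`, the row shape, and the 5% default threshold are all assumptions for illustration.

```javascript
// Return rows whose price fell by at least minDropPct since the previous run.
function findPriceDrops(previousRows, currentRows, minDropPct = 5) {
  const previousByUrl = new Map(previousRows.map(r => [r.url, r.price]));
  const drops = [];
  for (const row of currentRows) {
    const oldPrice = previousByUrl.get(row.url);
    // Skip products with no usable baseline or no usable current price.
    if (typeof oldPrice !== 'number' || oldPrice <= 0) continue;
    if (typeof row.price !== 'number') continue;
    const dropPct = ((oldPrice - row.price) / oldPrice) * 100;
    if (dropPct >= minDropPct) {
      drops.push({ ...row, oldPrice, dropPct: Math.round(dropPct * 10) / 10 });
    }
  }
  return drops;
}

const yesterday = [{ url: '/p/1', price: 100 }, { url: '/p/2', price: 50 }];
const today = [
  { url: '/p/1', price: 90 },
  { url: '/p/2', price: 49 },
  { url: '/p/3', price: 20 },
];
console.log(findPriceDrops(yesterday, today));
```

With this sample data only `/p/1` qualifies: `/p/2` fell by 2%, and `/p/3` has no baseline. The IF node can then simply check whether the returned list is non-empty.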

Wire an Error Trigger workflow alongside it that fires on any failed execution and pings Slack. Otherwise, when selectors break (and they will), you'll discover it three weeks later when the report is empty.

Two non-obvious requirements:

- n8n must be running 24/7. A self-hosted instance on a laptop won't fire when the lid is closed. Use a server, Docker, or n8n Cloud.

- After every workflow edit, toggle the workflow off and back on. n8n Cloud has a where schedulers silently de-register after edits, with zero error feedback.

Building a Weekly Lead Extraction Pipeline

Same pattern, different target: Schedule Trigger (every Monday at 9 AM) → HTTP Request (business directory) → HTML (extract name, phone, email) → Code (deduplicate, clean formatting) → Airtable or HubSpot push.
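The deduplicate-and-clean step can be as simple as normalizing the fields you match on and keeping the first row per key. The sketch below keys on lowercase email and falls back to digits-only phone; `normalizeLead`, `dedupeLeads`, and the field names are assumptions about what your HTML node extracts.

```javascript
// Trim names, lowercase emails, and reduce phone numbers to digits.
function normalizeLead(lead) {
  return {
    name: String(lead.name || '').trim(),
    email: String(lead.email || '').trim().toLowerCase(),
    phone: String(lead.phone || '').replace(/\D/g, ''),
  };
}

// Keep the first lead seen for each email (or phone, when email is missing).
function dedupeLeads(leads) {
  const byKey = new Map();
  for (const lead of leads.map(normalizeLead)) {
    const key = lead.email || lead.phone;
    if (!key) continue; // nothing to contact, nothing to match on
    if (!byKey.has(key)) byKey.set(key, lead);
  }
  return [...byKey.values()];
}

console.log(dedupeLeads([
  { name: ' Acme Co ', email: 'Sales@Acme.test', phone: '(555) 010-0000' },
  { name: 'Acme Co', email: 'sales@acme.test', phone: '555-010-0000' },
  { name: 'No Contact Ltd' },
]));
```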

The maintenance burden is the under-discussed cost here. If the directory site changes its layout, your CSS selectors break and the workflow fails silently. HasData estimates that of the initial build time should be budgeted for ongoing maintenance per year in any selector-based pipeline. Once you're maintaining ~20 sites, the overhead is real.

Thunderbit's Scheduled Scraper: The No-Code Alternative

Thunderbit's Scheduled Scraper lets you describe the interval in plain language (e.g., "every Monday at 9 AM"), input your URLs, and click "Schedule." It runs in the cloud — no hosting, no cron expressions, no silent de-registrations.

| Dimension | n8n Scheduled Workflow | Thunderbit Scheduled Scraper |
|---|---|---|
| Schedule setup | Cron expression or n8n schedule UI | Describe in plain language |
| Data cleaning | Manual Code node required | AI cleans/labels/translates automatically |
| Export destinations | Requires integration nodes | Google Sheets, Airtable, Notion, Excel (free) |
| Hosting requirement | Self-hosted or n8n Cloud | None (runs in cloud) |
| Maintenance on site changes | Selectors break, manual fix needed | AI reads site fresh each time |

That last row is the one that matters most. Forum users say it plainly: "most of them are fine until a site changes its layout." Thunderbit's AI-based approach eliminates that pain because it doesn't rely on fixed CSS selectors.

When Your n8n Web Scraper Gets Blocked: An Anti-Bot Troubleshooting Guide

Getting blocked is the #1 frustration after pagination. The standard advice — "add a User-Agent header" — is about as useful as locking a screen door against a hurricane.

Per the Imperva 2025 Bad Bot Report, , and of it is malicious. Anti-bot vendors (Cloudflare, Akamai, DataDome, HUMAN, PerimeterX) have responded with TLS fingerprinting, JavaScript challenges, and behavioral analysis. The n8n HTTP Request node, which uses the Axios library under the hood, produces a distinct, easily-recognizable, non-browser TLS fingerprint. Changing the User-Agent header does nothing — the gives you away before any HTTP header is even read.

The Anti-Bot Decision Tree

Here's a systematic troubleshooting framework — not just "add a User-Agent":

Request blocked?

- 403 Forbidden → Add User-Agent + Accept headers (see Step 2 above) → Still blocked?

  - Yes → Add residential proxy rotation → Still blocked?

    - Yes → Switch to a scraping API (ScrapeNinja, Firecrawl, ZenRows) or headless browser community node

    - No → Proceed

  - No → Proceed

- CAPTCHA appears → Use a scraping API with built-in CAPTCHA solving (e.g., )

- Empty response (JS-rendered content) → Use headless browser community node or scraping API with JS rendering

- Rate limited (429 error) → Enable batching on the HTTP Request node, set wait time to 2–5 seconds between batches, reduce concurrency
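The same decision tree can be expressed as a small routing function that inspects a response and names the next step. This is a sketch: the `classifyResponse` name, the action labels, and the 500-character threshold for a suspiciously empty body are arbitrary choices made for illustration, and the CAPTCHA check is a crude keyword match.

```javascript
// Map a response to the next troubleshooting action from the tree above.
function classifyResponse({ status, body = '' }) {
  // 429: enable batching, lengthen waits, reduce concurrency.
  if (status === 429) return 'slow_down';
  // 403: work up the ladder of headers, residential proxies, scraping API.
  if (status === 403) return 'escalate';
  // A challenge page: hand off to a scraping API with CAPTCHA solving.
  if (/captcha/i.test(body)) return 'captcha_api';
  // A 200 with almost no markup usually means the content is JS-rendered.
  if (status === 200 && body.trim().length < 500) return 'needs_js_rendering';
  return status >= 200 && status < 300 ? 'ok' : 'retry_or_inspect';
}

console.log(classifyResponse({ status: 429 }));
console.log(classifyResponse({ status: 200, body: '<html><body></body></html>' }));
```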

One more gotcha: n8n has a where the HTTP Request node can't properly tunnel HTTPS through an HTTP proxy. The Axios library fails on TLS handshake, even though curl in the same container works fine. If you're using a proxy and getting mysterious connection errors, this is likely why.

Why Thunderbit Sidesteps Most Anti-Bot Issues

Thunderbit offers two scraping modes:

- Browser Scraping: Runs inside your actual Chrome browser, inheriting your session cookies, login state, and browser fingerprint. This sidesteps most anti-bot measures that block server-side requests — because the request is a real browser.

- Cloud Scraping: For publicly available sites, Thunderbit's cloud handles anti-bot at scale — .

If you're spending more time fighting Cloudflare than analyzing data, this is the practical alternative.

Honest Take: When n8n Web Scraping Works — and When to Use Something Else

n8n is a great platform. But it's not the right tool for every scraping job, and no competitor article is honest about this. Users are literally asking on forums: "how difficult is it to create a web scraper with n8n?" and "which scraping tool works best with n8n?"

Where n8n Web Scraping Excels

- Multi-step workflows that combine scraping with downstream processing — CRM updates, Slack alerts, AI analysis, database writes. This is n8n's core strength.

- Cases where scraping is one node in a larger automation chain — scrape → enrich → filter → push to CRM.

- Technical users comfortable with CSS selectors and node-based logic.

- Scenarios requiring custom data transformation between scraping and storage.

Where n8n Web Scraping Gets Painful

- Non-technical users who just need data fast. The learning curve for node setup, CSS selector discovery, and debugging is steep for business users.

- Sites with heavy anti-bot protection. Proxy and API add-ons add cost and complexity.

- Maintenance when site layouts change. CSS selectors break, workflows fail silently.

- Bulk scraping across many different site types. Each site needs its own selector configuration.

- Subpage enrichment. Requires building separate sub-workflows in n8n.

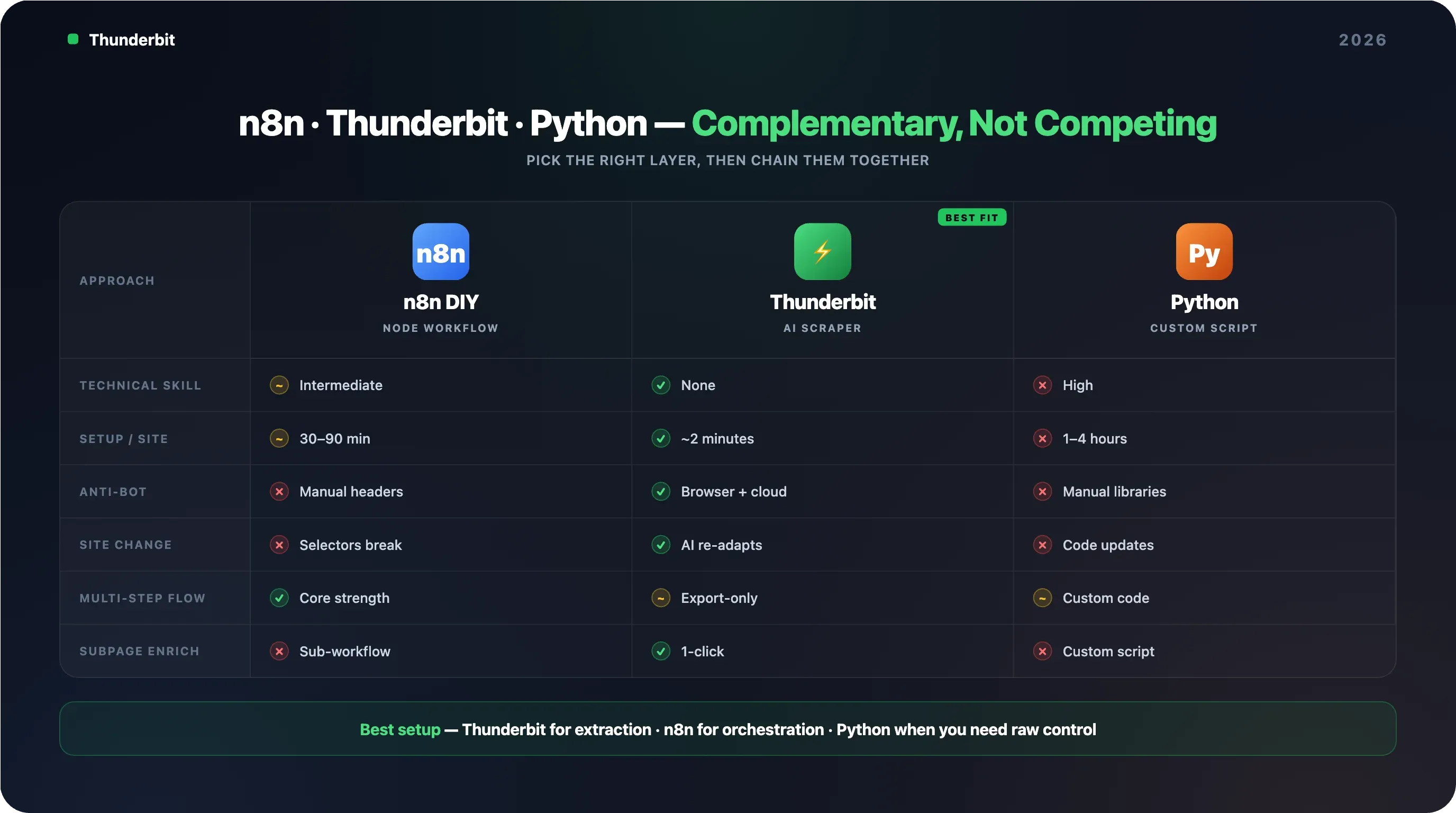

Side-by-Side: n8n vs. Thunderbit vs. Python Scripts

| Factor | n8n DIY Scraping | Thunderbit | Python Script |
|---|---|---|---|
| Technical skill needed | Intermediate (nodes + CSS selectors) | None (AI suggests fields) | High (coding) |
| Setup time per new site | 30–90 min | ~2 minutes | 1–4 hours |
| Anti-bot handling | Manual (headers, proxies, APIs) | Built-in (browser/cloud modes) | Manual (libraries) |
| Maintenance when site changes | Manual selector updates | Zero (AI adapts automatically) | Manual code updates |
| Multi-step workflow support | Excellent (core strength) | Export to Sheets/Airtable/Notion | Requires custom code |
| Cost at scale | n8n hosting + proxy/API costs | Credit-based (~1 credit per row) | Server + proxy costs |
| Subpage enrichment | Manual (build a separate sub-workflow) | 1-click subpage scraping | Custom scripting |

The takeaway: use n8n when scraping is part of a complex, multi-step automation chain. Use Thunderbit when you need data fast without building workflows. Use Python when you need maximum control and have developer resources. They're not competitors — they're complementary.

Real-World n8n Web Scraping Workflows You Can Actually Copy

Forum users keep asking: "Has anyone chained these into multi-step workflows?" Here are three specific workflows, each with an actual node sequence you can build today.

Workflow 1: Ecommerce Competitor Price Monitor

Goal: Track competitor prices daily and get alerted when they drop.

Node chain: Schedule Trigger (daily, 8 AM) → Code (generate paginated URLs) → Loop Over Items → HTTP Request → HTML (extract product name, price, availability) → Wait (2s) → (loop back) → Code (clean data, normalize prices) → Google Sheets (append rows) → IF (price below threshold?) → Slack (send alert)

Complexity: 8–10 nodes, 30–60 min setup per competitor site.

Thunderbit shortcut: Thunderbit's Scheduled Scraper + can achieve similar results in minutes, with free export to Google Sheets.

Workflow 2: Sales Lead Generation Pipeline

Goal: Scrape a business directory weekly, clean and categorize leads, push to CRM.

Node chain: Schedule Trigger (weekly, Monday 9 AM) → HTTP Request (directory listing page) → HTML (extract name, phone, email, address) → Code (deduplicate, clean formatting) → OpenAI/Gemini node (categorize by industry) → HubSpot node (create contacts)

Note: n8n has a native — useful for CRM pushes. But the scraping and cleaning steps still require manual CSS selector work.

Thunderbit shortcut: Thunderbit's free and Phone Number Extractor can pull contact info in 1 click without building a workflow. Its AI labeling can categorize leads during extraction. Users who don't need the full automation chain can skip the n8n setup entirely.

Workflow 3: Real Estate New Listing Tracker

Goal: Spot new listings on Zillow or Realtor.com weekly and send a digest email.

Node chain: Schedule Trigger (weekly) → HTTP Request (listing pages) → HTML (extract address, price, bedrooms, link) → Code (clean data) → Google Sheets (append) → Code (compare against previous week's data, flag new listings) → IF (new listings found?) → Gmail/SendGrid (send digest)
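The compare step in this chain is a set difference on whatever uniquely identifies a listing. The sketch below uses the listing link as that key, which is an assumption (a site-specific listing ID would be sturdier if one is available); `findNewListings` is a hypothetical helper.

```javascript
// Return listings whose link did not appear in the previous run.
function findNewListings(previousListings, currentListings) {
  const known = new Set(previousListings.map(l => l.link));
  return currentListings.filter(l => l.link && !known.has(l.link));
}

const lastWeek = [{ link: '/homes/101', price: 450000 }];
const thisWeek = [
  { link: '/homes/101', price: 445000 },
  { link: '/homes/202', price: 610000 },
];
console.log(findNewListings(lastWeek, thisWeek));
```

A price change on an existing link is deliberately not treated as new here; that case belongs to the price-drop logic instead.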

Note: Thunderbit has — no CSS selectors needed. Users who need the full automation chain (scrape → compare → alert) benefit from n8n; users who just need the listing data benefit from Thunderbit.

For more workflow inspiration, n8n's community library has templates for , , and .

Tips for Keeping Your n8n Web Scraping Pipelines Running Smoothly

Production scraping is 20% building and 80% maintaining.

Use Batching and Delays to Avoid Rate Limits

Enable batching on the HTTP Request node and set a wait time of 1–3 seconds between batches. Concurrent requests are the fastest way to get IP-banned. A little patience here saves a lot of pain later.

Monitor Workflow Executions for Silent Failures

Use n8n's Executions tab to check for failed runs. Scraped data might silently return empty if a site changes its layout — the workflow "succeeds" but your spreadsheet is full of blanks.

Set up an Error Trigger workflow that fires on any failed execution and sends a Slack or email alert. This is non-negotiable for production pipelines.
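Because an empty scrape does not count as a failure, the Error Trigger only helps if something turns blanks into an error. One option is a check right after parsing that throws when too many rows lack required fields. The sketch below is a plain function; `assertScrapeLooksHealthy`, the required field names, and the 20% tolerance are assumptions to tune for your data.

```javascript
// Throw when the scrape is empty or too many rows are missing required fields.
function assertScrapeLooksHealthy(
  rows,
  requiredFields = ['product_name', 'price'],
  maxBlankRatio = 0.2
) {
  if (rows.length === 0) {
    throw new Error('Scrape returned 0 rows; selectors may be broken.');
  }
  const blankRows = rows.filter(row =>
    requiredFields.some(f => row[f] === undefined || row[f] === null || row[f] === '')
  ).length;
  if (blankRows / rows.length > maxBlankRatio) {
    throw new Error(`${blankRows}/${rows.length} rows are missing required fields.`);
  }
  return rows;
}
```

Throwing is the point: a thrown error marks the execution as failed, which is what lets a failure alert fire instead of a blank spreadsheet going unnoticed.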

Store Your CSS Selectors Externally for Easy Updates

Keep CSS selectors in a Google Sheet or n8n environment variables so you can update them without editing the workflow itself. When a site layout changes, you only need to update the selector in one place.
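In practice this means treating selectors as data. The sketch below shows one possible shape: a lookup keyed by hostname that a workflow could consult before extraction. `SELECTORS`, its keys, and `selectorsFor` are hypothetical; in a real workflow the object would be loaded from your sheet or environment variable rather than inlined.

```javascript
// Hypothetical selector config; in production, load this from a sheet or env var.
const SELECTORS = {
  'example.com': {
    product_name: '.product-title',
    price: '.price-current',
    url: '.product-link',
  },
};

// Look up the selector set for a page, ignoring a leading "www.".
function selectorsFor(pageUrl, config = SELECTORS) {
  const host = new URL(pageUrl).hostname.replace(/^www\./, '');
  const entry = config[host];
  if (!entry) throw new Error(`No selectors configured for ${host}`);
  return entry;
}

console.log(selectorsFor('https://www.example.com/products?page=2').price);
```

Failing loudly on an unknown host is intentional, since a missing entry is usually a configuration mistake rather than something to paper over.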

Know When to Switch to an AI-Powered Scraper

If you find yourself constantly updating CSS selectors, fighting anti-bot measures, or spending more time maintaining scrapers than using the data, consider an AI-powered tool like that reads the site fresh each time and adapts automatically. The works well: Thunderbit handles the fragile extraction layer (the part that breaks every time a site updates a <div>), exports to Google Sheets or Airtable, and n8n picks up the new rows via its native Sheets/Airtable trigger to handle the orchestration — CRM updates, alerts, conditional logic, multi-system fan-out.

Wrapping Up: Build the Pipeline That Matches Your Team

n8n web scraping is powerful when you need scraping as one step in a larger automation workflow. But it requires technical setup, ongoing maintenance, and patience with pagination, anti-bot, and scheduling configuration. This guide covered the full pipeline: your first workflow, pagination (the part every tutorial skips), scheduling, anti-bot troubleshooting, an honest assessment of where n8n fits, and real-world workflows you can copy.

Here's how I think about it:

- Use n8n when scraping is part of a complex, multi-step automation chain — CRM updates, Slack alerts, AI enrichment, conditional routing.

- Use Thunderbit when you need data fast without building workflows: AI handles field suggestion, pagination, anti-bot, and export in 2 clicks.

- Use Python when you need maximum control and have developer resources.

And honestly, the best setup for many teams is both: Thunderbit for the extraction, n8n for the orchestration. If you want to see how AI-powered scraping compares to your n8n workflow, lets you experiment on a small scale — and the installs in seconds. For video walkthroughs and workflow ideas, check out the .

FAQs

Can n8n scrape JavaScript-heavy websites?

Not with the built-in HTTP Request node alone. The HTTP Request node fetches raw HTML and cannot execute JavaScript. For JS-rendered sites, you need a community node like or a scraping API integration (ScrapeNinja, Firecrawl) that renders JavaScript server-side. Thunderbit handles JS-heavy sites natively in both Browser and Cloud scraping modes.

Is n8n web scraping free?

n8n's self-hosted version is free and open source. n8n Cloud previously had a free tier, but as of April 2026, it offers only a 14-day trial — after that, plans start at $24/month for 2,500 executions. Scraping protected sites may also require paid proxy services ($5–15/GB for residential proxies) or scraping APIs ($49–200+/month depending on volume).

How does n8n web scraping compare to Thunderbit?

n8n is better for multi-step automations where scraping is one part of a larger workflow (e.g., scrape → enrich → filter → push to CRM → alert on Slack). Thunderbit is better for fast, no-code data extraction with AI-powered field detection, automatic pagination, and zero maintenance when sites change. Many teams use both together — Thunderbit for extraction, n8n for orchestration.

Can I scrape data from sites that require login using n8n?

Yes, but it requires configuring cookies or session tokens in the HTTP Request node, which can be tricky to maintain. Thunderbit's Browser Scraping mode inherits the user's logged-in Chrome session automatically — if you're logged in, Thunderbit can scrape what you see.
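The cookie approach amounts to sending a `Cookie` header with each request. As a sketch, assuming you have copied the relevant cookie names and values from a logged-in browser session (the names and values below are placeholders), the header value can be assembled like this:

```javascript
// Join name/value pairs into a single Cookie header value.
function buildCookieHeader(cookies) {
  return Object.entries(cookies)
    .map(([name, value]) => `${name}=${value}`)
    .join('; ');
}

const headers = {
  Cookie: buildCookieHeader({ sessionid: 'abc123', csrftoken: 'xyz789' }),
};
console.log(headers.Cookie); // sessionid=abc123; csrftoken=xyz789
```

Session cookies expire, so whatever value you paste in will eventually need refreshing, which is the maintenance cost mentioned above.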

What should I do when my n8n scraper suddenly stops returning data?

First, check the n8n Executions tab for errors. The most common cause is a site layout change that broke your CSS selectors — the workflow "succeeds" but returns empty fields. Verify your selectors in Chrome's Inspect tool, update them in your workflow (or in your external selector sheet), and re-test. If you're hitting anti-bot blocks, follow the troubleshooting decision tree in this guide. For long-term reliability, consider an AI-powered scraper like Thunderbit that adapts to layout changes automatically.
