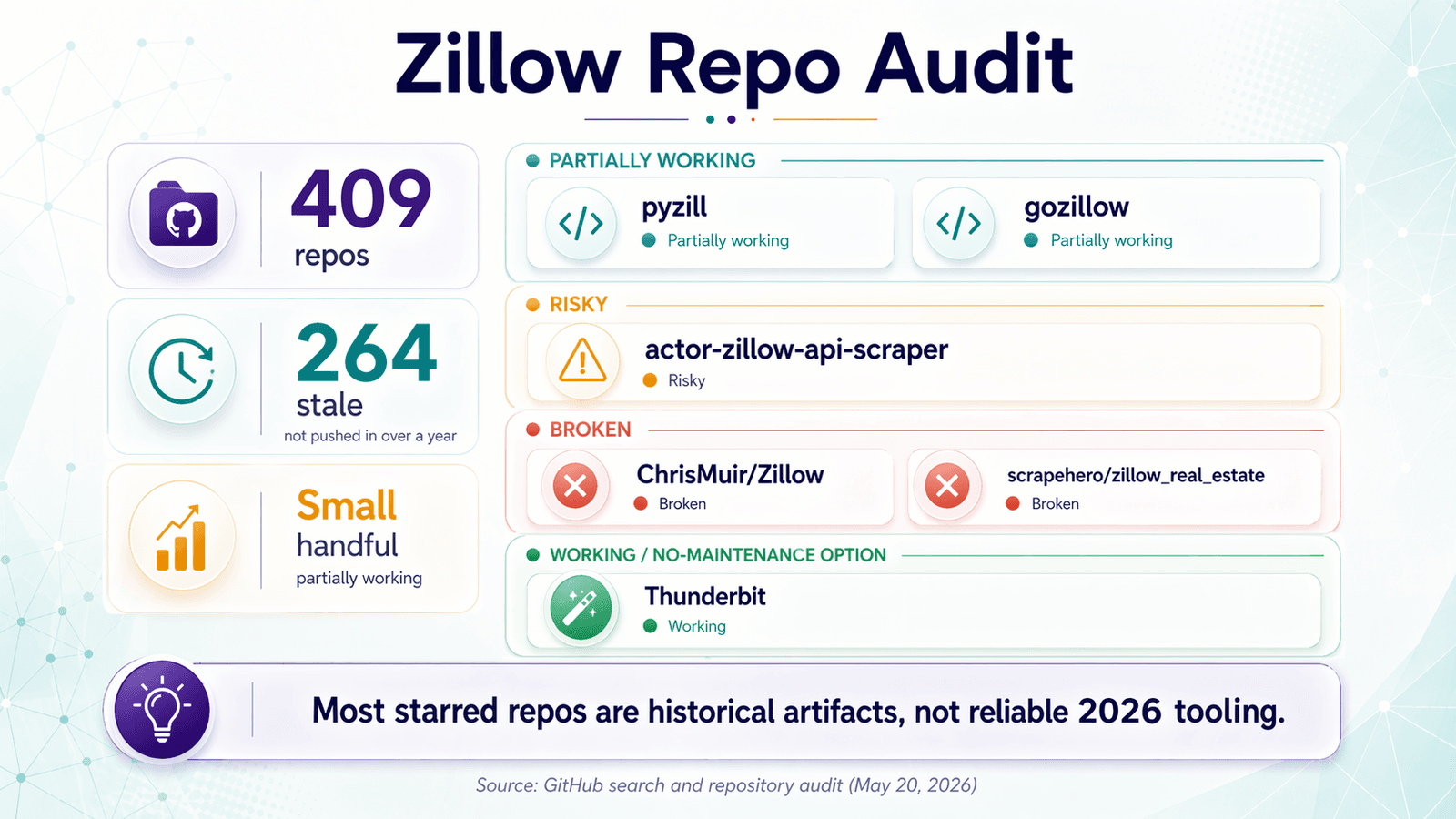

If you search "zillow scraper github" right now, you’ll find no shortage of repos. Sounds promising, until you realize that most of them haven’t been updated in over a year.

I’ve spent a lot of time auditing these repos, testing them against live Zillow pages, and reading through the GitHub issues and Reddit threads where developers vent about what broke this time. The pattern is consistent: a repo gets a burst of stars when it first works, then quietly dies when Zillow changes its DOM, tightens its anti-bot stack, or deprecates an internal API endpoint. One frustrated developer on Reddit summed it up perfectly: “scraping projects need to be on constant maintenance due to changes on the page or api.” This article is the audit I wish I’d had before cloning my first Zillow scraper repo: an honest, up-to-date look at what actually runs in 2026, what breaks and why, and when it makes more sense to skip the GitHub rabbit hole entirely and use a tool like Thunderbit instead.

What Is a Zillow Scraper GitHub Project (and Who Needs One)?

A “Zillow scraper” is any script or tool that automatically collects property listing data from Zillow’s website — things like price, address, beds, baths, square footage, Zestimate, listing status, days on market, and sometimes deeper detail-page data like price history or tax records. People search GitHub specifically because they want something free, open-source, and customizable. Fork a repo, tweak the fields, pipe the output into your own pipeline. In theory, it’s the best of both worlds.

The audiences are pretty distinct:

- Real estate investors tracking deals across zip codes — they want price drops, Zestimate gaps, and days-on-market data to filter for opportunities

- Agents building prospecting lists — they need listing URLs, agent contact info, and listing status changes

- Market researchers and analysts pulling structured comps — address, price per square foot, sold-vs-list price, inventory counts

- Ops teams monitoring pricing or inventory across markets at regular intervals

The common thread: everyone wants structured, repeatable data — not a one-time copy-paste job. That’s what makes scraping attractive. It’s also what makes the maintenance burden so painful when a repo stops working.

The 2026 Zillow Scraper GitHub Repo Audit: What Actually Still Runs

I searched GitHub for the most-starred and most-forked Zillow scraper repos, checked last commit dates, read through open issues, and tested them against live Zillow pages. The methodology is simple: if a repo can return accurate listing data from Zillow search results or detail pages as of April 2026, it gets a “working” stamp. If it runs but returns incomplete data or hits blocks after a few pages, it’s “partially working.” If it fails outright or the maintainer says it’s dead, it’s “broken.”

The harsh reality: most repos that looked promising 12–18 months ago have silently broken.

Curated Comparison Table: Top Zillow Scraper GitHub Repos

| Repo | Language | Stars | Last Push | Approach | 2026 Status | Key Limitation |
|---|---|---|---|---|---|---|
| johnbalvin/pyzill | Python | 96 | 2025-08-28 | Zillow search/detail extraction + proxy support | Partially working | README says “Use rotating residential proxies.” Issues include Cloudflare blocks, 403s via proxyrack, CAPTCHA even with proxies. |
| johnbalvin/gozillow | Go | 10 | 2025-02-23 | Go library for property URL/ID and search methods | Partially working | Same maintainer as pyzill, but low adoption and thin issue surface. Confidence is lower. |
| cermak-petr/actor-zillow-api-scraper | JavaScript | 59 | 2022-05-04 | Hosted actor using internal Zillow API recursion | Partially working (risky) | Clever design: recursively splits map bounds to bypass result limits. But GitHub repo hasn’t been pushed since 2022. One issue title: “is this still working?” |
| ChrisMuir/Zillow | Python | 170 | 2019-06-09 | Selenium | Broken | README explicitly says: “As of 2019, this code no longer works for most users.” Zillow detects webdrivers, serves endless CAPTCHAs. |
| scrapehero/zillow_real_estate | Python | 152 | 2018-02-26 | requests + lxml | Broken | Issues include “returns empty dataset,” “No output in .csv file,” and “Is this repo still updated?” |
| faithfulalabi/Zillow_Scraper | Python/notebook | 30 | 2021-07-02 | Hardcoded Selenium | Broken | Educational project hardcoded to Arlington, TX rentals. Not a general-purpose scraper. |
| eswan18/zillow_scraper | Python | 10 | 2021-04-10 | Scraper + processing pipeline | Broken | Repo is archived. |
| Thunderbit | No-code (Chrome extension) | N/A | Continuously updated | AI reads page structure + pre-built Zillow template | Working | No GitHub repo to maintain. AI adapts when Zillow changes layout. Free tier available. |

The pattern is clear: the GitHub ecosystem still contains living code, but most of the visible repos are tutorials, historical artifacts, or thin wrappers around a proxy-dependent workflow.

What “Working” vs. “Broken” vs. “Partially Working” Means

I want to be precise about these labels because they matter more than star counts:

- Working: successfully returns accurate listing data from Zillow search pages and/or detail pages as of the testing date, without the maintainer flagging the project as dead

- Partially working: runs but returns incomplete data, hits blocks after a few pages, or only works on certain page types — typically requires proxy infrastructure and ongoing tuning

- Broken: fails to return data, throws errors, or has been explicitly flagged as non-functional by the maintainer or community

A repo with 170 stars and a “broken” status is worse than a repo with 10 stars that actually returns data. Popularity is historical context, not a quality signal.

Why Zillow Scraper GitHub Projects Break (The 5 Common Failure Modes)

Understanding why Zillow scrapers break saves you more time than any repo README: once you know the failure modes, you can either build a more resilient scraper or decide the maintenance tax isn’t worth it.

1. DOM Restructuring (Zillow’s React Frontend)

Zillow’s frontend is built on React and changes frequently. Class names, component structure, and data attributes shift without warning. A scraper that targets div.list-card-price today might find that class name gone tomorrow. As one source notes, “the class names vary from page to page” on Zillow.

The result: your script runs, returns empty fields, and you don’t notice until you’ve been collecting blanks for a week.

2. Internal API and GraphQL Endpoint Changes

The smarter repos bypass HTML entirely and hit Zillow’s internal GraphQL or REST APIs. The cermak-petr/actor-zillow-api-scraper repo, for example, explicitly uses Zillow’s internal API and recursively splits map bounds to get around result limits. It’s a clever design, but Zillow periodically restructures these endpoints. When they do, your scraper returns 404s or empty JSON with no error message.

This is a subtler form of breakage. The code is fine. The target moved.
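To make the bounds-splitting idea concrete, here is a minimal sketch of the general technique. It is not the code from any repo in the audit: the `split_bounds` function, the `count_results` callback, and the 500-result cap are all hypothetical names and numbers I chose for illustration, and `fake_count` stands in for a real search call.

```python
from typing import Callable, List, Tuple

Bounds = Tuple[float, float, float, float]  # (west, south, east, north)


def split_bounds(
    bounds: Bounds,
    count_results: Callable[[Bounds], int],
    max_results: int,
    max_depth: int = 8,
) -> List[Bounds]:
    """Recursively quarter a map rectangle until each tile fits under the cap."""
    if max_depth == 0 or count_results(bounds) <= max_results:
        return [bounds]
    west, south, east, north = bounds
    mid_lon = (west + east) / 2
    mid_lat = (south + north) / 2
    quadrants = [
        (west, south, mid_lon, mid_lat),   # south-west
        (mid_lon, south, east, mid_lat),   # south-east
        (west, mid_lat, mid_lon, north),   # north-west
        (mid_lon, mid_lat, east, north),   # north-east
    ]
    tiles: List[Bounds] = []
    for quad in quadrants:
        tiles.extend(split_bounds(quad, count_results, max_results, max_depth - 1))
    return tiles


def fake_count(bounds: Bounds) -> int:
    """Illustrative stand-in: pretends listings are spread evenly, 1000 per square degree."""
    west, south, east, north = bounds
    return int((east - west) * (north - south) * 1000)


tiles = split_bounds((-88.0, 41.0, -87.0, 42.0), fake_count, max_results=500)
print(len(tiles))  # 4 tiles, each reporting 250 results
```

The `max_depth` guard matters in practice: if the count call starts returning garbage after an endpoint change, the recursion stops instead of splitting forever.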

3. Anti-Bot and CAPTCHA Escalation

Zillow has progressively ramped up bot detection. In my own testing in April 2026, plain requests.get() calls to both zillow.com and zillow.com/homes/Chicago,-IL_rb/ returned 403 responses from CloudFront, even with a Chrome-like user-agent and Accept-Language header. Community reports align: one user noted their reverse-engineered API flow started returning 403s once request volume climbed.

Scrapers that work fine at low volume may suddenly fail when scaled up. That’s a nasty surprise when you’re trying to track 200 listings across 3 zip codes.

4. Login Walls Around Premium Data

Certain data points — Zestimate details, tax records, some price history — are gated behind authentication. Open-source scrapers rarely handle login flows, so these fields come back empty. If your use case depends on price history or tax assessed values, you’ll hit this wall quickly.

5. Dependency Rot and Unmaintained Repos

Issue threads on the older repos include install problems like No module named 'unicodecsv'. One README documents manual driver and GIS dependency pain. Python library updates break compatibility. Repos that haven’t been updated in 6+ months often fail on fresh installs before they even get to Zillow’s anti-bot stack.

Zillow Anti-Bot Defenses in 2026: What You’re Really Up Against

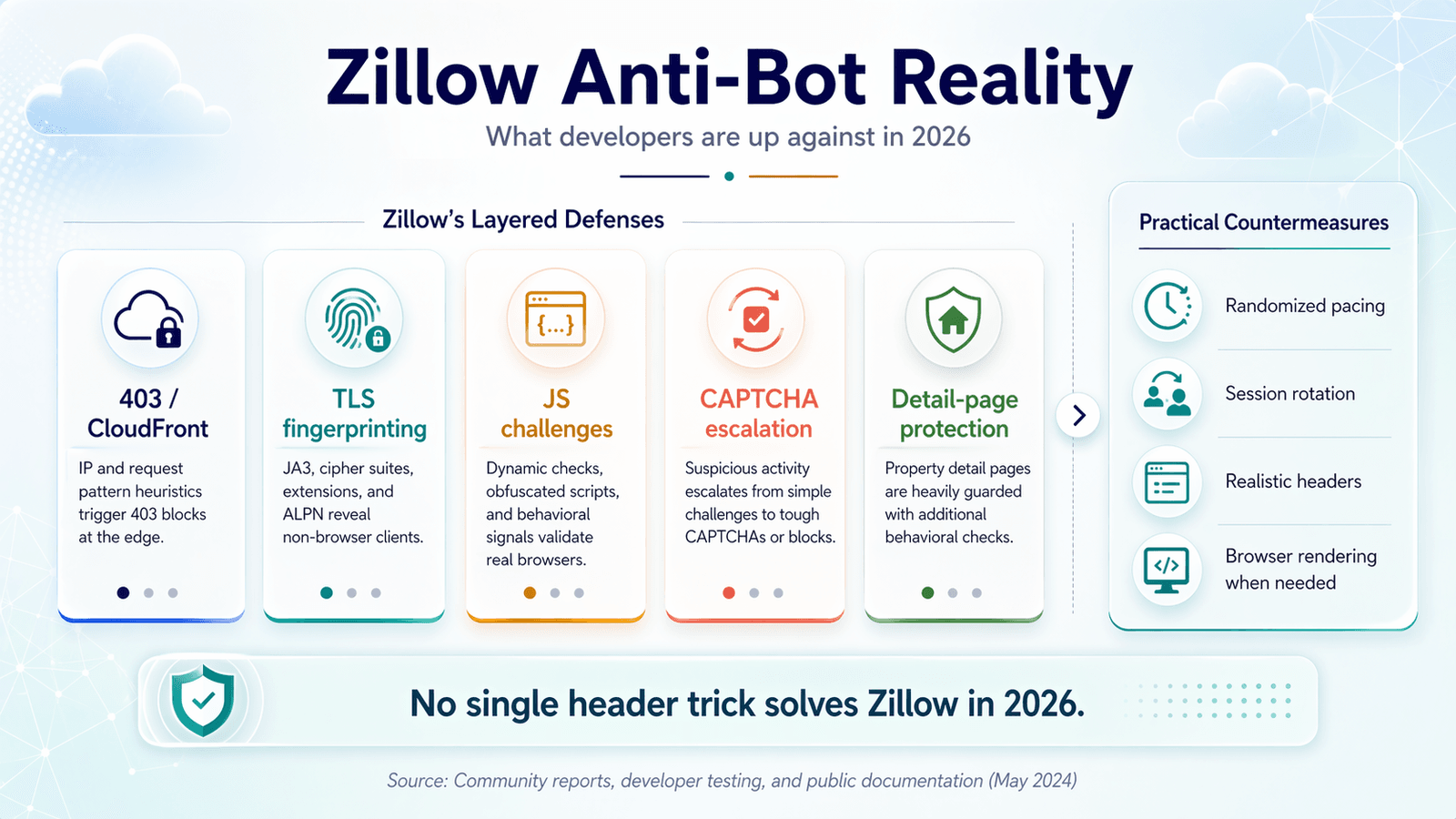

“Just use proxies and rotate headers” was adequate advice in 2022. It’s not in 2026.

Beyond IP Blocking: TLS Fingerprinting and JS Challenges

Zillow doesn’t just block IPs. Community reports describe Zillow sitting behind Cloudflare with bot protection that goes beyond simple rate limiting. TLS fingerprinting identifies non-browser clients by their “digital handshake”, meaning the way they negotiate encryption. Even with a fresh proxy, your scraper can be flagged if its TLS signature doesn’t match a real Chrome browser.

JavaScript challenges add another layer. Headless browsers that don’t fully execute JS or that expose automation markers (like navigator.webdriver = true) get caught.

Search Pages vs. Property Detail Pages: Different Protection Levels

Not all Zillow pages are equally defended. One scraper’s documentation explicitly distinguishes a “Fast Mode” that skips detail pages from a slower “Full Mode” that includes richer data. Thunderbit’s workflow also separates the initial listings scrape from “Scrape Subpages” for detail-page enrichment.

The practical takeaway: your scraper may work fine on search results but fail on individual property pages, where Zillow applies heavier protection because the data is higher-value and more frequently scraped.

The HTTP-Only Crowd: Why Some Devs Avoid Browser Automation

There’s a strong contingent of developers who explicitly want HTTP-only approaches — no Selenium, no Playwright, no Puppeteer. The reasons are practical: browser automation is slow, resource-heavy, and harder to deploy at scale.

The honest assessment: in 2026, pure HTTP approaches against Zillow are increasingly difficult without sophisticated header and fingerprint management. The community evidence points toward browser rendering becoming the standard, not the exception, for targets like Zillow.

Concrete Anti-Block Best Practices for Zillow

If you’re going the DIY route, here’s what actually helps (and what doesn’t):

- Randomized request pacing that mimics human browsing — not fixed delays, but variable intervals with session-like behavior

- Realistic header configurations including Accept-Language, Sec-CH-UA family headers, and proper referer chains. Be honest, though: realistic headers are necessary, not sufficient

- Session rotation: don’t reuse the same proxy/cookie combination for hundreds of requests

- Know when to switch to browser rendering — if your HTTP-only approach is returning 403s after 50 requests, you’re fighting a losing battle

Don’t believe any article that implies one magic header block solves Zillow in 2026.
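For the pacing point above, here is a rough sketch of what “variable intervals with session-like behavior” could look like. Every number in it (2 to 6 seconds between requests, a longer break every 8 to 15 requests) is an assumption I picked for illustration, not a threshold Zillow publishes, and `human_like_delays` is a hypothetical helper name.

```python
import random
from typing import Iterator, Optional, Tuple


def human_like_delays(
    n_requests: int,
    base_range: Tuple[float, float] = (2.0, 6.0),     # assumed seconds between requests
    pause_every: Tuple[int, int] = (8, 15),           # assumed requests between long breaks
    pause_range: Tuple[float, float] = (20.0, 60.0),  # assumed extra seconds per long break
    rng: Optional[random.Random] = None,
) -> Iterator[float]:
    """Yield one delay per request: jittered short gaps plus occasional long pauses."""
    rng = rng or random.Random()
    next_pause = rng.randint(*pause_every)
    for i in range(1, n_requests + 1):
        delay = rng.uniform(*base_range)
        if i == next_pause:
            # Simulate a reader stepping away, then schedule the next break.
            delay += rng.uniform(*pause_range)
            next_pause = i + rng.randint(*pause_every)
        yield delay


delays = list(human_like_delays(30, rng=random.Random(42)))
```

In a real loop you would call `time.sleep(delay)` before each fetch. Pacing like this lowers the odds of tripping volume-based rules; it does nothing about TLS fingerprinting or JavaScript challenges.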

Thunderbit’s cloud scraping handles all of this automatically (rotating infrastructure across US/EU/Asia, managing rendering and anti-bot), so users skip the proxy-configuration rabbit hole entirely. The point is where the operational burden sits.

Best Practices to Future-Proof Your Zillow Scraper GitHub Setup

For readers who decide to go the GitHub/DIY route, here are the practices that separate scrapers that last months from scrapers that break in days.

Decouple Selectors from Brittle Class Names

If a repo depends on Zillow’s auto-generated CSS class names, treat that as a red flag. Those names change frequently — sometimes weekly. Instead:

- Target elements by aria-label, data-* attributes, or nearby heading text

- Use text-content-based selectors where possible

- Prefer JSON-first extraction over HTML parsing when Zillow serves structured data in the page source
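Here is a small sketch of the JSON-first idea using only the standard library. It assumes the page embeds its data in a script tag with a known id; the `__NEXT_DATA__` id, the sample HTML, and the `props.listings` shape are all illustrative assumptions, so inspect the live page source before relying on any of them.

```python
import json
from html.parser import HTMLParser
from typing import List, Optional


class ScriptJSONExtractor(HTMLParser):
    """Collect the text content of the <script> tag whose id matches script_id."""

    def __init__(self, script_id: str) -> None:
        super().__init__()
        self.script_id = script_id
        self._capturing = False
        self.chunks: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("id") == self.script_id:
            self._capturing = True

    def handle_endtag(self, tag):
        if tag == "script":
            self._capturing = False

    def handle_data(self, data):
        if self._capturing:
            self.chunks.append(data)


def extract_embedded_json(html: str, script_id: str) -> Optional[dict]:
    """Return the parsed JSON payload, or None if the tag is missing or malformed."""
    parser = ScriptJSONExtractor(script_id)
    parser.feed(html)
    raw = "".join(parser.chunks).strip()
    if not raw:
        return None
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        return None


# Made-up sample page: the class name is the brittle part, the JSON is the stable part.
SAMPLE_HTML = """
<html><body>
<div class="x7f3a-price">$450,000</div>
<script id="__NEXT_DATA__" type="application/json">
{"props": {"listings": [{"address": "123 Main St", "price": 450000}]}}
</script>
</body></html>
"""

data = extract_embedded_json(SAMPLE_HTML, "__NEXT_DATA__")
first = data["props"]["listings"][0]
print(first["price"])  # 450000
```

Returning `None` instead of raising keeps the failure visible to whatever health check wraps the scraper, rather than silently producing blank fields.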

Add Automated Health Checks

Treat Zillow scraping like production monitoring, not like a one-time script. Set up a cron job or GitHub Action that:

- Runs your scraper against one known listing daily

- Validates the output schema (are all expected fields present and non-empty?)

- Triggers an alert if the output is malformed or empty

This catches breakage within 24 hours instead of weeks.
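A health check along these lines can be very small. The sketch below covers only the schema-validation step; the field names in `REQUIRED_FIELDS` and the `check_listing` helper are hypothetical and should be swapped for whatever your scraper actually emits.

```python
from typing import Any, Dict, List, Sequence

# Assumed field names for illustration; match these to your own output schema.
REQUIRED_FIELDS = ("address", "price", "beds", "baths", "sqft", "listing_url")


def check_listing(record: Dict[str, Any], required: Sequence[str] = REQUIRED_FIELDS) -> List[str]:
    """Return a list of problems; an empty list means the record looks healthy."""
    problems = []
    for field in required:
        value = record.get(field)
        if value is None or (isinstance(value, str) and not value.strip()):
            problems.append(f"missing or empty field: {field}")
    price = record.get("price")
    if isinstance(price, (int, float)) and price <= 0:
        problems.append("price is not positive")
    return problems


healthy = {
    "address": "123 Main St", "price": 450000, "beds": 3,
    "baths": 2, "sqft": 1500, "listing_url": "https://example.com/listing/1",
}
broken = {
    "address": "123 Main St", "price": None, "beds": 3,
    "baths": "", "sqft": 1500, "listing_url": "https://example.com/listing/1",
}

print(check_listing(healthy))  # []
print(check_listing(broken))   # ['missing or empty field: price', 'missing or empty field: baths']
```

In a cron job or GitHub Action, exiting with a non-zero status whenever the list is non-empty is usually enough to trigger the platform’s built-in failure notification.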

Pin Dependency Versions and Use Virtual Environments

Always pin your Python (or Node) dependencies to specific versions. Use virtual environments or Docker containers. The older repos in our audit show how quickly install rot sets in — broken dependencies are often the first thing to fail, before Zillow’s anti-bot stack even enters the picture.

Keep Scrape Volume Conservative

Community reports of 403s appearing only after request volume grows aren’t universal, but they’re a credible reminder that volume changes the behavior of a scraper that seemed fine in testing. Spread your requests across sessions. Use randomized delays. Don’t try to scrape 10,000 listings in one run.

Know When DIY Isn’t Worth the Effort

If you’re spending more time maintaining your scraper than analyzing your data, the economics have flipped. That’s not a failure — it’s a signal to consider a managed solution.

Zillow Scraper GitHub (DIY) vs. No-Code Tools: An Honest Decision Matrix

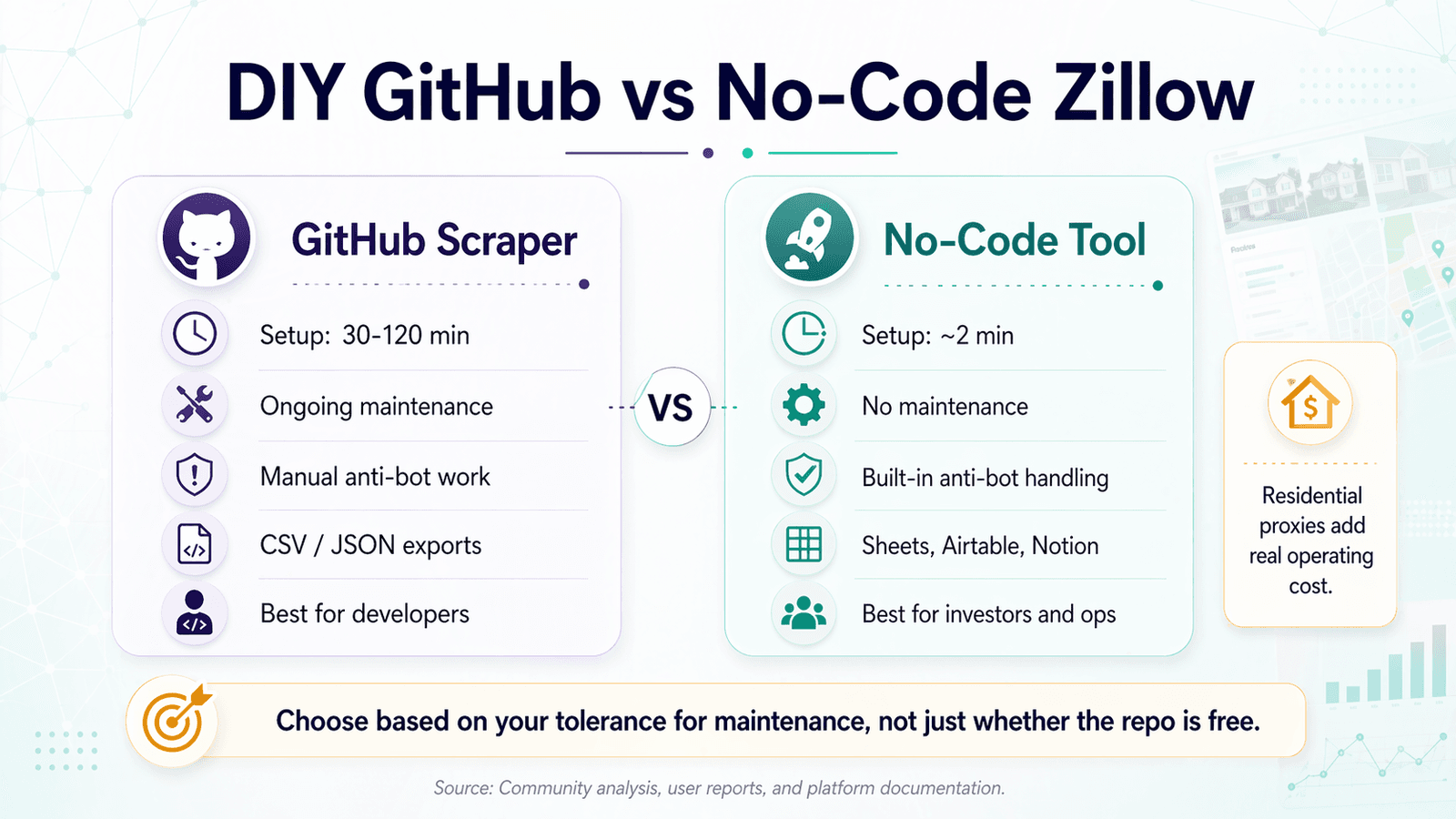

The audience for “zillow scraper github” splits cleanly into two groups: developers who want code ownership, and real estate professionals who just want data in a spreadsheet. Both are valid. Here’s how the tradeoffs actually shake out.

Side-by-Side Comparison Table

| Criteria | GitHub Scraper (Python) | No-Code Tool (e.g., Thunderbit) |
|---|---|---|
| Setup time | 30–120 min (env, deps, proxies) | ~2 min (install extension, click scrape) |
| Maintenance | Ongoing: breaks when Zillow changes | None: AI adapts to page layout automatically |
| Anti-bot handling | Manual (proxies, headers, delays) | Built-in (cloud scraping, rotating infra) |
| Data fields | Custom: whatever you code | AI-suggested or template-based |
| Export options | CSV/JSON via code | Excel, Google Sheets, Airtable, Notion (free) |
| Cost | Free (code) + proxy costs ($3.50–$8/GB for residential) | Free tier available; credit-based beyond |
| Customization ceiling | Unlimited (you own the code) | High (field AI prompts, subpage scraping) but bounded |

The Proxy Cost Reality Check

The “free repo” argument gets less persuasive once you factor in proxy costs. Current public pricing for residential proxies:

| Provider | Pricing (as of April 2026) |
|---|---|
| Webshare | $3.50/GB for 1 GB, lower at larger bundles |
| Decodo | ~$3.50/GB pay-as-you-go |
| Bright Data | $8/GB nominal, $4/GB with current promo |
| Oxylabs | Starting at $8/GB |

The repo may be free, but a proxy-backed Zillow workflow usually is not.
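To put rough numbers on that, here is a back-of-the-envelope estimate using the per-GB prices from the table. The 2 MB per page figure is purely an assumption for illustration (browser-rendered pages can weigh far more), and `proxy_cost_usd` is a hypothetical helper.

```python
def proxy_cost_usd(pages: int, mb_per_page: float, usd_per_gb: float) -> float:
    """Estimate proxy spend from page count, average page weight, and per-GB price."""
    gigabytes = pages * mb_per_page / 1000  # decimal GB, as providers typically bill
    return round(gigabytes * usd_per_gb, 2)


# 200 listings scraped weekly is roughly 800 page loads a month.
monthly_pages = 200 * 4

low = proxy_cost_usd(monthly_pages, mb_per_page=2.0, usd_per_gb=3.50)
high = proxy_cost_usd(monthly_pages, mb_per_page=2.0, usd_per_gb=8.00)
print(low, high)  # 5.6 12.8
```

Under those assumptions the bill is small, but it scales linearly with page weight and retries, so blocked requests that have to be repeated inflate it quickly.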

When to Choose a GitHub Repo

- You enjoy writing and maintaining code

- You need hyper-specific customization (custom data transformations, proprietary pipeline integration)

- You have the time and technical skills to handle breakage

- You’re willing to manage proxy infrastructure

When to Choose Thunderbit

- You need reliable data today with zero setup or maintenance

- You’re a real estate agent, investor, or ops team member — not a developer

- You want to export to Excel, Google Sheets, Airtable, or Notion without writing export code

- You want subpage scraping (enriching listings with detail-page data) without additional configuration

- You want scheduled scraping described in plain language

Step-by-Step: How to Scrape Zillow with Thunderbit (No GitHub Required)

The no-code path looks nothing like the GitHub setup process.

Step 1: Install the Thunderbit Chrome Extension

Head to the Chrome Web Store, install Thunderbit, and sign up. There’s a free tier.

Step 2: Navigate to Zillow and Open Thunderbit

Go to any Zillow search results page — say, homes for sale in a specific zip code. Click the Thunderbit extension icon in your browser toolbar.

Step 3: Use the Zillow Instant Scraper Template (or AI Suggest Fields)

Thunderbit has a pre-built Zillow template: no configuration needed, just one click. The template covers the standard fields: Address, Price, Beds, Baths, Square Feet, Agent Name, Agent Phone, and Listing URL.

Alternatively, click “AI Suggest Fields” and the AI reads the page and suggests columns. In my experience, it typically detects the main listing fields, including Zestimate.

Step 4: Click Scrape and Review Results

Click “Scrape.” Thunderbit handles pagination, anti-bot, and data structuring automatically. You get a structured table of results — no 403 errors, no empty fields, no proxy configuration.

Step 5: Enrich with Subpage Data (Optional)

Click “Scrape Subpages” to have Thunderbit visit each listing’s detail page and pull additional fields: price history, tax records, lot size, school ratings. In a GitHub setup, this would be a complex second scraping pass with its own selector logic and anti-bot handling. Here it’s one click.

Step 6: Export Your Data for Free

Export to Excel, Google Sheets, Airtable, or Notion — all free. Download as CSV or JSON if you prefer. No export code to write.

That’s materially different from the GitHub user journey, which usually starts with environment setup and ends with troubleshooting 403s.

From CSV to Insight: What to Actually Do With Your Zillow Data

Most guides end at “here’s your CSV.” That’s like handing someone a fishing rod and walking away before explaining how to cook the fish.

Scraping is step one. Here’s the rest.

Step 1: Scrape — Collect Listing Data

Core fields from search results: price, beds, baths, sqft, address, Zestimate, listing status, days on market, listing URL.

Step 2: Enrich — Pull Detail-Page Data via Subpage Scraping

Additional fields from property detail pages: price history, tax records, lot size, HOA fees, school ratings, agent contact details. Thunderbit’s subpage scraping handles this in one click. In a GitHub setup, you’d need a separate scraping pass with its own selectors and anti-bot logic.

Step 3: Export — Push to Your Preferred Platform

- Google Sheets for quick analysis and sharing

- Airtable for a mini-CRM or deal tracker

- Notion for a team dashboard

- CSV/JSON for custom pipelines

Step 4: Monitor — Schedule Recurring Scrapes

This is the pain point multiple forum threads flag as unsolved. You don’t just want today’s data — you want to catch price drops, status changes (active → pending → sold), and new listings as they appear.

Thunderbit’s scheduled scraper lets you describe intervals in plain language (e.g., “every Tuesday and Friday at 8am”). For a GitHub setup, you’d need to build a cron job, handle authentication persistence, and manage failure recovery yourself.
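If you do build the monitoring step yourself, the core of it is a diff between two scrape runs. The sketch below assumes each run is stored as a dict keyed by listing URL with `price` and `status` fields; that storage layout and the `diff_snapshots` helper are my own illustrative choices.

```python
from typing import Dict, List

Snapshot = Dict[str, dict]  # listing URL -> {"price": ..., "status": ...}


def diff_snapshots(previous: Snapshot, current: Snapshot) -> List[dict]:
    """Compare two scrape runs and report new listings, price drops, and status changes."""
    events = []
    for url, now in current.items():
        before = previous.get(url)
        if before is None:
            events.append({"url": url, "event": "new_listing"})
            continue
        if now["price"] < before["price"]:
            drop_pct = (before["price"] - now["price"]) / before["price"] * 100
            events.append({"url": url, "event": "price_drop", "drop_pct": round(drop_pct, 1)})
        if now["status"] != before["status"]:
            events.append({
                "url": url, "event": "status_change",
                "from": before["status"], "to": now["status"],
            })
    return events


last_week = {
    "https://example.com/a": {"price": 400000, "status": "active"},
    "https://example.com/b": {"price": 300000, "status": "active"},
}
this_week = {
    "https://example.com/a": {"price": 380000, "status": "active"},
    "https://example.com/b": {"price": 300000, "status": "pending"},
    "https://example.com/c": {"price": 250000, "status": "active"},
}

events = diff_snapshots(last_week, this_week)
print([e["event"] for e in events])  # ['price_drop', 'status_change', 'new_listing']
```

Keying on the listing URL keeps the comparison stable even when addresses are formatted differently between runs. Listings that disappear from the current run are not reported here; add a second loop over `previous` if you need that.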

Step 5: Act — Filter for Deals and Feed Outreach Workflows

This is where data becomes decisions:

- For investors: filter for price drops >5% in 30 days, days-on-market >90, price below Zestimate

- For agents: flag new listings matching buyer criteria, expired/withdrawn listings for prospecting

- For researchers: calculate price per sqft trends, sold-vs-list price ratios, inventory velocity
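As one way to apply the investor filters above, the sketch below tags each listing with the signals it matches rather than forcing all three conditions at once. The field names (`price_30d_ago`, `days_on_market`, `zestimate`) and the `investor_signals` helper are hypothetical; map them to the columns in your own export.

```python
from typing import List


def investor_signals(listing: dict) -> List[str]:
    """Return which of the three investor filters a listing matches."""
    signals = []
    old, new = listing.get("price_30d_ago"), listing.get("price")
    if old and new and (old - new) / old > 0.05:
        signals.append("price_drop_over_5pct")
    if (listing.get("days_on_market") or 0) > 90:
        signals.append("dom_over_90")
    zestimate = listing.get("zestimate")
    if zestimate and new and new < zestimate:
        signals.append("below_zestimate")
    return signals


# Made-up sample rows for illustration.
listings = [
    {"address": "123 Main St", "price": 380000, "price_30d_ago": 410000,
     "days_on_market": 120, "zestimate": 395000},
    {"address": "456 Oak Ave", "price": 500000, "price_30d_ago": 505000,
     "days_on_market": 12, "zestimate": 490000},
]

shortlist = [item for item in listings if investor_signals(item)]
print([item["address"] for item in shortlist])  # ['123 Main St']
```

Returning the matched signals instead of a single yes/no makes it easy to sort the shortlist by how many filters each listing hits.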

Real-World Example: An Investor Tracking 200 Listings Across 3 Zip Codes

Here’s what the data fields look like mapped to each use case:

| Data Field | Investing | Agent Leads | Market Research |
|---|---|---|---|
| Price | ✅ Core | ✅ | ✅ |
| Zestimate | ✅ Core (gap analysis) | ✅ | |
| Price history | ✅ Core (trend detection) | ✅ | |
| Days on market | ✅ Core (motivation signal) | ✅ | ✅ |
| Tax assessed value | ✅ (valuation cross-check) | ✅ | |
| Listing status | ✅ | ✅ Core | ✅ |
| List date | ✅ | ✅ | |
| Agent name/phone | | ✅ Core | |
| Price per sqft | ✅ | | ✅ Core |
| Sold price vs. list price | | | ✅ Core |

The investor sets up a weekly scrape across three zip codes, exports to Google Sheets, and applies conditional formatting for price drops and DOM outliers. The agent exports to Airtable and builds a prospecting pipeline. The researcher pulls into a spreadsheet for trend analysis. Same scraping step, three different workflows.

Legal and Ethical Considerations for Scraping Zillow

Brief but necessary.

Zillow’s Terms of Use explicitly prohibit automated queries, including screen scraping, crawlers, spiders, and bypassing CAPTCHA-like precautions. Zillow’s robots.txt disallows broad paths including /api/, /homes/, and query-state URLs.
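If you want your scraper to check disallow rules before it fetches anything, Python’s standard library can do it. The sketch below parses a simplified, made-up robots.txt containing only two of the paths mentioned above; it is not a copy of Zillow’s actual file, and `is_allowed` is a hypothetical wrapper.

```python
from urllib.robotparser import RobotFileParser

# Simplified stand-in, NOT Zillow's real robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /api/
Disallow: /homes/
"""

parser = RobotFileParser()
parser.parse(SAMPLE_ROBOTS_TXT.splitlines())


def is_allowed(url: str, user_agent: str = "*") -> bool:
    """Return True if the parsed rules permit this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


search_allowed = is_allowed("https://www.zillow.com/homes/Chicago,-IL_rb/")
other_allowed = is_allowed("https://www.zillow.com/some-other-path/")
print(search_allowed, other_allowed)  # False True
```

Against a live site you would load the real file with `set_url()` and `read()` instead of `parse()`. Passing this check says nothing about the Terms of Use, which apply separately.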

At the same time, US web-scraping law is not reducible to “all scraping is illegal.” The hiQ v. LinkedIn line of cases matters for public-data scraping under the CFAA. A write-up from Haynes Boone notes the Ninth Circuit again rejected LinkedIn’s effort to block scraping of public member profiles. But that doesn’t erase separate contract, privacy, or anti-circumvention arguments, and it doesn’t make Zillow’s ToS irrelevant.

Where that leaves you:

- Public-page scraping may have stronger CFAA arguments than many site owners suggest

- Zillow still contractually prohibits it

- Bypassing technical barriers raises the legal risk

- If you have a commercial or high-volume use case, get legal advice

- Regardless of the legal landscape, scrape responsibly: respect rate limits, don’t overload servers, don’t use personal data for spam

Picking the Right Tool for Your Zillow Workflow

The Zillow scraper GitHub landscape in 2026 is thinner than it looks. Most visible repos are stale, brittle, or broken. A small number of newer repos, notably johnbalvin/pyzill, still work, but only with ongoing proxy and anti-bot maintenance.

The real decision isn’t open source versus closed source. It’s control versus operational burden.

- If you want full control and enjoy maintaining scrapers, GitHub repos are powerful — but budget time for proxy management, selector updates, and health monitoring.

- If you want reliable data today with zero upkeep, Thunderbit gets you from search to spreadsheet in minutes. Its AI reads the page structure fresh each time, so it never relies on hardcoded selectors that break.

Both paths are legitimate.

The worst outcome is spending hours setting up a GitHub scraper, only to discover it broke last month and nobody updated the README.

If you want to see the no-code path in action, try Thunderbit: scrape Zillow listings in about 2 clicks and export to whatever platform your team already uses. Want to watch the process first? Thunderbit’s video walkthroughs cover it.

FAQs

Is there a working Zillow scraper on GitHub in 2026?

A few repos are partially working — most notably johnbalvin/pyzill, which still returns data but requires rotating residential proxies and ongoing tuning. The majority of starred repos (including ChrisMuir/Zillow with 170 stars and scrapehero/zillow_real_estate with 152 stars) are broken due to Zillow’s anti-bot changes and DOM updates. Check the audit table above for current status.

Can Zillow detect and block GitHub scrapers?

Yes. Zillow uses IP blocking, TLS fingerprinting, JavaScript challenges, CAPTCHAs, and rate limiting. In testing, even plain HTTP requests with Chrome-like headers returned 403 from CloudFront. GitHub scrapers without proper anti-detection measures — residential proxies, realistic headers, browser rendering — get blocked quickly, often within 100 requests.

What data can you scrape from Zillow?

Common fields include price, address, beds, baths, square feet, Zestimate, listing status, days on market, listing URL, and agent contact details. With detail-page scraping, you can also get price history, tax records, lot size, HOA fees, and school ratings. The exact fields depend on your scraper’s capabilities and whether you’re hitting search results or individual property pages.

Is scraping Zillow legal?

This is nuanced. Scraping publicly available data has stronger legal footing after the hiQ v. LinkedIn line of cases, but Zillow’s Terms of Use explicitly prohibit automated access. Bypassing technical barriers (CAPTCHAs, rate limits) adds additional legal risk. For personal research, the risk is generally low. For commercial or high-volume use cases, consult legal counsel. Always scrape responsibly regardless.

How does Thunderbit scrape Zillow without breaking?

Thunderbit uses AI to read the page structure fresh on every run, so it doesn’t rely on hardcoded CSS selectors or XPaths that break when Zillow updates its frontend. It also has a pre-built Zillow template for one-click extraction. Cloud scraping handles anti-bot automatically with rotating infrastructure, so users don’t need to configure proxies or manage browser rendering themselves. When Zillow changes its layout, the AI adapts, with no repo update required.
