If you’ve ever tried scraping data from the web with Python, you know the feeling: one minute you’re happily pulling down product prices or sales leads, and the next—bam—your script gets blocked, your IP is banned, and you’re staring at a CAPTCHA wall that would make even the most patient person sigh. In 2025, this isn’t just a minor annoyance; it’s a daily battle for anyone in sales, marketing, or operations who relies on public web data to stay ahead.

Here’s the kicker: over are caused by anti-bot defenses like IP bans and CAPTCHAs, and about regularly hit these roadblocks. With bots now making up nearly half of all internet traffic, websites are fighting back harder than ever. But don’t worry—whether you’re a Python pro or just want a shortcut, I’ll walk you through how to avoid getting blocked, use proxies the smart way, and even supercharge your scraping with AI tools like .

Web Scraping Without Getting Blocked in Python: The Basics

Let’s start at square one. Web scraping is just a fancy way of saying “automating the process of collecting data from websites.” Python is the go-to language for this— use Python-based tools for scraping. But websites aren’t exactly rolling out the red carpet for bots. Why? Because too many automated requests can overload servers, steal content, or give competitors an unfair edge.

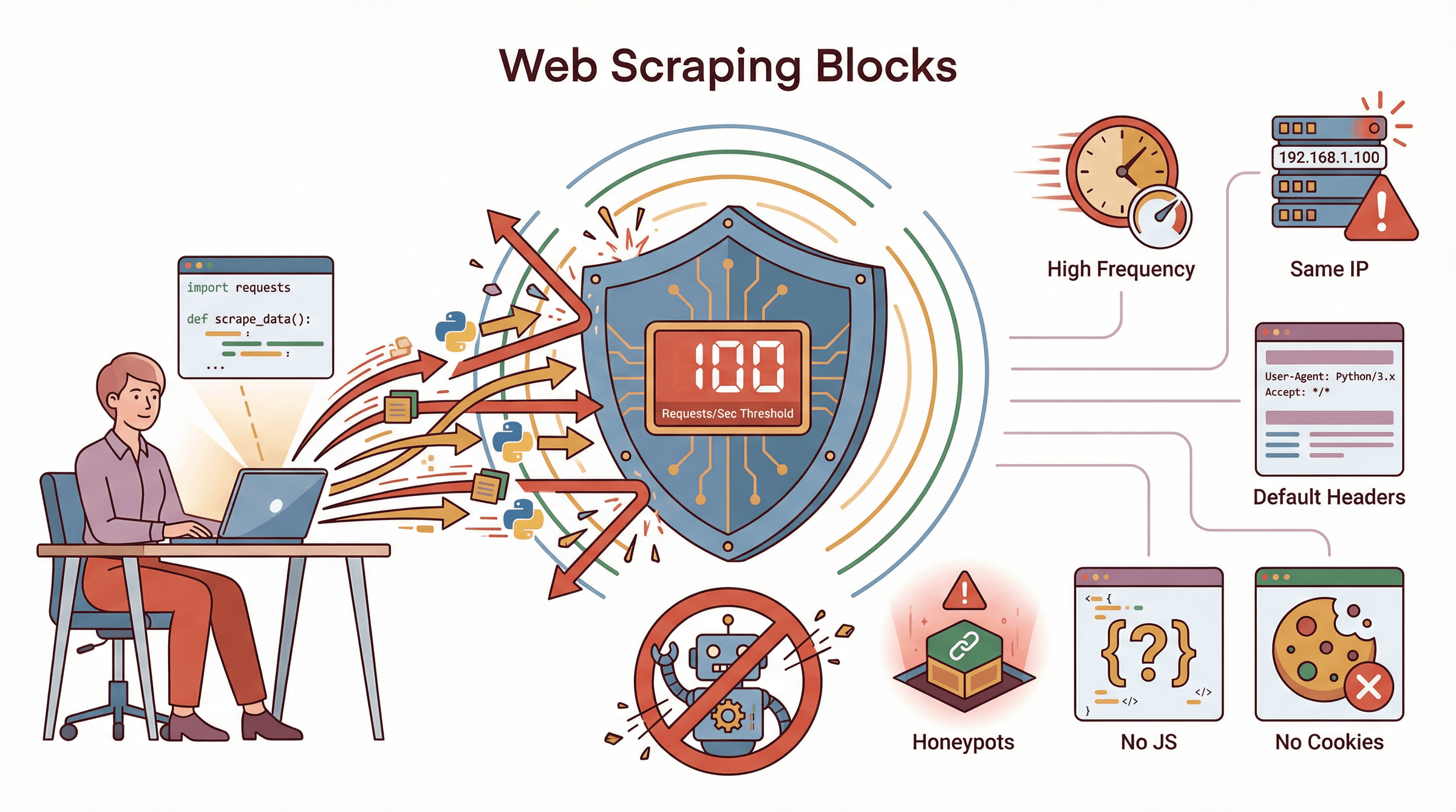

So, how do sites fight back? Here are the most common anti-scraping defenses:

- IP Address Blocking & Rate Limiting: Too many requests from the same IP? Expect a ban or a slowdown.

- CAPTCHAs: Those “prove you’re human” puzzles that bots (and, let’s be honest, sometimes humans) hate.

- User-Agent and Header Filtering: If your script announces itself as “python-requests/2.x,” you’re basically waving a flag that says “I’m a bot!”

- JavaScript Challenges & Browser Fingerprinting: Some sites require you to run JavaScript or pass subtle browser checks.

- Honeypots: Hidden links or fields that only bots will trigger.

If you’re not careful, your Python script will trip these alarms faster than you can say “403 Forbidden.”

Why Avoiding IP Blocking Matters for Python Web Scraping

Getting blocked isn’t just a technical headache—it’s a business risk. Imagine your sales team can’t pull fresh leads, your pricing analyst misses a competitor’s price drop, or your market research is based on incomplete data. That’s not just annoying; it can cost real money.

Let’s break it down:

| Use Case | Example Scenario | Risk If Blocked | Benefit of Reliable Scraping |

|---|---|---|---|

| Sales Lead Generation | Scraping directories or LinkedIn for contacts | Incomplete lists, lost sales opportunities | Continuous, up-to-date leads for outreach |

| Price Monitoring | Tracking competitor prices daily | Outdated data, missed pricing changes | Always-on pricing intelligence, faster reactions |

| Competitor Analysis | Pulling product details or reviews | Blind spots, missed product launches | Full competitive visibility, smarter strategy |

| Market Research & SEO | Aggregating news, forums, or SERPs | Skewed insights, wasted analyst time | Comprehensive, timely datasets for better analysis |

For , web data isn’t just “nice to have”—it’s mission critical.

How Websites Block Python Web Scraping: Key Triggers

So, what actually gets a Python scraper blocked? Here’s what I see most often:

So, what actually gets a Python scraper blocked? Here’s what I see most often:

- High Request Frequency: Humans don’t click 100 pages a second. If you do, you’ll get flagged.

- Repeated IP Use: All requests from one IP? That’s a red flag, especially if it’s a known datacenter.

- Default Headers: Using the default Python user-agent or missing headers is a dead giveaway.

- No Cookies or Sessions: Real users collect cookies as they browse. Bots that don’t look suspicious.

- Skipping JavaScript Rendering: If your scraper can’t run JS, you might miss data or fail bot checks.

- Ignoring Robots.txt: While not a technical block, it’s a quick way to get noticed.

- Honeypots: Clicking hidden links or filling invisible forms? Instant ban.

Common beginner mistakes include hammering sites with requests, not rotating proxies, and forgetting to randomize user-agents and delays. I’ve seen folks get their entire university IP range banned from NASDAQ for sending thousands of hits in a second. Oops.

Using Python Web Scraping Proxies to Avoid IP Blocking

Enter proxies: your best friend in the fight against IP bans. A proxy acts as a middleman, sending your requests through a different IP address. To the website, it looks like the traffic is coming from somewhere else.

Types of Proxies

- Datacenter Proxies: Cheap, fast, but easy to detect. Good for low-stakes scraping.

- Residential Proxies: Real home IPs—harder to block, but slower and pricier.

- Rotating Proxies: Automatically switch IPs on each request. Great for large-scale scraping.

- Mobile Proxies: Use mobile carrier IPs. Rarely needed unless you’re scraping the toughest sites.

For most business scraping, rotating residential proxies are the gold standard—they’re trusted and change often enough to avoid bans.

Integrating Proxies with Python Requests, Selenium, and Beautiful Soup

Let’s get practical. Here’s how you can add proxies to your Python scripts:

With Requests:

1import requests

2proxy = "http://USERNAME:PASSWORD@PROXY_IP:PORT"

3proxies = {"http": proxy, "https": proxy}

4headers = {"User-Agent": "Mozilla/5.0 ..."}

5response = requests.get("https://target-website.com/data", proxies=proxies, headers=headers)

6html = response.textWith Beautiful Soup:

1from bs4 import BeautifulSoup

2soup = BeautifulSoup(html, 'html.parser')

3data_items = soup.find_all('div', class_='item')With Selenium:

1from selenium import webdriver

2proxy = "PROXY_IP:PORT"

3chrome_options = webdriver.ChromeOptions()

4chrome_options.add_argument(f'--proxy-server=http://{proxy}')

5driver = webdriver.Chrome(options=chrome_options)

6driver.get("https://target-website.com")For rotating proxies, you can loop through a list or use a service that handles rotation for you. Just remember: if a proxy fails, catch the error and retry with another.

Best Practices for Managing and Rotating Proxies

- Use a Large Pool: The more proxies, the better. Rotate after every request or batch.

- Monitor Proxy Health: Remove bad proxies from your pool. Retry failed requests with a new IP.

- Don’t Overuse a Single Proxy: Spread your requests out. Don’t let one IP do all the work.

- Geographic Targeting: Use proxies from the same country as your target site if needed.

- Mix Proxy Types: Start with datacenter, switch to residential if you hit blocks.

- Avoid Free Proxies: They’re slow, unreliable, and often already blacklisted.

- Respect Provider Limits: Don’t burn through your proxy quota too quickly.

Managing proxies is almost an art form. But even the best proxy setup isn’t enough on its own.

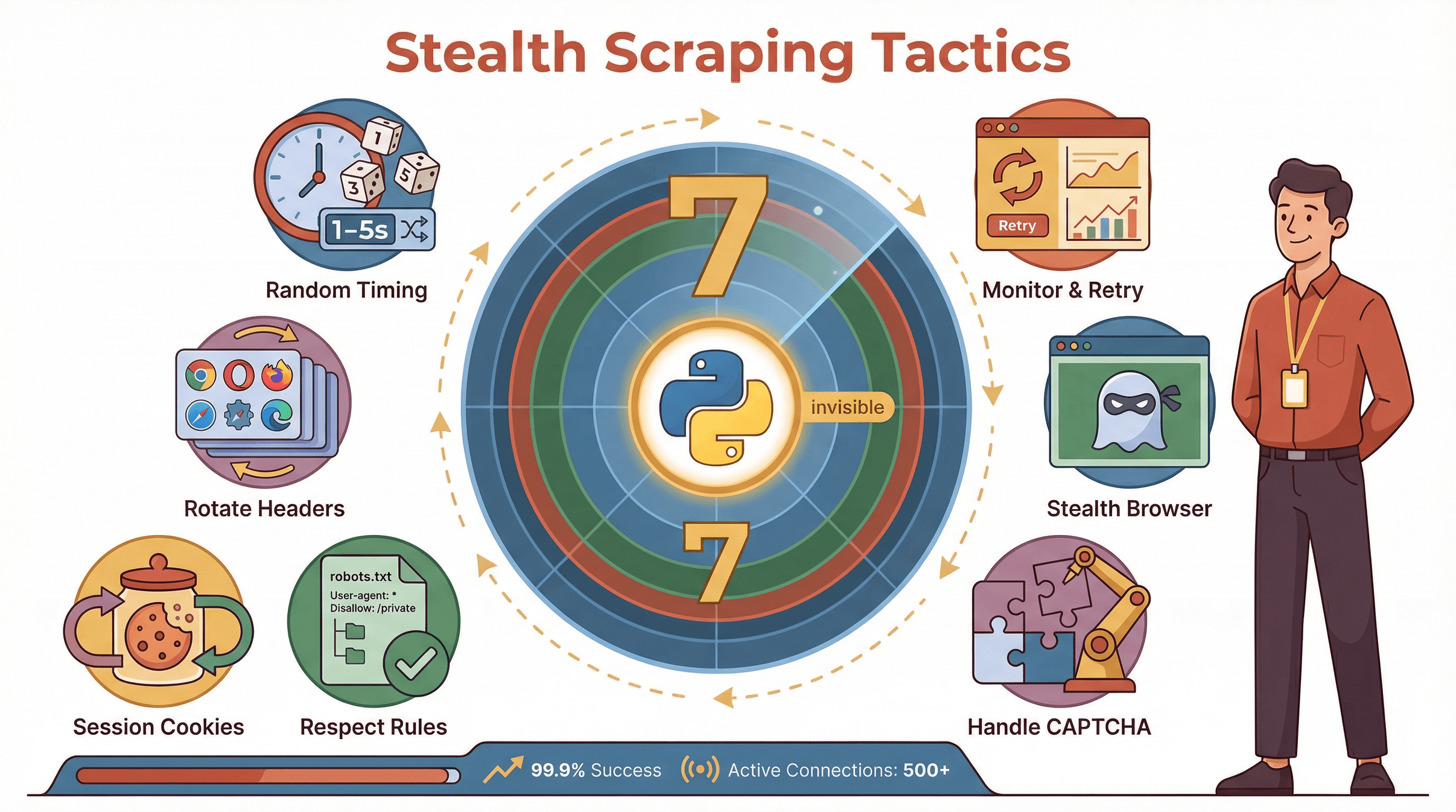

Beyond Proxies: Smart Techniques to Prevent Blocks in Python

Want to really fly under the radar? Layer these tactics on top of your proxy strategy:

Want to really fly under the radar? Layer these tactics on top of your proxy strategy:

- Randomize Request Timing: Don’t send requests at a constant rate. Use random delays (e.g., sleep 1–5 seconds).

- Rotate User-Agents and Headers: Use a list of real browser user-agents. Randomize Accept-Language, Referer, etc.

- Use Sessions and Cookies: Persist cookies across requests to mimic real browsing.

- Respect Robots.txt and Backoff on Errors: Don’t ignore site rules. If you get 429 or 503 errors, slow down.

- Handle CAPTCHAs: Integrate a CAPTCHA-solving service or retry with a new proxy if you hit a wall.

- Stealth Headless Browsers: Use tools like undetected-chromedriver or Playwright’s stealth plugins.

- Monitor and Retry: Keep logs, watch for spikes in failures, and automatically retry with new proxies.

There are great Python libraries for these tricks—fake-useragent, requests.Session(), and stealth browser plugins are your friends.

Supercharge Your Scraping: AI Tools vs. Traditional Python Proxy Methods

Now, here’s where things get interesting. What if you could skip all the proxy juggling, header tweaking, and anti-block headaches? That’s where comes in.

Thunderbit is an AI-powered web scraper Chrome Extension that lets you extract data from any website in just two clicks—no coding, no proxy setup, no maintenance. Just click “AI Suggest Fields,” let the AI figure out what to scrape, and hit “Scrape.” Thunderbit handles proxies, anti-blocking, pagination, and even subpage navigation behind the scenes.

Let’s compare the two approaches:

| Aspect | Python Scraping (Proxies) | Thunderbit AI Scraper |

|---|---|---|

| Setup Time | Hours (code, proxies, parsing) | Minutes (point, click, done) |

| Technical Skill | High (coding, HTTP, proxies) | Low (anyone can use it) |

| Block Avoidance | Manual (rotate proxies, headers) | Automated (AI + built-in proxy management) |

| Maintenance | Ongoing (update code, proxies) | Minimal (AI adapts, templates maintained) |

| Pagination/Subpages | Manual code needed | One-click, AI handles it |

| Data Export | Manual (CSV, Excel via code) | One-click to Sheets, Excel, Notion, Airtable |

| Scalability | Depends on your infra/proxies | High (cloud scraping, parallel pages) |

| Cost | Proxy fees + dev time | Free tier, then affordable plans |

| Reliability | Varies (depends on setup) | High (optimized for business users) |

Thunderbit is especially great for non-technical teams or anyone who just wants the data—fast.

Step-by-Step: Scraping Without Getting Blocked Using Thunderbit

Here’s how I’d use Thunderbit to scrape a site that usually blocks Python scripts:

- Install Thunderbit Chrome Extension: .

- Navigate to Your Target Website: Log in if needed—Thunderbit can use your browser session.

- Click “AI Suggest Fields”: Thunderbit scans the page and suggests columns to extract (like “Name,” “Price,” “Email”).

- Click “Scrape”: Thunderbit collects the data into a structured table.

- Handle Pagination: Enable “Scrape All Pages” and Thunderbit will click through every page, aggregating results.

- Scrape Subpages: Use “Scrape Subpages” to visit each detail page and enrich your data.

- Export: One click to send your data to Google Sheets, Excel, Notion, or Airtable.

Thunderbit handles all the anti-blocking magic for you—rotating IPs, pacing requests, and even solving minor CAPTCHAs. For most business users, it just works.

Thunderbit’s Approach to Pagination and Subpage Scraping

Thunderbit doesn’t just grab what’s on the first page. It can:

- Scroll and Click Like a Human: For infinite scroll or “next page” buttons, Thunderbit mimics real browsing speed.

- Maintain Sessions: If you’re logged in, Thunderbit keeps your session across pages.

- Distribute Load: In cloud mode, Thunderbit scrapes multiple pages in parallel, each from a different IP.

- Handle Dynamic Content: Thunderbit executes JavaScript, so it gets all the data—even if it loads after the page.

- Subpage Scraping: Thunderbit can click into each item’s detail page, grab extra fields, and merge them into your main table.

From the website’s perspective, it looks like a bunch of real users browsing normally—not a bot army.

Comparing Python Proxy Methods and Thunderbit for Business Users

So, which approach is right for you? Here’s a quick rundown:

| Factor | Python + Proxies | Thunderbit |

|---|---|---|

| Speed | Slower to set up | Instant results |

| Maintenance | High (code, proxies) | Low (AI adapts, templates update) |

| Skill Needed | Developer | Anyone |

| Block Risk | Medium (if not careful) | Low (AI/proxy automation) |

| Cost | Proxy fees + dev time | Free tier, then $15/mo+ |

| Best For | Custom, complex scraping | Sales, marketing, research teams |

If you’re a developer who loves tinkering and needs full control, Python and proxies are still a great option. But for most business users—especially those who want to avoid the proxy headache—Thunderbit is a massive productivity boost.

Key Takeaways: Scrape Smarter, Not Harder

Here’s what I’ve learned (and what I wish someone had told me years ago):

- Proxies are essential for avoiding IP blocks in Python scraping—but managing them is tricky.

- Smart anti-blocking tactics (random delays, header rotation, sessions) make a huge difference.

- AI-powered tools like Thunderbit automate all the hard parts—proxies, anti-blocking, pagination, subpages, and export—so you can focus on what matters: the data.

- Choose the right tool for your team: If you need speed and reliability, Thunderbit is a no-brainer. If you love code and need custom workflows, Python + proxies is still powerful.

Want to see how easy scraping can be? and try it on your next project. And if you’re hungry for more scraping tips, check out the .

Happy scraping—and may your IPs stay unblocked and your data always be fresh.

FAQs

1. What’s the biggest reason Python web scrapers get blocked?

The most common cause is sending too many requests from a single IP or using default headers that scream “bot.” Websites quickly spot these patterns and block or throttle your access.

2. How do proxies help avoid IP blocking in Python web scraping?

Proxies route your requests through different IP addresses, making it look like traffic is coming from multiple users. Rotating proxies are especially effective for large-scale scraping.

3. What are the best practices for managing proxies in Python?

Use a large pool of proxies, rotate them frequently, monitor for failures, avoid free proxies, and match proxy locations to your target site’s country. Always randomize your request timing and headers.

4. How does Thunderbit prevent blocks without manual proxy setup?

Thunderbit automates proxy rotation, request pacing, and anti-blocking techniques behind the scenes. Its AI agent mimics real user behavior, handles pagination and subpages, and exports data in one click—no coding required.

5. Should I use Python or Thunderbit for my business scraping needs?

If you’re a developer with complex, custom needs, Python plus proxies is flexible. But for most sales, marketing, and research teams who want fast, reliable data without technical hassle, Thunderbit is the smarter, easier choice.

Ready to scrape smarter? and leave the blocks behind.

Learn More