A few months ago, I wanted to build a daily digest of top Hacker News stories for our team at Thunderbit. My first instinct was to just bookmark the site and scroll through it every morning. That lasted about three days before I realized I was spending 20 minutes a day just reading headlines and copy-pasting links into a spreadsheet.

Hacker News is one of the richest, most concentrated sources of tech intelligence on the internet, with about 1,300 new stories submitted every day and around 13,000 comments generated daily. Whether you're tracking emerging tech trends, monitoring your brand, building a recruiting pipeline from "Who's Hiring" threads, or just trying to stay on top of what the developer world cares about, manually keeping up with all of that is a losing battle.

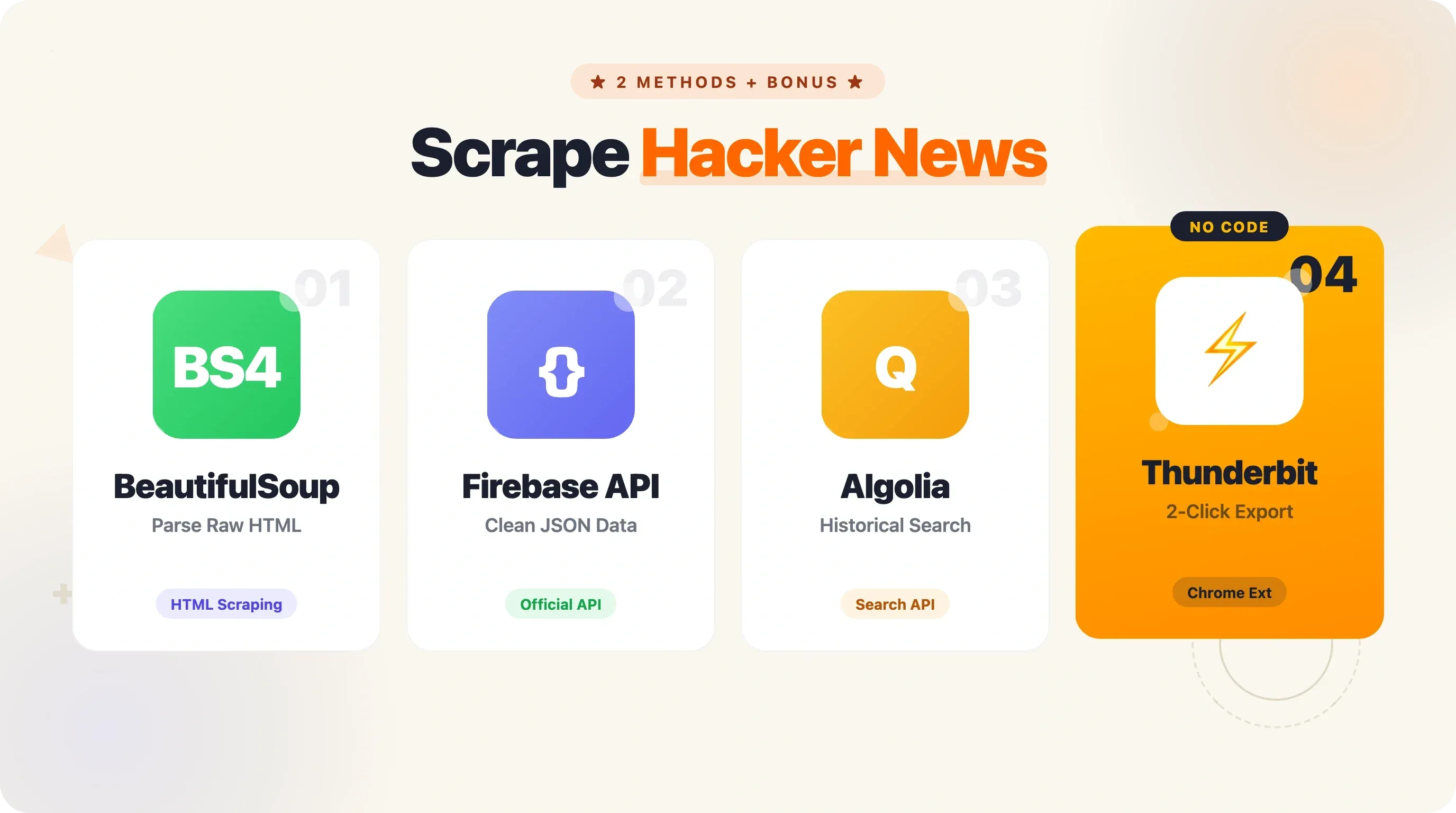

The good news: scraping Hacker News with Python is surprisingly straightforward, and in this guide, I'll walk you through two complete methods — HTML scraping with BeautifulSoup and the official HN Firebase API — along with pagination, data export, production-ready patterns, and a no-code shortcut for when Python feels like overkill.

Why Scrape Hacker News with Python?

Hacker News isn't just another link aggregator. It's a curated, community-driven feed where the most interesting tech stories rise to the top through upvotes and active discussion. The audience skews heavily toward technology professionals, and the site's 66% direct traffic rate tells you this is a loyal, habitual readership rather than casual browsers.

Here's why people scrape HN data:

| Use Case | What You Get |
|---|---|
| Daily tech digest | Top stories, scores, and links delivered to your inbox or Slack |
| Brand/competitor monitoring | Alerts when your company or product is mentioned |
| Trend analysis | Track which technologies, languages, or topics are gaining traction over time |
| Recruiting | Parse "Who's Hiring" threads for job postings, tech stacks, and salary signals |
| Content research | Find high-performing topics to write about or share |
| Sentiment analysis | Gauge community opinion on products, launches, or industry shifts |

Companies worth over $400 billion combined — Stripe, Dropbox, Airbnb — attribute crucial early feedback and users to Hacker News. Drew Houston posted Dropbox's demo on HN in April 2007, it hit #1, and the beta waitlist exploded from 5,000 to 75,000 users in a single day. HN data isn't just interesting — it's commercially valuable.

The data is publicly available, but the site's structure makes manual collection tedious. Automation with Python is the practical solution.

Two Ways to Scrape Hacker News with Python: Overview

This guide covers two complete, runnable approaches:

- HTML scraping with `requests` + BeautifulSoup: fetch the raw HTML of news.ycombinator.com and parse it to extract story data. Great for learning scraping fundamentals and grabbing exactly what's on the page.

- The official Hacker News Firebase API: hit JSON endpoints directly, no HTML parsing needed. Better for reliable data pipelines, accessing comments, and historical data.

Here's a side-by-side comparison to help you decide which fits your needs:

| Criteria | HTML Scraping (requests + BS4) | HN Firebase API | Thunderbit (No-Code) |
|---|---|---|---|
| Setup complexity | Medium (parse HTML selectors) | Low (JSON endpoints) | None (2-click Chrome extension) |
| Data freshness | Real-time front page | Real-time (any item by ID) | Real-time |
| Rate limit risk | Medium (robots.txt says 30s crawl delay) | Low (official, generous) | Managed by Thunderbit |
| Comments access | Hard (nested HTML) | Easy (recursive item IDs) | Subpage scraping feature |
| Historical data | Limited | Via Algolia Search API | N/A |
| Best for | Learning scraping fundamentals | Reliable data pipelines | Non-developers, quick exports |

Both methods include full, runnable Python code. And if you just want the data without writing any code at all, I'll cover that too.

Before You Start

- Difficulty: Beginner to Intermediate

- Time Required: ~15–20 minutes for each method

- What You'll Need:

  - Python 3.11+ installed

  - A terminal or code editor

  - Chrome browser (if you want to inspect HN's HTML or try the no-code option)

  - The Thunderbit Chrome extension (optional, for the no-code method)

Setting Up Your Python Environment

Before we touch any HN data, let's get the environment ready. I recommend creating a virtual environment so your project dependencies stay clean.

```bash
# Create and activate a virtual environment
python3 -m venv hn-scraper
# macOS/Linux:
source hn-scraper/bin/activate
# Windows:
hn-scraper\Scripts\activate
# Install the packages we'll need for both methods
pip install requests==2.33.1 beautifulsoup4==4.14.3 pandas==3.0.2 openpyxl==3.1.5
```

For production patterns later (caching, retries), you'll also want:

```bash
pip install requests-cache==1.3.1 tenacity==9.1.4
```

No special API keys, no authentication tokens. HN's data is open.

Method 1: Scrape Hacker News with Python Using BeautifulSoup

This is the classic approach — fetch the HTML, parse it, and pull out the data you want. It's how most people learn web scraping, and HN's simple table-based layout makes it a great training ground.

Step 1: Fetch the Hacker News Front Page

Open your editor and create a file called scrape_hn_bs4.py. Here's the starting code:

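```python
import requests
from bs4 import BeautifulSoup

# Reconstructed setup (these lines were lost from the original); the User-Agent is a placeholder
url = "https://news.ycombinator.com/"
headers = {"User-Agent": "hn-digest-tutorial/0.1"}
response = requests.get(url, headers=headers, timeout=10)
soup = BeautifulSoup(response.text, "html.parser")
print(f"Status: {response.status_code}, Page length: {len(response.text)} chars")
```

Run it. You should see Status: 200 and a page length around 40,000–50,000 characters. That's the raw HTML of HN's front page sitting in memory, ready to parse.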

Step 2: Understand the HTML Structure

HN uses a table-based layout — no modern CSS grid or flexbox. Each story on the page consists of two key <tr> rows:

- The story row (`<tr class="athing submission">`): contains the rank, title, and link

- The metadata row (the next `<tr>`): contains points, author, time, and comment count

The important selectors:

- `span.titleline > a`: the story title and URL

- `span.score`: the vote count (e.g., "118 points")

- `a.hnuser`: the author's username

- `span.age`: the time posted

- The last `<a>` in `.subtext` with "comment" in the text: the comment count

If you right-click on any story title in Chrome and choose "Inspect," you'll see something like this:

```html
<span class="titleline">
  <a href="https://darkbloom.dev">Darkbloom – Private inference on idle Macs</a>
</span>
```

And the metadata row below it:

```html
<span class="score" id="score_47788542">118 points</span>
by <a href="user?id=twapi" class="hnuser">twapi</a>
<span class="age" title="2026-04-16T04:06:39 1776312399">
  <a href="item?id=47788542">2 hours ago</a>
</span>
| <a href="item?id=47788542">65 comments</a>
```

Understanding these selectors is critical: if HN ever changes its markup, you'll need to update them. (Spoiler: the API method avoids this problem entirely.)

Step 3: Extract Titles, Links, and Scores

Now for the real work. We'll loop through every story row, grab the title and link from the story row, then grab the score from the metadata row immediately below it.

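```python
import requests
from bs4 import BeautifulSoup
from pprint import pprint

# Reconstructed setup (lost from the original); the User-Agent is a placeholder
url = "https://news.ycombinator.com/"
headers = {"User-Agent": "hn-digest-tutorial/0.1"}
response = requests.get(url, headers=headers, timeout=10)
soup = BeautifulSoup(response.text, "html.parser")

stories = []
story_rows = soup.select("tr.athing")
for row in story_rows:
    # Title and URL from the story row
    title_tag = row.select_one("span.titleline > a")
    if not title_tag:
        continue
    title = title_tag.get_text()
    link = title_tag.get("href", "")
    # Metadata from the next sibling row
    meta_row = row.find_next_sibling("tr")
    score = 0
    author = ""
    comments = 0
    # Reconstructed metadata parsing (lost from the original)
    if meta_row:
        if score_tag := meta_row.select_one("span.score"):
            score = int(score_tag.get_text().split()[0])  # "118 points" -> 118
        if author_tag := meta_row.select_one("a.hnuser"):
            author = author_tag.get_text()
        for a in meta_row.select("a"):
            if "comment" in a.get_text():
                comments = int(a.get_text().split("\xa0")[0])
    stories.append({"title": title, "url": link, "score": score,
                    "author": author, "comments": comments})

# Filter to stories with 50+ points, sorted by score
top_stories = sorted(
    [s for s in stories if s["score"] >= 50],
    key=lambda x: x["score"],
    reverse=True,
)
pprint(top_stories[:10])
```

A few notes on the code: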

- The walrus operator (`:=`) works in Python 3.8+. It lets us assign and check in one line, which is handy for optional elements like `span.score` that may not exist on every row (e.g., job posts have no score).

- HN uses `\xa0` (non-breaking space) between the number and "comments," so we split on that.

- Stories that link to other HN pages (like "Ask HN" posts) will have relative URLs starting with `item?id=`. You might want to prepend `https://news.ycombinator.com/` for those.
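The relative-link note above can be handled with the standard library. This is a minimal sketch, assuming you want an absolute URL for every story; `absolute_url` and `HN_BASE` are hypothetical names, not part of the original script.

```python
from urllib.parse import urljoin

HN_BASE = "https://news.ycombinator.com/"  # assumed base for relative links

def absolute_url(href):
    """Turn HN-relative links (e.g. 'item?id=123') into absolute URLs.

    Links that are already absolute are returned unchanged.
    """
    return urljoin(HN_BASE, href)

print(absolute_url("item?id=47788542"))
print(absolute_url("https://darkbloom.dev"))
```

Using `urljoin` rather than string concatenation means external links pass through untouched, so the helper can be applied to every row without a prior check.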

Step 4: Run It and See Results

Save and run:

```bash
python scrape_hn_bs4.py
```

You should see output like:

```text
[{'author': 'twapi',
  'comments': 65,
  'score': 118,
  'title': 'Darkbloom – Private inference on idle Macs',
  'url': 'https://darkbloom.dev'},
 {'author': 'sebg',
  'comments': 203,
  'score': 247,
  'title': 'Show HN: I built an open-source Perplexity alternative',
  'url': 'https://github.com/...'},
 ...]
```

That's 30 stories from page 1. But HN has hundreds of active stories at any given time. We'll cover pagination in a later section.

Method 2: Scrape Hacker News with Python Using the Official API

The HN Firebase API is the officially sanctioned way to access Hacker News data. No authentication, no API keys, no HTML parsing. You get clean JSON responses. I use this method for anything that needs to run reliably in production.

Key API Endpoints You Need to Know

The base URL is https://hacker-news.firebaseio.com/v0/. Here are the endpoints that matter:

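| Endpoint | What it returns |
|---|---|
| /topstories.json | IDs of up to 500 top stories |
| /newstories.json | IDs of up to 500 newest stories |
| /beststories.json | IDs of the current best stories |
| /askstories.json, /showstories.json, /jobstories.json | IDs of up to 200 of the latest Ask HN, Show HN, and job stories |
| /item/{id}.json | A single item (story, comment, job, or poll) |
| /user/{username}.json | A user profile |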

A story item looks like this:

```json
{
  "by": "twapi",
  "descendants": 65,
  "id": 47788542,
  "kids": [47789171, 47788769, 47788762],
  "score": 118,
  "time": 1776312399,
  "title": "Darkbloom – Private inference on idle Macs",
  "type": "story",
  "url": "https://darkbloom.dev"
}
```

The kids field contains the IDs of direct child comments. Each comment is itself an item that may have its own kids, and that's how the comment tree is structured.
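To make the item fields a little more concrete, here is a small sketch that flattens the sample item into a digest-friendly record. It assumes `time` is a Unix timestamp in seconds (UTC) and `descendants` is the total comment count; `summarize_item` is a hypothetical helper name.

```python
from datetime import datetime, timezone

def summarize_item(item):
    """Return a flat dict with a readable timestamp and a discussion link."""
    posted = datetime.fromtimestamp(item["time"], tz=timezone.utc)
    return {
        "title": item.get("title", ""),
        "posted_at": posted.isoformat(),
        "top_level_comments": len(item.get("kids", [])),
        "total_comments": item.get("descendants", 0),
        "discussion_url": f"https://news.ycombinator.com/item?id={item['id']}",
    }

# The sample story item shown above (its kids list is abbreviated)
sample = {
    "by": "twapi",
    "descendants": 65,
    "id": 47788542,
    "kids": [47789171, 47788769, 47788762],
    "score": 118,
    "time": 1776312399,
    "title": "Darkbloom – Private inference on idle Macs",
    "type": "story",
    "url": "https://darkbloom.dev",
}
print(summarize_item(sample))
```

The timestamp converts to 2026-04-16T04:06:39 UTC, which matches the `title` attribute on `span.age` in the HTML shown earlier.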

Step 1: Fetch Top Story IDs

Create a file called scrape_hn_api.py:

```python
import requests
import time
from pprint import pprint

API_BASE = "https://hacker-news.firebaseio.com/v0"

# Fetch top story IDs
response = requests.get(f"{API_BASE}/topstories.json")
story_ids = response.json()
print(f"Got {len(story_ids)} top story IDs")
# Output: Got 500 top story IDs
```

500 story IDs in a single request: no parsing, no selectors, just a JSON array.

Step 2: Fetch Story Details by ID

Now we need the actual story data. This is where the fan-out problem shows up: 500 stories means 500 individual API calls. In my benchmarking, each item request takes about 1.2 seconds sequentially. For 500 stories, that's roughly 10 minutes.

For most use cases, you don't need all 500. Here's code to fetch the top 30:

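```python
def fetch_story(story_id):
    """Fetch a single story's details from the HN API."""
    resp = requests.get(f"{API_BASE}/item/{story_id}.json")
    return resp.json()

# Reconstructed loop (the original lines were lost in extraction)
stories = []
for story_id in story_ids[:30]:
    item = fetch_story(story_id)
    if item:  # deleted or missing items come back as null
        stories.append({"id": item["id"], "title": item.get("title", ""),
                        "url": item.get("url", ""), "score": item.get("score", 0),
                        "author": item.get("by", ""), "comments": item.get("descendants", 0)})
    time.sleep(0.1)  # small courtesy delay between requests

# Sort by score, show top 10
top = sorted(stories, key=lambda x: x["score"], reverse=True)[:10]
pprint(top)
```

The `time.sleep(0.1)` adds a small courtesy delay. The Firebase API doesn't have a stated rate limit, but hammering any API without pauses is bad practice.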
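If the sequential fan-out becomes a bottleneck, one common option is a small thread pool. The sketch below is an illustration rather than the article's own method: `fetch_many` is a hypothetical helper, `max_workers=8` is an arbitrary choice, and the stand-in `fake_fetch` exists only so the example runs without network access. In practice you would pass the `fetch_story` function defined above.

```python
from concurrent.futures import ThreadPoolExecutor

def fetch_many(story_ids, fetch, max_workers=8):
    """Fetch several items concurrently, keeping the input order.

    `fetch` is any callable that takes an ID and returns the item dict.
    """
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map yields results in the same order as story_ids
        return list(pool.map(fetch, story_ids))

# Stand-in fetcher so the sketch runs offline
def fake_fetch(story_id):
    return {"id": story_id, "score": story_id % 100}

items = fetch_many([101, 202, 303], fake_fetch)
print(items)
```

Keeping the worker count modest is a courtesy choice: the API has no stated rate limit, but a few parallel requests are usually enough to cut the wait substantially.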

Step 3: Scrape Comments (Recursive Tree Walk)

This is where the API really shines compared to HTML scraping. Comments on HN are deeply nested — replies to replies to replies. In HTML, that means parsing complex nested table structures. With the API, each comment's kids field gives you the IDs of its children, and you just walk the tree recursively.

```python
def fetch_comments(item_id, depth=0, max_depth=3):
    """Recursively fetch comments up to max_depth."""
    item = requests.get(f"{API_BASE}/item/{item_id}.json").json()
    if not item or item.get("type") != "comment":
        return []
    # Reconstructed (lost from the original): record this comment before descending
    comments = [{
        "author": item.get("by", "[deleted]"),
        "text": item.get("text", ""),
        "depth": depth,
    }]
    if depth < max_depth and item.get("kids"):
        for kid_id in item["kids"]:
            comments.extend(fetch_comments(kid_id, depth + 1, max_depth))
            time.sleep(0.05)
    return comments

# Example: fetch comments for the top story
if stories:
    top_story = stories[0]
    top_story_full = requests.get(f"{API_BASE}/item/{top_story['id']}.json").json()
    if top_story_full.get("kids"):
        print(f"\nComments for: {top_story['title']}")
        all_comments = []
        for kid_id in top_story_full["kids"][:5]:  # First 5 top-level comments
            all_comments.extend(fetch_comments(kid_id, depth=0, max_depth=2))
            time.sleep(0.1)
        for c in all_comments[:15]:
            indent = "  " * c["depth"]
            preview = c["text"][:80].replace("\n", " ") if c["text"] else "[no text]"
            print(f"{indent}[{c['author']}] {preview}...")
```

This recursive approach is significantly easier than trying to parse nested HTML comment threads. If you need full comment trees, the API is the way to go.
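One practical detail when working with comments: the API returns the `text` field as HTML, with entities such as `&#x27;` and paragraph breaks typically marked by `<p>`. The following is a rough, regex-based sketch for turning that into plain text; `clean_comment_text` is a hypothetical helper, and a real HTML parser would be more robust for unusual markup.

```python
import html
import re

def clean_comment_text(raw):
    """Convert HN comment HTML into plain text (rough, regex-based)."""
    if not raw:
        return ""
    text = raw.replace("<p>", "\n")       # treat <p> as a paragraph break
    text = re.sub(r"<[^>]+>", "", text)   # drop remaining tags such as <i> or <a>
    return html.unescape(text).strip()    # decode entities like &#x27;

print(clean_comment_text("I&#x27;m <i>sure</i> it works.<p>Second paragraph."))
```

Running the cleanup before building previews or doing text analysis avoids counting markup as content.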

Step 4: Run and View Results

```bash
python scrape_hn_api.py
```

You'll see structured story data followed by a nested comment preview. The data is cleaner, the comment access is trivial, and there's no risk of your scraper breaking because HN changed a CSS class name.

Going Beyond Page 1: Pagination and Historical Data

Most HN scraping tutorials stop at page 1 — 30 stories. That's fine for a quick demo, but real use cases often need more depth.

Scraping Multiple Pages with BeautifulSoup

HN's pagination uses a simple URL pattern: ?p=2, ?p=3, etc. Each page returns 30 stories, and the site serves up to about page 20 (roughly 600 stories total). Beyond that, you get empty pages.

```python
import time

import requests
from bs4 import BeautifulSoup

# Placeholder User-Agent (an assumption; use your own identifier)
headers = {"User-Agent": "hn-digest-tutorial/0.1"}

def scrape_hn_pages(num_pages=5):
    """Scrape multiple pages of HN front page stories."""
    all_stories = []
    for page in range(1, num_pages + 1):
        url = f"https://news.ycombinator.com/news?p={page}"
        response = requests.get(url, headers=headers)
        soup = BeautifulSoup(response.text, "html.parser")
        story_rows = soup.select("tr.athing")
        if not story_rows:
            print(f"Page {page}: no stories found, stopping.")
            break
        for row in story_rows:
            title_tag = row.select_one("span.titleline > a")
            if not title_tag:
                continue
            meta_row = row.find_next_sibling("tr")
            score = 0
            if meta_row and (score_tag := meta_row.select_one("span.score")):
                # split() handles both "1 point" and "118 points"
                score = int(score_tag.get_text().split()[0])
            # Reconstructed (lost from the original): store the parsed story
            all_stories.append({
                "title": title_tag.get_text(),
                "url": title_tag.get("href", ""),
                "score": score,
            })
        print(f"Page {page}: scraped {len(story_rows)} stories")
        # Respect the robots.txt crawl-delay of 30 seconds
        if page < num_pages:
            time.sleep(30)
    return all_stories

stories = scrape_hn_pages(5)
print(f"\nTotal stories scraped: {len(stories)}")
```

That `time.sleep(30)` is important. HN's robots.txt explicitly requests a 30-second crawl delay. Ignore it and you may get rate-limited (HTTP 429) or temporarily blocked. Five pages means four 30-second pauses, so the run takes a little over 2 minutes: not instant, but respectful.
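As a quick planning aid, the timing can be estimated up front. This sketch assumes the pause happens only between pages, as in the loop above, so five pages involve four pauses; `seconds_per_request` is a rough guess rather than a measured value, and `estimated_crawl_seconds` is a hypothetical helper.

```python
def estimated_crawl_seconds(num_pages, crawl_delay=30, seconds_per_request=1.0):
    """Estimate wall-clock time for a paginated crawl.

    The delay is applied between pages only, so there are num_pages - 1 pauses.
    """
    if num_pages <= 0:
        return 0.0
    return (num_pages - 1) * crawl_delay + num_pages * seconds_per_request

print(estimated_crawl_seconds(5))   # 4 pauses of 30 s plus 5 requests
print(estimated_crawl_seconds(20))  # the deepest crawl the site serves
```

At the full 20 pages the estimate is just under 10 minutes, which is worth knowing before putting the scraper on a tight schedule.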

For users who don't want to manage pagination code, Thunderbit handles click-based and infinite-scroll pagination automatically. It clicks the "More" button at the bottom of HN pages without any configuration.

Accessing Historical Hacker News Data with the Algolia API

The Firebase API gives you current data. For historical analysis ("What were the top Python stories in 2023?" or "How has AI coverage changed over the past 5 years?") you need the Algolia HN Search API.

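```python
import requests

ALGOLIA_BASE = "https://hn.algolia.com/api/v1"

# Reconstructed helper (lost from the original); parameter names other than
# query and tags are assumptions
def search_hn(query, tags="story", numeric_filters=None, page=0):
    """Search HN stories through the Algolia API and return the JSON response."""
    params = {"query": query, "tags": tags, "page": page}
    if numeric_filters:
        params["numericFilters"] = numeric_filters
    resp = requests.get(f"{ALGOLIA_BASE}/search", params=params, timeout=10)
    resp.raise_for_status()
    return resp.json()

# Example: find Python scraping stories
results = search_hn(
    query="python scraping",
    tags="story",
)
print(f"Found {results['nbHits']} total results")
for hit in results["hits"][:5]:
    print(f"  [{hit.get('points', 0)} pts] {hit['title']}")
```

For date-filtered queries, use numericFilters: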

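```python
import calendar, datetime

# Stories since January 1, 2024
start_date = datetime.datetime(2024, 1, 1)
start_ts = int(calendar.timegm(start_date.timetuple()))

# Reconstructed request (lost from the original): 10+ points since the start date
resp = requests.get(
    f"{ALGOLIA_BASE}/search_by_date",
    params={
        "query": "python scraping",
        "tags": "story",
        "numericFilters": f"created_at_i>{start_ts},points>=10",
    },
    timeout=10,
)
results = resp.json()
```

The Algolia API is fast (5–9 ms server processing time), requires no API key, and supports pagination up to 500 pages. For bulk historical analysis, it's the best option available.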
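For bounded date ranges, the filter string can be assembled by a small helper. This is a sketch under stated assumptions: `date_range_filter` is a hypothetical name, the datetimes are treated as UTC, and comma-separated conditions in `numericFilters` are combined with AND.

```python
import calendar
import datetime

def date_range_filter(start, end=None, min_points=None):
    """Build an Algolia numericFilters string from UTC datetimes."""
    parts = [f"created_at_i>={calendar.timegm(start.timetuple())}"]
    if end is not None:
        parts.append(f"created_at_i<{calendar.timegm(end.timetuple())}")
    if min_points is not None:
        parts.append(f"points>={min_points}")
    return ",".join(parts)  # commas mean AND

# Everything posted during 2023 with at least 10 points
filters = date_range_filter(
    datetime.datetime(2023, 1, 1),
    datetime.datetime(2024, 1, 1),
    min_points=10,
)
print(filters)
```

The resulting string can be passed as the `numericFilters` query parameter, which makes year-by-year comparisons a matter of looping over start and end dates.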

## Exporting Scraped Hacker News Data to CSV, Excel, and Google Sheets

Every HN scraping tutorial I've seen ends with `pprint()` output in the terminal. That's great for debugging, but if you're building a daily digest or doing trend analysis, you need the data in a file. Here's how to get it there.

### Export to CSV with Python

```python
import csv

def export_to_csv(stories, filename="hn_stories.csv"):
    """Save scraped stories to a CSV file."""
    fieldnames = ["title", "url", "score", "author", "comments"]
    with open(filename, "w", newline="", encoding="utf-8") as f:
        # extrasaction="ignore" skips any keys not listed in fieldnames
        writer = csv.DictWriter(f, fieldnames=fieldnames, extrasaction="ignore")
        writer.writeheader()
        writer.writerows(stories)
    print(f"Saved {len(stories)} stories to {filename}")

export_to_csv(stories)
```

Export to Excel with Python

```python
import pandas as pd

def export_to_excel(stories, filename="hn_stories.xlsx"):
    """Save scraped stories to an Excel file."""
    df = pd.DataFrame(stories)
    df.to_excel(filename, index=False, engine="openpyxl")
    print(f"Saved {len(stories)} stories to {filename}")

export_to_excel(stories)
```

Make sure openpyxl is installed, because pandas uses it as the Excel engine. If it's missing, you'll get an ImportError.

Push to Google Sheets (Optional)

For automated workflows, you might want to push data directly to Google Sheets using the gspread library. This requires setting up a Google Cloud service account (a one-time process):

```python
import gspread

gc = gspread.service_account(filename="service_account.json")
sh = gc.open("HN Daily Digest")
worksheet = sh.sheet1

# Convert stories to rows
header = list(stories[0].keys())
rows = [list(s.values()) for s in stories]
worksheet.clear()
worksheet.update([header] + rows)
print("Pushed to Google Sheets")
```

The No-Code Export Alternative

If setting up service accounts and writing export code sounds like more work than the actual scraping, I get it. At Thunderbit, we built free data export that lets you send scraped data directly to Excel, Google Sheets, Airtable, or Notion — no code, no credentials, no pipeline to maintain. For a one-off data pull, it's genuinely faster. More on that below.

Making Your Scraper Production-Ready: Error Handling, Caching, and Scheduling

If you're running a scraper once for fun, the code above is fine. If you're running it daily as part of a workflow, you need a few more pieces.

Error Handling and Retry Logic

Networks fail. Servers throttle. A single bad request shouldn't crash your entire scrape. Here's a retry function with exponential backoff:

```python
from tenacity import retry, stop_after_attempt, wait_exponential_jitter
import requests

@retry(stop=stop_after_attempt(5), wait=wait_exponential_jitter(initial=1, max=60))
def fetch_with_retry(url):
    """Fetch a URL with automatic retries and exponential backoff."""
    response = requests.get(url, timeout=10)
    response.raise_for_status()
    return response

# Usage:
try:
    resp = fetch_with_retry("https://hacker-news.firebaseio.com/v0/topstories.json")
    story_ids = resp.json()
except Exception as e:
    print(f"Failed after retries: {e}")
```

The tenacity library handles the retry logic cleanly. It will retry up to 5 times with jittered exponential backoff, starting at 1 second and capped at 60 seconds. This handles HTTP 429 (rate limited), 503 (service unavailable), and transient network errors gracefully.
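To show roughly what such a decorator does under the hood, here is a standard-library sketch of jittered exponential backoff. It illustrates the general idea only and is not tenacity's exact formula; `retry_call` and its parameters are hypothetical, and the `sleep` argument is injectable so the demo can record delays instead of waiting.

```python
import random
import time

def retry_call(func, attempts=5, initial=1.0, max_delay=60.0, sleep=time.sleep):
    """Call func(), retrying on any exception with jittered exponential backoff."""
    for attempt in range(attempts):
        try:
            return func()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            # Delay doubles each attempt, plus up to 1 s of random jitter
            delay = min(initial * 2 ** attempt + random.uniform(0, 1), max_delay)
            sleep(delay)

# Demo with a function that fails twice, then succeeds
calls = {"n": 0}

def flaky():
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("temporary failure")
    return "ok"

waits = []
result = retry_call(flaky, sleep=waits.append)  # record delays instead of sleeping
print(result, len(waits))
```

The jitter matters when several scheduled jobs fail at the same moment: randomized delays keep them from retrying in lockstep.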

Caching Responses to Avoid Re-Crawling

During development, you'll run your scraper many times while tweaking the parsing logic. Without caching, every run hits HN's servers again for the same data. The requests-cache library fixes this in two lines:

```python
import requests_cache

requests_cache.install_cache("hn_cache", expire_after=3600)  # Cache for 1 hour
```

After adding those lines at the top of your script, all `requests.get()` calls are automatically cached in a local SQLite database. Re-run your script 10 times in an hour, and only the first run actually hits the network.

Separating Crawling from Parsing

A pattern that experienced scrapers swear by: download the raw data first, parse it second. This way, if your parsing logic has a bug, you fix it and re-parse without re-fetching.

```python
import os, json

def crawl_and_save(story_ids, output_dir="raw_data"):
    """Fetch story data and save raw JSON to disk."""
    os.makedirs(output_dir, exist_ok=True)
    for sid in story_ids:
        filepath = os.path.join(output_dir, f"{sid}.json")
        if os.path.exists(filepath):
            continue  # Skip already-fetched items
        resp = fetch_with_retry(f"{API_BASE}/item/{sid}.json")
        with open(filepath, "w") as f:
            json.dump(resp.json(), f)

# Reconstructed parsing phase (lost from the original); parse_saved is an assumed name
def parse_saved(output_dir="raw_data"):
    """Load the saved JSON files and return the non-empty items."""
    items = []
    for name in sorted(os.listdir(output_dir)):
        if name.endswith(".json"):
            with open(os.path.join(output_dir, name)) as f:
                if (item := json.load(f)):
                    items.append(item)
    return items
```

This two-phase approach is especially valuable when you're scraping hundreds of items and want to iterate quickly on how you process the data.

### Automating Your Scraper on a Schedule

For a daily HN digest, you need your scraper to run automatically. Two common options:

**Option 1: cron (Linux/Mac)**

```bash
# Run every day at 8:30 AM (cron uses the server's local time zone)
30 8 * * * /usr/bin/python3 /home/user/scrape_hn.py >> /home/user/scrape.log 2>&1
```

**Option 2: GitHub Actions (free, no server needed)**

```yaml
name: Scrape Hacker News
on:
  schedule:
    - cron: '30 8 * * *'  # Daily at 8:30 AM UTC
  workflow_dispatch:       # Manual trigger button
jobs:
  scrape:
    runs-on: ubuntu-latest
    permissions:
      contents: write      # lets the job push the committed data
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-python@v6
        with:
          python-version: '3.12'
      - run: pip install requests beautifulsoup4 pandas openpyxl
      - run: python scrape_hn.py
      - run: |
          git config user.name "GitHub Actions Bot"
          git config user.email "actions@github.com"
          git add -A
          git diff --staged --quiet || git commit -m "Update HN data $(date -u +%Y-%m-%dT%H:%M:%SZ)"
          git push
```

A few gotchas with GitHub Actions scheduling: all cron times are UTC, delays of 15–60 minutes are common (use off-minute times like :30 instead of :00), and GitHub may disable scheduled workflows on repos with no activity for 60 days. Always include workflow_dispatch so you can trigger manually for testing.

For a simpler option, Thunderbit's Scheduled Scraper feature lets you describe the schedule in plain English — something like "scrape every morning at 8am" — without any server or cron setup.

When Python Is Overkill: The No-Code Way to Scrape Hacker News

I'm going to be honest here, even though I'm a Python enthusiast and my team builds developer tools. If you just need today's top 100 HN stories in a spreadsheet — right now, one time — writing, debugging, and running a Python script is unnecessary overhead. The setup alone (virtual environment, installing packages, figuring out selectors) takes longer than the actual data collection.

This is where Thunderbit fits in. Here's the workflow:

- Open news.ycombinator.com in Chrome

- Click the Thunderbit extension icon, then "AI Suggest Fields"

- The AI reads the page and proposes columns: Title, URL, Score, Author, Comment Count, Time Posted

- Adjust the fields if you want (rename, remove, or add custom ones — you can even add an AI prompt like "Categorize as AI/DevTools/Web/Other")

- Click "Scrape" — data appears in a structured table

- Export to Excel, Google Sheets, Airtable, or Notion

Two clicks to structured data. No selectors, no code, no maintenance.

A real advantage here: Thunderbit's AI adapts to layout changes automatically. Traditional CSS-selector scrapers break when a site changes its markup — and while HN's HTML is fairly stable, it has changed (the class="athing submission" class was updated, span.titleline replaced the older a.storylink). An AI-powered scraper reads the page fresh each time, so it doesn't care about class name changes.

Thunderbit also handles pagination (clicking HN's "More" button automatically) and subpage scraping (visiting each story's comment page to pull in discussion data). That's the equivalent of the recursive API code in Method 2, but without writing a single line.

The tradeoffs are straightforward: Python is the right choice when you need custom logic, complex data transformations, scheduled automation pipelines, or you're learning to code. Thunderbit is the right choice when you need data fast, don't want to maintain code, or you're not a developer. Pick the tool that matches your situation.

Python vs. API vs. No-Code: Which Method Should You Pick?

Here's the full decision framework:

| Criteria | BeautifulSoup (HTML) | Firebase API | Algolia API | Thunderbit (No-Code) |
|---|---|---|---|---|
| Technical skill needed | Intermediate Python | Beginner Python | Beginner Python | None |
| Setup time | 10–15 min | 5–10 min | 5–10 min | 2 min |
| Maintenance burden | Medium (selectors break) | Low (stable JSON) | Low (stable JSON) | None |
| Data depth | Front page only | Any item, users | Search + historical | Front page + subpages |
| Comments | Hard | Easy (recursive) | Easy (nested tree) | Subpage scraping |
| Historical data | No | No | Yes (full archive) | No |
| Export options | Code it yourself | Code it yourself | Code it yourself | Built-in (Excel, Sheets, etc.) |
| Scheduling | cron / GitHub Actions | cron / GitHub Actions | cron / GitHub Actions | Built-in scheduler |
| Best for | Learning scraping | Reliable pipelines | Research & analysis | Quick data pulls |

If you're learning Python or building something custom, go with Method 1 or 2. If you need historical analysis, add the Algolia API. If you just want the data without the code, try Thunderbit.

Conclusion and Key Takeaways

Here's what you now have in your toolkit:

- Two complete Python methods to scrape Hacker News — BeautifulSoup for HTML parsing and the Firebase API for clean JSON data

- Pagination techniques for scraping beyond page 1, including the Algolia API for historical data going back to 2007

- Export code for CSV, Excel, and Google Sheets — because data in a terminal isn't useful to anyone else on your team

- Production patterns — retry logic, caching, crawl/parse separation, and scheduled automation via cron or GitHub Actions

- A no-code alternative for when Python is more tool than you need

My recommendation: start with the Firebase API (Method 2) for most use cases. It's cleaner, more reliable, and gives you comment access without the headache of parsing nested HTML. Add the Algolia API when you need historical data. And keep Thunderbit bookmarked for those times when you just need a quick spreadsheet and don't want to spin up a whole Python project.

If you want to go deeper, try scraping HN comments for sentiment analysis, build a daily digest pipeline with GitHub Actions, or explore the Algolia API to track how technology trends have shifted over the past decade.

FAQs

Is it legal to scrape Hacker News?

HN's data is publicly available, and Y Combinator provides an official API specifically for programmatic access. The site's robots.txt allows scraping of read-only content (front page, item pages, user pages) but requests a 30-second crawl delay. Respect the delay, don't scrape interactive endpoints (voting, login), and you're on solid ground. For more on scraping ethics, see our guide.

Does Hacker News have an official API?

Yes. The official HN Firebase API at hacker-news.firebaseio.com/v0/ is free, requires no authentication, and provides access to stories, comments, user profiles, and all feed types (top, new, best, ask, show, jobs). It returns clean JSON and has no stated rate limit, though being polite with request frequency is always recommended.

How do I scrape Hacker News comments with Python?

Using the Firebase API, fetch a story item to get its kids field (an array of top-level comment IDs). Each comment is itself an item with its own kids field for replies. Walk the tree recursively with a function that fetches each comment and its children. See the "Scrape Comments (Recursive Tree Walk)" section above for complete code. Alternatively, the Algolia API returns the full nested comment tree in a single request, which is much faster for comment-heavy stories.

Can I scrape Hacker News without writing code?

Yes. Thunderbit works as a Chrome extension: open HN, click "AI Suggest Fields," and it automatically identifies columns like title, URL, score, and author. Click "Scrape" and export directly to Excel, Google Sheets, Airtable, or Notion. It handles pagination and can even visit subpages to pull in comment data. No Python, no selectors, no maintenance.

How do I get historical Hacker News data?

The Algolia HN Search API is the best tool for this. Use the search_by_date endpoint with numericFilters=created_at_i>TIMESTAMP to filter by date range. You can search by keyword, filter by story type, and paginate through up to 500 pages of results. For bulk historical analysis, public dataset dumps are also available, ranging from a few million stories to the full archive.
