Few things are more deflating than writing 30 lines of Python, running your Goodreads scraper, and watching it return []. An empty list. Nothing. Just you and your blinking cursor.

I've seen this play out dozens of times: in our own internal experiments, in developer forums, and in the GitHub issues that pile up on abandoned scraper repos. The complaints are almost always the same: "the top reviews section is blank, just shows me []", "whatever page number I run, it always scrapes the very first page", "my code worked last year, now it's broken." To make things worse, the Goodreads API was deprecated in December 2020, so the "just use the API" advice you'll find in older tutorials is a dead end.

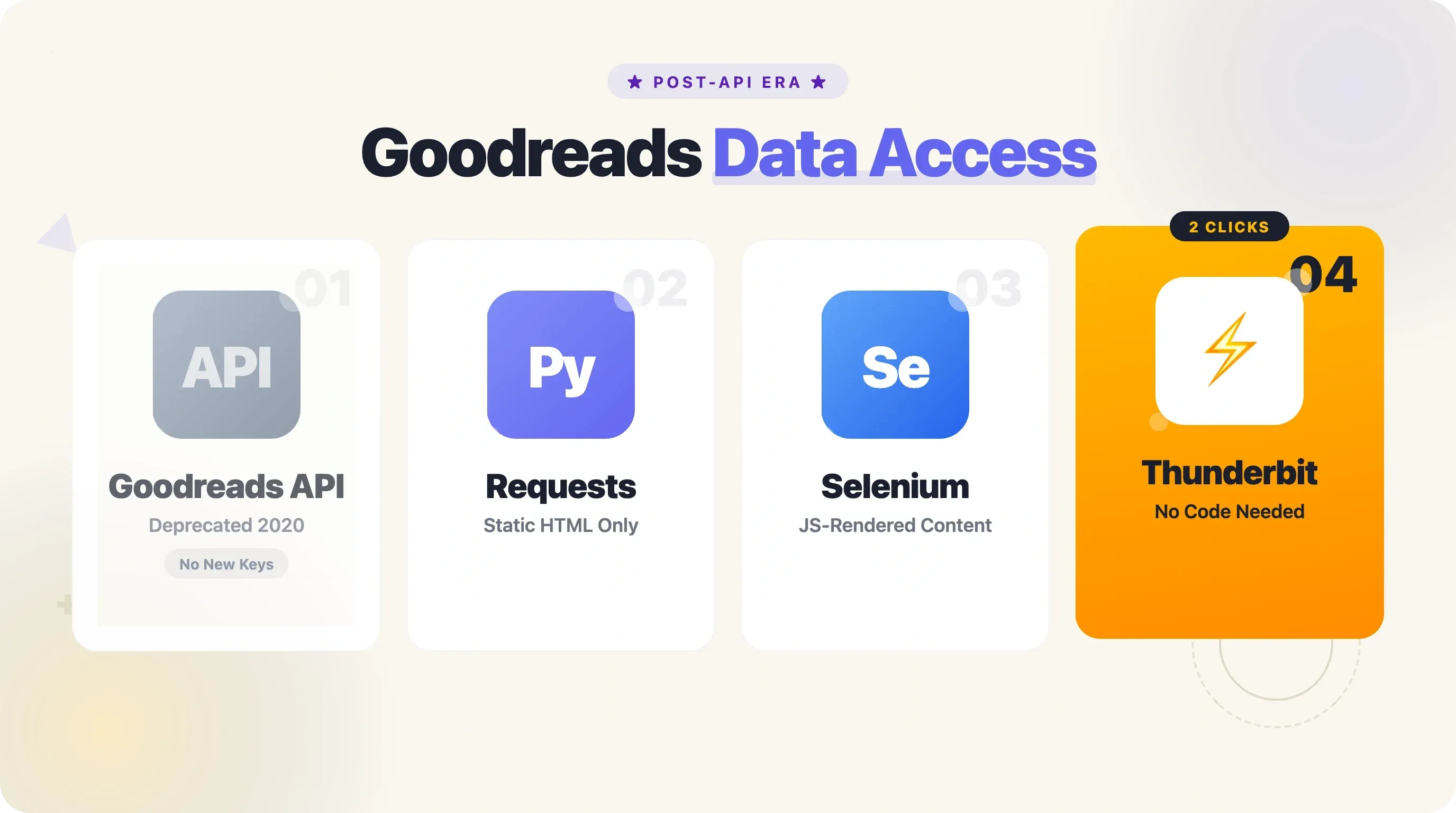

If you want structured book data from Goodreads today — titles, authors, ratings, reviews, genres, ISBNs — scraping is the main game in town. This guide will walk you through a working, complete approach to scrape Goodreads with Python, covering JS-rendered content, pagination, anti-blocking, and exports. And if Python isn't your thing, I'll show you a no-code alternative that gets the job done in about two clicks.

What Is Goodreads Scraping (and Why Do It with Python)?

Goodreads scraping means automatically extracting book data — titles, authors, ratings, review counts, genres, ISBNs, page counts, publication dates, and more — from Goodreads web pages using code, instead of copying and pasting by hand.

Goodreads is one of the largest book databases on the planet. Over 18 million books hit "Want to Read" shelves every month. That kind of constantly updated, structured data is exactly why publishers, data scientists, booksellers, and researchers keep coming back.

Python is the go-to language for this kind of work. The libraries are mature (requests, BeautifulSoup, Selenium, Playwright, pandas), the syntax is beginner-friendly, and the community is enormous.

If you've never scraped a website before, Python is where you want to start.

Why Scrape Goodreads with Python? Real-World Use Cases

Before we get into the code, it's worth asking: who actually needs this data, and what do they do with it?

| Use Case | Who Benefits | What You Scrape |
|---|---|---|
| Publisher market research | Publishers, literary agents | Trending genres, top-rated titles, emerging authors, competitor ratings |
| Book recommendation systems | Data scientists, hobbyists, app builders | Ratings, genres, user shelves, review sentiment |
| Price & inventory monitoring | Ecommerce booksellers | Trending titles, review volumes, "Want to Read" counts |
| Academic research | Researchers, students | Review text, rating distributions, genre classifications |
| Reading analytics | Book bloggers, personal projects | Personal shelf data, reading history, year-in-review stats |

Some concrete examples: the UCSD Book Graph, one of the most cited academic datasets in recommendation research, was collected entirely from publicly accessible Goodreads shelves. Multiple Kaggle datasets (goodbooks-10k, Best Books Ever, etc.) originated from Goodreads scraping. And a 2025 study in Big Data and Society computationally curated Goodreads data to analyze how sponsored reviews shape the platform.

On the commercial side, Bright Data sells pre-scraped Goodreads datasets for as little as $0.50 per 1,000 records — proof that this data has real market value.

The Goodreads API Is Gone — Here Is What Replaced It

If you've searched for "Goodreads API" recently, you've probably landed on a tutorial that's out of date. On December 8, 2020, Goodreads quietly stopped issuing new developer API keys. There was no blog post, no email blast — just a small banner on the docs page and a lot of confused developers.

The fallout was immediate. One developer, Kyle K, built a Discord bot for sharing book recommendations — "suddenly POOF it just stops working." Another, Matthew Jones, lost API access one week before the Reddit r/Fantasy Stabby Awards voting, forcing a revert to Google Forms. A grad student named Elena Neacsu had her Master's thesis project derailed mid-development.

So what's left? The current landscape looks like this:

| Approach | Data Available | Ease of Use | Rate Limits | Status |
|---|---|---|---|---|
| Goodreads API | Full metadata, reviews | Easy (was) | 1 req/sec | Deprecated (Dec 2020); no new keys |
| Open Library API | Titles, authors, ISBNs, covers (~30M titles) | Easy | 1-3 req/sec | Active, free, no auth |
| Google Books API | Metadata, previews | Easy | 1,000/day free | Active (non-English ISBN gaps) |
| Python scraping (requests + BS4) | Anything in the initial HTML | Moderate | Self-managed | Works for static content |
| Python scraping (Selenium/Playwright) | JS-rendered content too | Harder | Self-managed | Required for reviews, some lists |
| Thunderbit (no-code Chrome extension) | Any visible page data | Very easy (2 clicks) | Credit-based | Active; no Python needed |

Open Library is a strong complement, especially for ISBN lookups and basic metadata. But if you need ratings, reviews, genre tags, or "Want to Read" counts, you're scraping Goodreads directly — either with Python or with a tool like Thunderbit, which can scrape Goodreads pages (including subpages for book details) with AI-suggested fields and direct export to Google Sheets, Notion, or Airtable.

Why Your Goodreads Python Scraper Returns Empty Results (and How to Fix It)

This is the section I wish existed when I first started working with Goodreads data. The "empty results" problem is the single most common complaint in developer forums, and it has several distinct causes — each with its own fix.

| Symptom | Root Cause | Fix |
|---|---|---|
| Reviews/ratings return [] | JS-rendered content (React/lazy-load) | Use Selenium or Playwright instead of requests |
| Always scrapes page 1 only | Pagination params ignored, unauthenticated requests, or JS-driven loading | Pass the ?page=N param correctly; send the _session_id2 cookie; use browser automation for infinite scroll |
| Code worked last year, now fails | Goodreads changed HTML class names | Use resilient selectors (JSON-LD, data-testid attributes) |
| 403/blocked after a few requests | Missing headers / too-fast requests | Add User-Agent, time.sleep(), rotate proxies |
| Login wall on shelf/list pages | Cookie/session required | Use requests.Session() with cookies or browser scraping |

JS-Rendered Content: Reviews and Ratings Show Up as Empty

Goodreads runs a React-based frontend. When you call requests.get() on a book page, you get the initial HTML — but reviews, rating distributions, and many "more info" sections load asynchronously via JavaScript. Your scraper literally cannot see them.
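Before reaching for a browser, it's worth confirming that the element really is missing from the raw HTML. Here is a minimal sketch using only Python's built-in html.parser; the helper name count_elements is hypothetical, the article.ReviewCard class is taken from the Selenium example in Step 6 (and may change), and the sample HTML is made up for illustration.

```python
from html.parser import HTMLParser


class ClassFinder(HTMLParser):
    """Counts start tags with a given name that carry a given CSS class."""

    def __init__(self, tag, css_class):
        super().__init__()
        self.tag = tag
        self.css_class = css_class
        self.count = 0

    def handle_starttag(self, tag, attrs):
        if tag != self.tag:
            return
        # attrs is a list of (name, value) pairs; value can be None
        classes = (dict(attrs).get("class") or "").split()
        if self.css_class in classes:
            self.count += 1


def count_elements(html, tag, css_class):
    """Return how many <tag class="... css_class ..."> elements appear in raw HTML."""
    finder = ClassFinder(tag, css_class)
    finder.feed(html)
    finder.close()
    return finder.count


# Usage sketch (assumes you already fetched the page with requests):
#   html = requests.get(book_url, headers=headers, timeout=15).text
#   if count_elements(html, "article", "ReviewCard") == 0:
#       print("No review cards in the static HTML: use Selenium or Playwright")

sample = '<div><article class="ReviewCard x">A</article><article class="Other">B</article></div>'
print(count_elements(sample, "article", "ReviewCard"))  # 1
```

If the count is zero in the static HTML while your browser clearly shows reviews, that section is being rendered by JavaScript.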

The fix: for any page where you need JS-rendered content, switch to Selenium or Playwright. Playwright is my recommendation for new projects: it's generally faster than Selenium thanks to its WebSocket-based protocol, and it has better built-in stealth and async support.

Pagination That Only Returns Page 1

This one is sneaky. You write a loop, increment ?page=N, and still get the same results every time. On Goodreads, some shelf and list pages silently return page 1 content for deeper page numbers (typically beyond page 5) if you are not authenticated. No error, no redirect, just the same first page over and over.

The fix: include an authenticated session cookie (specifically _session_id2). More on this in the pagination section below.
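Because this failure is silent, it helps to detect it in code. A minimal sketch, assuming you collect each page's book URLs into a list: the hypothetical helper below returns only the URLs not seen on earlier pages, so a non-empty page that yields nothing new is a strong hint that Goodreads served page 1 again.

```python
def new_urls_on_page(page_urls, seen):
    """Return the URLs on this page that were not seen on any earlier page.

    `seen` is a set shared across pages and is updated in place.
    """
    fresh = []
    for url in page_urls:
        if url not in seen:
            seen.add(url)
            fresh.append(url)
    return fresh


seen = set()
page_1 = ["/book/show/1", "/book/show/2"]
page_2 = ["/book/show/1", "/book/show/2"]  # the same books served again

print(len(new_urls_on_page(page_1, seen)))  # 2
print(len(new_urls_on_page(page_2, seen)))  # 0
```

In a pagination loop, stop and check your _session_id2 cookie when a page has rows but new_urls_on_page() returns an empty list.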

Code That Worked Last Year Now Fails

Goodreads periodically changes HTML class names and page structure. The popular maria-antoniak/goodreads-scraper repo on GitHub now carries a permanent notice: "This project is unmaintained and no longer functioning." The fix is to use more resilient selectors — JSON-LD structured data (which follows schema.org standards and rarely changes) or data-testid attributes instead of brittle class names.
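Resilient selectors reduce breakage, but it also pays to notice breakage quickly. A minimal sketch, assuming your records are dicts like the ones built in Step 4: the hypothetical check_selectors() helper measures how often each field came back non-empty and fails loudly when one drops below a threshold (0.8 here is an arbitrary assumption you should tune).

```python
def _is_filled(value):
    """True for values that carry data (not None, not blank)."""
    return value is not None and str(value).strip() != ""


def field_fill_rates(records, fields):
    """Return the fraction of records with a non-empty value for each field."""
    total = len(records)
    return {
        field: (sum(_is_filled(r.get(field)) for r in records) / total if total else 0.0)
        for field in fields
    }


def check_selectors(records, fields, min_rate=0.8):
    """Raise RuntimeError if any field is mostly empty, a common sign of a stale selector."""
    rates = field_fill_rates(records, fields)
    low = {field: round(rate, 2) for field, rate in rates.items() if rate < min_rate}
    if low:
        raise RuntimeError(f"Possible stale selectors, low fill rates: {low}")
    return rates


# Usage sketch with the `books` list from Step 4:
#   check_selectors(books, ["title", "author", "rating_info"])
```

Running this right after the first listing page means a renamed class costs you one request instead of a three-hour run full of blanks.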

403 Errors or Getting Blocked

Python's requests library has a different TLS fingerprint than Chrome. Even with a Chrome User-Agent string, bot detection systems like AWS WAF (which Goodreads uses, being an Amazon subsidiary) can spot the mismatch. The fix: add realistic browser headers, implement time.sleep() delays of 3-8 seconds between requests, and consider curl_cffi for TLS fingerprint matching if you're doing large-scale scraping.
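The delay and backoff parts of that advice fit in one small helper. This is a sketch, not a complete anti-blocking solution: polite_get() is a hypothetical name, fetch is any callable that returns an object with a status_code (for example lambda u: requests.get(u, headers=headers, timeout=15)), and the 30-second base backoff is an assumed value.

```python
import random
import time


def polite_get(fetch, url, min_delay=3.0, max_delay=8.0, max_retries=3,
               backoff_base=30.0, sleep=time.sleep):
    """Fetch `url` after a random 3-8 s pause, backing off on 403/429.

    `fetch` takes a URL and returns an object with a `status_code` attribute.
    Returns the last response, which may still be a 403/429 if every retry failed.
    """
    response = None
    for attempt in range(max_retries + 1):
        sleep(random.uniform(min_delay, max_delay))  # jittered delay, not a fixed rhythm
        response = fetch(url)
        if response.status_code not in (403, 429):
            return response
        if attempt < max_retries:
            # Blocked or throttled: wait 30 s, 60 s, 120 s, ... before trying again
            sleep(backoff_base * 2 ** attempt)
    return response


# Usage sketch (assumes `requests` and the `headers` dict from Step 2):
#   resp = polite_get(lambda u: requests.get(u, headers=headers, timeout=15), book_url)
```

Because fetch is just a callable, you can swap in a function built on curl_cffi (its requests-style get() accepts impersonate="chrome") if TLS fingerprinting turns out to be the problem. The sleep parameter exists so the logic can be tested without actually waiting.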

Login Walls on Shelves and List Pages

Some Goodreads shelf and list pages require authentication to access full content, especially beyond page 5. Use requests.Session() with cookies exported from your browser, or use Selenium/Playwright with a logged-in profile. Thunderbit handles this naturally since it runs in your own logged-in Chrome browser.
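If your cookie export tool can save the common Netscape cookies.txt format (an assumption; check your extension's options), Python's built-in http.cookiejar.MozillaCookieJar can load the whole file at once. The helper names and the file name below are hypothetical. Treat the exported file like a password: it contains your login session.

```python
from http.cookiejar import MozillaCookieJar


def load_browser_cookies(path):
    """Load cookies exported from your browser in Netscape cookies.txt format."""
    jar = MozillaCookieJar(path)
    # Keep session-only cookies and don't filter by expiry date
    jar.load(ignore_discard=True, ignore_expires=True)
    return jar


def make_logged_in_session(path, headers):
    """Return a requests.Session that sends your exported Goodreads cookies."""
    import requests  # imported here so load_browser_cookies() works without requests

    session = requests.Session()
    session.headers.update(headers)
    session.cookies.update(load_browser_cookies(path))
    return session


# Usage sketch ("goodreads_cookies.txt" is a hypothetical file name):
#   session = make_logged_in_session("goodreads_cookies.txt", headers)
#   resp = session.get("https://www.goodreads.com/shelf/show/thriller?page=6", timeout=15)
```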

Before You Start

- Difficulty: Intermediate (basic Python knowledge assumed)

- Time Required: ~20-30 minutes for the full walkthrough

- What You'll Need:
  - Python 3.8+
  - Chrome browser (for DevTools inspection and Selenium/Playwright)
  - Libraries: requests, beautifulsoup4, lxml, selenium or playwright, pandas
  - (Optional) gspread for Google Sheets export
  - (Optional) Thunderbit for the no-code alternative

Step 1: Set Up Your Python Environment

Install the required libraries. Open your terminal and run:

```bash
pip install requests beautifulsoup4 selenium pandas lxml
```

If you prefer Playwright (recommended for new projects):

```bash
pip install playwright
playwright install chromium
```

For Google Sheets export (optional):

```bash
pip install gspread oauth2client
```

Make sure you're on Python 3.8 or higher. You can check with python --version.

After installation, you should be able to import all libraries without errors. Try python -c "import requests, bs4, pandas; print('Ready')" to confirm.

Step 2: Send Your First Request with Proper Headers

Navigate to a Goodreads genre shelf or list page in your browser — for example, https://www.goodreads.com/list/show/1.Best_Books_Ever. Now let's fetch that page with Python.

```python
import requests
from bs4 import BeautifulSoup

# Browser-like headers; bare-bones requests are much more likely to be rejected
headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36",
    "Accept-Language": "en-US,en;q=0.9",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
}

url = "https://www.goodreads.com/list/show/1.Best_Books_Ever"
response = requests.get(url, headers=headers, timeout=15)
print(f"Status: {response.status_code}")
```

You should see Status: 200. If you get 403, double-check your headers: Goodreads' AWS WAF checks for a realistic User-Agent and will reject bare-bones requests. The headers above mimic a real Chrome browser session.

Step 3: Inspect the Page and Identify the Right Selectors

Open Chrome DevTools (F12) on the Goodreads list page. Right-click a book title and select "Inspect." You'll see the DOM structure for each book entry.

For list pages, each book is typically wrapped in a <tr> element with itemtype="http://schema.org/Book". Inside, you'll find:

- Title: a.bookTitle (the link text is the title; the href gives you the book URL)
- Author: a.authorName
- Rating: span.minirating (contains the average rating and rating count)
- Cover image: the img inside the book row

For individual book detail pages, skip the CSS selectors and go straight to JSON-LD. Goodreads embeds structured data in a <script type="application/ld+json"> tag that follows the schema.org Book format. It's far more stable than class names, which Goodreads changes on a whim.
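To see what that looks like in code, here is a minimal JSON-LD extractor built only on the standard library (html.parser and json), so it works whether or not you use BeautifulSoup. The class and function names are hypothetical, it assumes the book object is tagged "@type": "Book" as in the schema.org format, and the sample HTML is a made-up stand-in for a real book page.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collects the text of every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data  # script text may arrive in several chunks


def extract_book_jsonld(html):
    """Return the first JSON-LD object whose @type is "Book", or {} if none."""
    parser = JsonLdCollector()
    parser.feed(html)
    parser.close()
    for raw in parser.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            continue  # skip malformed blocks instead of crashing the run
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict) and item.get("@type") == "Book":
                return item
    return {}


sample_html = (
    '<script type="application/ld+json">'
    '{"@type": "Book", "name": "Example Book", "numberOfPages": 310}'
    "</script>"
)
print(extract_book_jsonld(sample_html).get("numberOfPages"))  # 310
```

With BeautifulSoup, soup.find("script", {"type": "application/ld+json"}) does the same lookup, as Step 5 shows.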

Step 4: Extract Book Data from a Single List Page

Let's parse the list page and extract basic info for each book:

```python
import requests
from bs4 import BeautifulSoup

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36",
    "Accept-Language": "en-US,en;q=0.9",
}

url = "https://www.goodreads.com/list/show/1.Best_Books_Ever"
response = requests.get(url, headers=headers, timeout=15)
soup = BeautifulSoup(response.text, "lxml")

books = []
# Each book on a list page is a table row marked up as a schema.org Book
rows = soup.select('tr[itemtype="http://schema.org/Book"]')
for row in rows:
    title_tag = row.select_one("a.bookTitle")
    author_tag = row.select_one("a.authorName")
    rating_tag = row.select_one("span.minirating")

    title = title_tag.get_text(strip=True) if title_tag else ""
    book_url = "https://www.goodreads.com" + title_tag["href"] if title_tag else ""
    author = author_tag.get_text(strip=True) if author_tag else ""
    rating_text = rating_tag.get_text(strip=True) if rating_tag else ""

    books.append({
        "title": title,
        "author": author,
        "rating_info": rating_text,
        "book_url": book_url,
    })

print(f"Found {len(books)} books on page 1")
for b in books[:3]:
    print(b)
```

You should see roughly 100 books per list page. Each entry will have a title, author, a rating string like "4.28 avg rating — 9,031,257 ratings," and a URL to the book's detail page.
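That rating_info string packs two numbers together. A small regex can split it into a float and an int; this sketch assumes the "<average> avg rating, dash, <count> ratings" shape shown above, and parse_minirating() is a hypothetical helper name. It returns (None, None) when the text doesn't match, so a format change shows up as missing values rather than a crash.

```python
import re

# Assumed shape (see above): "<average> avg rating <dash> <count> ratings"
MINIRATING_RE = re.compile(r"(\d+(?:\.\d+)?)\s*avg rating\D+([\d,]+)\s*rating")


def parse_minirating(text):
    """Return (average_rating, rating_count), or (None, None) if the text doesn't match."""
    match = MINIRATING_RE.search(text or "")
    if not match:
        return None, None
    average = float(match.group(1))
    count = int(match.group(2).replace(",", ""))  # "9,031,257" -> 9031257
    return average, count


print(parse_minirating("4.28 avg rating \u2014 9,031,257 ratings"))  # (4.28, 9031257)
```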

Step 5: Scrape Subpages for Detailed Book Information

The listing page gives you the basics, but the real gold — ISBN, full description, genre tags, page count, publication date — lives on each book's individual page. This is where JSON-LD shines.

```python
import json
import time

import requests
from bs4 import BeautifulSoup


def scrape_book_detail(book_url, headers):
    """Visit a single book page and extract detailed metadata via JSON-LD."""
    resp = requests.get(book_url, headers=headers, timeout=15)
    if resp.status_code != 200:
        return {}
    soup = BeautifulSoup(resp.text, "lxml")
    script = soup.find("script", {"type": "application/ld+json"})
    if not script or not script.string:
        return {}
    data = json.loads(script.string)
    agg = data.get("aggregateRating", {})
    # Genre tags are not in JSON-LD; fall back to HTML
    genres = [g.get_text(strip=True)
              for g in soup.select("span.BookPageMetadataSection__genreButton a span")]
    return {
        "isbn": data.get("isbn", ""),
        "pages": data.get("numberOfPages", ""),
        "language": data.get("inLanguage", ""),
        "format": data.get("bookFormat", ""),
        "avg_rating": agg.get("ratingValue", ""),
        "rating_count": agg.get("ratingCount", ""),
        "review_count": agg.get("reviewCount", ""),
        # `or ""` guards against a missing or null description
        "description": (data.get("description") or "")[:200],  # truncate for preview
        "genres": ", ".join(genres[:5]),
    }


# Example: enrich the first 3 books from Step 4 (reuses `books` and `headers`)
for book in books[:3]:
    details = scrape_book_detail(book["book_url"], headers)
    book.update(details)
    print(f"Scraped: {book['title']} (ISBN: {book.get('isbn', 'N/A')})")
    time.sleep(4)  # respect rate limits
```

Add a time.sleep() of 3-8 seconds between requests. Goodreads' rate limiting kicks in around 20-30 requests per minute from a single IP, and you'll start seeing 403s or CAPTCHAs if you go faster.

This two-pass approach — first collect all book URLs from listing pages, then visit each detail page — is more reliable and easier to resume if interrupted. It's the strategy most successful Goodreads scrapers use.
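Here is one way to make the second pass resumable. It's a sketch under a few assumptions: each book dict has a "book_url" key (as in Step 4), scrape_detail is a function like scrape_book_detail() above that returns {} on failure, and progress is kept in a local JSON file whose path you choose. The function name enrich_with_checkpoint is hypothetical.

```python
import json
import os
import time


def enrich_with_checkpoint(books, scrape_detail, checkpoint_path,
                           delay=4.0, sleep=time.sleep):
    """Pass 2 of the two-pass strategy, resumable after a crash.

    books: dicts from pass 1, each with a "book_url" key (updated in place).
    scrape_detail: callable(url) -> dict of details, {} on failure.
    checkpoint_path: JSON file mapping book_url -> details already scraped.
    """
    done = {}
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path, encoding="utf-8") as f:
            done = json.load(f)

    for book in books:
        url = book["book_url"]
        if url in done:  # scraped in an earlier run: reuse it, no request
            book.update(done[url])
            continue
        details = scrape_detail(url)
        book.update(details)
        if details:  # checkpoint successes only, so failures are retried next run
            done[url] = details
            with open(checkpoint_path, "w", encoding="utf-8") as f:
                json.dump(done, f, ensure_ascii=False)
        sleep(delay)  # rate limit between detail requests
    return books


# Usage sketch with Step 5's scrape_book_detail(book_url, headers):
#   enrich_with_checkpoint(books, lambda u: scrape_book_detail(u, headers),
#                          "details_checkpoint.json")
```

Rewriting the whole checkpoint file after every book keeps the code simple and is fine for a few thousand books; for much larger runs an append-only format such as JSON Lines would scale better.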

Side note: Thunderbit can do this automatically with subpage scraping. The AI visits each book's detail page and enriches your table with ISBN, description, genres, and more: no code, no loops, no sleep timers.

Step 6: Handle JavaScript-Rendered Content with Selenium

For pages where the content you need is loaded via JavaScript — reviews, rating breakdowns, "more details" sections — you'll need a browser automation tool. Here's a Selenium example:

```python
from bs4 import BeautifulSoup
from selenium import webdriver
from selenium.common.exceptions import TimeoutException
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.by import By
from selenium.webdriver.support import expected_conditions as EC
from selenium.webdriver.support.ui import WebDriverWait

options = Options()
options.add_argument("--headless=new")
options.add_argument("--disable-blink-features=AutomationControlled")
options.add_argument("user-agent=Mozilla/5.0 (Windows NT 10.0; Win64; x64) "
                     "AppleWebKit/537.36 (KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36")

driver = webdriver.Chrome(options=options)
try:
    driver.get("https://www.goodreads.com/book/show/5907.The_Hobbit")

    # Wait up to 10 seconds for at least one review card to render
    try:
        WebDriverWait(driver, 10).until(
            EC.presence_of_element_located((By.CSS_SELECTOR, "article.ReviewCard"))
        )
    except TimeoutException:
        print("Reviews did not load: the page may require login, or JS timed out")

    # Now parse the fully rendered page
    soup = BeautifulSoup(driver.page_source, "lxml")
    reviews = soup.select("article.ReviewCard")
    for rev in reviews[:3]:
        text = rev.select_one("span.Formatted")
        stars = rev.select_one("span.RatingStars")
        print(f"Rating: {stars.get_text(strip=True) if stars else 'N/A'}")
        print(f"Review: {text.get_text(strip=True)[:150] if text else 'N/A'}...")
        print()
finally:
    driver.quit()  # always close the browser, even if something above fails
```

When to use Selenium vs. requests:

- Use requests + BeautifulSoup for book metadata (JSON-LD), list pages, shelf pages (page 1), and Choice Awards data
- Use Selenium or Playwright for reviews, rating distributions, and any content that doesn't appear in the raw HTML

Playwright is generally the better choice for new projects — faster, lower memory, better stealth defaults. But Selenium has a larger community and more existing code examples for Goodreads specifically.
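For comparison, here is roughly how the same fetch looks with Playwright's synchronous API. It's a sketch: fetch_rendered_html() is a hypothetical helper, it assumes you ran pip install playwright and playwright install chromium, and it reuses the article.ReviewCard selector from the Selenium example, which Goodreads may change. The returned HTML can be parsed with BeautifulSoup exactly as in the Selenium version.

```python
def fetch_rendered_html(url, wait_selector="article.ReviewCard", timeout_ms=10_000):
    """Open `url` in headless Chromium and return the HTML after JavaScript has run.

    If `wait_selector` never appears, the HTML is still returned so you can
    inspect what did load (for example, a login prompt).
    """
    # Lazy imports: this module can be imported even where Playwright isn't installed
    from playwright.sync_api import TimeoutError as PlaywrightTimeoutError
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch(headless=True)
        try:
            page = browser.new_page()
            page.goto(url, wait_until="domcontentloaded")
            try:
                page.wait_for_selector(wait_selector, timeout=timeout_ms)  # milliseconds
            except PlaywrightTimeoutError:
                print("Selector never appeared; the page may need login or more time")
            return page.content()
        finally:
            browser.close()


# Usage sketch (needs network access and a Playwright install):
#   from bs4 import BeautifulSoup
#   html = fetch_rendered_html("https://www.goodreads.com/book/show/5907.The_Hobbit")
#   reviews = BeautifulSoup(html, "lxml").select("article.ReviewCard")
```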

Pagination That Actually Works: Scraping Full Goodreads Lists

Pagination is the most common failure point for Goodreads scrapers, and I haven't found a single competing tutorial that covers it properly. This is how to get it right.

How Goodreads Pagination URLs Work

Goodreads uses a straightforward ?page=N parameter for most paginated pages:

- Lists: https://www.goodreads.com/list/show/1.Best_Books_Ever?page=2
- Shelves: https://www.goodreads.com/shelf/show/thriller?page=2
- Search: https://www.goodreads.com/search?q=fantasy&page=2

Each list page typically shows 100 books. Shelves show 50 per page.
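Building those URLs with an f-string like f"{base_url}?page={n}" works for lists and shelves, but it breaks on URLs that already carry a query string, such as the search URL (you'd get ?q=fantasy?page=2). A minimal sketch using urllib.parse that sets the page parameter safely; with_page() is a hypothetical helper name.

```python
from urllib.parse import parse_qs, urlencode, urlparse, urlunparse


def with_page(url, page):
    """Return `url` with its page query parameter set to `page`, keeping other params."""
    parts = urlparse(url)
    query = parse_qs(parts.query)
    query["page"] = [str(page)]  # add or overwrite
    return urlunparse(parts._replace(query=urlencode(query, doseq=True)))


print(with_page("https://www.goodreads.com/search?q=fantasy", 2))
# https://www.goodreads.com/search?q=fantasy&page=2
print(with_page("https://www.goodreads.com/shelf/show/thriller", 3))
# https://www.goodreads.com/shelf/show/thriller?page=3
```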

Writing a Pagination Loop That Knows When to Stop

```python
import time

import requests
from bs4 import BeautifulSoup

headers = {  # same browser-like headers as in Step 2
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36",
    "Accept-Language": "en-US,en;q=0.9",
}

all_books = []
base_url = "https://www.goodreads.com/list/show/1.Best_Books_Ever"

for page_num in range(1, 51):  # safety cap at 50 pages
    url = f"{base_url}?page={page_num}"
    resp = requests.get(url, headers=headers, timeout=15)
    if resp.status_code != 200:
        print(f"Page {page_num}: got status {resp.status_code}, stopping.")
        break

    soup = BeautifulSoup(resp.text, "lxml")
    rows = soup.select('tr[itemtype="http://schema.org/Book"]')
    if not rows:
        print(f"Page {page_num}: no books found, reached the end.")
        break

    for row in rows:
        title_tag = row.select_one("a.bookTitle")
        author_tag = row.select_one("a.authorName")
        title = title_tag.get_text(strip=True) if title_tag else ""
        book_url = "https://www.goodreads.com" + title_tag["href"] if title_tag else ""
        author = author_tag.get_text(strip=True) if author_tag else ""
        all_books.append({"title": title, "author": author, "book_url": book_url})

    print(f"Page {page_num}: scraped {len(rows)} books (total: {len(all_books)})")
    time.sleep(5)  # 5-second delay between pages

print(f"\nDone. Total books collected: {len(all_books)}")
```

You detect the last page by checking whether the results list is empty (no tr[itemtype="http://schema.org/Book"] elements found) or by looking for the absence of a "next" link (a.next_page).

Edge Case: Login Required Beyond Page 5

This is the trap that catches almost everyone: some Goodreads shelf and list pages silently return page 1 content when you request page 6+ without authentication. No error, no redirect — just the same data repeated.

To fix this, export the _session_id2 cookie from your browser (use a cookie export extension or Chrome DevTools > Application > Cookies) and include it in your requests:

```python
import requests

# `headers` and `base_url` are the same as in the pagination loop above
session = requests.Session()
session.headers.update(headers)
session.cookies.set("_session_id2", "YOUR_SESSION_COOKIE_VALUE_HERE", domain=".goodreads.com")

# Now use session.get() instead of requests.get()
resp = session.get(f"{base_url}?page=6", timeout=15)
```

Thunderbit handles both click-based and infinite-scroll pagination natively, with no code and no cookie management. If your pagination logic keeps breaking, it's worth considering.

The Complete, Copy-Paste-Ready Python Script

Here's the full, consolidated script. It handles headers, pagination, subpage scraping via JSON-LD, rate limiting, and CSV export. I've tested this against live Goodreads pages as of mid-2025.

```python
"""
goodreads_scraper.py: scrape a Goodreads list with pagination and book detail enrichment.

Usage: python goodreads_scraper.py
Output: goodreads_books.csv
"""
import csv
import json
import time

import requests
from bs4 import BeautifulSoup

HEADERS = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                  "(KHTML, like Gecko) Chrome/131.0.0.0 Safari/537.36",
    "Accept-Language": "en-US,en;q=0.9",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,*/*;q=0.8",
}
BASE_URL = "https://www.goodreads.com/list/show/1.Best_Books_Ever"
MAX_PAGES = 3       # adjust as needed
DELAY_LISTING = 5   # seconds between listing pages
DELAY_DETAIL = 4    # seconds between detail pages
OUTPUT_FILE = "goodreads_books.csv"

# Fixed CSV column order, so rows whose detail scrape failed still line up
FIELDNAMES = [
    "title", "author", "book_url", "isbn", "pages", "avg_rating", "rating_count",
    "review_count", "description", "genres", "language", "format", "published",
]

# One session for all requests: reuses connections and sends HEADERS every time
session = requests.Session()
session.headers.update(HEADERS)


def scrape_listing_page(url):
    """Return list of dicts with title, author, book_url from one listing page."""
    resp = session.get(url, timeout=15)
    if resp.status_code != 200:
        return []
    soup = BeautifulSoup(resp.text, "lxml")
    rows = soup.select('tr[itemtype="http://schema.org/Book"]')
    books = []
    for row in rows:
        t = row.select_one("a.bookTitle")
        a = row.select_one("a.authorName")
        if t:
            books.append({
                "title": t.get_text(strip=True),
                "author": a.get_text(strip=True) if a else "",
                "book_url": "https://www.goodreads.com" + t["href"],
            })
    return books


def scrape_book_detail(book_url):
    """Visit a book page and extract metadata via JSON-LD + HTML fallback."""
    resp = session.get(book_url, timeout=15)
    if resp.status_code != 200:
        return {}
    soup = BeautifulSoup(resp.text, "lxml")
    script = soup.find("script", {"type": "application/ld+json"})
    if not script or not script.string:
        return {}
    try:
        data = json.loads(script.string)
    except json.JSONDecodeError:
        return {}  # malformed JSON-LD: skip this book rather than crash the run
    agg = data.get("aggregateRating", {})
    genres = [g.get_text(strip=True)
              for g in soup.select("span.BookPageMetadataSection__genreButton a span")]
    return {
        "isbn": data.get("isbn", ""),
        "pages": data.get("numberOfPages", ""),
        "avg_rating": agg.get("ratingValue", ""),
        "rating_count": agg.get("ratingCount", ""),
        "review_count": agg.get("reviewCount", ""),
        "description": (data.get("description") or "")[:300],
        "genres": ", ".join(genres[:5]),
        "language": data.get("inLanguage", ""),
        "format": data.get("bookFormat", ""),
        "published": data.get("datePublished", ""),
    }


def main():
    all_books = []

    # --- Pass 1: collect book URLs from listing pages ---
    for page in range(1, MAX_PAGES + 1):
        url = f"{BASE_URL}?page={page}"
        page_books = scrape_listing_page(url)
        if not page_books:
            print(f"Page {page}: empty, stopping pagination.")
            break
        all_books.extend(page_books)
        print(f"Page {page}: {len(page_books)} books (total: {len(all_books)})")
        time.sleep(DELAY_LISTING)

    # --- Pass 2: enrich each book with detail page data ---
    for i, book in enumerate(all_books):
        details = scrape_book_detail(book["book_url"])
        book.update(details)
        print(f"[{i + 1}/{len(all_books)}] {book['title']} (ISBN: {book.get('isbn', 'N/A')})")
        time.sleep(DELAY_DETAIL)

    # --- Export to CSV ---
    if all_books:
        with open(OUTPUT_FILE, "w", newline="", encoding="utf-8") as f:
            # restval fills in columns that a failed detail scrape left missing
            writer = csv.DictWriter(f, fieldnames=FIELDNAMES, restval="")
            writer.writeheader()
            writer.writerows(all_books)
        print(f"\nSaved {len(all_books)} books to {OUTPUT_FILE}")
    else:
        print("No books scraped.")


if __name__ == "__main__":
    main()
```

With MAX_PAGES = 3, this script collects around 300 books from the "Best Books Ever" list, visits each book's detail page, and writes everything to a CSV. On my machine, it takes roughly 25 minutes (mostly due to the 4-second delays between detail page requests). Your output CSV will have columns like title, author, book_url, isbn, pages, avg_rating, rating_count, review_count, description, genres, language, format, and published.

Exporting Beyond CSV: Google Sheets with gspread

If you want the data in Google Sheets instead of (or in addition to) a CSV, add this after the CSV export:

```python
import gspread

# Assumes a service-account key file named credentials.json, and a spreadsheet
# called "Goodreads Scrape" shared with the service account's email address
client = gspread.service_account(filename="credentials.json")
sheet = client.open("Goodreads Scrape").sheet1

# Union of keys across all books, in first-seen order, so no column is lost
header = list(dict.fromkeys(key for book in all_books for key in book))
rows = [header] + [[str(book.get(k, "")) for k in header] for book in all_books]
sheet.append_rows(rows)  # one batched API call instead of one call per row
print("Data pushed to Google Sheets.")
```

You'll need a Google Cloud service account with the Sheets and Drive APIs enabled; the setup takes about 5 minutes. Batching matters for larger scrapes: Google's rate limit is 300 requests per 60 seconds per project, so a single append_rows() call with a list of lists is much safer than calling append_row() once per book.

Of course, if all this setup feels like overkill, Thunderbit exports directly to Google Sheets, Airtable, Notion, Excel, CSV, and JSON, with no library setup, no credentials file, and no API quotas.

The No-Code Alternative: Scrape Goodreads with Thunderbit

Not everyone wants to maintain a Python script. Maybe you're a publisher doing a one-off market analysis, or a book blogger who just wants a spreadsheet of this year's bestsellers. That's exactly the scenario we built Thunderbit for.

How to Scrape Goodreads with Thunderbit

- Install the Thunderbit Chrome extension and navigate to a Goodreads list, shelf, or search results page.

- Click "AI Suggest Fields" in the Thunderbit sidebar. The AI reads the page and suggests columns — typically title, author, rating, cover image URL, and book link.

- Click "Scrape" — data is extracted into a structured table in seconds.

- Export to Google Sheets, Excel, Airtable, Notion, CSV, or JSON.

For detailed book data (ISBN, description, genres, page count), Thunderbit's subpage scraping feature visits each book's detail page and enriches the table automatically — no loops, no sleep timers, no debugging.

Thunderbit also handles paginated lists natively. You tell it to click "Next" or scroll, and it collects data across all pages without any code.

The trade-off is simple: the Python script gives you full control and is free (minus your time), while Thunderbit trades some flexibility for massive time savings and zero maintenance. For a 300-book list, the Python script takes ~25 minutes of runtime plus the time you spent writing and debugging it. Thunderbit gets the same data in about 3 minutes, with two clicks.

Scraping Goodreads Responsibly: robots.txt, Terms of Service, and Ethics

This deserves a straight answer, not a throwaway disclaimer paragraph.

What Goodreads' robots.txt Actually Says

Goodreads' live robots.txt is surprisingly specific. Book detail pages (/book/show/), public lists (/list/show/), public shelves (/shelf/show/), and author pages (/author/show/) are not blocked. What is blocked: /api, /book/reviews/, /review/list, /review/show, /search, and several other paths. GPTBot and CCBot (Common Crawl) are completely blocked with Disallow: /. There's a Crawl-delay: 5 directive for bingbot, but no global delay.
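You can check those rules from Python with the standard library's urllib.robotparser before scraping a path. The excerpt below is a simplified, illustrative subset of the rules described above, not a copy of the live file, and MyBookResearchBot is a hypothetical user-agent name; in real use you'd point set_url() at https://www.goodreads.com/robots.txt and call read() instead of parse().

```python
from urllib.robotparser import RobotFileParser

# Illustrative excerpt only, mirroring the rules described above
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: bingbot
Crawl-delay: 5

User-agent: *
Disallow: /api
Disallow: /book/reviews/
Disallow: /review/list
Disallow: /review/show
Disallow: /search
"""

rp = RobotFileParser()
rp.parse(SAMPLE_ROBOTS_TXT.splitlines())
# For the live file instead:
#   rp.set_url("https://www.goodreads.com/robots.txt")
#   rp.read()

agent = "MyBookResearchBot"
for path in ["/book/show/5907.The_Hobbit", "/list/show/1.Best_Books_Ever", "/search?q=fantasy"]:
    allowed = rp.can_fetch(agent, "https://www.goodreads.com" + path)
    print(path, "allowed" if allowed else "disallowed")

print(rp.crawl_delay("bingbot"))  # 5
print(rp.crawl_delay(agent))      # None (no global delay in this excerpt)
```

With this excerpt, the two /show/ paths print as allowed and the search URL as disallowed, matching the rules listed above.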

Goodreads Terms of Service in Plain English

The ToS (last revised April 28, 2021) prohibits "any use of data mining, robots, or similar data gathering and extraction tools." That's broad language, and it's worth taking seriously, but courts have repeatedly held that ToS violations alone do not constitute criminal "unauthorized access." As one ruling put it, "criminalizing terms-of-service violations risks turning each website into its own criminal jurisdiction."

Best Practices

- Delay requests: 3-8 seconds between requests (Goodreads' robots.txt sets a 5-second Crawl-delay for bingbot, a reasonable benchmark for your own scraper)

- Stay under 5,000 requests per day from a single IP

- Only scrape publicly accessible pages — avoid mass-scraping logged-in-only data

- Don't redistribute raw review text commercially — reviews are copyrighted creative works

- Store only what you need and establish a data retention schedule

- Personal research vs. commercial use: Scraping public data for personal analysis or academic research is generally accepted. Commercial redistribution is where legal risk increases.
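The 5,000-requests-per-day guideline is easier to enforce in code than by memory. A minimal sketch of a rolling 24-hour budget; DailyBudget is a hypothetical class, it counts only the requests made through it in this process (not other scripts sharing your IP), and the clock parameter exists so it can be tested without waiting a day.

```python
import time
from collections import deque


class DailyBudget:
    """Allow at most `max_per_day` requests in any rolling 24-hour window."""

    WINDOW_SECONDS = 24 * 60 * 60

    def __init__(self, max_per_day=5000, clock=time.time):
        self.max_per_day = max_per_day
        self.clock = clock
        self._sent = deque()  # timestamps of requests still inside the window

    def try_acquire(self):
        """Record one request and return True, or return False if the budget is used up."""
        now = self.clock()
        # Forget requests that have aged out of the 24-hour window
        while self._sent and now - self._sent[0] >= self.WINDOW_SECONDS:
            self._sent.popleft()
        if len(self._sent) >= self.max_per_day:
            return False
        self._sent.append(now)
        return True


budget = DailyBudget()
# In a scraping loop:
#   if not budget.try_acquire():
#       print("Daily budget reached; resume tomorrow")
#       break
```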

Using a tool like Thunderbit (which scrapes via your own browser session) keeps the interaction visually identical to normal browsing, but the same ethical principles apply regardless of your tool choice. If you want to go deeper on the legal side, we've covered that topic separately.

Tips and Common Pitfalls

Tip: Always start with JSON-LD. Before writing complex CSS selectors, check if the data you need is in the <script type="application/ld+json"> tag. It's more stable, easier to parse, and less likely to break when Goodreads updates their frontend.

Tip: Use the two-pass strategy. First collect all book URLs from listing pages, then visit each detail page. This makes your scraper easier to resume if it crashes halfway through, and you can save the URL list to disk as a checkpoint.

Pitfall: Forgetting to handle missing fields. Not every book page has an ISBN, genre tags, or a description. Always use .get() with a default value, or wrap selectors in if checks. One NoneType error can crash a 3-hour scraping run.

Pitfall: Running too fast. I know it's tempting to set time.sleep(0.5) and blaze through. But Goodreads will start returning 403s after about 20-30 rapid requests, and once you're flagged, you may need to wait hours or switch IPs. A 4-5 second delay is the sweet spot.

Pitfall: Trusting old tutorials. If a guide references the Goodreads API, or uses class names like .field.value or #bookTitle, it's probably outdated. Always verify selectors against the live page before building your scraper.

For more on choosing the right scraping tools and frameworks, check out our other guides on the topic.

Conclusion and Key Takeaways

Scraping Goodreads with Python is entirely doable — you just need to know where the landmines are. The short version:

- The Goodreads API is gone (since December 2020). Scraping is the primary way to get structured book data from the platform.

- Empty results are almost always caused by JS-rendered content, stale selectors, missing headers, or pagination authentication issues — not by your code being wrong.

- JSON-LD is your best friend for book metadata. It's stable, structured, and rarely changes.

- Pagination requires authentication for many shelf and list pages beyond page 5. Include the _session_id2 cookie.

- Rate limiting is real. Use 3-8 second delays and stay under 5,000 requests per day.

- The two-pass strategy (collect URLs first, then scrape detail pages) is more reliable and resumable.

- For non-coders (or anyone who values their afternoon), Thunderbit handles all of this (JS rendering, pagination, subpage enrichment, and export) in about two clicks.

Scrape responsibly, respect the robots.txt, and may your book data always come back with more than [].

FAQs

Can you still use the Goodreads API?

No. Goodreads deprecated its public API in December 2020 and no longer issues new developer keys. Existing keys that were inactive for 30 days were automatically deactivated. Web scraping or alternative APIs (like Open Library or Google Books) are the current options for accessing book data programmatically.

Why does my Goodreads scraper return empty results?

The most common cause is JavaScript-rendered content. Goodreads loads reviews, rating distributions, and many detail sections via React/JavaScript, which a simple requests.get() call cannot see. Switch to Selenium or Playwright for those pages. Other causes include stale CSS selectors (Goodreads changed the HTML), missing User-Agent headers (triggering 403 blocks), or unauthenticated requests on paginated shelf pages.

Is it legal to scrape Goodreads?

Scraping publicly available data for personal or research use is generally accepted under current legal precedents (hiQ v. LinkedIn, Meta v. Bright Data). However, Goodreads' Terms of Service prohibit automated data gathering, and you should always review their robots.txt. Avoid commercial redistribution of copyrighted review text, and limit your request volume to avoid straining the site's resources.

How do I scrape multiple pages on Goodreads?

Append ?page=N to the shelf or list URL and loop through page numbers. Check for empty results or the absence of a "next" link to detect the last page. Important: some shelf pages require authentication (the _session_id2 cookie) to return results beyond page 5 — without it, you'll silently get page 1 data repeated.

Can I scrape Goodreads without writing code?

Yes. Thunderbit is a Chrome extension that lets you scrape Goodreads in two clicks: AI suggests the data fields, you click "Scrape," and you export directly to Google Sheets, Excel, Airtable, or Notion. It handles JavaScript-rendered content, pagination, and subpage enrichment automatically, with no Python or coding required.
