My Amazon review scraper worked perfectly for six weeks — then one morning it returned 200 OK and a page full of nothing. No error, no CAPTCHA, just empty HTML where hundreds of reviews used to be.

If that sounds familiar, you're not alone. In late 2024, Amazon started gating its full review pages behind a login wall, and a huge number of Python scraping scripts broke overnight. I've spent the last few months at Thunderbit working through the problem from both sides: building our AI scraper and maintaining my own Python review pipeline. So I figured it was time to write the guide I wish I'd had when my script first went dark.

This post covers the working approach:

- cookie-based authentication
- stable selectors that survive Amazon's CSS obfuscation
- workarounds for the 10-page pagination cap
- anti-bot defenses
- a bonus sentiment analysis section that turns raw review text into actual business insights

And if you get halfway through and think, "I'd rather not maintain all this code," I'll show you how Thunderbit handles the same job in about two minutes with zero Python.

What Is Amazon Review Scraping (and Why Does It Matter)?

Amazon review scraping is the process of programmatically extracting customer review data from Amazon product pages: star ratings, review text, author names, dates, and verified purchase badges. Since Amazon stopped providing review content through its official API (and never brought it back), web scraping is the only programmatic path to this data.

The numbers back this up. , and . Displaying just 5 reviews on a product page can . Companies that systematically analyze review sentiment see . This isn't abstract data science — it's competitive intelligence, product improvement signals, and marketing language, all sitting in plain text on Amazon's servers.

Why Scrape Amazon Reviews with Python

Python remains the go-to language for this work. It's the , and its ecosystem — requests, BeautifulSoup, pandas, Scrapy — makes web scraping accessible even for people who aren't full-time developers.

Different teams put this data to work in different ways:

| Team | Use Case | What They Extract |
|---|---|---|
| Product / R&D | Identify recurring complaints, prioritize fixes | 1–2 star review text, keyword frequency |
| Sales | Monitor competitor product sentiment | Ratings, review volume trends |
| Marketing | Source customer language for ad copy | Positive review phrases, feature mentions |
| Ecommerce Ops | Track own-product sentiment over time | Star distribution, verified purchase ratio |
| Market Research | Compare category leaders across features | Multi-ASIN review datasets |

One kitchenware brand , reformulated the product, and reclaimed their #1 Best Seller rank within 60 days. A fitness tracker company , identified a latex allergy issue, launched a hypoallergenic variant, and cut returns by 40%. This is the kind of ROI that makes the engineering effort worthwhile.

Login Wall: Why Your Amazon Review Scraper Stopped Working

On November 14, 2024, Amazon started requiring sign-in to view full review pages. The change was confirmed across and . If you visit /product-reviews/{ASIN}/ in an incognito window, you'll be redirected to a sign-in page instead of seeing review data.

The symptoms are subtle: your script gets a 200 OK response, but the HTML body contains a login form (name="email", id="ap_password") instead of reviews. No error code. No CAPTCHA. Just... nothing useful.

Amazon did this for anti-bot and regional compliance reasons. The enforcement is inconsistent — sometimes a fresh browser window loads a few reviews before the wall kicks in, especially on the first page — but for any scraper running at scale, you should assume the wall is always on.

Different Amazon country domains (.de, .co.uk, .co.jp) enforce the wall independently. As one forum user put it: "a login for each country is required." Your .com cookies won't work on .co.uk.
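The login wall fails quietly: you get a 200 OK, not an error. So it helps to classify every response before parsing it. Below is a minimal sketch of such a check. The function name is hypothetical, and the marker strings are assumptions drawn from the symptoms described above: ap_email, ap_password, validateCaptcha, Robot Check, the /ap/signin path, and the data-hook="review" attribute. Verify them against pages you actually receive.

```python
# Hypothetical helper: classify a fetched page before handing it to the parser.
# All marker strings below are assumptions; Amazon can change them at any time.
LOGIN_MARKERS = ("ap_email", "ap_password", "Amazon Sign-In")
CAPTCHA_MARKERS = ("validateCaptcha", "Robot Check")
REVIEW_MARKER = 'data-hook="review"'


def classify_response(status_code: int, final_url: str, html: str) -> str:
    """Return 'ok', 'rate_limited', 'error', 'captcha', 'login_wall', or 'no_reviews'."""
    if status_code in (429, 503):
        return "rate_limited"
    if status_code != 200:
        return "error"
    if any(marker in final_url or marker in html for marker in CAPTCHA_MARKERS):
        return "captcha"
    if "/ap/signin" in final_url or any(marker in html for marker in LOGIN_MARKERS):
        return "login_wall"
    if REVIEW_MARKER not in html:
        # The "200 OK and a page full of nothing" case
        return "no_reviews"
    return "ok"
```

With requests, you would call it as classify_response(response.status_code, response.url, response.text) and only parse pages that come back as "ok".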

Featured Reviews vs. Full Reviews: What You Can Still Access Without Login

Amazon product pages (the /dp/{ASIN}/ URL) still display roughly 8 featured reviews without authentication. Amazon's algorithm hand-picks them. They're useful for a quick sentiment check, but they're not sortable, filterable, or paginated.

The full review pages (/product-reviews/{ASIN}/) require login. These are the pages with sorting by newest, filtering by star rating, and pagination across hundreds of reviews.

If you only need a handful of reviews for a quick pulse check, scrape the product page. For hundreds or thousands, you'll need to handle authentication.

What You Need Before You Start: Python Setup and Libraries

Before we write any code, here's the setup:

- Difficulty: Intermediate (comfortable with Python, basic HTML knowledge)

- Time Required: ~45 minutes for the full pipeline; ~10 minutes for a basic scrape

- What You'll Need: Python 3.8+, Chrome browser, a valid Amazon account

Install the core libraries:

```bash
pip install requests beautifulsoup4 lxml pandas textblob
```

Optional (for advanced sentiment analysis):

```bash
pip install transformers torch
```

What's an ASIN? It's Amazon's 10-character product identifier, and you'll find it in any product URL. For example, in amazon.com/dp/B0BCNKKZ91, the ASIN is B0BCNKKZ91. That's the key you'll plug into the review URL.
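If you start from a list of product links rather than bare ASINs, a small regex can pull the identifier out. This is a sketch under stated assumptions. It assumes the ASIN is the 10-character alphanumeric segment right after /dp/, /gp/product/, or /product-reviews/. It does not resolve short links or redirects, and the function name is hypothetical.

```python
import re
from typing import Optional

# Assumption: the ASIN is the 10-character alphanumeric segment after /dp/,
# /gp/product/, or /product-reviews/. Short links (e.g. a.co) are not handled.
ASIN_IN_URL = re.compile(
    r"/(?:dp|gp/product|product-reviews)/([A-Z0-9]{10})(?=[/?#]|$)", re.IGNORECASE
)


def extract_asin(url: str) -> Optional[str]:
    """Return the ASIN found in an Amazon URL, or None if there isn't one."""
    match = ASIN_IN_URL.search(url)
    return match.group(1).upper() if match else None
```

For example, extract_asin("amazon.com/dp/B0BCNKKZ91") returns "B0BCNKKZ91".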

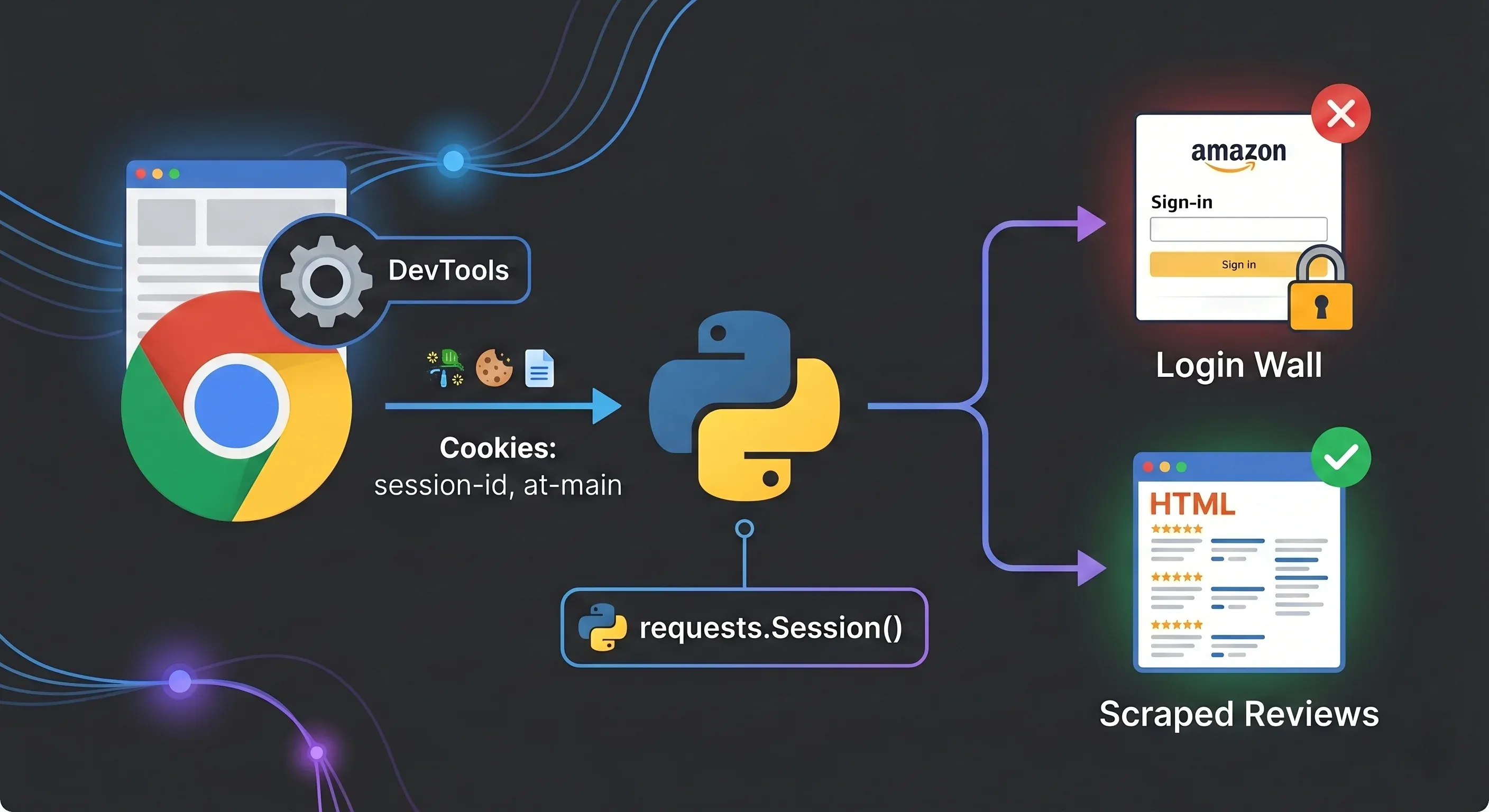

Step 1: Get Past the Login Wall with Cookie-Based Authentication

The most reliable approach is to log into Amazon in your browser, copy your session cookies, and inject them into your Python requests.Session(). This avoids triggering CAPTCHAs and SMS 2FA that plague Selenium-based login automation.

You need these seven cookies:

| Cookie Name | Purpose |
|---|---|
| session-id | Rotating session identifier |
| session-id-time | Session timestamp |
| session-token | Rotating session token |
| ubid-main | User browsing identifier |
| at-main | Primary auth token |
| sess-at-main | Session-scoped auth |
| x-main | User-email-keyed identifier |

How to Extract Cookies from Chrome DevTools

- Log into amazon.com in Chrome

- Open DevTools (F12 or right-click → Inspect)

- Go to Application → Storage → Cookies → https://www.amazon.com

- Find each cookie name in the table and copy its value

- Paste each value into the cookies dictionary in the code below

Set up your session like this:

```python
import requests

session = requests.Session()

# Paste your cookie values here
cookies = {
    "session-id": "YOUR_SESSION_ID",
    "session-id-time": "YOUR_SESSION_ID_TIME",
    "session-token": "YOUR_SESSION_TOKEN",
    "ubid-main": "YOUR_UBID_MAIN",
    "at-main": "YOUR_AT_MAIN",
    "sess-at-main": "YOUR_SESS_AT_MAIN",
    "x-main": "YOUR_X_MAIN",
}

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Accept": "text/html,application/xhtml+xml,application/xml;q=0.9,image/webp,*/*;q=0.8",
    "Accept-Language": "en-US,en;q=0.5",
}

session.cookies.update(cookies)
session.headers.update(headers)
```

Important: Reuse the same session object across all your requests. This keeps cookies consistent and mimics a real browser session. Cookies typically last days to weeks under scraping load. If you start getting login redirects again, refresh them from your browser.

For non-.com marketplaces, cookie names change slightly — amazon.de uses at-acbde instead of at-main, amazon.co.uk uses at-acbuk, and so on. Each marketplace needs its own independent session.
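You might prefer to copy the whole cookie string at once rather than seven separate values. One source is the cookie request header in the DevTools Network tab. A small parser can turn that string into the dictionary above and report which required names are missing.

This is a sketch with hypothetical function names. It assumes every "-main" cookie swaps its suffix on other marketplaces (for example acbde or acbuk). That generalizes the at-main example above, so confirm the exact names in DevTools.

```python
# Cookie names from the table above; "main" is the amazon.com suffix.
BASE_COOKIES = ("session-id", "session-id-time", "session-token")
SUFFIXED_COOKIES = ("ubid", "at", "sess-at", "x")


def required_cookie_names(suffix: str = "main") -> set:
    # Assumption: on other marketplaces each "-main" cookie swaps its suffix
    # (e.g. "acbde" for amazon.de, "acbuk" for amazon.co.uk). Verify in DevTools.
    return set(BASE_COOKIES) | {f"{name}-{suffix}" for name in SUFFIXED_COOKIES}


def parse_cookie_string(raw: str) -> dict:
    """Turn 'name1=value1; name2=value2' into a dict. Values may contain '='."""
    cookies = {}
    for part in raw.split(";"):
        name, sep, value = part.strip().partition("=")
        if sep and name:
            cookies[name] = value
    return cookies


def missing_cookies(cookies: dict, suffix: str = "main") -> set:
    """Names from the required set that the parsed cookies don't include."""
    return required_cookie_names(suffix) - set(cookies)
```

If missing_cookies(...) comes back empty, pass the parsed dict to session.cookies.update() exactly as in the setup code.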

Step 2: Build the Request and Parse Review HTML with BeautifulSoup

The Amazon reviews URL follows this pattern:

```
https://www.amazon.com/product-reviews/{ASIN}/ref=cm_cr_arp_d_viewopt_srt?sortBy=recent&pageNumber=1
```

The core function:

```python
import random
import time

from bs4 import BeautifulSoup


def get_soup(session, url):
    time.sleep(random.uniform(2, 5))  # Polite delay between requests
    response = session.get(url, timeout=15)

    # Detect the login wall: Amazon answers 200 OK with a sign-in form
    if "ap_email" in response.text or "Amazon Sign-In" in response.text:
        raise Exception("Login wall detected: refresh your cookies")
    if response.status_code != 200:
        raise Exception(f"HTTP {response.status_code}")
    return BeautifulSoup(response.text, "lxml")
```

A small trick that helps: visit the product page before hitting the reviews page. This establishes a natural browsing pattern in your session.

```python
# Visit the product page first (mimics real browsing)
asin = "B0BCNKKZ91"  # Example ASIN; replace with your product's
product_url = f"https://www.amazon.com/dp/{asin}"
session.get(product_url, timeout=15)
time.sleep(random.uniform(1, 3))

# Then hit the reviews page
reviews_url = f"https://www.amazon.com/product-reviews/{asin}/ref=cm_cr_arp_d_viewopt_srt?sortBy=recent&pageNumber=1"
soup = get_soup(session, reviews_url)
```

Step 3: Use Stable Selectors to Extract Review Data (Stop Relying on CSS Classes)

This is where most tutorials from 2022–2023 fall apart. Amazon obfuscates CSS class names — they change periodically, and as one frustrated developer put it in a forum thread: "none of them had a single pattern for the names of the classes of span tags."

The fix: Amazon uses data-hook attributes on review elements, and these are remarkably stable. They're semantic identifiers that Amazon's own frontend code depends on, so they don't get randomized.

| Review Field | Stable Selector (data-hook) | Fragile Selector (class) |
|---|---|---|
| Review text | [data-hook="review-body"] | .review-text-content (changes) |
| Star rating | [data-hook="review-star-rating"] | .a-icon-alt (ambiguous) |
| Review title | [data-hook="review-title"] | .review-title (sometimes) |
| Author name | span.a-profile-name | Relatively stable |
| Review date | [data-hook="review-date"] | .review-date (region-dependent) |
| Verified purchase | [data-hook="avp-badge"] | span.a-size-mini |

The extraction code using data-hook selectors:

```python
import re


def extract_reviews(soup):
    reviews = []
    for div in soup.select('[data-hook="review"]'):
        # Star rating, e.g. "4.0 out of 5 stars"
        rating = None
        rating_el = div.select_one('[data-hook="review-star-rating"]')
        if rating_el:
            match = re.search(r"(\d\.?\d?)", rating_el.get_text(strip=True))
            if match:
                rating = float(match.group(1))

        # Title and body
        title_el = div.select_one('[data-hook="review-title"]')
        title = title_el.get_text(strip=True) if title_el else ""
        body_el = div.select_one('[data-hook="review-body"]')
        body = body_el.get_text(strip=True) if body_el else ""

        # Author
        author_el = div.select_one("span.a-profile-name")
        author = author_el.get_text(strip=True) if author_el else ""

        # Date and country
        # Format: "Reviewed in the United States on January 15, 2025"
        date_el = div.select_one('[data-hook="review-date"]')
        date_text = date_el.get_text(strip=True) if date_el else ""
        country_match = re.search(r"Reviewed in (.+?) on", date_text)
        # Leading space keeps country names ending in "on" (e.g. Lebanon) out of the date
        date_match = re.search(r" on (.+)$", date_text)
        country = country_match.group(1) if country_match else ""
        date = date_match.group(1) if date_match else ""

        # Verified purchase badge
        verified = div.select_one('[data-hook="avp-badge"]') is not None

        reviews.append({
            "author": author,
            "rating": rating,
            "title": title,
            "content": body,
            "date": date,
            "country": country,
            "verified": verified,
        })
    return reviews
```

I've been running this selector set against multiple ASINs for months, and the data-hook attributes have not changed once. The CSS classes, on the other hand, have rotated at least twice in the same period.
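The extractor keeps dates as display strings. To sort reviews or track sentiment over time, you'll want real date objects. Below is a minimal sketch with a hypothetical function name. It assumes the US-English format shown in the code comment above. Other marketplaces and languages word this line differently, and the pattern deliberately rejects them rather than guessing.

```python
import re
from datetime import date, datetime
from typing import Optional, Tuple

# Assumption: US-English format, e.g. "Reviewed in the United States on January 15, 2025"
DATE_LINE = re.compile(
    r"^Reviewed in (?P<country>.+?) on (?P<date>[A-Z][a-z]+ \d{1,2}, \d{4})$"
)


def parse_review_date(text: str) -> Tuple[str, Optional[date]]:
    """Return (country, date), or ("", None) if the text doesn't match the format."""
    match = DATE_LINE.match(text.strip())
    if not match:
        return "", None
    # %B expects English month names, which Python uses under the default locale
    parsed = datetime.strptime(match.group("date"), "%B %d, %Y").date()
    return match.group("country"), parsed
```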

Step 4: Handle Pagination and Amazon's 10-Page Cap

Amazon limits the pageNumber parameter to 10 pages of 10 reviews each — a hard ceiling of ~100 reviews per filter combination. The "Next page" button simply disappears after page 10.
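Hand-built URL strings make it easy to request page 11 or misspell a filter. One option is to centralize the cap and the allowed values in a small builder. This is a sketch, not a documented Amazon API. The function name and its domain parameter are hypothetical. The allowed values are the star filters and sort orders listed later in this post. Like the bucketing loop, it leaves out the ref=... path segment.

```python
from typing import Optional
from urllib.parse import urlencode

MAX_PAGES = 10  # The per-bucket page cap described above
STAR_FILTERS = ("one_star", "two_star", "three_star", "four_star", "five_star")
SORT_ORDERS = ("recent", "helpful")


def build_reviews_url(
    asin: str,
    page: int = 1,
    sort_by: str = "recent",
    filter_by_star: Optional[str] = None,
    domain: str = "www.amazon.com",  # Hypothetical parameter for other marketplaces
) -> str:
    """Build a review-page URL, refusing page numbers past the cap."""
    if not 1 <= page <= MAX_PAGES:
        raise ValueError(f"pageNumber must be 1-{MAX_PAGES}, got {page}")
    if sort_by not in SORT_ORDERS:
        raise ValueError(f"unsupported sortBy value: {sort_by!r}")
    params = {}
    if filter_by_star is not None:
        if filter_by_star not in STAR_FILTERS:
            raise ValueError(f"unsupported filterByStar value: {filter_by_star!r}")
        params["filterByStar"] = filter_by_star
    params["sortBy"] = sort_by
    params["pageNumber"] = page
    return f"https://{domain}/product-reviews/{asin}?{urlencode(params)}"
```

Looping over every star filter, sort order, and page yields 5 × 2 × 10 = 100 distinct URLs per ASIN. That is the ceiling the bucketing approach relies on.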

Basic pagination loop:

```python
all_reviews = []
for page in range(1, 11):
    url = f"https://www.amazon.com/product-reviews/{asin}/ref=cm_cr_arp_d_viewopt_srt?sortBy=recent&pageNumber={page}"
    soup = get_soup(session, url)
    page_reviews = extract_reviews(soup)
    if not page_reviews:
        break  # No more reviews on this page
    all_reviews.extend(page_reviews)
    print(f"Page {page}: {len(page_reviews)} reviews")
```

How to Get More Than 10 Pages of Amazon Reviews

The workaround is filter bucketing. Each combination of filterByStar and sortBy gets its own independent 10-page window.

Star filter values: one_star, two_star, three_star, four_star, five_star

Sort values: recent, helpful (default)

Combining all 5 star filters × 2 sort orders gives you up to 100 pages (roughly 1,000 reviews) per product. For products with uneven star distributions, that often gets you close to the full review set.

```python
star_filters = ["one_star", "two_star", "three_star", "four_star", "five_star"]
sort_orders = ["recent", "helpful"]

all_reviews = []
seen_keys = set()  # Simple deduplication across buckets

for star in star_filters:
    for sort in sort_orders:
        for page in range(1, 11):
            url = (
                f"https://www.amazon.com/product-reviews/{asin}"
                f"?filterByStar={star}&sortBy={sort}&pageNumber={page}"
            )
            soup = get_soup(session, url)
            page_reviews = extract_reviews(soup)
            if not page_reviews:
                break  # This bucket is exhausted
            for review in page_reviews:
                # Deduplicate by title + author combo
                key = (review["title"], review["author"])
                if key not in seen_keys:
                    seen_keys.add(key)
                    all_reviews.append(review)
            print(f"[{star}/{sort}] Page {page}: {len(page_reviews)} reviews")

print(f"Total unique reviews: {len(all_reviews)}")
```

The buckets overlap, so deduplication is essential. I use review title + author name as a quick key. It's not perfect, but it catches the vast majority of duplicates.
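You can tighten the title + author key in two ways. First, add the review date, as the scaling tips later in this post suggest. Second, normalize whitespace and case so near-identical strings collapse into one key. Below is a sketch with hypothetical helper names. It is still a heuristic, not a true review ID.

```python
import unicodedata
from typing import Dict, Iterable, List, Tuple


def _norm(value) -> str:
    # NFKC folds compatibility characters; split/join collapses runs of whitespace
    text = unicodedata.normalize("NFKC", str(value or ""))
    return " ".join(text.split()).casefold()


def review_key(review: Dict) -> Tuple[str, str, str]:
    """Normalized (title, author, date) key for one review dict."""
    return (_norm(review.get("title")), _norm(review.get("author")), _norm(review.get("date")))


def dedupe_reviews(reviews: Iterable[Dict]) -> List[Dict]:
    """Keep the first review seen for each normalized key, preserving order."""
    seen = set()
    unique = []
    for review in reviews:
        key = review_key(review)
        if key not in seen:
            seen.add(key)
            unique.append(review)
    return unique
```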

Step 5: Dodge Anti-Bot Defenses (Rotate, Throttle, Retry)

Amazon uses AWS WAF Bot Control, and it's gotten significantly more aggressive. Single-layer countermeasures (just rotating User-Agents, just adding delays) don't cut it anymore.

| Technique | Implementation |
|---|---|
| Rotating User-Agents | Random choice from 10+ real browser strings |
| Exponential backoff | 2s → 4s → 8s retry delays on 503s |
| Request throttling | random.uniform(2, 5) seconds between pages |
| Proxy rotation | Cycle through residential proxies |
| Session fingerprint | Consistent cookies + headers per session |
| TLS impersonation | Use curl_cffi instead of stock requests for production |
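The backoff row is easy to get subtly wrong, so it can help to compute the schedule in one place. Below is a minimal sketch with a hypothetical function name. With the defaults it reproduces the 2s → 4s → 8s sequence from the table. The cap and the optional random jitter are additions that keep retries bounded and spread out; neither is an Amazon requirement.

```python
import random
from typing import List, Optional


def backoff_delays(
    retries: int,
    base: float = 2.0,
    cap: float = 60.0,
    jitter: float = 0.0,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Delay before each retry: base * 2**attempt, capped, plus up to `jitter` extra seconds."""
    rng = rng or random.Random()
    delays = []
    for attempt in range(retries):
        delay = min(cap, base * (2 ** attempt))
        if jitter:
            delay += rng.uniform(0, jitter)
        delays.append(delay)
    return delays
```

For example, backoff_delays(3) gives [2.0, 4.0, 8.0], and passing jitter=1.0 adds up to one extra second to each delay.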

A production-ready retry wrapper:

```python
import random
import time

from bs4 import BeautifulSoup

USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:149.0) Gecko/20100101 Firefox/149.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 15_7_5) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/26.0 Safari/605.1.15",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/146.0.0.0 Safari/537.36",
]


class LoginWallError(Exception):
    """Cookies have expired; retrying won't help until you refresh them."""


def scrape_with_retries(session, url, max_retries=3):
    for attempt in range(max_retries):
        try:
            session.headers["User-Agent"] = random.choice(USER_AGENTS)
            time.sleep(random.uniform(2, 5))
            response = session.get(url, timeout=15)

            # CAPTCHA page: back off longer (5s, 10s, 20s)
            if "validateCaptcha" in response.url or "Robot Check" in response.text:
                wait = (2 ** attempt) * 5
                print(f"CAPTCHA detected. Waiting {wait}s...")
                time.sleep(wait)
                continue

            # Rate limited: exponential backoff (2s, 4s, 8s)
            if response.status_code in (429, 503):
                wait = (2 ** attempt) * 2
                print(f"Rate limited ({response.status_code}). Waiting {wait}s...")
                time.sleep(wait)
                continue

            if "ap_email" in response.text:
                raise LoginWallError("Login wall: cookies expired")

            return BeautifulSoup(response.text, "lxml")
        except LoginWallError:
            raise  # Fail fast: only fresh cookies fix this
        except Exception as e:
            if attempt == max_retries - 1:
                raise
            print(f"Attempt {attempt + 1} failed: {e}")

    return None  # Every attempt hit a CAPTCHA or rate limit
```

A note on proxies: Amazon blocks datacenter IP ranges (AWS, GCP, Azure, DigitalOcean) at the network layer. If you're scraping more than a few hundred pages, residential proxies are effectively mandatory. Expect to spend $50–200+/month depending on volume. For smaller projects (under 100 requests/day), careful throttling from your home IP often works fine.

Amazon also inspects TLS fingerprints, and Python's stock requests library presents a TLS fingerprint that doesn't match any real browser. For production scrapers, consider curl_cffi, which impersonates real browser TLS stacks. For tutorial-scale scraping (a few hundred pages), requests with good headers usually gets the job done.
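For the proxy rotation row in the table above, requests accepts a proxies mapping on each call. Below is a minimal sketch that cycles through a list; the function name and proxy URLs are placeholders. Whether to rotate per request or pin one proxy per logged-in session is a judgment call, since switching IPs under the same cookies may itself look unusual.

```python
from itertools import cycle
from typing import Dict, Iterator, List


def proxy_rotation(proxy_urls: List[str]) -> Iterator[Dict[str, str]]:
    """Yield requests-style proxies dicts, cycling through the list forever."""
    if not proxy_urls:
        raise ValueError("need at least one proxy URL")
    for url in cycle(proxy_urls):
        # Same proxy for both schemes; requests picks the entry matching the target URL
        yield {"http": url, "https": url}
```

In the retry wrapper, you would create the iterator once and pass proxies=next(rotation) to session.get alongside timeout=15.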

Step 6: Export Your Scraped Amazon Reviews to CSV or Excel

Once you've collected your reviews, getting them into a usable format is straightforward with pandas:

```python
import pandas as pd

df = pd.DataFrame(all_reviews)
df.to_csv("amazon_reviews.csv", index=False)
print(f"Exported {len(df)} reviews to amazon_reviews.csv")
```

Sample output:

| author | rating | title | content | date | country | verified |
|---|---|---|---|---|---|---|
| Sarah M. | 5.0 | Best purchase this year | Battery lasts all day, screen is gorgeous... | January 15, 2025 | the United States | True |
| Mike T. | 2.0 | Disappointed after 2 weeks | The charging port stopped working... | February 3, 2025 | the United States | True |
| Priya K. | 4.0 | Great value for the price | Does everything I need, minor lag on heavy apps... | March 10, 2025 | the United States | False |

For Excel export: df.to_excel("amazon_reviews.xlsx", index=False) (requires openpyxl).

For Google Sheets, the gspread library works but requires a Google Cloud setup:

- creating a project
- enabling two APIs
- generating service account credentials
- sharing the sheet

If that sounds like more setup than the actual scraping, you're not wrong. (This is one of those moments where a tool like Thunderbit that exports to Google Sheets with one click starts looking very appealing.)

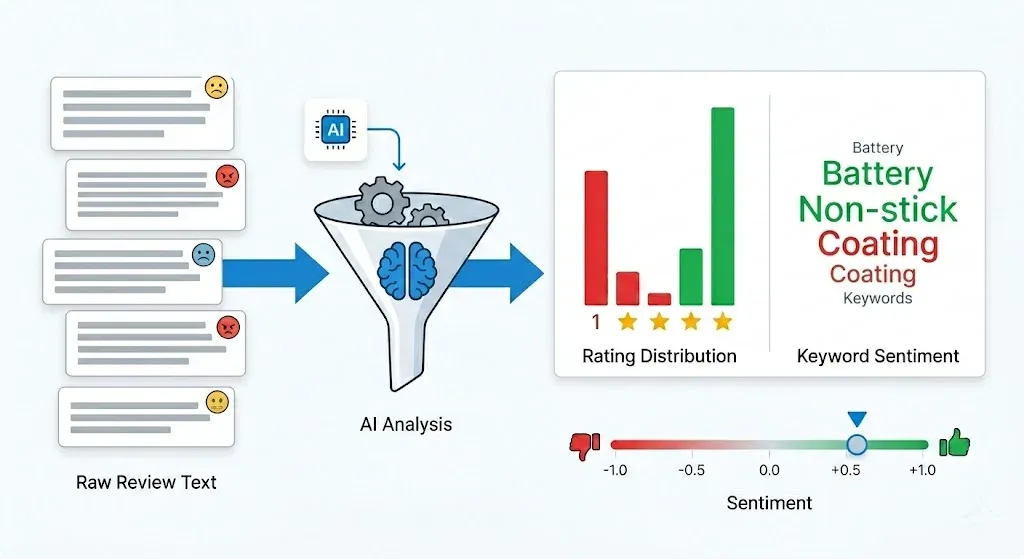

Bonus: Add Sentiment Analysis to Your Scraped Reviews in 5 Lines of Python

Most scraping tutorials stop at CSV export. But scoring sentiment is what turns raw data into business decisions.

The quickest baseline uses TextBlob:

```python
from textblob import TextBlob

df["sentiment"] = df["content"].apply(lambda x: TextBlob(str(x)).sentiment.polarity)
```

That gives you a polarity score from -1.0 (very negative) to +1.0 (very positive) for each review. Sample output:

| content (truncated) | rating | sentiment |
|---|---|---|
| "Battery lasts all day, screen is gorgeous..." | 5.0 | 0.65 |
| "The charging port stopped working after..." | 2.0 | -0.40 |
| "Does everything I need, minor lag on..." | 4.0 | 0.25 |
| "Absolute garbage. Returned immediately." | 1.0 | -0.75 |
| "It's okay. Nothing special but works." | 3.0 | 0.10 |

The interesting rows are the mismatches — a 3-star review with positive sentiment text, or a 5-star review with negative language. These discrepancies often reveal nuanced customer opinions that star ratings alone miss.
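To surface those mismatches automatically, one simple heuristic works. Map the star rating onto the same -1 to +1 scale as the polarity score and flag reviews where the two disagree sharply. This is an assumption-laden sketch, not a standard method. The linear mapping (1 star = -1.0, 3 stars = 0.0, 5 stars = +1.0), the 0.75 threshold, and the function name are all hypothetical, so tune them on your own data.

```python
from typing import Dict, List


def flag_mismatches(rows: List[Dict], threshold: float = 0.75) -> List[Dict]:
    """Return rows whose text polarity is far from what the star rating implies.

    Assumption: stars map linearly onto TextBlob's -1..+1 polarity scale
    (1 star -> -1.0, 3 stars -> 0.0, 5 stars -> +1.0).
    """
    flagged = []
    for row in rows:
        rating, polarity = row.get("rating"), row.get("sentiment")
        if rating is None or polarity is None:
            continue  # Skip rows missing either score
        expected = (rating - 3) / 2
        if abs(polarity - expected) > threshold:
            flagged.append(row)
    return flagged
```

With the DataFrame from above, flag_mismatches(df.to_dict("records")) returns the rows worth reading by hand. None of the five sample rows in the table would be flagged at the default threshold.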

For production-grade accuracy, the recommendation is Hugging Face Transformers. , and compared to lexicon tools. The nlptown/bert-base-multilingual-uncased-sentiment model even predicts 1–5 star ratings directly:

```python
from transformers import pipeline

clf = pipeline(
    "sentiment-analysis",
    model="nlptown/bert-base-multilingual-uncased-sentiment",
)
# Labels look like "1 star" ... "5 stars", so the first character is the star count.
# Slicing to 512 characters is a rough guard against the model's 512-token input limit.
df["predicted_stars"] = df["content"].apply(
    lambda x: int(clf(str(x)[:512])[0]["label"][0])
)
```

Amazon reviews follow a J-shaped distribution: a big spike at 5 stars, a smaller spike at 1 star, and a valley in the middle. This means the average star rating is often a poor proxy for actual product quality. Segment the 1-star cluster and mine it for recurring themes. That's usually where a single fixable defect hides.
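Two things help you act on that: checking the shape of your own dataset, and pulling the most frequent words from the 1-star cluster. Below is a minimal sketch using only the standard library. The helper names are hypothetical, and the stopword list is deliberately tiny and illustrative. Simple word counts are a first pass, not topic modeling.

```python
import re
from collections import Counter
from typing import Dict, List, Tuple

# A deliberately tiny stopword list for illustration; use a fuller one in practice.
STOPWORDS = {
    "the", "a", "an", "and", "or", "but", "it", "its", "is", "was", "i", "my",
    "to", "of", "in", "on", "for", "this", "that", "with", "not", "after",
}


def star_distribution(rows: List[Dict]) -> Dict[int, float]:
    """Share of rated reviews at each star level, 1 through 5."""
    counts = Counter(int(r["rating"]) for r in rows if r.get("rating") is not None)
    total = sum(counts.values()) or 1  # Avoid dividing by zero on empty input
    return {star: counts.get(star, 0) / total for star in range(1, 6)}


def top_complaint_terms(rows: List[Dict], max_stars: float = 1.0, n: int = 10) -> List[Tuple[str, int]]:
    """Most frequent non-stopword terms in reviews rated max_stars or lower."""
    words: Counter = Counter()
    for r in rows:
        rating = r.get("rating")
        if rating is None or rating > max_stars:
            continue
        tokens = re.findall(r"[a-z']+", str(r.get("content", "")).lower())
        words.update(t for t in tokens if t not in STOPWORDS and len(t) > 2)
    return words.most_common(n)
```

A J-shaped dataset shows high shares at 5 and 1 with lower values in between. Pass df.to_dict("records") to either helper.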

The Honest Trade-Off: DIY Python vs. Paid Scraping APIs vs. Thunderbit

I've maintained Python scrapers for Amazon, and I'll be honest: they break. Selectors change, cookies expire, Amazon rolls out a new bot detection layer, and suddenly your Saturday morning involves debugging a scraper instead of analyzing data. Forum users report the same frustration — DIY scripts that "worked last month" now need constant patching.

Here's how the three main approaches compare:

| Criteria | DIY Python (BS4/Selenium) | Paid Scraping API | Thunderbit (No-Code) |
|---|---|---|---|
| Setup time | 1–3 hours | 30 min (API key) | 2 minutes |
| Cost | Free (+ proxy costs) | $50–200+/mo | Free tier available |
| Login wall handling | Manual cookie management | Usually handled | Handled automatically |
| Maintenance | High (selectors break) | Low (provider maintains) | Zero (AI adapts) |
| Pagination | Custom code needed | Built-in | Built-in |
| Multi-country support | Separate sessions per domain | Usually supported | Browser-based = your locale |
| Sentiment analysis | Add your own code | Sometimes included | Export to Sheets, analyze anywhere |
| Best for | Learning, full control | Scale/production pipelines | Quick data pulls, non-dev teams |

Python gives you full control and is genuinely the best way to learn how web scraping works under the hood. Paid APIs (ScrapingBee, Oxylabs, Bright Data) make sense for production pipelines where uptime matters more than cost. And for teams that need review data without the dev overhead — ecommerce ops monitoring competitor products weekly, marketing teams pulling customer language for ad copy — there's a third path.

How to Scrape Amazon Reviews with Thunderbit (No Code, No Maintenance)

We built Thunderbit to handle exactly the scenarios where maintaining a Python scraper feels like overkill. The workflow looks like this:

- Install the Thunderbit Chrome extension

- Navigate to the Amazon product review page in your browser (you're already logged in, so the login wall is a non-issue)

- Click "AI Suggest Fields" — Thunderbit reads the page and suggests columns like Author, Rating, Title, Review Text, Date, Verified Purchase

- Click "Scrape" — data is extracted instantly, with built-in pagination

- Export to Excel, Google Sheets, Airtable, or Notion

The main advantage is that Thunderbit's AI reads the page structure fresh each time. No CSS selectors to maintain, no cookie management, no anti-bot code. When Amazon changes their HTML, the AI adapts. For readers who want programmatic access without full DIY, Thunderbit also offers an — structured data extraction via API with AI-powered field detection, no selector maintenance.

For deeper dives on Amazon data, see our guides on and .

Tips for Scraping Amazon Reviews at Scale with Python

If you're scraping reviews across many ASINs, a few practices will save you headaches:

- Batch your ASINs with delays between products (not just between pages). I use 10–15 second pauses between ASINs.

- Deduplicate aggressively. Combining multiple star filter and sort order combinations produces overlapping reviews. Use a set of (title, author, date) tuples as a dedup key.

- Log failures. Track which ASIN + page + filter combinations failed so you can retry them without re-scraping everything.

- Store in a database for large projects. A simple SQLite database scales much better than growing CSV files:

```python
import sqlite3

conn = sqlite3.connect("reviews.db")
df.to_sql("reviews", conn, if_exists="append", index=False)
```

- Schedule recurring scrapes. For ongoing monitoring, set up a cron job or use Thunderbit's Scheduled Scraper feature: describe the URL and schedule, and it handles the rest without a server.
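One caveat with recurring scrapes: to_sql(..., if_exists="append") inserts the same review again on every run. A sketch of one alternative is to let SQLite enforce deduplication with a UNIQUE constraint and INSERT OR IGNORE. The table layout, the asin column, and the save_reviews name are hypothetical. The column names match the dictionaries produced by extract_reviews.

```python
import sqlite3
from typing import Dict, Iterable

# SQLite treats NULLs as distinct in UNIQUE constraints, so store "" rather than None.
SCHEMA = """
CREATE TABLE IF NOT EXISTS reviews (
    asin TEXT NOT NULL,
    author TEXT, rating REAL, title TEXT, content TEXT,
    date TEXT, country TEXT, verified INTEGER,
    UNIQUE (asin, title, author, date)
)
"""


def save_reviews(conn: sqlite3.Connection, asin: str, reviews: Iterable[Dict]) -> int:
    """Insert reviews, silently skipping duplicates; return how many rows were new."""
    conn.execute(SCHEMA)
    before = conn.total_changes
    conn.executemany(
        "INSERT OR IGNORE INTO reviews "
        "(asin, author, rating, title, content, date, country, verified) "
        "VALUES (:asin, :author, :rating, :title, :content, :date, :country, :verified)",
        ({**r, "asin": asin, "verified": int(bool(r.get("verified")))} for r in reviews),
    )
    conn.commit()
    return conn.total_changes - before
```

Running save_reviews(conn, asin, all_reviews) after each scheduled scrape adds only the reviews the database hasn't seen yet.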

For additional approaches, our posts on and cover additional options.

A Quick Note on Legal and Ethical Considerations

Amazon's Terms of Service explicitly prohibit "the use of any robot, spider, scraper, or other automated means to access Amazon Services." That said, recent US case law has been favorable to scrapers of public data. In Meta v. Bright Data (2024), a federal court ruled that scraping publicly accessible data does not violate terms of service when the scraper is not a logged-in "user."

The nuance: scraping behind a login (which is what this tutorial covers) moves you into contract-law territory, since you agreed to Amazon's ToS when you created your account. Scraping publicly visible featured reviews carries less legal risk than scraping behind the login wall.

Practical guidelines: don't redistribute scraped data commercially, don't scrape personal user data beyond what's publicly displayed, respect robots.txt, and consult legal counsel for large-scale or commercial use. This is not legal advice. For more on the legal landscape, see our overview of .

Conclusion: Scrape Amazon Reviews with Python or Skip the Code Entirely

Quick recap of what this guide walked through:

- The login wall is real, but solvable with cookie-based authentication: copy 7 cookies from your browser and inject them into a requests.Session()

- Use data-hook selectors, not CSS classes, for extraction that doesn't break every few weeks

- Combine star filters and sort orders to beat the 10-page pagination cap and access 500+ reviews per product

- Add sentiment analysis with TextBlob for a quick baseline or Hugging Face Transformers for production accuracy

- Maintain anti-bot defenses: throttling, User-Agent rotation, exponential backoff, and residential proxies for scale

Python gives you full control and is the best way to understand what's happening under the hood. But if your use case is "I need competitor review data in a spreadsheet by Friday" rather than "I want to build a production data pipeline," the maintenance burden of a custom scraper may not be worth it.

Thunderbit handles authentication, selectors, pagination, and export in a few clicks. Try the and see if it fits your workflow. As Amazon continues to tighten anti-bot measures, AI-powered tools that adapt in real time will shift from a nice-to-have to a necessity.

You can also explore our for video walkthroughs of scraping workflows.

FAQs

1. Can you scrape Amazon reviews without logging in?

Yes, but only the ~8 "featured reviews" displayed on the product detail page (/dp/{ASIN}/). The full review pages with sorting, filtering, and pagination require authentication as of late 2024. For most business use cases, you'll need to handle the login wall.

2. Is it legal to scrape Amazon reviews?

Amazon's Terms of Service prohibit automated scraping. However, recent US case law (Meta v. Bright Data, 2024; hiQ v. LinkedIn) supports scraping publicly accessible data. Scraping behind a login carries higher legal risk since you've agreed to Amazon's ToS. Consult legal counsel for commercial use.

3. How many Amazon reviews can I scrape per product?

Amazon caps review pages at 10 per sort order and star filter combination. Using all 5 star filters × 2 sort orders, you can access up to 100 pages (roughly 1,000 reviews) per product. With keyword filters, the theoretical ceiling is much higher, though with significant duplication.

4. What is the best Python library for scraping Amazon reviews?

requests + BeautifulSoup for static HTML parsing is the most common and reliable combination. Selenium is useful when JavaScript rendering is required. For a no-code alternative that handles login walls and pagination automatically, try Thunderbit.

5. How do I avoid getting blocked when scraping Amazon?

Rotate User-Agent strings from a pool of 10+ real browser strings, add random delays of 2–5 seconds between requests, implement exponential backoff on 503/429 errors, use residential proxies for scale (datacenter IPs are pre-blocked), and maintain consistent session cookies across requests. For a zero-maintenance approach, Thunderbit handles anti-bot defenses automatically through your browser session.
