Last weekend, I burned through an entire pot of coffee trying to scrape Amazon's Best Sellers page four different ways. Two worked great, one almost got my IP banned, and one took literally two clicks. Here's everything I learned.

Amazon is a monster of a marketplace, with a Best Sellers Rank (BSR) system that updates hourly. If you're doing FBA product research, competitive pricing analysis, or just trying to spot trends before your competitors, Best Seller data is gold.

But getting that data out of Amazon and into a spreadsheet? That's where things get interesting. I tested requests + BeautifulSoup, Selenium, a scraping API, and Thunderbit (our own no-code AI web scraper) to see which approach actually delivers, and which ones will leave you staring at a CAPTCHA page.

What Are Amazon Best Sellers (and Why Should You Care)?

Amazon Best Sellers Rank (BSR) is Amazon's leaderboard, ranking products by sales volume within each category. Think of it as a popularity contest, updated hourly, based on both recent and historical sales data. Amazon describes it this way:

"The Amazon Best Sellers calculation is based on Amazon sales and is updated hourly to reflect recent and historical sales of every item sold on Amazon."

The Best Sellers page shows the top 100 products per category, split across two pages of 50 each. Page 1 covers ranks #1–50, Page 2 covers #51–100. Amazon has confirmed that page views and customer reviews do NOT affect BSR — it's purely sales-driven.

Who cares about this data? E-commerce sellers scouting products for FBA, sales teams building competitive intelligence, operations teams monitoring pricing trends, and market researchers tracking category growth. In my experience, anyone selling on or competing with Amazon eventually ends up needing this data in a spreadsheet.

Why Scrape Amazon Best Sellers with Python?

Manual product research is a time sink. One study found that employees spend 9.3 hours per week just searching for and gathering information. For e-commerce teams, that translates to hours spent clicking through Amazon pages, copying product names and prices, and pasting them into spreadsheets, only to repeat the whole process next week.

Here's a quick look at the use cases that make scraping Best Sellers worthwhile:

| Use Case | What You Get | Who Benefits |
|---|---|---|
| FBA Product Research | Identify high-demand, low-competition products by BSR and review count | Amazon sellers, dropshippers |
| Competitive Pricing | Track price changes across top products in your category | E-commerce teams, pricing analysts |
| Market Trend Monitoring | Spot rising categories and seasonal shifts | Product managers, market researchers |
| Lead Generation | Build lists of top-selling brands and their product lines | Sales teams, B2B outreach |
| Competitor Analysis | Benchmark your products against category leaders | Brand managers, strategy teams |

The ROI is real: a survey of 2,700 commerce professionals found that AI tools save e-commerce professionals measurable time. Sellers using automated price tracking also hold the Buy Box more often than manual trackers (42%), with a 37% sales increase driven by faster reactions to price changes.

4 Ways to Scrape Amazon Best Sellers with Python: A Quick Comparison

Before we get into the step-by-step tutorials, here's the side-by-side comparison I wish I'd had before I started testing. This table should help you pick the right method for your situation:

| Criteria | requests + BS4 | Selenium | Scraping API (e.g., Scrape.do) | Thunderbit (No-Code) |
|---|---|---|---|---|
| Setup Difficulty | Medium | High (driver, browser) | Low (API key) | Very Low (Chrome extension) |
| Handles Lazy Loading | No | Yes (scroll simulation) | Yes (rendered HTML) | Yes (AI handles rendering) |
| Anti-Bot Resilience | Low (IP bans) | Medium (detectable) | High (rotating proxies) | High (cloud + browser modes) |
| Maintenance Burden | High (selectors break) | High (driver updates + selectors) | Low | Very Low (AI adapts to layout changes) |
| Cost | Free | Free | Paid (per request) | Free tier + paid plans |
| Best For | One-off scrapes, learning | JS-heavy pages, login-required | Scale / production | Non-devs, quick research, recurring monitoring |

If you want to learn Python scraping fundamentals, start with Method 1 or 2. If you need production-scale reliability, go with Method 3. If you want results in two clicks without writing code, jump straight to Method 4.

Before You Start

- Difficulty: Beginner to Intermediate (depending on method)

- Time Required: ~15 minutes for Thunderbit, ~45 minutes for Python methods

- What You'll Need: Python 3.8+ (for Methods 1–3), Chrome browser, the Thunderbit Chrome extension (for Method 4), and a target Amazon Best Sellers category URL

Method 1: Scrape Amazon Best Sellers with requests + BeautifulSoup

This is the lightweight, beginner-friendly approach — no browser automation, just HTTP requests and HTML parsing. It also taught me the most about Amazon's anti-scraping defenses.

Step 1: Set Up Your Environment

Install the required packages:

```bash
pip install requests beautifulsoup4 pandas
```

Then set up your imports:

```python
import requests
from bs4 import BeautifulSoup
import pandas as pd
import random
import time
```

Step 2: Send a Request with Realistic Headers

Amazon blocks requests that look like bots. The most basic defense is a User-Agent header that mimics a real browser. Here's a snippet with a pool of current, realistic User-Agent strings (as of March 2026):

```python
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/147.0.0.0 Safari/537.36",
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64; rv:149.0) Gecko/20100101 Firefox/149.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 15.7; rv:149.0) Gecko/20100101 Firefox/149.0",
    "Mozilla/5.0 (Macintosh; Intel Mac OS X 15_7_5) AppleWebKit/605.1.15 (KHTML, like Gecko) Version/26.0 Safari/605.1.15",
]

# Pick one User-Agent at random for this request
headers = {"User-Agent": random.choice(USER_AGENTS)}
url = "https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/"

# A timeout keeps the script from hanging on a stalled connection
response = requests.get(url, headers=headers, timeout=10)
print(response.status_code)  # Should be 200
```

If you see a 200 status code, you're in. If you see 503 or get redirected to a CAPTCHA page, Amazon has flagged your request.
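A status code alone can mislead, because a CAPTCHA page may also come back with a 200. The sketch below checks both signals. The helper name `looks_blocked` is hypothetical, and the marker strings are assumptions based on commonly reported block pages, not an official list, so expect to tune them.

```python
# Hypothetical helper; the marker strings are assumptions, not an official list.
BLOCK_MARKERS = ("captcha", "robot check", "enter the characters you see below")
BLOCK_STATUS_CODES = (429, 503)


def looks_blocked(status_code: int, body: str) -> bool:
    """Return True if a response looks like a block or CAPTCHA page."""
    # Throttling and "service unavailable" codes are treated as blocks
    if status_code in BLOCK_STATUS_CODES:
        return True
    # A 200 can still carry a CAPTCHA page, so inspect the body text too
    lowered = body.lower()
    return any(marker in lowered for marker in BLOCK_MARKERS)
```

With the request from this step, you would call it as `looks_blocked(response.status_code, response.text)` and stop (or slow down) when it returns True, rather than parsing a page that contains no products.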

Step 3: Parse Product Data with BeautifulSoup

Inspect the Amazon page HTML using your browser's DevTools (right-click → Inspect). The product containers use the ID gridItemRoot. Inside each container, you'll find the product name, price, rating, and URL.

```python
soup = BeautifulSoup(response.text, "html.parser")
products = []

for item in soup.find_all("div", id="gridItemRoot"):
    title_tag = item.find("div", class_="_cDEzb_p13n-sc-css-line-clamp-3_g3dy1")
    price_tag = item.find("span", class_="_cDEzb_p13n-sc-price_3mJ9Z")
    link_tag = item.find("a", class_="a-link-normal")

    # Fall back to "N/A" when a field is missing from the card
    title = title_tag.get_text(strip=True) if title_tag else "N/A"
    price = price_tag.get_text(strip=True) if price_tag else "N/A"
    # Named product_url so it does not overwrite the page URL defined earlier
    product_url = "https://www.amazon.com" + link_tag["href"] if link_tag else "N/A"

    products.append({"Title": title, "Price": price, "URL": product_url})
```

Warning: The _cDEzb_-prefixed class names are CSS module hashes that Amazon regenerates periodically. The gridItemRoot ID and a-link-normal class are more stable, but always verify selectors with DevTools before running your scraper.
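The Price values collected in this step are raw text, which makes sorting and filtering in a spreadsheet awkward. A minimal sketch of a cleanup step follows; `parse_price` is a hypothetical helper, and it assumes US-style formatting (comma as thousands separator, dot as decimal point).

```python
import re
from typing import Optional


def parse_price(raw: str) -> Optional[float]:
    """Convert a scraped price string such as "$1,299.99" to a float.

    Returns None for "N/A" or any text without a recognizable number.
    Assumes US-style formatting (comma thousands separator, dot decimal).
    """
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        return None
    # Drop thousands separators before converting
    return float(match.group().replace(",", ""))
```

You could apply it to each row before exporting, for example by adding a numeric column next to the original text so nothing is lost if the parse fails.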

Step 4: Export to CSV

```python
df = pd.DataFrame(products)
df.to_csv("amazon_best_sellers.csv", index=False)
print(f"Scraped {len(products)} products")
```

What to Expect (and What Goes Wrong)

In my test, this method returned about 30 products instead of 50. That's not a bug in the code — it's Amazon's lazy loading. Only ~30 products render on the initial page load; the rest appear after scrolling, which requires JavaScript execution that requests can't handle.

Other limitations:

- IP bans happen quickly without proxy rotation (I got blocked after about 15 requests in rapid succession)

- CSS selectors break when Amazon updates their page layout — and they do this regularly

- No pagination handling out of the box

For learning Python scraping, this approach is great. For production use, it's fragile.

Method 2: Scrape Amazon Best Sellers with Selenium

Selenium solves the lazy-loading problem by running a real browser — heavier to set up, but it captures all 50 products per page.

Step 1: Install Selenium

```bash
pip install selenium pandas
```

Good news: as of Selenium 4.6+, you no longer need webdriver-manager. Selenium Manager handles driver downloads automatically.

```python
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.by import By
from selenium.webdriver.common.keys import Keys
import time
import pandas as pd

options = Options()
options.add_argument("--headless=new")
options.add_argument("--window-size=1920,1080")
options.add_argument("--disable-blink-features=AutomationControlled")

driver = webdriver.Chrome(options=options)
```

The --headless=new flag (introduced in Chrome 109+) uses the same rendering pipeline as headed Chrome, making it harder for Amazon to detect.

Step 2: Scroll Past Lazy Loading

This is the step that makes Selenium worth the setup overhead. Amazon Best Sellers only loads ~30 products initially — the rest appear after scrolling.

```python
def scroll_page(driver, scrolls=5, delay=2):
    """Press Page Down repeatedly so lazy-loaded products render."""
    for _ in range(scrolls):
        driver.find_element(By.TAG_NAME, "body").send_keys(Keys.PAGE_DOWN)
        time.sleep(delay)


driver.get("https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/")
time.sleep(3)
scroll_page(driver)
```

After scrolling, all 50 products should be rendered in the DOM. I found that 5 page-down scrolls with a 2-second delay was sufficient, but you may need to adjust depending on your connection speed.
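If a fixed number of scrolls feels brittle, one alternative is to keep scrolling until the product count stops growing. The sketch below is written against two plain callables so it stays independent of Selenium; `scroll_until_stable` and its parameters are hypothetical names, not part of any library.

```python
import time
from typing import Callable


def scroll_until_stable(
    count_items: Callable[[], int],
    scroll_once: Callable[[], None],
    max_scrolls: int = 15,
    patience: int = 2,
    delay: float = 2.0,
) -> int:
    """Scroll until the item count stops growing; return the final count.

    Stops after `patience` consecutive scrolls that add no items,
    or after `max_scrolls` scrolls in total.
    """
    last_count = count_items()
    stalled = 0
    for _ in range(max_scrolls):
        scroll_once()
        time.sleep(delay)
        current = count_items()
        if current > last_count:
            # New products appeared, so reset the stall counter
            last_count = current
            stalled = 0
        else:
            stalled += 1
            if stalled >= patience:
                break
    return last_count
```

With the driver from Step 1, `count_items` could be `lambda: len(driver.find_elements(By.ID, "gridItemRoot"))` and `scroll_once` could be `lambda: driver.find_element(By.TAG_NAME, "body").send_keys(Keys.PAGE_DOWN)`. The trade-off is a couple of extra scrolls at the end in exchange for not guessing the right scroll count up front.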

Step 3: Extract Product Data

```python
from selenium.common.exceptions import NoSuchElementException

items = driver.find_elements(By.ID, "gridItemRoot")
products = []

for item in items:
    # Catch only "element not found" so real errors still surface
    try:
        title = item.find_element(By.CSS_SELECTOR, "div._cDEzb_p13n-sc-css-line-clamp-3_g3dy1").text
    except NoSuchElementException:
        title = "N/A"

    try:
        price = item.find_element(By.CSS_SELECTOR, "span._cDEzb_p13n-sc-price_3mJ9Z").text
    except NoSuchElementException:
        price = "N/A"

    try:
        url = item.find_element(By.CSS_SELECTOR, "a.a-link-normal").get_attribute("href")
    except NoSuchElementException:
        url = "N/A"

    products.append({"Title": title, "Price": price, "URL": url})
```

Wrapping each extraction in try/except is important: some products may be out of stock or have missing fields, and you don't want one bad element to crash the whole scrape.
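The repeated try/except pattern can be folded into a small wrapper. This is a sketch, not Selenium API: `safe_field` is a hypothetical helper that runs any zero-argument getter and returns a default when it raises one of the listed exception types or yields an empty value.

```python
from typing import Callable, Tuple, Type


def safe_field(
    getter: Callable[[], str],
    default: str = "N/A",
    errors: Tuple[Type[BaseException], ...] = (Exception,),
) -> str:
    """Run `getter` and return its value, or `default` if it fails or is empty."""
    try:
        value = getter()
    except errors:
        return default
    # Treat empty strings and None as missing data too
    return value if value else default
```

In the Selenium loop you would pass `errors=(NoSuchElementException,)` and a lambda wrapping the `find_element` call, which keeps each field to one line while still letting unexpected errors surface.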

Step 4: Handle Pagination

Amazon splits 100 Best Sellers across two pages with different URL structures:

```python
urls = [
    "https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/",
    "https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/ref=zg_bs_pg_2_electronics?_encoding=UTF8&pg=2",
]
all_products = []

for url in urls:
    driver.get(url)
    time.sleep(3)
    scroll_page(driver)
    # ... extract products as above (this rebuilds `products` for the current page) ...
    all_products.extend(products)

# Always close the browser when finished
driver.quit()
```

What to Expect

In my test, Selenium captured all 50 products per page — a clear win over requests + BS4. The downside: it took about 45 seconds per page (including scroll delays), and I still got flagged after running it too many times without proxy rotation. Selenium is also detectable by Amazon's bot detection even with the anti-detection flags — for serious scale, you'll need additional measures (see the Anti-Ban Playbook below).

Other pain points:

- WebDriver version mismatches still happen occasionally, though Selenium Manager has made this much less common

- CSS selectors need updating whenever Amazon changes their DOM

- Memory usage is high — each browser instance eats 200–400MB of RAM

Method 3: Scrape Amazon Best Sellers with a Scraping API

Scraping APIs are the "let someone else handle the hard parts" approach. Services like Scrape.do, Oxylabs, and ScrapingBee manage proxy rotation, JavaScript rendering, and anti-bot measures — you just send a URL and get back HTML or JSON.

How It Works

You send your target URL to the API endpoint. The API renders the page using a real browser on their infrastructure, rotates proxies, handles CAPTCHAs, and returns clean HTML. You then parse the returned HTML with BeautifulSoup as usual.

Step 1: Send a Request Through the API

Here's an example using Scrape.do (pricing starts at $29/month for 150,000 credits, 1 credit = 1 request regardless of rendering):

```python
import requests
from bs4 import BeautifulSoup

api_token = "YOUR_API_TOKEN"
target_url = "https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/"

# Passing params lets requests URL-encode the target URL
params = {"token": api_token, "url": target_url, "render": "true", "geoCode": "us"}
response = requests.get("https://api.scrape.do", params=params, timeout=60)
soup = BeautifulSoup(response.text, "html.parser")
```

From here, parsing is identical to Method 1: same selectors, same extraction logic.

Pricing Reality Check

Here's what the major APIs charge per 1,000 Amazon requests at their best available rate:

| Provider | Cost per 1,000 Requests | Notes |
|---|---|---|
| Scrape.do | ~$0.19 | Flat rate, no credit multipliers |
| Oxylabs | ~$1.80 | 5x multiplier for JS rendering |
| ScrapingBee | ~$4.90 | 5–25x multipliers for premium features |
| Bright Data | ~$5.00+ | Most comprehensive data (686 fields/product) but slowest (~66 sec/request) |
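To put the table in monthly terms, the arithmetic is simply requests divided by 1,000, times the per-1,000 rate. The sketch below uses the approximate rates from the table (Bright Data's "$5.00+" is treated as a lower bound); `monthly_cost` is a hypothetical helper and real invoices depend on plan tiers.

```python
def monthly_cost(requests_per_month: int, cost_per_1000: float) -> float:
    """Estimated monthly spend in dollars for a given request volume."""
    return requests_per_month / 1000 * cost_per_1000


# Approximate per-1,000 rates taken from the table above
RATES = {
    "Scrape.do": 0.19,
    "Oxylabs": 1.80,
    "ScrapingBee": 4.90,
    "Bright Data": 5.00,  # lower bound
}

for provider, rate in RATES.items():
    print(f"{provider}: ${monthly_cost(100_000, rate):,.2f} per 100,000 requests")
```

Swapping in your own expected volume makes it easier to see where a flat-rate provider and a multiplier-based one diverge.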

Pros and Cons

Pros: High reliability on Amazon for top providers, no driver maintenance, handles anti-bot automatically, scales well.

Cons: Paid per request (costs add up at scale), still requires you to write parsing code, still vulnerable to CSS selector changes. Even so, at 100,000 pages/month the total cost comparison is dramatic: an API works out roughly 71% cheaper than building in-house.

The breakeven point is typically 500K–1M requests/month. Below that, API time savings far outweigh costs.

Method 4: Scrape Amazon Best Sellers with Thunderbit (No Python Needed)

Full disclosure: I work at Thunderbit, so take this section with that context. That said, I genuinely tested all four methods back-to-back, and the difference in time-to-data was striking.

Thunderbit is an AI web scraper that runs as a Chrome extension. The core idea: instead of writing CSS selectors or Python code, the AI reads the page and figures out what data to extract. For Amazon Best Sellers specifically, Thunderbit has pre-built templates that work in one click.

Step 1: Install the Thunderbit Chrome Extension

Go to the Thunderbit Chrome extension page and click "Add to Chrome." Sign up for a free account; the free tier gives you enough credits to test this out.

Step 2: Navigate to the Amazon Best Sellers Page

Open any Amazon Best Sellers category page in Chrome. For example:

https://www.amazon.com/Best-Sellers-Electronics/zgbs/electronics/

Step 3: Click "AI Suggest Fields"

Open the Thunderbit sidebar and click "AI Suggest Fields." The AI analyzes the page structure and suggests columns: Product Name, Price, Rating, Image URL, Vendor, Product URL, and Rank. In my test, it correctly identified all the relevant fields within about 3 seconds.

You can rename, remove, or add columns. You can even add custom AI prompts per field — for example, "categorize as Electronics/Apparel/Home" to add a category tag to each product.

Step 4: Click "Scrape"

Hit the "Scrape" button. Thunderbit fills a structured table with all the product data from the page. In cloud mode, it processes up to 50 pages at once in parallel, handling lazy loading and pagination automatically.

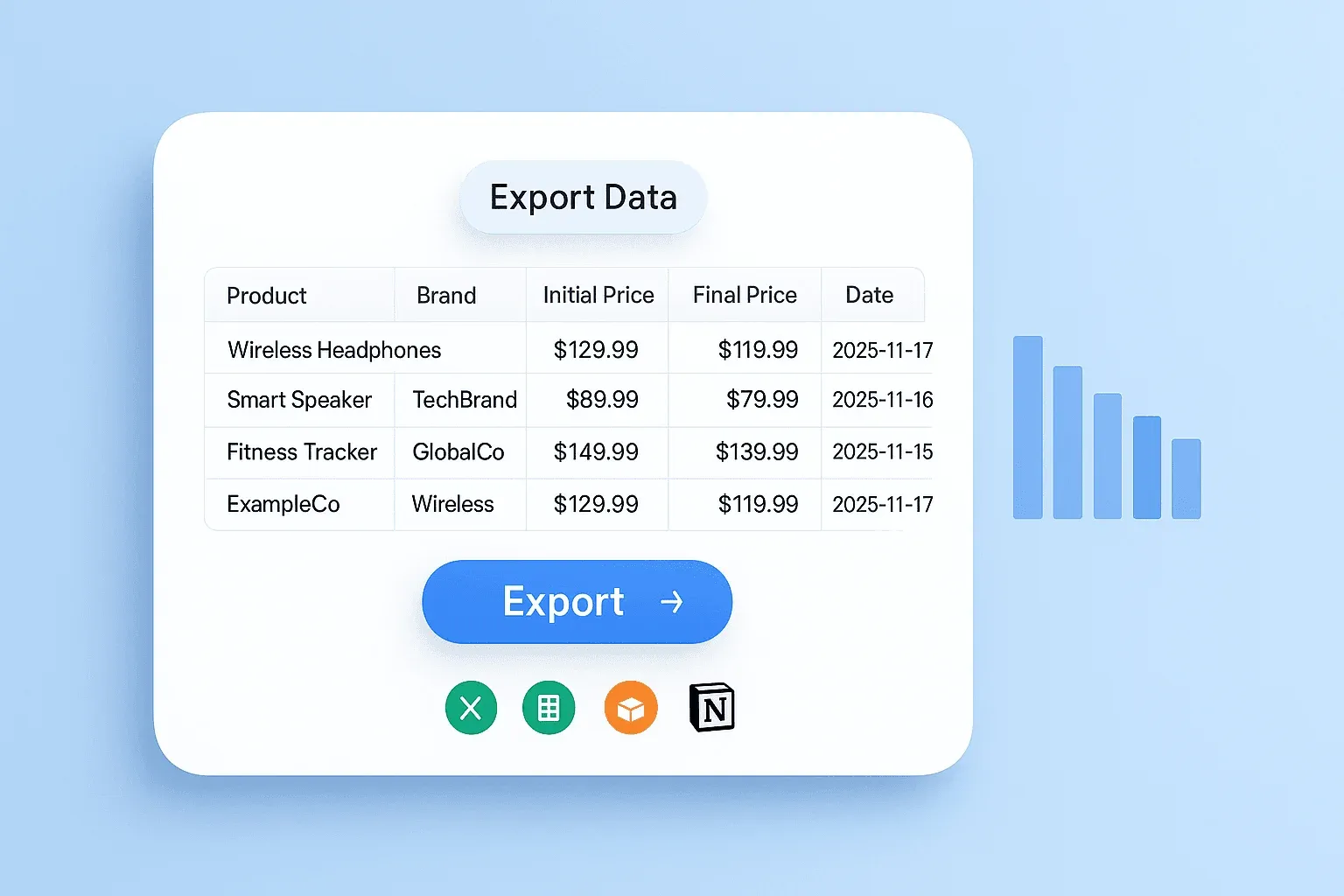

Step 5: Export for Free

Click "Export" and choose your destination: Excel, Google Sheets, Airtable, or Notion. All exports are free on every plan — no hidden charges.

The whole process took me about 90 seconds from opening the page to having a complete spreadsheet. For comparison, Method 1 took about 20 minutes (including debugging the lazy loading issue), Method 2 took about 35 minutes (including Selenium setup), and Method 3 took about 15 minutes (including API account setup).

Why Thunderbit Handles Amazon Well

Because the AI reads the page fresh each time, it adapts to layout changes automatically — no CSS selectors to maintain. This directly addresses the most common complaint in scraping forums: "A basic web scraper won't suffice, you need to add so many 'catches' for element changes." When Amazon changes their DOM (which happens regularly), you don't have to update anything.

Cloud scraping mode handles proxy rotation, rendering, and anti-bot measures transparently. For users who want a "just works" solution, this eliminates the entire anti-ban headache.

The Anti-Ban Playbook: How to Avoid Getting Blocked by Amazon

Amazon's bot detection is aggressive. I got my IP temporarily blocked during testing, and forum users report the same: "errors everywhere, amazon even started redirecting me to the homepage." If you're going the Python route (Methods 1–3), this section is critical.

Here's a layered strategy, ranked from basic to advanced:

1. Rotate User-Agent Strings

Sending the same User-Agent repeatedly is a red flag. Use the pool of 5+ strings from the code example in Method 1, and randomly select one per request:

```python
headers = {"User-Agent": random.choice(USER_AGENTS)}
```

2. Add Random Delays Between Requests

Fixed delays form a detectable pattern. Random ones are safer:

```python
time.sleep(random.uniform(2, 5))
```

I found that 2–5 seconds between requests kept me under the radar for small batches (under 50 requests). For larger runs, bump it to 3–7 seconds.
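Random delays cover the normal case; when a request does get blocked, it usually helps to wait progressively longer before retrying. The sketch below shows exponential backoff with additive jitter. `backoff_delay` and its default values are assumptions chosen for illustration, not thresholds Amazon publishes.

```python
import random


def backoff_delay(attempt: int, base: float = 2.0, cap: float = 60.0) -> float:
    """Seconds to wait before retry number `attempt` (0-based).

    The wait doubles with each attempt up to `cap`, plus up to `base`
    seconds of random jitter so retries do not land on a fixed rhythm.
    """
    exponential = min(cap, base * (2 ** attempt))
    return exponential + random.uniform(0, base)
```

In a retry loop you would call `time.sleep(backoff_delay(attempt))` after each blocked response and give up after a handful of attempts instead of hammering the same IP.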

3. Use Proxy Rotation

This is the big one. Benchmarks show residential proxies average ~94% success on Amazon versus ~59% for datacenter proxies, a 35 percentage point gap. Amazon's detection stack includes TLS fingerprinting, behavioral analysis, and per-IP rate limiting, so standard datacenter IPs get flagged within seconds.

Residential proxies are more expensive ($2–$12 per GB depending on provider) but dramatically more reliable. For a code example:

```python
proxies = {
    "http": "http://user:pass@residential-proxy.example.com:8080",
    "https": "http://user:pass@residential-proxy.example.com:8080",
}
response = requests.get(url, headers=headers, proxies=proxies)
```

4. Harden Your Browser Fingerprint (Selenium)

```python
options.add_argument('--disable-blink-features=AutomationControlled')
options.add_experimental_option("excludeSwitches", ["enable-automation"])
options.add_experimental_option('useAutomationExtension', False)

driver = webdriver.Chrome(options=options)

# After driver init, remove navigator.webdriver flag
driver.execute_cdp_cmd('Page.addScriptToEvaluateOnNewDocument', {
    'source': "Object.defineProperty(navigator, 'webdriver', {get: () => undefined})"
})
```

5. Manage Sessions and Cookies

Persisting cookies across requests makes your scraper look more like a real user session:

```python
session = requests.Session()

# Visit homepage first to get realistic cookies
session.get("https://www.amazon.com", headers=headers)
time.sleep(2)

# Then scrape your target page
response = session.get(target_url, headers=headers)
```

6. When to Skip the Headache Entirely

For users who don't want to manage any of this, Thunderbit's cloud scraping handles proxy rotation, rendering, and anti-bot measures transparently. Scraping APIs also handle most of these concerns out of the box. In my experience, the time spent debugging anti-ban issues often exceeds the time spent writing the actual scraping code — so the "just works" approach has real ROI.

Subpage Enrichment: Scraping Product Detail Pages for Richer Data

The Best Sellers listing page only shows basic info — title, price, rating, rank. But the real value for FBA research lives on individual product detail pages. Here's what you're missing if you only scrape the listing:

| Field | Listing Page | Product Detail Page |
|---|---|---|
| Product Name | ✅ | ✅ |
| Price | ✅ | ✅ |
| Rating | ✅ | ✅ |
| BSR Rank | ✅ | ✅ (with sub-category ranks) |
| Brand | ❌ | ✅ |
| ASIN | ❌ | ✅ |
| Date First Available | ❌ | ✅ |
| Dimensions/Weight | ❌ | ✅ |
| Number of Sellers | ❌ | ✅ |
| Bullet-Point Features | ❌ | ✅ |
| Buy Box Owner | ❌ | ✅ |

That "Date First Available" field is especially valuable — it tells you how long a product has been on the market, which is a key signal for competition analysis. And knowing the number of sellers and Buy Box owner helps you assess whether a product niche is worth entering (if Amazon itself holds >30% Buy Box share, competing is extremely difficult).

Python Approach: Looping Through Product URLs

After collecting product URLs from the listing page, loop through each one with a delay:

```python
for product in products:
    # Skip rows where no product link was found on the listing page
    if product["URL"] == "N/A":
        continue

    time.sleep(random.uniform(3, 6))
    detail_response = session.get(
        product["URL"],
        headers={"User-Agent": random.choice(USER_AGENTS)},
    )
    detail_soup = BeautifulSoup(detail_response.text, "html.parser")

    # Extract brand
    brand_tag = detail_soup.find("a", id="bylineInfo")
    product["Brand"] = brand_tag.get_text(strip=True) if brand_tag else "N/A"

    # Extract ASIN from page source or URL
    # Extract Date First Available from product details table
    # ... additional fields ...
```

Fair warning: hitting 100 individual product pages significantly increases your ban risk. Budget for proxy rotation and longer delays.
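For the ASIN specifically, the product URL is often enough, which avoids an extra page fetch. A minimal sketch, assuming the URL contains a `/dp/<ASIN>` or `/gp/product/<ASIN>` segment (common, but not guaranteed for every link); `extract_asin` is a hypothetical helper and the example ASIN in the comment is made up.

```python
import re
from typing import Optional

# ASINs are 10 characters of uppercase letters and digits
ASIN_PATTERN = re.compile(r"/(?:dp|gp/product)/([A-Z0-9]{10})(?:[/?]|$)")


def extract_asin(url: str) -> Optional[str]:
    """Return the ASIN embedded in a product URL, or None if absent.

    Example input (made-up ASIN):
    https://www.amazon.com/Example-Product/dp/B0EXAMPLE1/ref=zg_bs
    """
    match = ASIN_PATTERN.search(url)
    return match.group(1) if match else None
```

When the function returns None, falling back to the detail page is still an option, so the regex works best as a first pass rather than the only source.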

Thunderbit Approach: One-Click Subpage Scraping

After scraping the listing page into a table, click "Scrape Subpages" in Thunderbit. The AI visits each product URL and enriches the table with additional columns — brand, ASIN, specifications, features — automatically. No additional code, selectors, or setup. This is particularly useful for e-commerce teams who need the full picture for sourcing decisions but don't want to write and maintain a detail-page parser.

Automating Recurring Scrapes: Monitor Best Sellers Over Time

A one-time scrape is useful, but ongoing monitoring is where the real competitive advantage lives. Tracking which products rise and fall, spotting trends early, and monitoring price shifts over weeks or months — that's what separates casual research from data-driven decision-making.
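As a rough illustration of what "rise and fall" tracking can look like, the sketch below compares two snapshots. It assumes each snapshot is a dict mapping a product identifier (such as an ASIN) to its rank, which is a data shape chosen for this example rather than the output of the scrapers above; `compare_snapshots` is a hypothetical helper.

```python
from typing import Dict, Set, Tuple


def compare_snapshots(
    previous: Dict[str, int],
    current: Dict[str, int],
) -> Tuple[Dict[str, int], Set[str], Set[str]]:
    """Compare two rank snapshots.

    Returns (moved, new_entries, dropped), where `moved` maps each product
    to its rank change: positive means it climbed, negative means it fell.
    """
    moved = {
        product: previous[product] - rank
        for product, rank in current.items()
        if product in previous and previous[product] != rank
    }
    new_entries = set(current) - set(previous)
    dropped = set(previous) - set(current)
    return moved, new_entries, dropped
```

Running this on yesterday's and today's exports gives three short lists to review instead of two full spreadsheets.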

Python Approach: Scheduling with Cron

On Linux/Mac, you can schedule your Python script with cron. Here's the crontab entry for a daily 8am scrape:

```
0 8 * * * /usr/bin/python3 /home/user/amazon_scraper.py >> /home/user/logs/scrape.log 2>&1
```

For a weekly Monday 9am scrape:

```
0 9 * * 1 /usr/bin/python3 /home/user/amazon_scraper.py >> /home/user/logs/scrape.log 2>&1
```

On Windows, use Task Scheduler to achieve the same thing. For always-on scheduling without keeping your laptop running, deploy to a VPS or AWS Lambda, though that adds infrastructure complexity.

Add logging and error notifications to catch failed runs. There's nothing worse than discovering your scraper silently broke two weeks ago.
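One way to make failures visible is to wrap the scrape in a function that logs the outcome and reports success or failure. The sketch below uses only the standard `logging` module; `run_job`, the logger name, and the `min_expected` threshold of 50 rows are assumptions for illustration.

```python
import logging
from typing import Callable

logging.basicConfig(
    level=logging.INFO,
    format="%(asctime)s %(levelname)s %(message)s",
)
logger = logging.getLogger("amazon_scraper")


def run_job(scrape: Callable[[], int], min_expected: int = 50) -> bool:
    """Run `scrape` (which returns a row count) and log the outcome."""
    try:
        count = scrape()
    except Exception:
        # logger.exception records the full traceback in the log
        logger.exception("Scrape failed with an exception")
        return False
    if count < min_expected:
        # A short result often means lazy loading or a block page
        logger.warning("Scrape returned only %d rows (expected at least %d)", count, min_expected)
        return False
    logger.info("Scrape succeeded with %d rows", count)
    return True
```

Because the cron entries redirect both stdout and stderr to a log file, these messages end up there. Exiting with a non-zero status when `run_job` returns False (for example via `sys.exit(1)`) also lets monitoring tools or a wrapper script send the notification.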

Thunderbit Approach: Scheduled Scraper in Plain Language

Thunderbit's Scheduled Scraper lets you describe the interval in natural language — type "every Monday at 9am" or "every day at 8am" and the AI interprets the schedule. Scrapes run on Thunderbit's cloud servers (no browser or computer needs to be running), and data exports automatically to Google Sheets or Airtable. This creates a live monitoring dashboard with zero server management — ideal for operations teams who want ongoing visibility without the DevOps overhead.

Legal and Ethical Considerations When Scraping Amazon

I'm not a lawyer, and this isn't legal advice. But ignoring the legal landscape in a scraping tutorial would be irresponsible — forum users explicitly raise ToS concerns, and for good reason.

Amazon's robots.txt: As of 2026, Amazon's robots.txt contains 80+ specific Disallow paths, but /gp/bestsellers/ is NOT explicitly blocked for standard user agents. However, 35+ AI-specific user agents (ClaudeBot, GPTBot, Scrapy, etc.) receive a blanket Disallow: /. The absence of a specific disallow doesn't mean Amazon endorses scraping.
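You can check robots.txt rules programmatically with Python's standard `urllib.robotparser`. The sketch below parses a made-up excerpt so it runs offline; the sample rules and the `my-research-script` agent name are hypothetical and do not reproduce Amazon's actual file.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt excerpt for illustration only; not Amazon's actual file.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /gp/cart
"""


def build_parser(robots_text: str) -> RobotFileParser:
    """Build a parser from robots.txt text without any network access."""
    parser = RobotFileParser()
    parser.parse(robots_text.splitlines())
    return parser


parser = build_parser(SAMPLE_ROBOTS)
url = "https://www.example.com/gp/bestsellers/"

# A generic agent falls under the "*" rules; GPTBot has a blanket Disallow
print(parser.can_fetch("my-research-script", url))
print(parser.can_fetch("GPTBot", url))
```

To test against a live site, `set_url()` followed by `read()` fetches the real file instead. Either way, a permissive result only describes the robots.txt rules, not the Terms of Service.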

Amazon's Terms of Service: Amazon's terms (updated May 2025) explicitly prohibit "using any automated process or technology to access, acquire, copy, or monitor any part of the Amazon Website" without written permission. This isn't theoretical: Amazon sued in November 2025 over unauthorized automated access and won a preliminary injunction.

The hiQ v. LinkedIn precedent: In hiQ Labs v. LinkedIn (Ninth Circuit, 2022), the court held that scraping publicly available data likely doesn't violate the Computer Fraud and Abuse Act. But hiQ ultimately settled and agreed to stop scraping. Winning on CFAA doesn't protect against breach of contract claims.

Practical guidelines:

- Scrape only publicly available data (prices, BSR, product titles — not PII)

- Respect rate limits and don't overload servers

- Use data for legitimate competitive intelligence

- Consult your own legal counsel before scraping at scale

- Be aware that many jurisdictions now have comprehensive privacy legislation

Thunderbit's cloud scraping uses standard browser-like request patterns, but you should always verify compliance with your own legal counsel.

Which Method Should You Use? A Quick Decision Guide

The short version:

- "I'm learning Python and want a weekend project." → Method 1 (requests + BeautifulSoup). You'll learn a ton about HTTP requests, HTML parsing, and Amazon's anti-bot defenses.

- "I need to scrape JavaScript-heavy pages or logged-in sessions." → Method 2 (Selenium). It's heavier but handles dynamic content.

- "I'm running production scrapes at scale." → Method 3 (Scraping API). Let someone else manage proxies and rendering. The cost math favors APIs below 500K requests/month.

- "I'm not a developer and I want data in 2 minutes." → Method 4 (Thunderbit). No code, no selectors, no maintenance.

- "I need ongoing monitoring without server management." → Thunderbit Scheduled Scraper. Set it and forget it.

Conclusion and Key Takeaways

After a weekend of testing, here's what actually stuck:

requests + BeautifulSoup is great for learning, but the lazy-loading limitation (only ~30 of 50 products) and fragile CSS selectors make it impractical for production use.

Selenium solves the lazy-loading problem and captures all 50 products per page, but it's slow, memory-hungry, and still detectable by Amazon's bot defenses.

Scraping APIs offer the best reliability on Amazon for production-scale scraping, but costs add up and you still need to write parsing code.

Thunderbit delivered the fastest time-to-data by a wide margin. The AI handles layout changes, lazy loading, pagination, and anti-bot measures without any configuration. For non-technical users or teams that need recurring data without DevOps overhead, it's the most practical option.

The biggest lesson? Amazon's anti-bot defenses and frequent layout changes mean maintenance-free solutions save the most time in the long run. Every hour you spend debugging broken selectors and rotating proxies is an hour you're not spending on actual analysis.

Want to try the no-code approach? Thunderbit's free tier gives you enough credits to scrape a few Best Sellers categories and see the results for yourself. Prefer the Python route? The code examples above should get you started. Either way, you'll have Amazon Best Seller data in a spreadsheet instead of staring at a browser tab.
