There’s something oddly satisfying about watching a script zip through a website, scooping up data while you sip your coffee. If you’re like me, you’ve probably wondered: “How can I make web scraping faster, smarter, and less of a headache?”

That’s exactly what drew me into the world of OpenClaw web scraping. In a digital landscape where teams rely on web data for everything from sales leads to market intelligence, mastering the right tools isn’t just a tech flex; it’s a business necessity.

OpenClaw has quickly become a favorite in the scraping community, especially for folks tackling dynamic, image-heavy, or complex sites that leave traditional scrapers gasping for air.

In this guide, I’ll walk you through everything from setting up OpenClaw to building advanced, automated workflows. And, because I’m all about saving time, I’ll show you how to supercharge your scraping with Thunderbit’s AI features for a workflow that’s not just powerful, but actually fun to use.

What is OpenClaw Web Scraping?

Let’s start with the basics. OpenClaw web scraping refers to using the OpenClaw platform—a self-hosted, open-source agent gateway—to automate the extraction of data from websites. OpenClaw isn’t just another scraper; it’s a modular system that connects your favorite chat channels (like Discord or Telegram) to a suite of agent tools, including web fetchers, search utilities, and even a managed browser for those JavaScript-heavy sites that make other tools sweat.

What makes OpenClaw stand out for web data extraction? It’s designed to be both flexible and robust. You can use built-in tools like web_fetch for simple HTTP extraction, spin up an agent-controlled Chromium browser for dynamic content, or plug in community-built skills for more advanced workflows. It’s open-source, actively maintained, and has a thriving ecosystem of plugins and skills, making it a top choice for anyone serious about scraping at scale.

OpenClaw handles a wide range of data types and website formats, including:

- Text and structured HTML

- Images and media links

- Dynamic content rendered by JavaScript

- Complex, multi-layered DOM structures

And because it’s agent-driven, you can orchestrate scraping tasks, automate reporting, and even interact with your data in real time—all from your favorite chat app or terminal.

Why OpenClaw is a Powerful Tool for Web Data Extraction

So, why are so many data pros and automation geeks flocking to OpenClaw? Let’s break down the technical strengths that make it a powerhouse for web scraping:

Speed and Compatibility

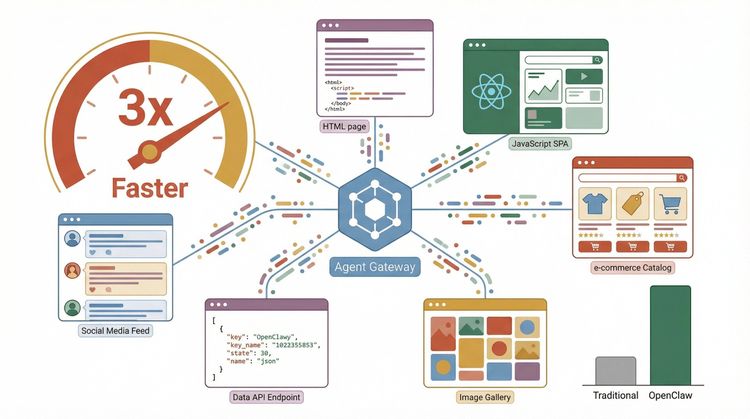

OpenClaw’s architecture is built for speed. Its core web_fetch tool uses HTTP GET requests with smart content extraction, caching, and redirect handling. In internal and community benchmarks, OpenClaw consistently outpaces legacy tools like BeautifulSoup or Selenium when extracting large volumes of data from static and semi-dynamic sites.
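The following minimal Python sketch shows the caching and redirect ideas in concrete form. It is not OpenClaw’s web_fetch implementation. `CachedFetcher`, `urllib_get`, the placeholder User-Agent and the 300-second time-to-live are hypothetical choices made for illustration. Redirects are handled by urllib itself, because its default opener follows 3xx responses.

```python
import time
import urllib.request
from typing import Callable, Dict, Tuple


def urllib_get(url: str, timeout: float = 10.0) -> str:
    """Plain HTTP GET; urllib's default opener follows 3xx redirects automatically."""
    req = urllib.request.Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        charset = resp.headers.get_content_charset() or "utf-8"
        return resp.read().decode(charset, errors="replace")


class CachedFetcher:
    """Wraps any fetch function with a simple in-memory, time-to-live cache."""

    def __init__(
        self,
        fetch: Callable[[str], str] = urllib_get,
        ttl_seconds: float = 300.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._ttl = ttl_seconds
        self._clock = clock
        self._cache: Dict[str, Tuple[float, str]] = {}  # url -> (fetched_at, body)

    def get(self, url: str) -> str:
        now = self._clock()
        hit = self._cache.get(url)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # still fresh: skip the network round trip
        body = self._fetch(url)
        self._cache[url] = (now, body)
        return body
```

The fetch function and clock are injected, so the cache can be tested without network access. For a live request you would call `CachedFetcher().get("https://example.com/products")`.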

But where OpenClaw really shines is compatibility. Thanks to its managed browser mode, it can handle sites that rely on JavaScript for rendering—something that trips up many traditional scrapers. Whether you’re targeting an image-rich e-commerce catalog or a single-page app with infinite scroll, OpenClaw’s agent-controlled Chromium profile gets the job done.

Resilience to Website Changes

One of the biggest headaches in web scraping is dealing with site updates that break your scripts. OpenClaw’s plugin and skill system is designed to be resilient. For example, wrappers around the Scrapling library offer adaptive extraction, meaning your scraper can “relocate” elements even if the site layout changes. That’s a huge win for long-term projects.
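Scrapling’s adaptive mode uses its own matching logic. The Python sketch below only illustrates the general idea, using a simpler technique swapped in for illustration:

1. Save a fingerprint of the element you care about: its tag, its classes and its attribute names.
2. After a redesign, pick the element on the new page that scores closest to that fingerprint.

`relocate`, the scoring weights and the 0.5 threshold are all hypothetical.

```python
from html.parser import HTMLParser
from typing import FrozenSet, List, Optional, Tuple

# (tag, classes, attribute names): a hypothetical, deliberately simple fingerprint
Fingerprint = Tuple[str, FrozenSet[str], FrozenSet[str]]


class _ElementCollector(HTMLParser):
    """Records a fingerprint for every start tag in the document."""

    def __init__(self) -> None:
        super().__init__()
        self.elements: List[Fingerprint] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        classes = frozenset((attr_map.get("class") or "").split())
        self.elements.append((tag, classes, frozenset(attr_map)))


def _jaccard(a: FrozenSet[str], b: FrozenSet[str]) -> float:
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def relocate(html: str, saved: Fingerprint, threshold: float = 0.5) -> Optional[Fingerprint]:
    """Return the element most similar to a previously saved fingerprint, or None."""
    collector = _ElementCollector()
    collector.feed(html)
    collector.close()
    best, best_score = None, 0.0
    for el in collector.elements:
        # Weighted score: same tag name, overlapping classes, overlapping attribute names.
        score = (0.4 * (el[0] == saved[0])
                 + 0.4 * _jaccard(el[1], saved[1])
                 + 0.2 * _jaccard(el[2], saved[2]))
        if score > best_score:
            best, best_score = el, score
    return best if best_score >= threshold else None
```

Once you have the relocated fingerprint, you can rebuild a selector from it (for example `h2.product-title`) and carry on extracting.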

Real-World Performance

In side-by-side tests, OpenClaw-based workflows have shown:

- Up to 3x faster extraction on complex, multi-page sites compared to traditional Python scrapers

- Higher success rates on dynamic, JavaScript-heavy pages, thanks to the managed browser

- Better handling of mixed-content pages (text, images, HTML fragments)

User testimonials often highlight OpenClaw’s ability to “just work” where other tools fail—especially for scraping data from sites with tricky layouts or anti-bot measures.

Getting Started: Setting Up OpenClaw for Web Scraping

Ready to dive in? Here’s how to get OpenClaw up and running on your system.

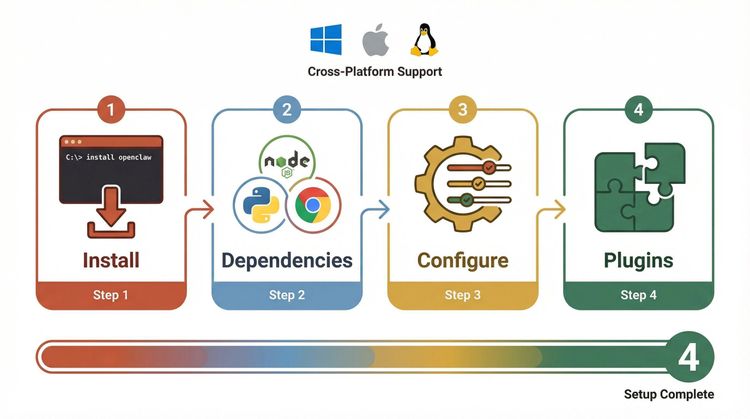

Step 1: Install OpenClaw

OpenClaw supports Windows, macOS, and Linux. The official docs recommend starting with the guided onboarding flow:

```bash
openclaw onboard
```

This command walks you through the initial setup, including environment checks and basic configuration.

Step 2: Install Required Dependencies

Depending on your workflow, you may need:

- Node.js (for the core gateway)

- Python 3.10+ (for plugins/skills that use Python, like Scrapling wrappers)

- Chromium/Chrome (for managed browser mode)

On Linux, you might need to install additional packages for browser support. The official docs include troubleshooting guidance for common issues.

Step 3: Configure Web Tools

Set up your web search provider:

```bash
openclaw configure --section web
```

This lets you choose from providers like Brave, DuckDuckGo, or Firecrawl.

Step 4: Install Plugins or Skills (Optional)

To unlock advanced scraping, install community plugins or skills. For example, to add the openclaw-plugin-web-scraper plugin from GitHub:

```bash
git clone https://github.com/hvkeyn/openclaw-plugin-web-scraper.git
cd openclaw-plugin-web-scraper
openclaw plugins install .
openclaw gateway restart
```

Pro Tips for Beginners

- Run `openclaw security audit` after installing new plugins to check for vulnerabilities.

- If you’re using Node via nvm, double-check your CA certificates; mismatches can break HTTPS requests.

- Always isolate plugins and browser components in a VM or container for extra safety.

Beginner’s Guide: Your First OpenClaw Scraping Project

Let’s build a simple scraping project—no PhD in computer science required.

Step 1: Choose Your Target Website

Pick a site with structured data, like a product listing or directory. For this example, let’s scrape product titles from a demo e-commerce page.

Step 2: Understand the DOM Structure

Use your browser’s “Inspect Element” tool to find the HTML tags that contain the data you want (e.g., <h2 class="product-title">).

Step 3: Set Up Extraction Filters

With OpenClaw’s Scrapling-based skills, you can use CSS selectors to target elements. Here’s a sample invocation of a Scrapling skill’s scrape.py script:

```bash
# Python interpreter from the virtualenv where Scrapling is installed
PYTHON=/opt/scrapling-venv/bin/python3
$PYTHON scripts/scrape.py fetch "https://example.com/products" --css "h2.product-title::text"
```

This command fetches the page and extracts all product titles.
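You may want to sanity-check what the `h2.product-title::text` selector should return. The same extraction can be approximated with Python’s standard-library HTML parser. This is a hypothetical stand-in for illustration, not how the Scrapling skill works internally. It also differs in one detail: it collects all text inside the matching element, including nested tags, rather than only direct text nodes.

```python
from html.parser import HTMLParser
from typing import List


class ClassTextExtractor(HTMLParser):
    """Collects the text of every <tag class="... css_class ..."> element."""

    def __init__(self, tag: str, css_class: str) -> None:
        super().__init__()
        self.tag, self.css_class = tag, css_class
        self.depth = 0              # > 0 while inside a matching element
        self.buf: List[str] = []
        self.results: List[str] = []

    def handle_starttag(self, tag, attrs):
        if self.depth and tag == self.tag:
            self.depth += 1         # nested element with the same tag name
        elif not self.depth and tag == self.tag:
            classes = (dict(attrs).get("class") or "").split()
            if self.css_class in classes:
                self.depth, self.buf = 1, []

    def handle_endtag(self, tag):
        if self.depth and tag == self.tag:
            self.depth -= 1
            if self.depth == 0:
                # Collapse runs of whitespace, like a browser would when rendering.
                self.results.append(" ".join("".join(self.buf).split()))

    def handle_data(self, data):
        if self.depth:
            self.buf.append(data)


def extract_text(html: str, tag: str, css_class: str) -> List[str]:
    parser = ClassTextExtractor(tag, css_class)
    parser.feed(html)
    parser.close()
    return parser.results
```

For example, `extract_text(page_html, "h2", "product-title")` returns one string per product title on the page.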

Step 4: Safe Data Handling

Export your results to CSV or JSON for easy analysis:

```bash
$PYTHON scripts/scrape.py fetch "https://example.com/products" --css "h2.product-title::text" -f csv -o products.csv
```

Key Concepts Explained

- Tool schemas: Define what each tool or skill can do (fetch, extract, crawl).

- Skill registration: Add new scraping capabilities to OpenClaw via ClawHub or manual install.

- Safe data handling: Always validate and sanitize your outputs before using them in production.
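Here is a minimal sketch of safe data handling combined with the CSV/JSON export step, assuming your scrape produced a list of title strings. The helpers do three things:

- Normalize whitespace.
- Drop empty and duplicate values.
- Neutralize cells that a spreadsheet could execute as formulas (so-called CSV injection).

The function names and the single `title` column are hypothetical.

```python
import csv
import json
from pathlib import Path
from typing import Iterable, List

# Leading characters that spreadsheet apps may treat as the start of a formula.
_FORMULA_PREFIXES = ("=", "+", "-", "@", "\t", "\r")


def clean_titles(raw: Iterable[str]) -> List[str]:
    """Normalize whitespace, drop empty values, and de-duplicate while keeping order."""
    seen, out = set(), []
    for value in raw:
        text = " ".join(str(value).split())
        if text and text not in seen:
            seen.add(text)
            out.append(text)
    return out


def neutralize_formula(value: str) -> str:
    """Prefix risky cells with a quote; apply to free-text columns, not numeric ones."""
    return "'" + value if value.startswith(_FORMULA_PREFIXES) else value


def export(titles: List[str], csv_path: Path, json_path: Path) -> None:
    with csv_path.open("w", newline="", encoding="utf-8") as fh:
        writer = csv.writer(fh)
        writer.writerow(["title"])
        writer.writerows([neutralize_formula(t)] for t in titles)
    # JSON keeps the raw values, since it is not opened directly by spreadsheets.
    json_path.write_text(
        json.dumps([{"title": t} for t in titles], ensure_ascii=False, indent=2),
        encoding="utf-8",
    )
```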

Automating Complex Scraping Workflows with OpenClaw

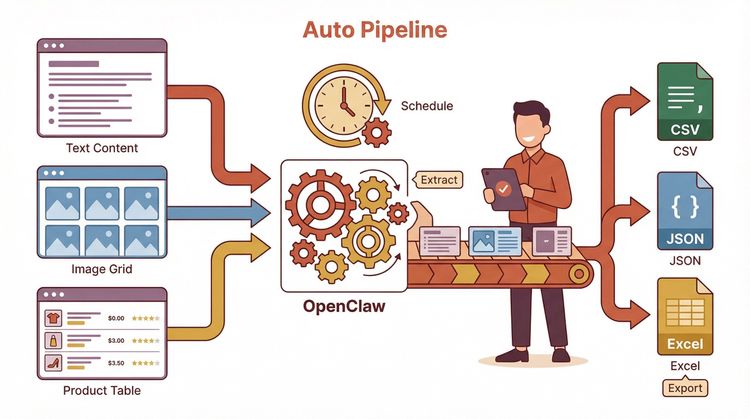

Once you’ve mastered the basics, it’s time to automate. Here’s how to build a workflow that runs itself (while you focus on more important things—like lunch).

Step 1: Create and Register Custom Skills

Write or install skills that match your specific extraction needs. For example, you might want to scrape product info and images, then send a daily report.

Step 2: Set Up Scheduled Tasks

On Linux or macOS, use cron to schedule your scraping scripts:

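```text
# m h dom mon dow: run every day at 06:00. Use the Scrapling venv interpreter, and escape % as \% inside crontab.
0 6 * * * /opt/scrapling-venv/bin/python3 /path/to/scrape.py fetch "https://example.com/products" --css "h2.product-title::text" -f csv -o /data/products_$(date +\%F).csv
```

On Windows, use Task Scheduler with similar arguments.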

Step 3: Integrate with Other Tools

For dynamic navigation (e.g., clicking buttons or logging in), combine OpenClaw with Selenium or Playwright. Many OpenClaw skills can call out to these tools or accept browser automation scripts.

Manual vs. Automated Workflow Comparison

| Step | Manual Workflow | Automated OpenClaw Workflow |
|---|---|---|
| Data extraction | Run script by hand | Scheduled via cron/Task Scheduler |
| Dynamic navigation | Click manually | Automated with Selenium/skills |
| Data export | Copy/paste or download | Auto-export to CSV/JSON |
| Reporting | Manual summary | Auto-generate and email reports |
| Error handling | Fix as you go | Built-in retries/logging |

The result? More data, less drudgery, and a workflow that scales with your ambitions.
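If your own scraping scripts need the “Built-in retries/logging” behavior from the table, a small retry wrapper goes a long way. This is a hypothetical helper (`with_retries`), not an OpenClaw API. It retries with exponential backoff, where the delay doubles after each failure (1 s, then 2 s with the defaults), and it logs every failed attempt.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
log = logging.getLogger("scrape")


def with_retries(
    task: Callable[[], T],
    attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task(); after the n-th failure wait base_delay * 2**(n-1) seconds, then retry."""
    if attempts < 1:
        raise ValueError("attempts must be at least 1")
    for attempt in range(1, attempts + 1):
        try:
            return task()
        except Exception as exc:  # narrow this to network/parse errors in real scripts
            if attempt == attempts:
                log.error("giving up after %d attempts: %s", attempts, exc)
                raise
            delay = base_delay * 2 ** (attempt - 1)
            log.warning("attempt %d failed (%s); retrying in %.1fs", attempt, exc, delay)
            sleep(delay)
    raise AssertionError("unreachable")
```

Wrap whatever step tends to fail. For example, `with_retries(lambda: fetcher.get(url))` reuses a fetch call from earlier in your script.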

Boosting Efficiency: Integrating Thunderbit’s AI Scraping Features with OpenClaw

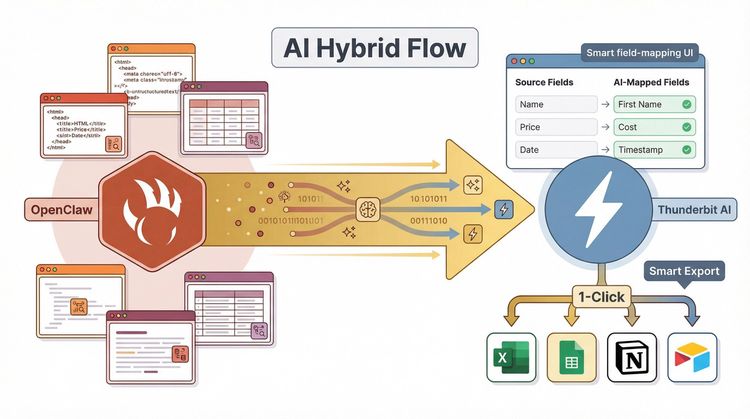

Now, here’s where things get really interesting. As the co-founder of Thunderbit, I’m a big believer in combining the best of both worlds: OpenClaw’s flexible scraping engine and Thunderbit’s AI-powered field detection and export.

How Thunderbit Supercharges OpenClaw

- AI Suggest Fields: Thunderbit can automatically analyze a web page and recommend the best columns to extract—no more guessing at CSS selectors.

- Instant Data Export: Export your scraped data directly to Excel, Google Sheets, Airtable, or Notion with a single click.

- Hybrid Workflow: Use OpenClaw for complex navigation and scraping logic, then pipe the results into Thunderbit for field mapping, enrichment, and export.

Example Hybrid Workflow

- Use OpenClaw’s managed browser or Scrapling skill to extract raw data from a dynamic site.

- Import the results into Thunderbit.

- Click “AI Suggest Fields” to auto-map the data.

- Export to your preferred format or platform.

This combo is a game-changer for teams that need both power and ease of use—think sales ops, e-commerce analysts, and anyone tired of wrangling messy spreadsheets.
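Whatever tool sits at the end of the pipeline, a flat table with one consistent header row is usually the easiest thing to import. The sketch below assumes OpenClaw gave you a list of JSON-like records, possibly nested, and flattens them into CSV text. It does not use any Thunderbit API, and `flatten` and `to_csv` are hypothetical helpers.

```python
import csv
import io
from typing import Any, Dict, List


def flatten(record: Dict[str, Any], prefix: str = "") -> Dict[str, Any]:
    """Turn nested dicts into dotted column names, e.g. {"price": {"amount": 9}} -> {"price.amount": 9}."""
    flat: Dict[str, Any] = {}
    for key, value in record.items():
        name = f"{prefix}{key}"
        if isinstance(value, dict):
            flat.update(flatten(value, name + "."))
        elif isinstance(value, list):
            flat[name] = "; ".join(map(str, value))  # e.g. several image URLs in one cell
        else:
            flat[name] = value
    return flat


def to_csv(records: List[Dict[str, Any]]) -> str:
    rows = [flatten(r) for r in records]
    columns: List[str] = []
    for row in rows:  # union of all keys, in first-seen order
        columns += [c for c in row if c not in columns]
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=columns, restval="")  # missing fields become ""
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()
```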

Real-Time Troubleshooting: Common OpenClaw Errors and How to Fix Them

Even the best tools hit a snag now and then. Here’s a quick guide to diagnosing and fixing common OpenClaw scraping issues:

Frequent Errors

- Authentication issues: Some sites block bots or require login. Use OpenClaw’s managed browser or integrate with Selenium for login flows.

- Blocked requests: Rotate user agents, use proxies, or slow down your request rate to avoid bans.

- Parsing failures: Double-check your CSS/XPath selectors; sites may have changed their structure.

- Plugin/skill errors: Run `openclaw plugins doctor` to diagnose issues with installed extensions.

Diagnostic Commands

- `openclaw status`: check gateway and tool status.
- `openclaw security audit`: scan for vulnerabilities.
- `openclaw browser --browser-profile openclaw status`: check browser automation health.


Best Practices for Reliable and Scalable OpenClaw Scraping

Want to keep your scraping smooth and sustainable? Here’s my checklist:

- Respect robots.txt: Only scrape what you’re allowed to.

- Throttle requests: Avoid hammering sites with too many requests per second.

- Validate outputs: Always check your data for completeness and accuracy.

- Monitor usage: Log your scraping runs and watch for errors or bans.

- Use proxies for scale: Rotate IPs to avoid rate limits.

- Deploy in the cloud: For large jobs, run OpenClaw in a VM or containerized environment.

- Handle errors gracefully: Build retries and fallback logic into your scripts.
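Two items from this checklist, respecting robots.txt and throttling requests, can be sketched with Python’s standard library:

- `urllib.robotparser` parses robots.txt rules.
- A small `Throttle` class (hypothetical) enforces a minimum delay between requests.

The robots.txt content is passed in as lines so the example runs offline. In practice you would first download it from the site’s /robots.txt.

```python
import time
import urllib.robotparser
from typing import Callable, Iterable, Optional


def make_robots_checker(robots_lines: Iterable[str], user_agent: str) -> Callable[[str], bool]:
    """Parse robots.txt content and return a can_fetch(url) predicate for one user agent."""
    parser = urllib.robotparser.RobotFileParser()
    parser.parse(list(robots_lines))
    return lambda url: parser.can_fetch(user_agent, url)


class Throttle:
    """Sleeps just long enough to keep at least min_interval seconds between requests."""

    def __init__(
        self,
        min_interval: float,
        clock: Callable[[], float] = time.monotonic,
        sleep: Callable[[float], None] = time.sleep,
    ) -> None:
        self.min_interval = min_interval
        self._clock = clock
        self._sleep = sleep
        self._last: Optional[float] = None

    def wait(self) -> None:
        """Call once right before each request."""
        now = self._clock()
        if self._last is not None:
            remaining = self.min_interval - (now - self._last)
            if remaining > 0:
                self._sleep(remaining)
                now = self._clock()
        self._last = now
```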

| Do’s | Don’ts |
|---|---|
| Use official plugins/skills | Install untrusted code blindly |
| Run security audits regularly | Ignore vulnerability warnings |
| Test on staging before production | Scrape sensitive or private data |
| Document your workflows | Rely on hardcoded selectors |

Advanced Tips: Customizing and Extending OpenClaw for Unique Needs

If you’re ready to go full power-user, OpenClaw lets you build custom skills and plugins for specialized tasks.

Developing Custom Skills

- Follow the official OpenClaw documentation on skills and plugins to create new extraction tools.

- Use Python or TypeScript, depending on your comfort zone.

- Register your skill with ClawHub for easy sharing and reuse.

Advanced Features

- Chaining skills: Combine multiple extraction steps (e.g., scrape a list page, then visit each detail page).

- Headless browsers: Use OpenClaw’s managed Chromium or integrate with Playwright for JavaScript-heavy sites.

- AI agent integration: Connect OpenClaw to external AI services for smarter data parsing or enrichment.
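Chaining skills boils down to feeding one step’s output into the next. The Python sketch below shows the list-page-then-detail-pages pattern, assuming detail links are `<a>` tags with a known class. `crawl_list_then_details` and the `product-link` class are hypothetical, and `fetch` can be any function that returns a page’s HTML.

```python
from html.parser import HTMLParser
from typing import Callable, Dict, List
from urllib.parse import urljoin


class _LinkCollector(HTMLParser):
    """Collects href values from <a> tags that carry a given CSS class."""

    def __init__(self, css_class: str) -> None:
        super().__init__()
        self.css_class = css_class
        self.hrefs: List[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        classes = (attr_map.get("class") or "").split()
        if tag == "a" and self.css_class in classes and attr_map.get("href"):
            self.hrefs.append(attr_map["href"])


def crawl_list_then_details(
    list_url: str, fetch: Callable[[str], str], link_class: str
) -> Dict[str, str]:
    """Step 1: parse the list page for detail links. Step 2: fetch each detail page once."""
    collector = _LinkCollector(link_class)
    collector.feed(fetch(list_url))
    collector.close()
    details: Dict[str, str] = {}
    for href in collector.hrefs:
        absolute = urljoin(list_url, href)  # resolve relative links against the list page
        if absolute not in details:         # skip duplicate links
            details[absolute] = fetch(absolute)
    return details
```

In a real run, each detail page’s HTML would then go through the same selector-based extraction as the list page.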

Error Handling and Context Management

- Build robust error handling into your skills (try/except in Python, error callbacks in TypeScript).

- Use context objects to pass state between scraping steps.
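Here is a minimal sketch of both ideas in Python. A hypothetical `ScrapeContext` dataclass carries state between steps. The runner wraps each step in try/except, so a failure is recorded and the pipeline stops cleanly instead of crashing the job. This illustrates the pattern only; it is not OpenClaw’s skill interface.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class ScrapeContext:
    """Hypothetical state object passed from one scraping step to the next."""
    start_url: str
    pages: Dict[str, str] = field(default_factory=dict)     # url -> raw HTML
    records: List[dict] = field(default_factory=list)       # extracted rows
    errors: List[str] = field(default_factory=list)         # human-readable failures


Step = Callable[[ScrapeContext], None]


def run_pipeline(ctx: ScrapeContext, steps: List[Step]) -> ScrapeContext:
    """Run steps in order; on the first failure, record it and skip the remaining steps."""
    for step in steps:
        try:
            step(ctx)
        except Exception as exc:
            ctx.errors.append(f"{step.__name__}: {exc!r}")
            break
    return ctx
```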


Conclusion & Key Takeaways

We’ve covered a lot of ground—from installing OpenClaw and running your first scrape to building automated, hybrid workflows with Thunderbit. Here’s what I hope you’ll remember:

- OpenClaw is a flexible, open-source powerhouse for web data extraction, especially on complex or dynamic sites.

- Its plugin/skill ecosystem lets you tackle everything from simple fetches to advanced, multi-step scraping.

- Combining OpenClaw with Thunderbit’s AI features makes field mapping, data export, and workflow automation a breeze.

- Stay secure and compliant: Audit your environment, respect site rules, and validate your data.

- Don’t be afraid to experiment: The OpenClaw community is active and welcoming—jump in, try new skills, and share your wins.

If you’re looking to push your scraping efficiency even further, Thunderbit is here to help. And if you want to keep learning, check out the Thunderbit blog for more deep dives and practical guides.

Happy scraping—and may your selectors always find their mark.

FAQs

1. What makes OpenClaw different from traditional web scrapers like BeautifulSoup or Scrapy?

OpenClaw is built as an agent gateway with modular tools, managed browser support, and a plugin/skill system. This makes it more flexible for dynamic, JavaScript-heavy, or image-rich sites, and easier to automate end-to-end workflows compared to traditional, code-heavy frameworks.

2. Can I use OpenClaw if I’m not a developer?

Yes! OpenClaw’s onboarding flow and plugin ecosystem are beginner-friendly. For more complex tasks, you can use skills built by the community or combine OpenClaw with no-code tools like Thunderbit for easy field mapping and export.

3. How do I troubleshoot common OpenClaw errors?

Start with `openclaw status` and `openclaw security audit`. For plugin issues, use `openclaw plugins doctor`. Check the OpenClaw docs and GitHub issues for solutions to common problems.

4. Is it safe and legal to use OpenClaw for web scraping?

As with any scraper, always respect website terms of service and robots.txt. OpenClaw is open-source and runs locally, but you should audit plugins for security and avoid scraping sensitive or private data without permission.

5. How can I combine OpenClaw with Thunderbit for better results?

Use OpenClaw for complex scraping logic, then import your raw data into Thunderbit. Thunderbit’s AI Suggest Fields will auto-map your data, and you can export directly to Excel, Google Sheets, Notion, or Airtable, making your workflow faster and more reliable.

Want to see how Thunderbit can level up your scraping? Give it a try and start building smarter, hybrid workflows today. And don’t forget to check out Thunderbit’s hands-on tutorials and tips.
