A GitHub search for "linkedin scraper" returns roughly as of April 2026. Most of them will waste your time. Harsh? Maybe. But that's what I found after auditing eight of the most visible repos, reading dozens of GitHub issue threads, and cross-referencing community reports from Reddit and scraping forums.

The pattern repeats itself: high-star repos attract attention, LinkedIn's anti-bot team studies the code, detection gets patched, and users end up with broken selectors, CAPTCHA loops, or outright account bans. One Reddit user described the current state bluntly: LinkedIn has added "stricter rate limits, better bot detection, session tracking, and frequent changes," and old tools now "break quickly or get accounts/IPs flagged."

If you're a sales rep, recruiter, or ops manager looking for LinkedIn data in a spreadsheet, the repo you cloned last month might already be dead. This guide is designed to help you figure out which GitHub projects are actually worth your time, how to avoid getting your account torched, and when it makes more sense to skip the code entirely.

What Is a LinkedIn Scraper on GitHub?

A LinkedIn scraper GitHub project is an open-source script — usually Python, sometimes Node.js — that automates extracting structured data from LinkedIn pages. The typical targets include:

- People profiles: name, headline, company, location, skills, experience

- Job listings: title, company, location, posting date, job URL

- Company pages: overview, headcount, industry, follower count

- Posts and engagement: content text, likes, comments, shares

Under the hood, most repos use one of two approaches. Browser-driven scrapers rely on Selenium, Playwright, or Puppeteer to render pages, click through flows, and extract data via CSS selectors or XPath. A smaller subset tries to call LinkedIn's internal (undocumented) API endpoints directly. And a newer wave — still rare on GitHub but growing — pairs browser automation with an LLM like GPT-4o mini to parse page text into structured fields without brittle selectors.

There's a fundamental audience mismatch. These tools are built by developers comfortable with virtual environments, browser dependencies, and proxy configuration. But a large share of the people searching "linkedin scraper github" are recruiters, SDRs, RevOps managers, and founders who just want rows in a spreadsheet.

That gap explains most of the frustration in the issue threads.

Why People Turn to GitHub for LinkedIn Scraping

The appeal is obvious. Free. Customizable. No vendor lock-in. Full control over your data pipeline. If a SaaS tool changes pricing or shuts down, your code still exists.

| Use Case | Who Needs It | Typical Data Extracted |
|---|---|---|
| Lead generation | Sales teams | Names, titles, companies, profile URLs, email clues |
| Candidate sourcing | Recruiters | Profiles, skills, experience, locations |
| Market research | Ops and strategy teams | Company data, headcounts, job postings |
| Competitive intelligence | Marketing teams | Posts, engagement, company updates, hiring signals |

But "free" is a licensing label, not an operating cost. The real expenses are:

- Setup time: even friendly repos typically require 30 minutes to 2+ hours for environment setup, browser dependencies, cookie extraction, and proxy configuration

- Maintenance: LinkedIn changes its DOM and anti-bot defenses regularly — a working scraper today can break next week

- Proxies: residential proxy bandwidth runs depending on provider and plan

- Account risk: your LinkedIn account is the most expensive thing at stake, and it's not replaceable like a proxy IP

The Repo Health Scorecard: How to Evaluate Any LinkedIn Scraper GitHub Project

Most "best LinkedIn scraper" lists rank repos by star count. Stars measure historical interest, not current functionality. A repo with 3,000 stars and no commits since 2022 is a museum exhibit, not a production tool.

Before you run git clone on anything, apply this framework:

| Criteria | Why It Matters | Red Flag |
|---|---|---|
| Last commit date | LinkedIn changes DOM frequently | > 6 months ago for browser-driven repos |
| Open/closed issues ratio | Maintainer responsiveness | > 3:1 open-to-closed, especially with recent "blocked" or "CAPTCHA" reports |
| Anti-detection features | LinkedIn bans aggressively | No mention of cookies, sessions, pacing, or proxies in README |
| Auth method | 2FA and CAPTCHA break login flows | Only supports password-based headless login |
| License type | Legal exposure for commercial use | No license or ambiguous terms |
| Data types supported | Different use cases need different repos | Only one data type when you need several |

The single trick that saves the most time: before committing to any repo, search its Issues tab for "blocked," "banned," "CAPTCHA," or "not working." If recent issues are full of those terms with no maintainer response, move on. That repo already lost the fight.
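The scorecard can be turned into a quick checklist in code. The sketch below is only an illustration: `RepoSnapshot` and `red_flags` are hypothetical names, the values are ones you would fill in by hand from the repo page, and the thresholds simply mirror the table above (about six months of inactivity, a 3:1 open-to-closed issue ratio).

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class RepoSnapshot:
    """Hypothetical record filled in by hand from a repo's GitHub page."""
    last_commit: date
    open_issues: int
    closed_issues: int
    readme_mentions_anti_detection: bool  # cookies, sessions, pacing, proxies
    supports_cookie_auth: bool            # False means password-only login
    has_license: bool


def red_flags(repo: RepoSnapshot, today: date) -> list[str]:
    """Return the scorecard red flags that apply to this repo."""
    flags = []
    # "> 6 months ago" approximated as more than 183 days.
    if (today - repo.last_commit).days > 183:
        flags.append("stale: last commit more than ~6 months ago")
    # "> 3:1 open-to-closed"; max(..., 1) avoids dividing by zero closed issues.
    if repo.open_issues > 3 * max(repo.closed_issues, 1):
        flags.append("issues: open-to-closed ratio above 3:1")
    if not repo.readme_mentions_anti_detection:
        flags.append("no anti-detection features mentioned in README")
    if not repo.supports_cookie_auth:
        flags.append("password-only authentication")
    if not repo.has_license:
        flags.append("no license or ambiguous terms")
    return flags
```

An empty list does not prove a repo works; it only means none of the cheap warning signs fired, so the Issues-tab search is still worth doing.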

What the 2026 Audit Actually Found

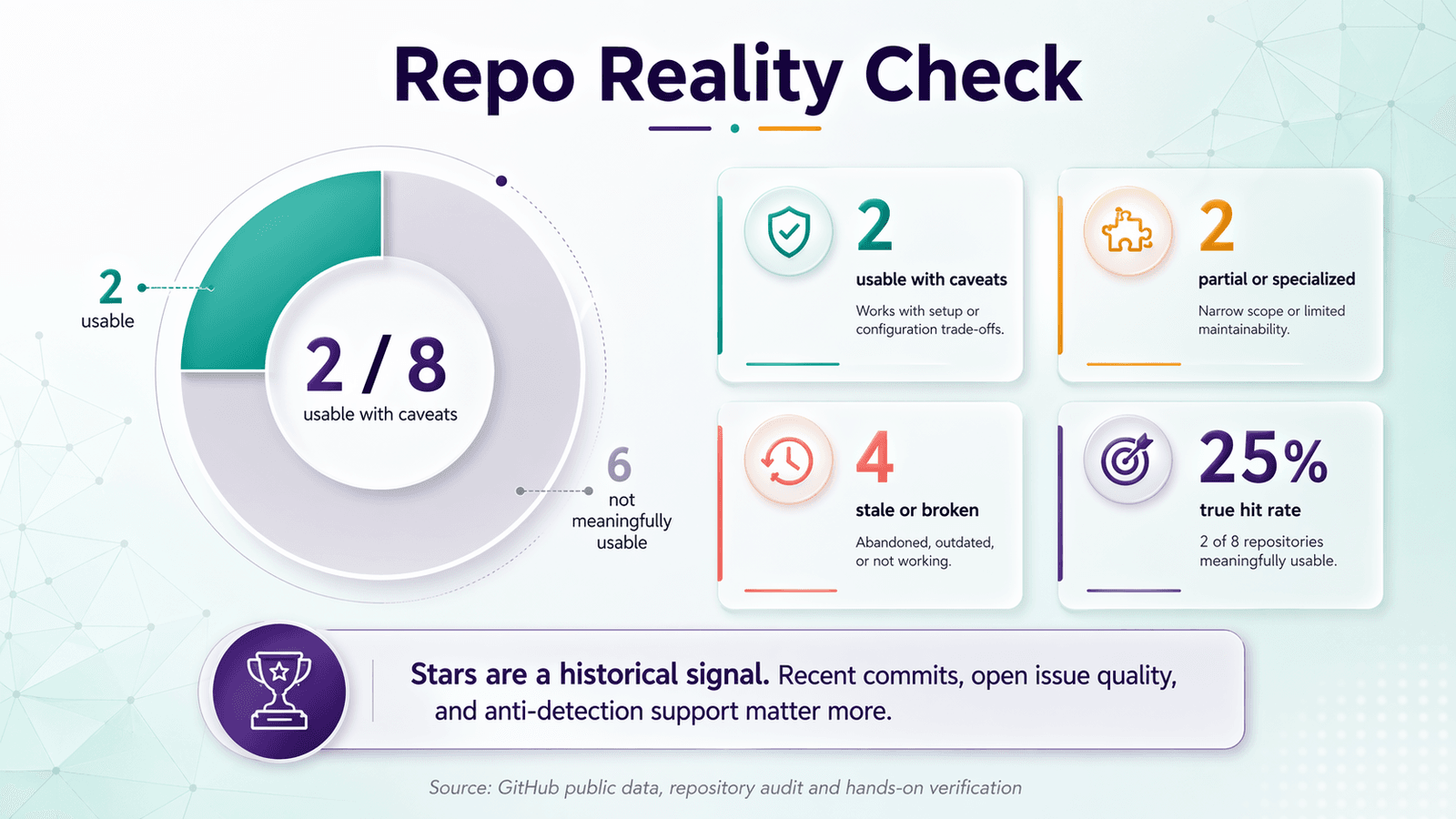

I applied this scorecard to eight of the most visible LinkedIn scraper repos on GitHub. The results were not encouraging.

| Repo | Stars | Last Commit | Working in 2026? | Main Scope | Key Notes |
|---|---|---|---|---|---|
| joeyism/linkedin_scraper | ~3,983 | Apr 2026 | ✅ With caveats | Profiles, companies, posts, jobs | Playwright-based rewrite with session reuse, but recent issues show security blocks and broken job search |
| python-scrapy-playbook/linkedin-python-scrapy-scraper | ~111 | Jan 2026 | ✅ For tutorials/public data | People, companies, jobs | ScrapeOps proxy integration; free plan allows 1,000 requests/month with 1 thread |
| spinlud/py-linkedin-jobs-scraper | ~472 | Mar 2025 | ⚠️ Jobs only | Jobs | Cookie support, experimental proxy mode; useful if you only need public job listings |
| madingess/EasyApplyBot | ~170 | Mar 2025 | ⚠️ Wrong tool | Easy Apply automation | Not a data scraper; it automates job applications |
| linkedtales/scrapedin | ~611 | May 2021 | ❌ | Profiles | README still says "working in 2020"; issues show pin verification and HTML changes |
| austinoboyle/scrape-linkedin-selenium | ~526 | Oct 2022 | ❌ | Profiles, companies | Once useful, now too stale for 2026 |
| eilonmore/linkedin-private-api | ~291 | Jul 2022 | ❌ | Profiles, jobs, companies, posts | Private API wrapper; undocumented endpoints shift unpredictably |
| nsandman/linkedin-api | ~154 | Jul 2019 | ❌ | Profiles, messaging, search | Historically interesting; documented rate limiting after ~900 requests/hour |

Only 2 of 8 repos looked meaningfully usable for a 2026 reader without heavy disclaimers. That ratio is not unusual — it's the norm for LinkedIn scraping on GitHub.

The Ban Prevention Playbook: Proxies, Rate Limits, and Account Safety

Account bans are the single biggest operational risk. Even technically competent scrapers fail here. The code works; the account doesn't. Users report getting flagged after as few as 40–80 profiles despite proxies and long delays.

Rate Limiting: What the Community Reports

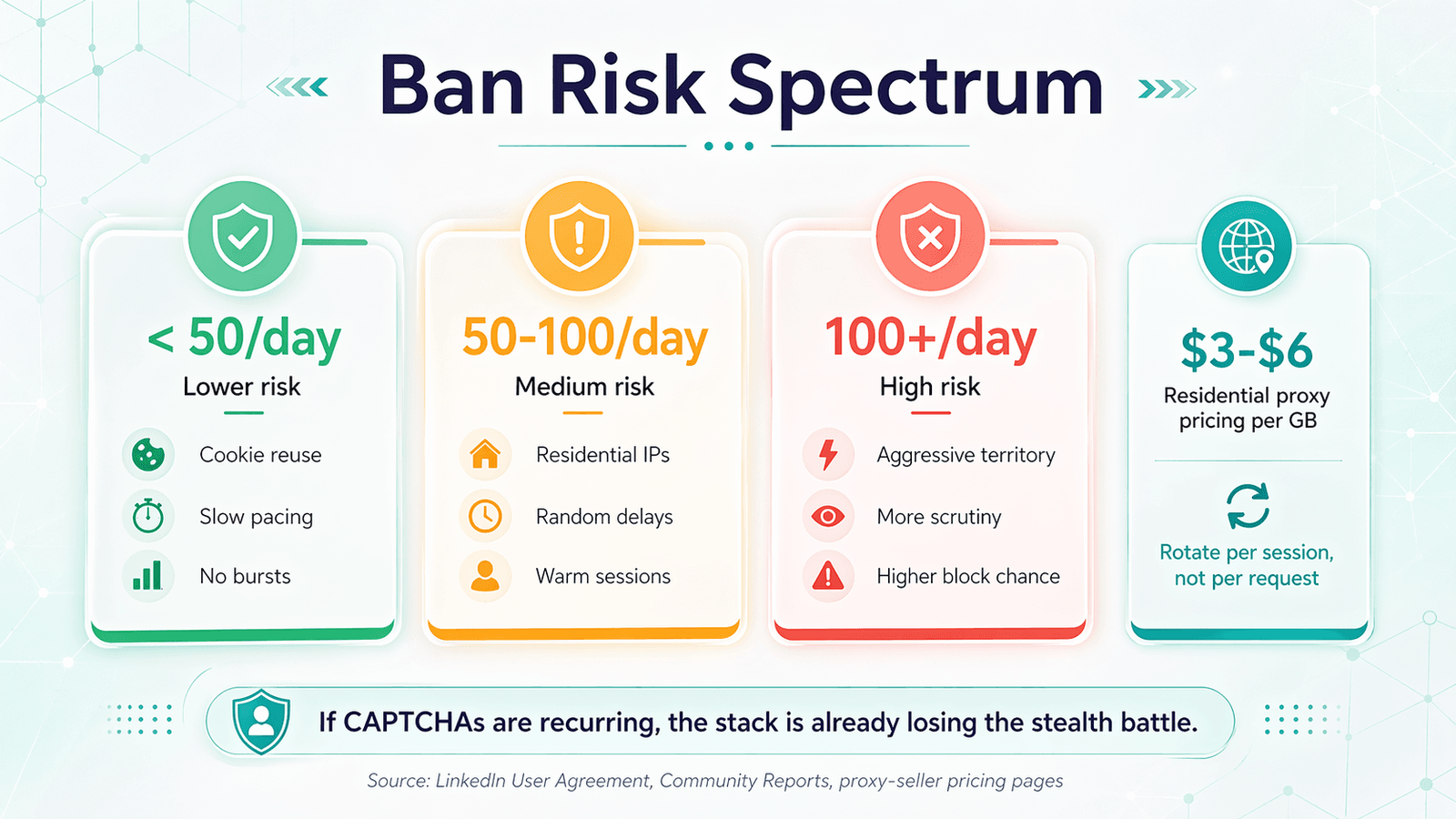

No guaranteed safe number exists. LinkedIn evaluates session age, click timing, burst patterns, IP reputation, and account behavior — not just raw volume. Community data clusters around these bands:

- One user reported detection after 40–80 profiles with proxies and 33-second pacing

- Another advised staying around 30 profiles/day/account

- A more aggressive operator claimed spread throughout the day

- nsandman/linkedin-api documented an internal rate-limit warning after about 900 requests in one hour

The practical synthesis: under 50 profile views/day/account is the lower-risk zone. 50–100/day is medium risk where session quality matters a lot. Above 100/day/account is increasingly aggressive territory.
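Those bands are easy to encode as a guard so a script cannot drift past them unnoticed. This is a minimal sketch, not a safety guarantee: `risk_band` and `DailyBudget` are hypothetical helpers, and the default cap of 30 is borrowed from the community advice quoted above rather than from any LinkedIn-documented limit.

```python
def risk_band(profiles_per_day: int) -> str:
    """Map a daily profile-view count to the rough risk bands described above."""
    if profiles_per_day < 0:
        raise ValueError("profiles_per_day must be non-negative")
    if profiles_per_day < 50:
        return "lower"
    if profiles_per_day <= 100:
        return "medium"
    return "aggressive"


class DailyBudget:
    """Refuses further profile views once a per-account daily cap is reached."""

    def __init__(self, cap: int = 30):  # assumed cap, taken from community reports
        self.cap = cap
        self.used = 0

    def try_consume(self) -> bool:
        """Return True and count one view, or False if the cap is exhausted."""
        if self.used >= self.cap:
            return False
        self.used += 1
        return True
```

A scraper loop would call `try_consume()` before each profile and stop for the day when it returns `False`. Resetting the counter at midnight and keeping one budget per account are left out for brevity.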

Proxy Strategy: Residential vs. Datacenter

Residential proxies remain the standard for LinkedIn because they resemble normal end-user traffic. Datacenter IPs are cheaper but get flagged faster on sophisticated sites — and LinkedIn is exactly the kind of sophisticated site where cheap traffic gets noticed.

Current pricing context:

- : $3.00–$4.00/GB depending on plan

- : $4.00–$6.00/GB depending on plan

Rotate per session, not per request. Per-request rotation creates a fingerprint that says "proxy infrastructure" louder than any single IP would.
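One simple way to get per-session stickiness is to derive the proxy from the session identifier, so every request in a session exits through the same IP. The sketch below assumes a static list of proxy URLs; the `example.invalid` hosts and the function name `proxy_for_session` are placeholders, and many commercial providers offer their own sticky-session mechanism that you would use instead.

```python
import hashlib


def proxy_for_session(session_id: str, proxies: list[str]) -> str:
    """Pick one proxy per session, stable across every request in that session."""
    if not proxies:
        raise ValueError("at least one proxy is required")
    # Hash the session id so the choice is deterministic but evenly spread.
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(proxies)
    return proxies[index]


# Placeholder proxy URLs, not real endpoints.
PROXIES = [
    "http://user:pass@proxy-a.example.invalid:8000",
    "http://user:pass@proxy-b.example.invalid:8000",
    "http://user:pass@proxy-c.example.invalid:8000",
]
```

Calling `proxy_for_session("account-1-monday", PROXIES)` returns the same entry every time for that session id, while a new session id may map to a different proxy.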

Burner Account Protocol

Community advice is blunt on this one: do not treat your main LinkedIn account as disposable scraping infrastructure.

If you insist on account-backed scraping:

- Use a separate account from your primary professional identity

- Complete the profile fully and let it behave like a human for days before scraping

- Never link your real phone number to scraping accounts

- Keep scraping sessions completely separate from real outreach and messaging

Worth noting: LinkedIn's User Agreement (effective November 3, 2025) explicitly prohibits false identities and account sharing. The burner-account tactic is operationally common but contractually messy.

Handling CAPTCHAs

A CAPTCHA isn't just an inconvenience. It's a signal that your session is already under scrutiny. Options include:

- Manual completion to continue a session

- Reusing cookies instead of re-running login flows

- Solver services (~$0.50–$1.00 per 1,000 image CAPTCHAs, ~$1.00–$2.99 per 1,000 reCAPTCHA v2 solves)

But if your workflow is routinely triggering CAPTCHAs, the economics of solver services are the least of your problems. Your stack is losing the stealth battle.

The Risk Spectrum

| Volume | Risk Level | Recommended Approach |
|---|---|---|
| < 50 profiles/day | Lower | Browser session or cookie reuse, slow pacing, no aggressive automation |
| 50–500 profiles/day | Medium to high | Residential proxies, warmed accounts, session reuse, randomized delays |
| 500+/day | Very high | Commercial APIs or maintained tooling with built-in anti-detection; public GitHub repos alone usually aren't enough |

The Open-Source Paradox: Why Popular LinkedIn Scraper GitHub Repos Break Faster

Users raise a fair concern: "Making an open-source version means LinkedIn can just look at what you're doing and prevent it." That worry is not paranoid. It's structurally correct.

The Visibility Problem

High star counts create two signals at once: trust for users and a target for LinkedIn's security team. The more popular a repo becomes, the more likely LinkedIn is to specifically counter its methods.

You can see this lifecycle in the audit data. linkedtales/scrapedin was notable enough to advertise that it worked with LinkedIn's "new website" in 2020. But the repo didn't keep pace with later verification and layout changes. nsandman/linkedin-api documented useful tricks once, but its last commit was years before the current anti-bot environment.

The Community Patch Advantage

Open source still has one real upside: active maintainers and contributors can patch quickly when LinkedIn changes defenses. joeyism/linkedin_scraper is the main example from this audit — it still throws off blocked-auth and broken-search issues, but it's at least moving. Forks often implement newer evasion techniques faster than the original repo.

What to Do About It

- Don't rely on a single public repo as permanent infrastructure

- Watch for active forks that implement updated evasion techniques

- Consider maintaining a private fork for production use (so your specific adaptations aren't public)

- Expect to change methods when LinkedIn changes detection or UI behavior

- Diversify approaches rather than betting everything on one tool

AI-Powered Extraction vs. CSS Selectors: A Practical Comparison

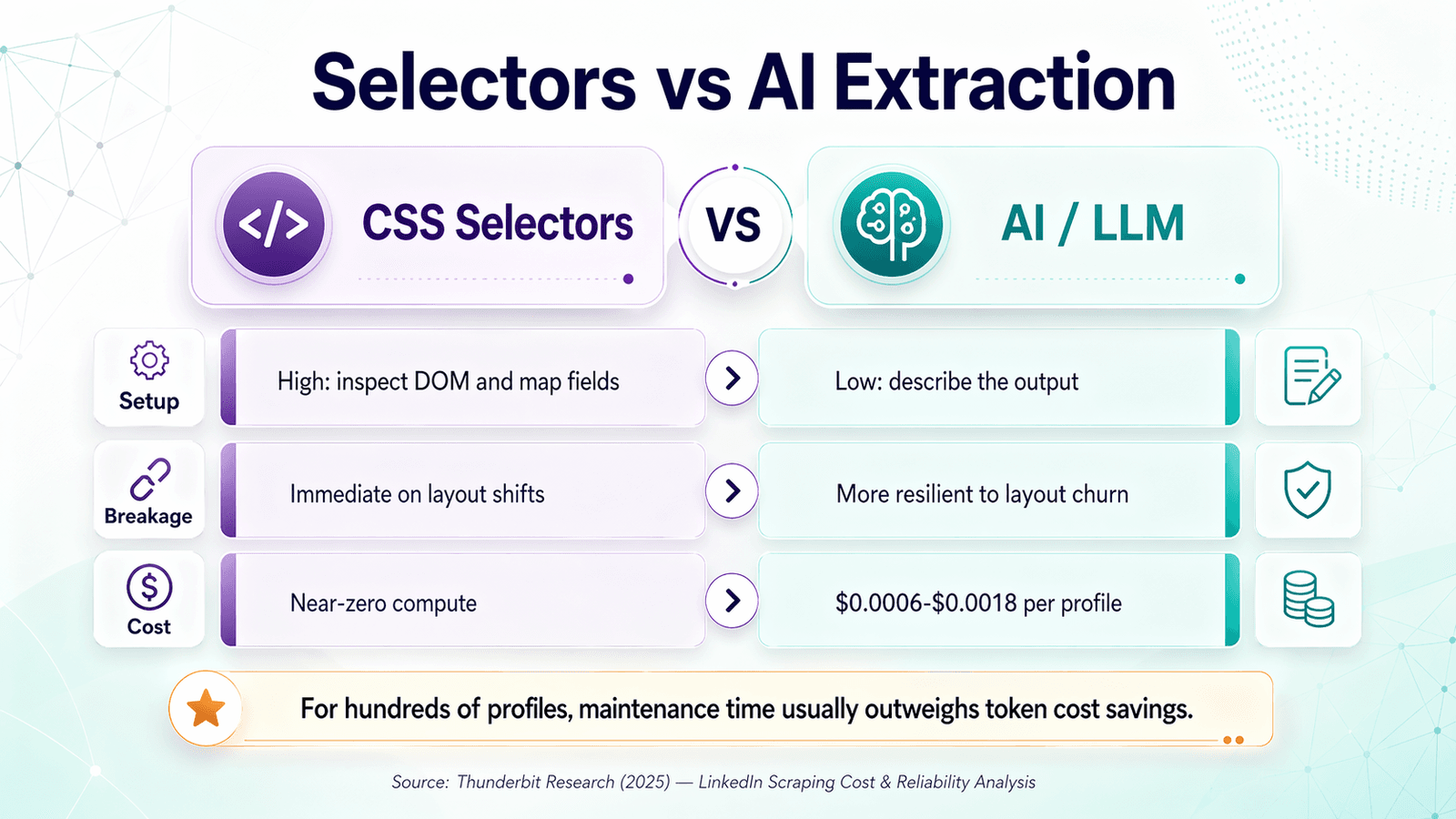

The more interesting technical split in 2026 isn't GitHub versus no-code. It's selector-based extraction versus semantic extraction — and the difference matters more than most roundups acknowledge.

How CSS Selectors Work (and Break)

Traditional scrapers inspect LinkedIn's DOM and map each field to a CSS selector or XPath expression. When the page structure is stable, the approach is excellent: high precision, low marginal cost, very fast parsing.

The failure mode is equally obvious. LinkedIn changes class names, nesting, lazy-loading behavior, or gates content behind different auth walls — and the scraper breaks immediately. The issue titles in the repo audit tell the story: "changed HTML," "broken job search," "missing values," "authwall blocks."
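The breakage is easy to reproduce on a toy page. The sketch below uses only Python's standard `html.parser` and looks up a field by class name; the class names `profile-name` and `pn-v2` are invented for illustration and are not LinkedIn's real markup. It is a simplified stand-in for what a selector library does, and it does not handle unclosed void tags such as `<br>` inside the matched element.

```python
from html.parser import HTMLParser


class ClassTextExtractor(HTMLParser):
    """Collects the text of the first element carrying a given CSS class."""

    def __init__(self, css_class: str):
        super().__init__()
        self.css_class = css_class
        self._depth = 0    # > 0 while inside the matched element
        self._parts = []
        self.text = None   # stays None if the class is never found

    def handle_starttag(self, tag, attrs):
        if self._depth:
            self._depth += 1
        elif self.text is None:
            classes = (dict(attrs).get("class") or "").split()
            if self.css_class in classes:
                self._depth = 1

    def handle_endtag(self, tag):
        if self._depth:
            self._depth -= 1
            if self._depth == 0:
                self.text = "".join(self._parts).strip()

    def handle_data(self, data):
        if self._depth:
            self._parts.append(data)


def select_text(html: str, css_class: str):
    """Return the text for css_class, or None when the selector no longer matches."""
    parser = ClassTextExtractor(css_class)
    parser.feed(html)
    parser.close()
    return parser.text


# Same visible content, but the second page renamed the class.
OLD_PAGE = '<div><h1 class="profile-name">Ada Example</h1></div>'
NEW_PAGE = '<div><h1 class="pn-v2">Ada Example</h1></div>'
```

`select_text(OLD_PAGE, "profile-name")` finds the name, while the same call on `NEW_PAGE` returns `None` even though a human reader sees an identical page. That silent `None` is what shows up in issue threads as "missing values."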

How AI/LLM Extraction Works

The newer pattern is simpler in concept: render the page, collect the visible text, ask a model to emit structured fields. That's the logic behind many no-code AI scrapers and some newer custom workflows.
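The render, collect, and ask steps can be sketched without committing to any particular model provider. In the example below, `call_model` is a placeholder for whatever LLM client you use (it takes a prompt string and returns the model's text reply), and `FIELDS`, `build_prompt`, and `extract_profile` are hypothetical names; no real API is invoked.

```python
import json
from typing import Callable

# Assumed target schema; adjust to the fields you actually need.
FIELDS = ("name", "headline", "company", "location")


def build_prompt(page_text: str) -> str:
    """Ask for a JSON object with exactly the fields we want."""
    return (
        "Extract the following fields from the profile text and reply with "
        f"a single JSON object using these keys: {', '.join(FIELDS)}. "
        "Use null for anything not present.\n\n"
        f"Profile text:\n{page_text}"
    )


def extract_profile(page_text: str, call_model: Callable[[str], str]) -> dict:
    """Send visible page text to a model and keep only the expected keys."""
    raw = call_model(build_prompt(page_text))
    data = json.loads(raw)  # raises ValueError if the reply is not valid JSON
    if not isinstance(data, dict):
        raise ValueError("model reply was not a JSON object")
    return {field: data.get(field) for field in FIELDS}
```

Note that the code never references a class name or XPath, which is why this pattern tolerates layout changes. The trade-off is that the reply has to be validated, since a model can return malformed JSON or misread a field.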

At current GPT-4o mini pricing ($0.15/1M input tokens, $0.60/1M output tokens), a text-only extraction pass typically costs $0.0006–$0.0018 per profile. That's small enough to be irrelevant for medium-volume workflows.
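The per-profile figure is plain token arithmetic. In the sketch below the prices are the ones quoted above, while the token counts are assumptions chosen to show how a light and a heavy profile page could land at the two ends of that range; real pages will vary.

```python
INPUT_PRICE_PER_MILLION = 0.15   # USD per 1M input tokens, as quoted above
OUTPUT_PRICE_PER_MILLION = 0.60  # USD per 1M output tokens, as quoted above


def cost_per_profile(input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one extraction pass."""
    total = (input_tokens * INPUT_PRICE_PER_MILLION
             + output_tokens * OUTPUT_PRICE_PER_MILLION)
    return total / 1_000_000


# Assumed token counts, not measurements.
light = cost_per_profile(input_tokens=3_000, output_tokens=250)
heavy = cost_per_profile(input_tokens=10_000, output_tokens=500)
print(f"light page: ${light:.4f}, heavy page: ${heavy:.4f}")
```

At those assumed sizes, 1,000 profiles cost somewhere between roughly $0.60 and $1.80 in model tokens, which is why developer time usually dominates the comparison.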

Head-to-Head Comparison

| Dimension | CSS Selector / XPath | AI/LLM Extraction |
|---|---|---|
| Setup effort | High: inspect DOM, write selectors per field | Low: describe desired output in natural language |
| Breakage on layout changes | Breaks immediately | Adapts automatically (reads semantically) |
| Accuracy on structured fields | ~99% when selectors are correct | ~95–98% (occasional LLM interpretation errors) |
| Handling unstructured/variable data | Weak without custom logic | Strong: AI interprets context |
| Cost per profile | Near zero (compute only) | ~$0.001–$0.002 (API token cost) |
| Labeling/categorization | Requires separate post-processing | Can categorize, translate, label in one pass |
| Maintenance burden | Ongoing selector fixes | Near-zero |

Which Should You Choose?

For very high-volume, stable, engineering-owned pipelines, selector-based parsing can still win on cost. For most small and mid-market users scraping hundreds (not millions) of profiles, AI extraction is the better long-term investment because LinkedIn's layout changes cost more in developer time than the model tokens you save.

When GitHub Repos Are Overkill: The No-Code Path

Most people searching "linkedin scraper github" don't want to become browser-automation maintainers.

They want rows in a table.

Users explicitly complain about GitHub scraper usability in issue threads: "It does not handle 2FA and it is not easy to use since there is no UI." The audience includes recruiters, SDRs, and ops managers — not just Python developers.

The Build vs. Buy Decision

| Factor | GitHub Repo | No-Code Tool (e.g., Thunderbit) |
|---|---|---|
| Setup time | 30 min–2+ hours (Python, dependencies, proxies) | Under 2 minutes (install extension, click) |
| Maintenance | You fix it when LinkedIn changes | Tool provider handles updates |
| Anti-detection | You configure proxies, delays, sessions | Built into the tool |
| Data structuring | You write parsing logic | AI suggests fields automatically |
| Export options | You build export pipeline | One-click to Excel, Google Sheets, Airtable, Notion |
| Cost | Free repo + proxy costs + your time | Free tier available; credit-based for volume |

How Thunderbit Handles LinkedIn Scraping Without Code

Thunderbit approaches the problem differently from GitHub repos. Instead of writing selectors or configuring browser automation, you:

- Install the Thunderbit extension

- Navigate to any LinkedIn page (search results, profile, company page)

- Click "AI Suggest Fields" — Thunderbit's AI reads the page and proposes structured columns (name, title, company, location, etc.)

- Adjust columns if needed, then click to extract

- Export directly to Excel, Google Sheets, Airtable, or Notion

Because Thunderbit uses AI to read the page semantically each time, it doesn't break when LinkedIn changes its DOM. That's the same advantage as the GPT-integrated approach in custom Python scripts, but packaged in a no-code extension rather than a codebase you maintain.

Clicking into individual profiles from a search results list to enrich your data table is something Thunderbit handles automatically. Browser mode works for login-required pages without separate proxy configuration.

Who Should Still Use a GitHub Repo?

GitHub repos still make sense for:

- Developers who need deep customization or unusual data types

- Teams scraping at very high volume where per-credit costs matter

- Users who need to run scraping in CI/CD pipelines or on servers

- People building LinkedIn data into larger automated workflows

For everyone else, especially sales, recruiting, and ops teams, the no-code path eliminates the entire setup-and-maintain cycle.

Step-by-Step: How to Evaluate and Use a LinkedIn Scraper from GitHub

If you've decided GitHub is the right path, here's a staged workflow that minimizes wasted time and account risk.

Step 1: Search and Shortlist Repos

Search GitHub for "linkedin scraper" and filter by:

- Recently updated (last 6 months)

- Language matching your stack (Python is most common)

- Scope matching your actual need (profiles vs. jobs vs. companies)

Shortlist 3–5 repos that look alive.

Step 2: Apply the Repo Health Scorecard

Run each repo through the scorecard from earlier. Eliminate anything with:

- No commits in the past year

- Unresolved "blocked" or "CAPTCHA" issues

- Password-only authentication

- No mention of sessions, cookies, or proxies

Step 3: Set Up Your Environment

Common setup commands from the repos in this audit:

    pip install linkedin-scraper
    playwright install chromium
    pip install linkedin-jobs-scraper
    LI_AT_COOKIE=<cookie> python your_app.py
    scrapy crawl linkedin_people_profile

The recurring friction points:

- Missing session.json files

- Browser driver version mismatches (Chromium/Playwright)

- Cookie extraction from browser DevTools

- Proxy auth timeouts

Step 4: Run a Small Test Scrape

Start with 10–20 profiles. Check:

- Are fields correctly parsed?

- Is data complete?

- Did you hit any security checkpoints?

- Is the output format usable or raw JSON noise?
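The "is data complete?" check is quick to automate once the test scrape has produced a list of records. The helper below is a hypothetical sketch that assumes each record is a dict and treats `None` and empty strings as missing.

```python
def completeness(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records (0.0 to 1.0) with a non-empty value for each field."""
    total = len(records)
    report = {}
    for field in fields:
        filled = sum(1 for record in records if record.get(field) not in (None, ""))
        report[field] = filled / total if total else 0.0
    return report


# Made-up sample output from a small test scrape.
sample = [
    {"name": "Ada Example", "headline": "Engineer", "location": ""},
    {"name": "Grace Example", "headline": None, "location": "Paris"},
]
print(completeness(sample, ["name", "headline", "location"]))
```

A field that drops well below the others on a 10–20 profile sample is usually a broken selector or an auth wall rather than genuinely missing data, so it is worth inspecting before scaling up.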

Step 5: Scale Carefully

Add randomized delays (5–15 seconds between requests), lower concurrency, session reuse, and residential proxies. Do not jump to hundreds of profiles/day on a fresh account.
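Randomized pacing can be wrapped around any sequence of work items. The generator below is a minimal sketch: `paced` is a hypothetical helper, the 5–15 second bounds come from the paragraph above, and the `sleep` and `rng` parameters exist only so the delay can be swapped out in tests.

```python
import random
import time
from typing import Callable, Iterable, Iterator, TypeVar

T = TypeVar("T")


def paced(
    items: Iterable[T],
    min_delay: float = 5.0,
    max_delay: float = 15.0,
    sleep: Callable[[float], None] = time.sleep,
    rng: Callable[[float, float], float] = random.uniform,
) -> Iterator[T]:
    """Yield items one by one, pausing a random interval between them."""
    for index, item in enumerate(items):
        if index:  # no pause before the first item
            sleep(rng(min_delay, max_delay))
        yield item
```

In use, `for url in paced(profile_urls): ...` visits each URL with a different gap every time. Fixed intervals are easier to fingerprint than jittered ones, though pacing alone does not make a session look human.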

Step 6: Export and Structure Your Data

Most GitHub repos output raw JSON or CSV. You'll still need to:

- Deduplicate records

- Normalize titles and company names

- Map fields into your CRM or ATS

- Document data provenance for compliance
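Deduplication and light normalization can be handled in a few lines before anything reaches a CRM. The sketch below assumes each record is a dict with `profile_url` and `company` keys, which are hypothetical field names; it treats URLs that differ only in tracking parameters, trailing slashes, or host casing as the same profile.

```python
from urllib.parse import urlsplit


def canonical_profile_url(url: str) -> str:
    """Drop query string, fragment, and trailing slash; lowercase the host."""
    parts = urlsplit(url.strip())
    return f"{parts.scheme}://{parts.netloc.lower()}{parts.path.rstrip('/')}"


def dedupe_profiles(records: list[dict]) -> list[dict]:
    """Keep the first record per canonical profile URL, with tidy whitespace."""
    seen: dict[str, dict] = {}
    for record in records:
        key = canonical_profile_url(record["profile_url"])
        cleaned = {
            **record,
            "profile_url": key,
            # Collapse repeated whitespace in the company name.
            "company": " ".join((record.get("company") or "").split()),
        }
        seen.setdefault(key, cleaned)
    return list(seen.values())
```

Title and company normalization beyond whitespace, such as mapping "Sr. SWE" to "Senior Software Engineer", depends on your own taxonomy and is deliberately left out.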

(Thunderbit handles structuring and export automatically if you'd rather skip this step.)

LinkedIn Scraper GitHub vs. No-Code Tools: The Full Comparison

| Dimension | GitHub Repo (CSS Selectors) | GitHub Repo (AI/LLM) | No-Code Tool (Thunderbit) |
|---|---|---|---|
| Setup time | 1–2+ hours | 1–3+ hours (+ API key) | Under 2 minutes |
| Technical skill | High (Python, CLI) | High (Python + LLM APIs) | None |
| Maintenance | High (selectors break) | Medium (LLM adapts, code still needs updates) | None (provider maintains) |
| Anti-detection | DIY (proxies, delays) | DIY | Built-in |
| Accuracy | High when working | High with occasional LLM errors | High (AI-powered) |
| Cost | Free + proxy costs + your time | Free + LLM API costs + proxy costs | Free tier; credit-based for volume |
| Export | DIY (JSON, CSV) | DIY | Excel, Sheets, Airtable, Notion |
| Best for | Developers, custom pipelines | Developers wanting lower maintenance | Sales, recruiting, ops teams |

Legal and Ethical Considerations

I'll keep this section short, but it can't be skipped.

LinkedIn's User Agreement (effective November 3, 2025) explicitly prohibits using software, scripts, robots, crawlers, or browser plugins to scrape the service. LinkedIn has backed this with enforcement:

- : LinkedIn announced legal action against Proxycurl

- : LinkedIn said that case was resolved

- : Law360 reported LinkedIn sued additional defendants over industrial-scale scraping

The hiQ v. LinkedIn line of cases created some nuance around public data access, but favored LinkedIn on breach-of-contract theories. "Publicly visible" does not mean "clearly safe to scrape at scale for commercial reuse."

For EU-linked workflows, . The by the French data authority is a concrete example of regulators treating scraped LinkedIn data as personal data subject to data protection rules.

Using a maintained tool like Thunderbit doesn't change your legal obligations. But it does reduce the risk of accidentally triggering security responses or violating rate limits in ways that attract LinkedIn's attention.

What Works and What Doesn't in 2026

What Works

- Applying the Repo Health Scorecard before committing to any repo

- Cookie/session reuse instead of repeated automated login

- Residential proxies when you must run account-backed scraping

- Smaller, slower, human-like scraping workflows

- AI-assisted extraction when you value adaptability over marginal token cost

- No-code tools when the real need is spreadsheet output, not scraper ownership

- Diversifying approaches rather than betting on a single public repo

What Doesn't Work

- Cloning high-star repos without checking maintenance status or recent issues

- Using datacenter proxies or free proxy lists for LinkedIn

- Scaling to hundreds of profiles/day without rate limits or anti-detection

- Relying on CSS selectors long-term without a maintenance plan

- Treating your real LinkedIn account as disposable infrastructure

- Confusing "publicly accessible" with "contractually or legally unproblematic"

FAQs

Do LinkedIn scraper GitHub repos still work in 2026?

Some do, but only a small subset. In this audit of eight visible repos, only two looked meaningfully usable for a 2026 reader without heavy disclaimers. The key is to evaluate repos by maintenance activity and issue health, not star counts. Use the Repo Health Scorecard before investing setup time in any project.

How many LinkedIn profiles can I scrape per day without getting banned?

There's no guaranteed safe number because LinkedIn evaluates session behavior, not just volume. Community reports suggest under 50 profiles/day/account is the lower-risk zone, 50–100/day is medium risk where infrastructure quality matters, and above 100/day becomes increasingly aggressive. Randomized delays of 5–15 seconds and residential proxies help, but nothing eliminates the risk entirely.

Is there a no-code alternative to LinkedIn scraper GitHub projects?

Yes. Thunderbit lets you scrape LinkedIn pages in a few clicks with AI-powered field detection, browser-based auth (no proxy configuration needed), and one-click export to Excel, Google Sheets, Airtable, or Notion. It's designed for sales, recruiting, and ops teams who want data without maintaining code. You can try it on the free tier.

Is scraping LinkedIn data legal?

It's a gray area with increasingly sharp edges. LinkedIn's User Agreement explicitly prohibits scraping, and LinkedIn has pursued legal action against scrapers in . The hiQ v. LinkedIn precedent on public data access has been narrowed by more recent rulings. GDPR applies to personal data of EU residents regardless of how it's collected. For any commercial use case, get legal counsel specific to your situation.

AI extraction or CSS selectors — which should I use for LinkedIn scraping?

CSS selectors are faster and cheaper per record when they're working, but they create a maintenance treadmill because LinkedIn changes its DOM regularly. AI/LLM extraction costs slightly more per profile (~$0.001–$0.002 at current GPT-4o mini pricing) but adapts to layout changes automatically. For most non-enterprise users scraping hundreds rather than millions of profiles, AI extraction is the better long-term investment. Thunderbit's built-in AI engine offers this advantage without requiring you to write or maintain any code.
