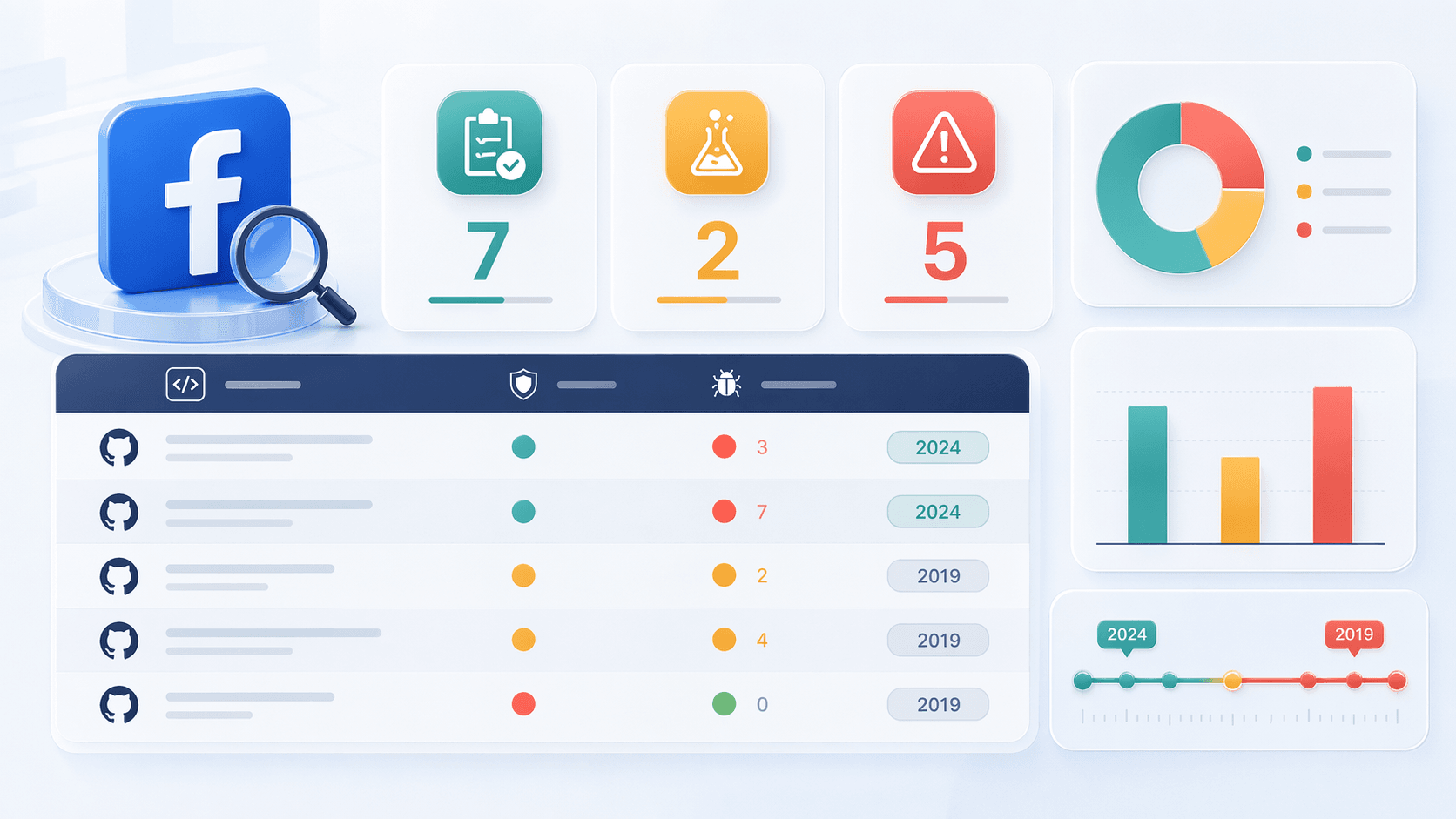

A GitHub search for "facebook scraper" returns . Only have been pushed in the last six months.

That gap between "available" and "actually works" is the whole story of Facebook scraping on GitHub in 2026.

I've spent a lot of time digging through repo issue tabs, Reddit complaints, and actual output from these tools. The pattern is consistent: most top-starred projects are quietly broken, maintainers have moved on, and Facebook's anti-scraping defenses keep getting sharper. Developers and business users keep landing on the same search results, installing the same repos, and running into the same empty output. This article is a 2026 reality check — an honest audit of which repos still deserve your time, what Facebook is doing to break them, and when you should skip GitHub entirely.

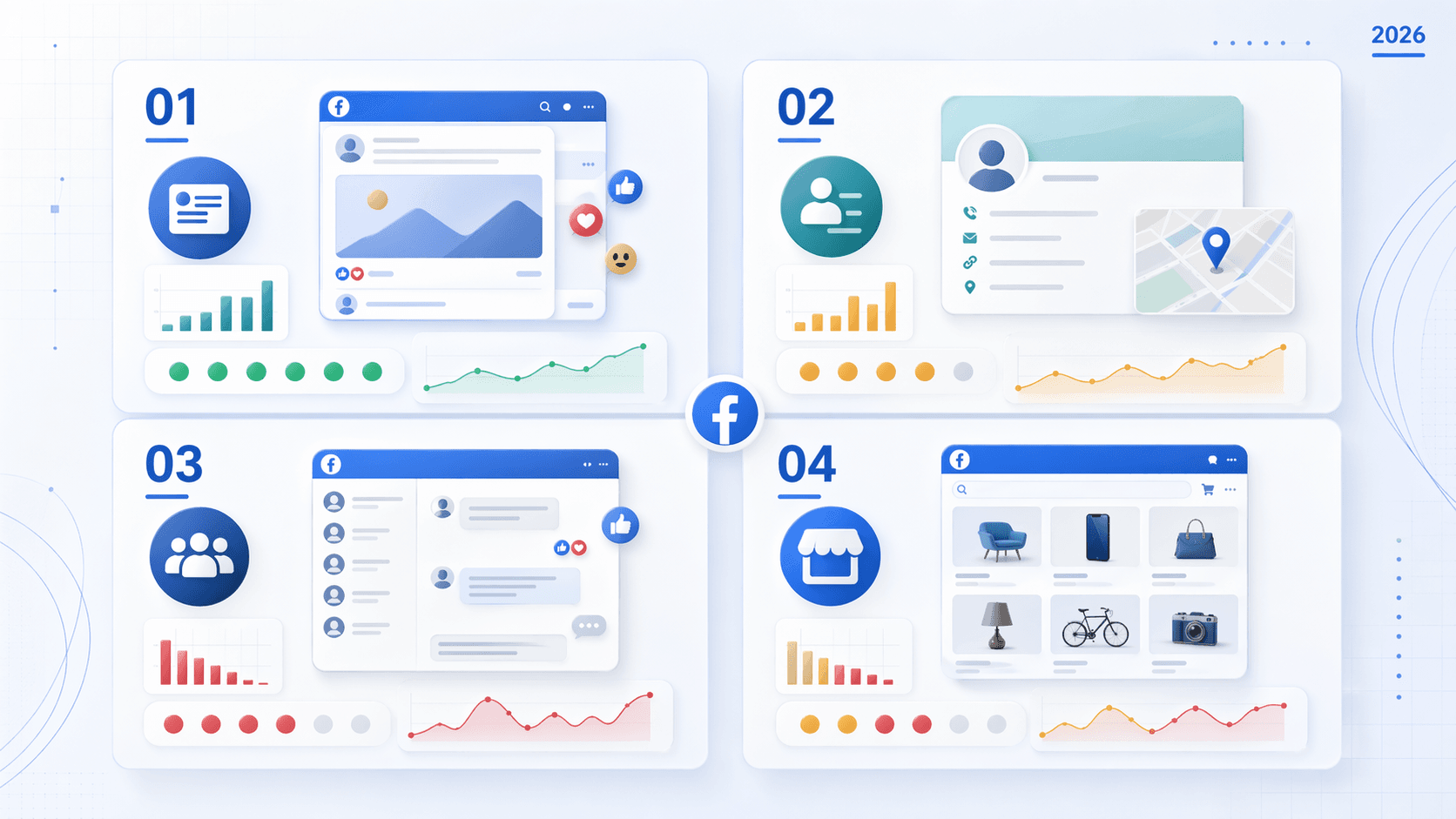

Why People Search for a Facebook Scraper on GitHub

The use cases behind this search are the same ones that have existed for years — even if the tools keep falling apart:

- Lead generation: Extracting business page contact info (emails, phone numbers, addresses) for outreach

- Marketplace monitoring: Tracking product listings, prices, and seller info for ecommerce or arbitrage

- Group research: Archiving posts and comments for market research, OSINT, or community management

- Content and post archiving: Saving public page posts, reactions, images, and timestamps

- Events aggregation: Pulling event titles, dates, locations, and organizers

GitHub's appeal is obvious: visible code, zero cost, community maintenance (theoretically), and full control over fields and pipelines.

The problem is that stars and forks don't correlate with "currently functional." Among the top 10 exact-phrase repos by stars, as of April 2026. That's not a fluke — it's the norm.

One Reddit user in a put it plainly after six months of trying: it was "impossible without either paying for an external data scraping application" or using Python plus JS rendering plus significant computation power. Another, in an , summarized it as: "Facebook is one of the harder ones to scrape because they aggressively block automation" and browser automation is "fragile since Facebook changes their DOM constantly."

The use cases are real. The demand is real. The frustration is very real. The rest of this article is about navigating that gap.

What Is a Facebook Scraper GitHub Repo, Exactly?

A "Facebook scraper" on GitHub is an open-source script — usually Python — that programmatically extracts public data from Facebook pages, posts, groups, Marketplace, or profiles. Not all of them work the same way. Three architectures dominate:

Browser-Automation Scrapers vs. API Wrappers vs. Direct HTTP Scrapers

| Approach | Typical stack | Strength | Weakness |
|---|---|---|---|
| Browser automation | Selenium, Playwright, Puppeteer | Can handle login walls, mimics real user behavior | Slow, resource-heavy, easy to fingerprint if not configured carefully |
| Official API wrapper | Meta Graph API / Pages API | Stable, documented, compliant when approved | Severely restricted: most public post/group data is no longer available |
| Direct HTTP scraper | requests, HTML parsing, undocumented endpoints | Fast and lightweight when it works | Breaks whenever Facebook changes page structure or anti-bot measures |

is the classic direct-HTTP example: it scrapes public pages "without an API key" using direct requests and parsing. is a browser-automation example. represents the old Graph API era, where scripts could pull page/group posts through official endpoints that are no longer broadly available.

Typical target data across these repos includes post text, timestamps, reaction/comment counts, image URLs, page metadata (category, phone, email, follower count), Marketplace listing fields, and group or event metadata.

In 2026, the real tradeoff isn't language preference. It's which kind of failure you can tolerate.

The 2026 Facebook Scraper GitHub Freshness Audit: Which Repos Actually Work?

I audited the most-starred and most-recommended Facebook scraper repos on GitHub against real 2026 data — not README claims, but actual commit dates, issue queues, and community reports. This is the section that matters most.

The Full Freshness Audit Table

| Repo | Stars | Last Push | Open Issues | Language / Runtime | What It Still Scrapes | Status |
|---|---|---|---|---|---|---|
| kevinzg/facebook-scraper | 3,157 | 2024-06-22 | 438 | Python ^3.6 | Limited public page posts, some comments/images, page metadata | ⚠️ Partially broken / stale |
| moda20/facebook-scraper | 110 | 2024-06-14 | 29 | Python ^3.6 | Same as kevinzg + Marketplace helper methods | ⚠️ Partially broken / stale fork |
| minimaxir/facebook-page-post-scraper | 2,128 | 2019-05-23 | 53 | Python 2/3 era, Graph API dependent | Historical reference only | ❌ Abandoned |
| apurvmishra99/facebook-scraper-selenium | 232 | 2020-06-28 | 7 | Python + Selenium | Browser automation for page scraping | ❌ Abandoned |
| passivebot/facebook-marketplace-scraper | 375 | 2024-04-29 | 3 | Python 3.x + Playwright 1.40 | Marketplace listings via browser automation | ⚠️ Fragile / niche |
| Mhmd-Hisham/selenium_facebook_scraper | 37 | 2022-11-29 | 1 | Python + Selenium | General Selenium scraping | ❌ Abandoned |
| anabastos/faceteer | 20 | 2023-07-11 | 5 | JavaScript | Automation-oriented | ❌ Risky / low proof |

A few things jump out:

- Even the "active fork" (moda20) hasn't been pushed since June 2024.

- Issue queues tell the real story faster than READMEs.

- Both kevinzg and moda20 still declare Python ^3.6 in their files — a signal that the dependency baseline hasn't been modernized.

kevinzg/facebook-scraper

The best-known Python Facebook scraper on GitHub. Its describes page scraping, group scraping, login via credentials or cookies, and post-level fields like comments, image, images, likes, post_id, post_text, text, and time.

The operational signal, though, is weak:

- Last push: June 22, 2024

- Open issues: — including titles like "Example Scrape does not return any posts"

- The maintainer has not responded to recent issues

Verdict: Partially broken. Still has value for low-volume public page experiments and as a field-name reference, but not reliable for production use.

moda20/facebook-scraper (Community Fork)

The most visible fork of kevinzg, with added options and Marketplace-oriented helpers like extract_listing (documented in its ).

The makes the breakage story explicit:

- "mbasic is gone"

- "CLI 'Couldn't get any posts.'"

- "https://mbasic.facebook.com is no longer working"

When the simplified mbasic frontend changes or disappears, a whole class of scrapers degrades at once.

Verdict: The most notable fork, but also stale and fragile in 2026. Worth trying first if you insist on a GitHub-based solution, but don't expect stability.

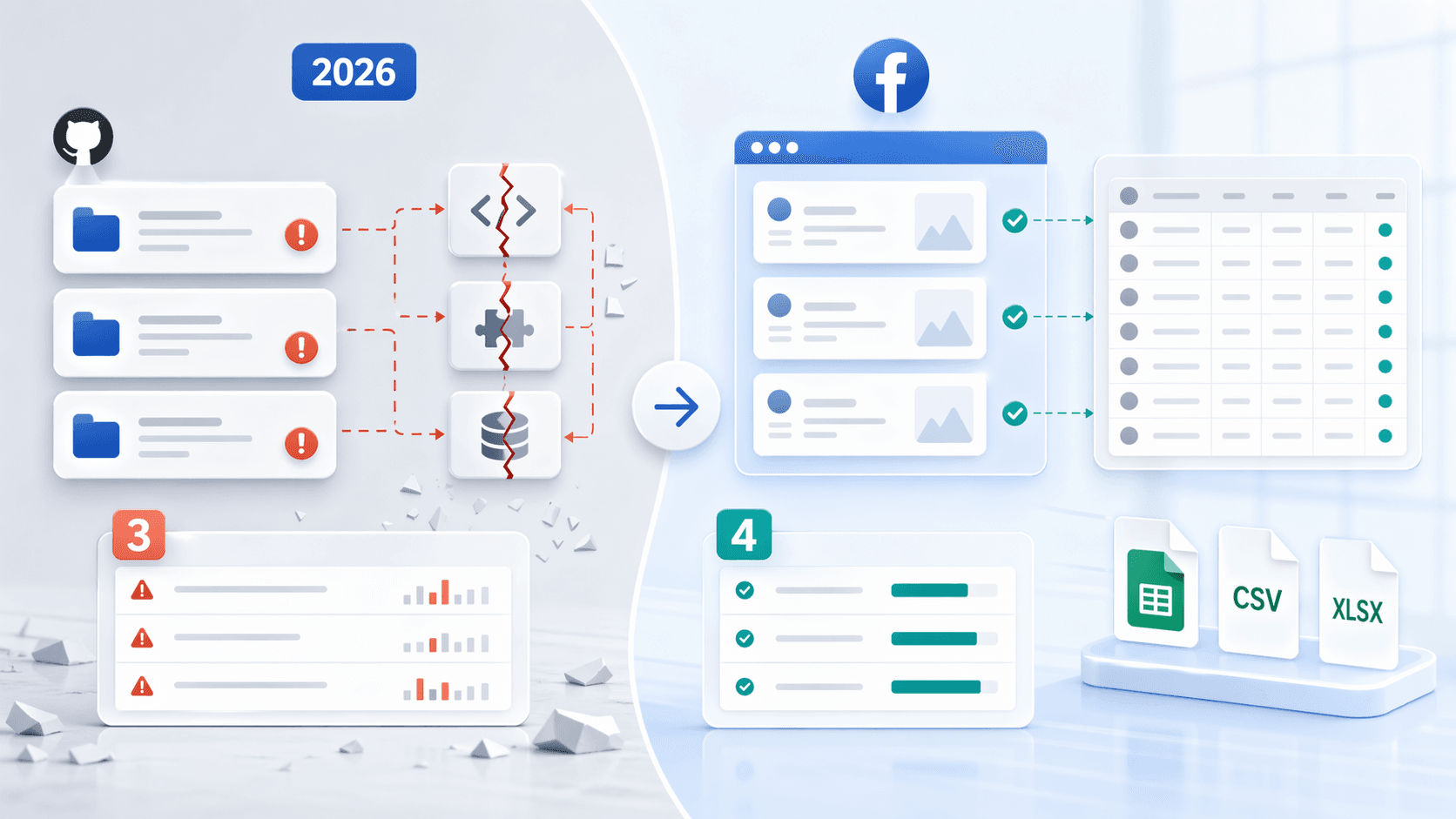

minimaxir/facebook-page-post-scraper

Once a very practical Graph API tool for gathering posts, reactions, comments, and metadata from public Pages and open Groups into CSVs. Its still explains how to use a Facebook app's App ID and App Secret.

In 2026, it's a historical artifact:

- Last push: May 23, 2019

- Open issues: 53 — including "HTTP 400 Error Bad Request" and "No data retrieved!!"

Verdict: Abandoned. Tightly coupled to an API permission model Meta has since narrowed substantially.

Other Notable Repos

- passivebot/facebook-marketplace-scraper: Useful for Marketplace use cases, but its includes "login to view the content," "CSS selectors outdated," and "Getting blocked." A one-line case study of what breaks on Marketplace scraping.

- apurvmishra99/facebook-scraper-selenium: Has one issue literally asking from September 2020. That tells you almost everything.

- Mhmd-Hisham/selenium_facebook_scraper and anabastos/faceteer: Neither has enough current activity to justify confidence.

Facebook's Anti-Scraping Defenses: What Every GitHub Scraper Is Up Against

Most articles on this topic offer vague "check the ToS" disclaimers. That's not useful.

Facebook has one of the most aggressive anti-scraping systems of any major platform. Understanding the specific defense layers is the difference between a working scraper and an afternoon of empty output.

Meta's own describes an "Anti Scraping team" that uses static analysis across its codebase to identify scraping vectors, sends cease-and-desist letters, disables accounts, and relies on rate-limiting systems. That's not a hypothetical — it's an organizational commitment.

Randomized DOM and CSS Class Names

Facebook deliberately randomizes HTML element IDs, class names, and page structure. As one put it: "No normal scraper can work on Facebook. The HTML mutates between refreshes."

What breaks: XPath and CSS selectors that worked last week return nothing today.

Countermeasure: Use text-based or attribute-based selectors when possible. AI-based parsing that reads page content rather than relying on rigid selectors handles this better. Expect selector maintenance as a recurring cost.
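As a rough illustration of attribute-based anchoring, here is a minimal sketch using only Python's standard library. It assumes posts are wrapped in an element carrying a stable semantic attribute (`role="article"` is used here purely as an example), and the sample HTML and class names are invented for the demo, not taken from Facebook.

```python
from html.parser import HTMLParser

# Tags that never have a closing tag, so they must not affect nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img",
             "input", "link", "meta", "source", "track", "wbr"}


class AttributeAnchorParser(HTMLParser):
    """Collect the text inside elements matched by an attribute, ignoring class names."""

    def __init__(self, attr, value):
        super().__init__()
        self.attr, self.value = attr, value
        self.depth = 0      # > 0 while inside a matching element
        self.chunks = []    # text pieces of the current match
        self.results = []   # finished matches

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.depth:
            self.depth += 1
        elif dict(attrs).get(self.attr) == self.value:
            self.depth = 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self.depth:
            return
        self.depth -= 1
        if self.depth == 0:
            self.results.append(" ".join(self.chunks))
            self.chunks = []

    def handle_data(self, data):
        if self.depth and data.strip():
            self.chunks.append(data.strip())


def extract_by_attribute(html, attr, value):
    parser = AttributeAnchorParser(attr, value)
    parser.feed(html)
    return parser.results


# Invented sample: class names are random, the role attribute is stable.
SAMPLE = """
<div class="x1a2b3c"><div role="article"><span class="q9z">Grand opening</span><br><b>Saturday</b></div></div>
<div class="zz91"><div role="article">Second post</div></div>
"""

print(extract_by_attribute(SAMPLE, "role", "article"))
```

The point of the design is that nothing in the matching logic depends on class names, so a class-name reshuffle alone would not break it. A change to the anchoring attribute still would, which is why selector maintenance remains a recurring cost.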

Login Walls and Session Management

Many Facebook surfaces — profiles, groups, some Marketplace listings — require login to view. Headless browsers get redirected or served stripped-down HTML. The passivebot Marketplace scraper's has "login to view the content" as a top complaint.

What breaks: Anonymous requests miss content or redirect entirely.

Countermeasure: Use session cookies from a real browser session, or browser-based scraping tools that operate within your logged-in session. Rotating accounts is possible but risky.
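One way to reuse a browser session is to copy the `Cookie` header from your own logged-in browser and attach it to requests. The sketch below shows only the plumbing, with the standard library. The cookie names and values, the URL, and the User-Agent string are placeholders, and the helper names are hypothetical.

```python
import urllib.request


def parse_cookie_header(raw):
    """Split a 'name=value; name2=value2' string into a dict, skipping malformed parts."""
    cookies = {}
    for part in raw.split(";"):
        name, sep, value = part.strip().partition("=")
        if sep and name:
            cookies[name] = value
    return cookies


def build_request(url, cookies, user_agent):
    """Attach session cookies and a browser-like User-Agent to a request object."""
    header = "; ".join(f"{name}={value}" for name, value in cookies.items())
    return urllib.request.Request(
        url, headers={"Cookie": header, "User-Agent": user_agent}
    )


# Placeholder values: copy the real header from your own browser's dev tools.
cookies = parse_cookie_header("session_a=100; session_b=abc%3Adef; malformed")
request = build_request(
    "https://www.facebook.com/some-public-page",  # placeholder URL
    cookies,
    "Mozilla/5.0 (placeholder user agent)",
)
print(request.get_header("Cookie"))
```

Nothing is sent until the request is passed to `urllib.request.urlopen`. Treat the cookie string like a password: it grants access to the account it came from.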

Digital Fingerprinting

Meta's engineering post says unauthorized scrapers — which is effectively a statement that browser-quality and behavior-quality are central to detection. Community discussions in and continue to recommend anti-detect browsers and consistent fingerprints.

What breaks: Standard off-the-shelf Selenium or Puppeteer setups are easily identified.

Countermeasure: Use tools like undetected-chromedriver or anti-detect browser profiles. Realistic sessions and consistent fingerprints matter more than simple user-agent spoofing.

IP-Based Rate Limiting and Blocking

Meta's engineering post explicitly discusses rate limiting as part of the defense strategy, including capping follower-list counts to force more requests that then . In practice, users report getting rate-limited after posting to .

What breaks: Bulk requests from the same IP get throttled or blocked within minutes. Datacenter proxy IPs are often pre-blocked.

Countermeasure: Residential proxy rotation (not datacenter proxies), with sensible request pacing.
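"Proxy rotation with sensible pacing" can be sketched as a schedule generator: each URL gets the next proxy in round-robin order plus a randomized delay. The proxy addresses, the default delay values, and the function name are illustrative assumptions, not recommended settings.

```python
import itertools
import random


def paced_schedule(urls, proxies, base_delay=5.0, jitter=2.0, rng=None):
    """Yield (url, proxy, delay_seconds): proxies rotate round-robin, delays vary."""
    rng = rng or random.Random()
    proxy_cycle = itertools.cycle(proxies)
    for url in urls:
        # Delay falls between base_delay and base_delay + jitter seconds.
        delay = base_delay + rng.uniform(0, jitter)
        yield url, next(proxy_cycle), delay


# Placeholder inputs for demonstration only.
plan = list(
    paced_schedule(
        ["page-1", "page-2", "page-3"],
        ["http://proxy-a.example:8000", "http://proxy-b.example:8000"],
        rng=random.Random(0),
    )
)
for url, proxy, delay in plan:
    print(f"{url} via {proxy} after {delay:.1f}s")
```

In a real loop you would call `time.sleep(delay)` before each fetch. Keeping the schedule separate from the fetching code makes the pacing easy to inspect and adjust.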

GraphQL Schema Changes

Some scrapers rely on Facebook's internal GraphQL endpoints because they return cleaner structured data than raw HTML. But Meta doesn't publish a stability guarantee for internal GraphQL, so these queries break silently — returning empty data instead of errors.

What breaks: Structured extraction silently returns nothing.

Countermeasure: Add validation checks, monitor schema endpoints, and pin to known working queries. Expect maintenance.
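A validation check for the "silently empty" case might look like the sketch below. The response shape and the key path (`data.page.posts.edges`) are hypothetical, since internal query shapes are undocumented and change. The idea is simply to turn an empty-but-successful response into a loud failure.

```python
def dig(payload, path):
    """Follow a list of keys into nested dicts; return None if any step is missing."""
    for key in path:
        if not isinstance(payload, dict) or key not in payload:
            return None
        payload = payload[key]
    return payload


def check_response(payload, path, min_items=1):
    """Raise ValueError when a 'successful' response carries no usable items."""
    items = dig(payload, path)
    if not isinstance(items, list) or len(items) < min_items:
        raise ValueError(
            f"expected >= {min_items} items at {'.'.join(path)}, got {items!r}"
        )
    return items


# Hypothetical path and payloads, for illustration only.
POSTS_PATH = ["data", "page", "posts", "edges"]
good = {"data": {"page": {"posts": {"edges": [{"id": "1"}]}}}}
empty = {"data": {"page": {"posts": {"edges": []}}}}

print(check_response(good, POSTS_PATH))
try:
    check_response(empty, POSTS_PATH)
except ValueError as err:
    print("validation failed:", err)
```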

Anti-Scraping Defense Summary

| Defense Layer | How It Breaks Your Scraper | Practical Countermeasure |
|---|---|---|
| Layout churn / unstable selectors | XPath and CSS selectors return nothing or partial fields | Prefer resilient anchors, validate against visible page output, expect maintenance |
| Login walls | Logged-out requests miss content or redirect | Use valid session cookies or browser-session tools |
| Fingerprinting | Standard automation looks synthetic | Use real browsers, consistent session quality, anti-detect measures |
| Rate limiting | Empty output, blocks, throttling | Slow pacing, lower batch sizes, residential proxy rotation |
| Internal query changes | Structured extraction silently returns empty data | Add validation checks, expect query maintenance |

When GitHub Repos Fail: The No-Code Escape Hatch

A large share of people landing on "facebook scraper github" are not developers. They're sales reps looking for business page emails, ecommerce operators tracking Marketplace prices, or marketers doing competitor research. They don't want to manage a Python environment, debug broken selectors, or rotate proxies.

If that sounds like you, the decision tree is short:

Scraping Facebook Page Contact Info (Emails, Phone Numbers)

If the job is pulling emails and phone numbers from Page "About" sections, a GitHub repo is overkill. 's free and scan a web page and export results to Sheets, Excel, Airtable, or Notion. The AI reads the page fresh each time, so Facebook's DOM changes don't break it.

Scraping Structured Data from Marketplace or Business Pages

For extracting product listings, prices, locations, or business details, Thunderbit's AI Web Scraper lets you click "AI Suggest Fields" — the AI reads the page and proposes columns like price, title, location — then click "Scrape." No XPath maintenance, no code installation. Export directly to .

Scheduled Monitoring (Marketplace Price Alerts, Competitor Tracking)

For ongoing monitoring — "alert me when a Marketplace listing matches my price range" — Thunderbit's lets you describe the interval in plain language (like ) and set URLs. It runs automatically, no cron job required.

When GitHub Repos Are Still the Right Choice

If you need deep programmatic control, large-scale extraction, or custom data pipelines, GitHub repos (or for structured extraction) are the right tool. The decision is straightforward: business users with simple extraction needs → no-code first; developers building data pipelines → GitHub repos or API.

Real Output Samples: What You Actually Get

Most articles on this topic show code snippets but rarely the actual output. Below is what you can realistically expect from each approach.

Sample Output: kevinzg/facebook-scraper (or Active Fork)

From the , a scraped public post returns JSON like:

```json
{
  "comments": 459,
  "comments_full": null,
  "image": "https://...",
  "images": ["https://..."],
  "likes": 3509,
  "post_id": "2257188721032235",
  "post_text": "Don't let this diminutive version...",
  "text": "Don't let this diminutive version...",
  "time": "2019-04-30T05:00:01"
}
```

Note the nullable fields like comments_full. In 2026, expect more fields to come back empty or missing; that is usually a blocking signal, not a harmless glitch. The output is raw JSON and requires post-processing.
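To give a sense of what that post-processing involves, here is a minimal sketch that flattens records shaped like the sample above into CSV with the standard library. The choice of columns and the function name are assumptions for illustration; the sample record reuses the truncated values from the README example.

```python
import csv
import io
import json

# Columns to keep; an arbitrary selection from the sample's field names.
FIELDS = ["post_id", "time", "likes", "comments", "text", "image"]

RAW = """
[{"comments": 459, "comments_full": null, "image": "https://...",
  "images": ["https://..."], "likes": 3509, "post_id": "2257188721032235",
  "post_text": "Don't let this diminutive version...",
  "text": "Don't let this diminutive version...",
  "time": "2019-04-30T05:00:01"}]
"""


def posts_to_csv(posts):
    """Write the selected fields of each post to CSV text; missing or null becomes ''."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    for post in posts:
        row = {}
        for field in FIELDS:
            value = post.get(field)
            row[field] = "" if value is None else value
        writer.writerow(row)
    return buffer.getvalue()


csv_text = posts_to_csv(json.loads(RAW))
print(csv_text)
```

Writing empty strings for null or missing fields keeps the columns aligned, and it makes blocked or partial scrapes visible as blank cells rather than shifted rows.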

Sample Output: Facebook Graph API

Meta's current documents page info requests like GET /<PAGE_ID>?fields=id,name,about,fan_count. The includes fields such as followers_count, fan_count, category, emails, phone, and other public metadata — but only with the right permissions like .
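The request above can be assembled as a URL like this. It is a sketch only: the API version string, page ID, and access token are placeholders you would replace with your own, and the helper name is hypothetical. No request is actually sent.

```python
from urllib.parse import urlencode

GRAPH_HOST = "https://graph.facebook.com"


def page_info_url(page_id, fields, access_token, version):
    """Build a Graph API page-info URL of the form /<PAGE_ID>?fields=..."""
    query = urlencode(
        {"fields": ",".join(fields), "access_token": access_token},
        safe=",",  # keep the comma-separated field list readable
    )
    return f"{GRAPH_HOST}/{version}/{page_id}?{query}"


# Placeholder values for illustration only.
url = page_info_url(
    page_id="12345",
    fields=["id", "name", "about", "fan_count"],
    access_token="TOKEN",
    version="v19.0",
)
print(url)
```

Whether a given field comes back depends on the permissions granted to the token, so a successful response can still omit fields you asked for.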

That's a much narrower data shape than most GitHub scraper users expect. It's page-centric, permission-gated, and not a substitute for arbitrary public-post or group scraping.

Sample Output: Thunderbit AI Web Scraper

Thunderbit's AI-suggested columns for a Facebook business page produce a clean, structured table:

| Page URL | Business Name | Email | Phone | Category | Address | Follower Count |
|---|---|---|---|---|---|---|
| facebook.com/example | Example Biz | info@example.com | (555) 123-4567 | Restaurant | 123 Main St | 12,400 |

For posts and comments, the output looks like:

| Post URL | Author | Post Content | Post Date | Comment Text | Commenter | Comment Date | Like Count |
|---|---|---|---|---|---|---|---|
| fb.com/post/123 | Page Name | "Grand opening this Saturday..." | 2026-04-20 | "Can't wait!" | Jane D. | 2026-04-21 | 47 |

Structured columns, formatted phone numbers, ready-to-use data — no post-processing step. The contrast with raw JSON from GitHub tools is hard to miss.

Facebook Data Type × Best Tool Matrix

No single tool handles everything well on Facebook in 2026.

This matrix lets you jump directly to your use case instead of reading the entire article hoping to find the right answer.

| Facebook Data Type | Best GitHub Repo | API Option | No-Code Option | Difficulty | Reliability in 2026 |
|---|---|---|---|---|---|
| Public page posts | kevinzg family or browser-based scraper | Page Public Content Access, limited | Thunderbit AI Scraper | Medium–High | ⚠️ Fragile |
| Page About / contact info | Lightweight parsing or page metadata | Page reference fields with permissions | Thunderbit Email/Phone Extractor | Low–Medium | ✅ Stable-ish |
| Group posts (member) | Browser automation with login | Groups API deprecated | Browser-based no-code (logged in) | High | ⚠️ Mostly broken / high risk |
| Marketplace listings | Playwright-based scraper | No official API path | Thunderbit AI or scheduled browser scraping | Medium–High | ⚠️ Fragile |
| Events | Browser automation or ad hoc parsing | Historical API support largely gone | Browser-based extraction | High | ❌ Fragile |
| Comments / reactions | GitHub repo with comment support | Some page-comment workflows with permissions | Thunderbit subpage scraping | Medium | ⚠️ Fragile |

Which Approach Fits Your Team?

- Sales teams extracting leads: Start with Thunderbit's Email/Phone Extractor or AI Scraper. No setup, immediate results.

- Ecommerce teams monitoring Marketplace: Thunderbit's Scheduled Scraper or a custom Scrapy + residential proxies setup (if you have the engineering resources).

- Developers building data pipelines: GitHub repos (active forks) + residential proxies + a maintenance budget. Expect ongoing work.

- Researchers archiving group content: Browser-based workflow only (Thunderbit or Selenium with login), with compliance review.

The honest position — and the one — is that there is no single reliable solution. Match your specific data need to the right tool.

Step-by-Step: How to Set Up a Facebook Scraper from GitHub (When It Makes Sense)

If you've read the freshness audit and still want to go the GitHub route, fair enough. Here's the practical path — with honest notes about where things break.

Step 1: Choose the Right Repo (Use the Freshness Audit)

Refer back to the audit table. Pick the least stale repo that matches your target surface. Before installing anything, check the Issues tab — recent issue titles tell you more about current functionality than the README does.
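If you want to re-run the freshness check yourself, the public GitHub REST API exposes the same signals (`pushed_at`, `open_issues_count`, `stargazers_count`) on its repository endpoint. The sketch below is one possible way to read them. The function names are hypothetical, unauthenticated calls are rate-limited, and `open_issues_count` includes open pull requests.

```python
import json
import urllib.request
from datetime import datetime, timezone


def days_since_push(pushed_at, now=None):
    """Days between a GitHub `pushed_at` timestamp (ISO 8601, 'Z' suffix) and now."""
    pushed = datetime.strptime(pushed_at, "%Y-%m-%dT%H:%M:%SZ").replace(
        tzinfo=timezone.utc
    )
    now = now or datetime.now(timezone.utc)
    return (now - pushed).days


def repo_freshness(full_name):
    """Fetch stars, open issues, and push age for 'owner/repo' (makes a network call)."""
    request = urllib.request.Request(
        f"https://api.github.com/repos/{full_name}",
        headers={"Accept": "application/vnd.github+json"},
    )
    with urllib.request.urlopen(request, timeout=10) as response:
        repo = json.load(response)
    return {
        "stars": repo["stargazers_count"],
        "open_issues": repo["open_issues_count"],
        "days_since_push": days_since_push(repo["pushed_at"]),
    }


if __name__ == "__main__":
    print(repo_freshness("kevinzg/facebook-scraper"))
```

The numbers only tell you whether a repo is being touched. Reading recent issue titles is still the quickest way to see whether it actually works.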

Step 2: Set Up Your Python Environment

```bash
python3 -m venv fb-scraper-env
source fb-scraper-env/bin/activate
pip install -r requirements.txt
```

Common gotcha: version conflicts with dependencies, especially Selenium/Playwright versions. Both kevinzg and moda20 declare Python ^3.6 in their , an older baseline that may conflict with newer libraries. passivebot's Marketplace scraper pins , which is fine for experimentation but not proof of durability.

Step 3: Configure Proxies and Anti-Detection

If you're doing anything beyond a quick test:

- Set up residential proxy rotation (look for providers with Facebook-specific IP pools)

- If using browser automation, install undetected-chromedriver or configure anti-fingerprinting

- Don't skip this step — standard Selenium or Puppeteer gets flagged fast

Step 4: Run a Small Test Scrape and Validate Output

Start with a single public page, not a large batch. Check the output carefully:

- Empty fields or missing data usually mean Facebook's defenses are blocking you

- Compare output against what you actually see on the page in your browser

- A successful one-page test matters more than a pretty README
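The "empty fields" check above can be made routine with a small fill-rate report. This is a sketch: the field names follow the kevinzg sample output, the sample posts are invented, and any threshold you alert on is your own judgment call.

```python
def fill_rate(posts, fields):
    """Return {field: fraction of posts where the field is present and non-empty}."""
    if not posts:
        return {field: 0.0 for field in fields}
    empty_values = (None, "", [], {})
    return {
        field: sum(1 for post in posts if post.get(field) not in empty_values)
        / len(posts)
        for field in fields
    }


# Invented test-scrape output for illustration.
scraped = [
    {"post_id": "1", "text": "Grand opening", "time": None, "likes": 0},
    {"post_id": "2", "text": "", "time": "2026-04-20T10:00:00", "likes": 12},
]
report = fill_rate(scraped, ["post_id", "text", "time", "likes"])
for field, rate in report.items():
    print(f"{field}: {rate:.0%} filled")
```

A count of zero (for example `likes: 0`) is treated as filled, since it is a real value. Only `None`, empty strings, and empty containers count as missing.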

Step 5: Handle Errors, Rate Limits, and Maintenance

- Build in retry logic and error handling

- Expect to update selectors or configurations regularly — this is ongoing maintenance, not set-and-forget

- If you find yourself spending more time maintaining the scraper than using the data, that's a signal to reconsider the no-code path
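Retry logic is usually a few lines of exponential backoff with jitter. The sketch below wraps any zero-argument fetch function. The attempt count and delays are illustrative defaults, `with_retries` is a hypothetical helper name, and the flaky function merely simulates two failures.

```python
import random
import time


def with_retries(fetch, attempts=4, base_delay=2.0, sleep=time.sleep):
    """Call fetch(); on failure wait base_delay * 2**attempt (+ up to 1s jitter) and retry."""
    for attempt in range(attempts):
        try:
            return fetch()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2 ** attempt + random.uniform(0, 1))


# Simulated fetch that fails twice, then succeeds.
calls = {"count": 0}


def flaky_fetch():
    calls["count"] += 1
    if calls["count"] < 3:
        raise RuntimeError("blocked or rate-limited")
    return "ok"


delays = []
result = with_retries(flaky_fetch, sleep=delays.append)  # record delays instead of sleeping
print(result, [round(d, 1) for d in delays])
```

Retries help with transient throttling. If the output stays empty after backing off, the cause is more likely a structural change or a block, and retrying harder will not fix it.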

Legal and Ethical Considerations for Facebook Scraping

This section is brief and factual. It's not the focus of the article, but ignoring it would be irresponsible.

Facebook's state that users "may not access or collect data from our Products using automated means (without our prior permission)." Meta's , updated February 3, 2026, make clear that enforcement can include suspension, API access removal, and account-level action.

This isn't theoretical. Meta's describes active investigation of unauthorized scraping, cease-and-desist letters, and account disabling. Meta has also against scraping companies (e.g., the Voyager Labs lawsuit).

The safest framing:

- Meta's terms are explicitly anti-scraping

- Permissioned API use is safer than unauthorized scraping

- Public availability does not erase privacy-law obligations (GDPR, CCPA, etc.)

- If operating at scale, consult legal counsel

- Thunderbit is designed for scraping publicly available data and does not bypass login requirements when using cloud scraping

Key Takeaways: What Actually Works for Facebook Scraping in 2026

Most Facebook scraper GitHub repos are broken or unreliable in 2026. That's not a scare tactic — it's what commit dates, issue queues, and community reports consistently show.

The few active forks still work for limited public page data, but they require ongoing maintenance, anti-detection setup, and a realistic expectation that things will break again. The Graph API is useful but narrow — it covers page-level metadata with proper permissions, not the broad public-post or group scraping most people want.

For business users who need Facebook data without the developer overhead, no-code tools like offer a more reliable and lower-maintenance path. The AI reads the page fresh each time, so DOM changes don't break your workflow. You can try the for free and export to Sheets, Excel, Airtable, or Notion.

The practical recommendation: start with the freshness audit table. If you're not a developer, try the no-code option first. If you are a developer, only invest in a GitHub setup if you have the technical resources — and the patience — to maintain it. And regardless of which path you choose, match your specific data need to the right tool rather than hoping for one solution that does everything.

If you want to go deeper on scraping social media data and related tools, we have guides on , , and . You can also watch walkthroughs on the .

FAQs

Is there a working Facebook scraper on GitHub in 2026?

Yes, but options are limited. The most notable is the fork of kevinzg's original repo — check the freshness audit table above for current status. It can partially scrape public page posts and some metadata, but its issue queue shows core breakage around mbasic and empty output. Most other repos are abandoned or fully broken.

Can I scrape Facebook without coding?

Yes. Tools like and free Email/Phone Extractors let you extract Facebook data from your browser in a few clicks, with no Python or GitHub setup required. The AI reads the page each time, so you don't need to maintain selectors when Facebook changes its layout.

Is it legal to scrape Facebook?

Facebook's prohibit automated data collection without permission. Meta actively enforces this through account bans, cease-and-desist letters, and . Legality varies by jurisdiction and use case. Stick to publicly available business data, avoid personal profiles, and consult legal counsel if operating at scale.

What data can I still get from the Facebook Graph API?

In 2026, the is heavily restricted. You can access limited page-level data — fields like id, name, about, fan_count, emails, phone — with proper permissions such as . Most public post data, group data (the ), and user-level data are no longer available via API.

How often do Facebook scraper GitHub repos break?

Frequently. Facebook changes its DOM structure, anti-bot measures, and internal APIs on an ongoing basis — there's no published cadence, but community reports show breakage every few weeks for active scrapers. The moda20 fork's issue queue around mbasic disappearance is a recent example. If you rely on a GitHub repo, budget for regular maintenance and output validation.
