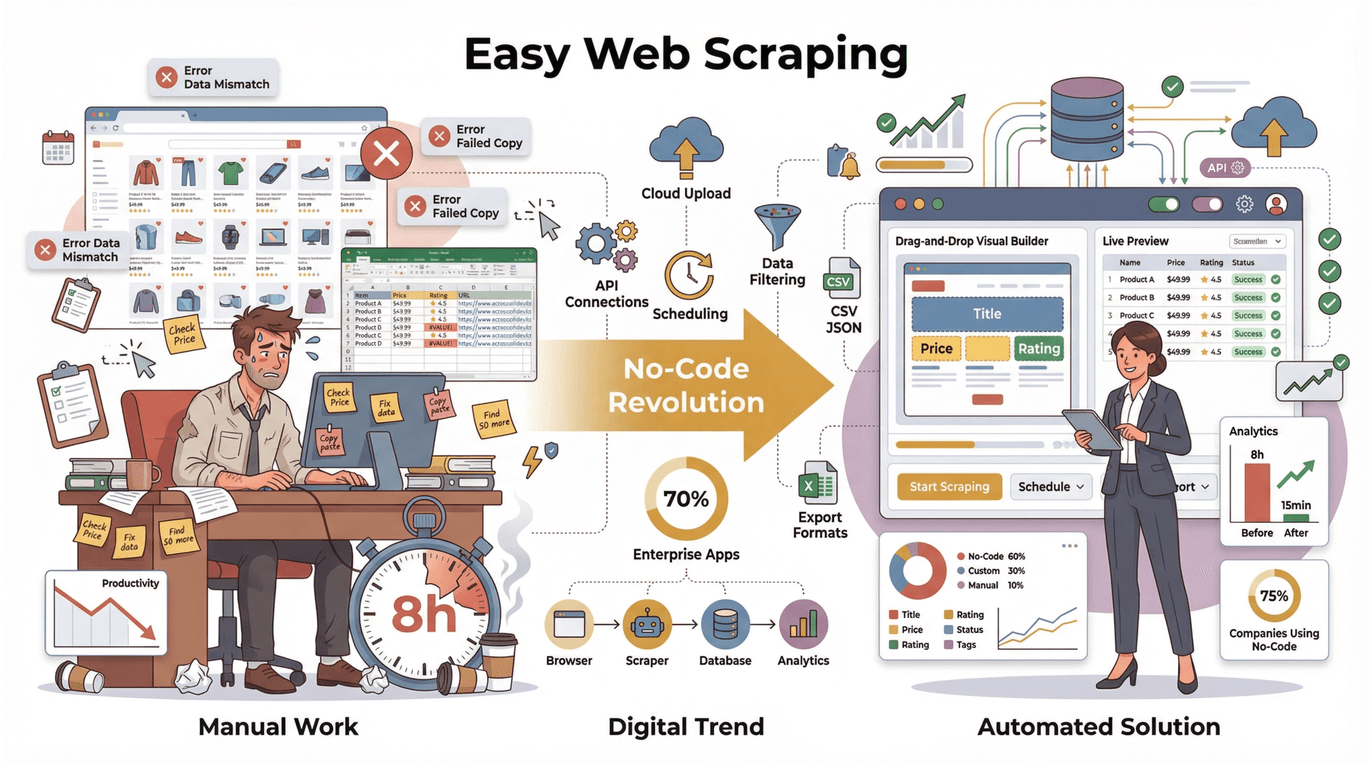

If you’ve ever tried to copy-paste hundreds of product listings or sales leads from a website into a spreadsheet, you know the feeling: somewhere between “I’m saving time” and “why is this so painful?” The truth is, web data is the new oil for sales, ecommerce, and research teams, but most folks don’t want to become coders just to get it. The good news? The no-code revolution has finally reached web scraping. That means the days of “tedious copy-pasting” are numbered.

But here’s the catch: with so many web scrapers out there, how do you pick one that’s actually easy for beginners? I’ve spent years building and testing these tools (and yes, I’m a little biased toward Thunderbit, since that’s my baby), but I’m also obsessed with making web scraping as simple as ordering takeout. So, I’ve put together this practical, no-nonsense guide to the top 10 easiest web scrapers to use in 2025—with honest pros, cons, and tips for every tool.

What Makes a Web Scraper the Easiest to Use?

Let’s get real: “easy” means different things to different people. For business users, the easiest web scrapers to use have a few things in common:

- No coding or HTML required: You shouldn’t need to know what a selector is, or why XPath sounds like a Star Wars villain.

- Intuitive, visual interface: Drag-and-drop, point-and-click, or even natural language prompts—so you can just say what you want.

- Minimal setup and learning curve: You should be able to get results in minutes, not hours (or days).

- Automation and reliability: The tool should handle tricky stuff like pagination, subpages, and dynamic content without you sweating the details.

- One-click export: Data should be ready for Excel, Google Sheets, Airtable, Notion, or whatever your team uses.

- Support and documentation: Good tutorials, responsive support, and a helpful community make a huge difference.

- Flexible pricing: Free plans or trials for small jobs, with paid upgrades if you need more.

When I ranked these tools, I focused on how quickly a non-technical user could go from “I need this data” to “I have it in my spreadsheet”—especially for common business scenarios like product scraping, lead generation, and price monitoring.

How We Evaluated the Easiest Web Scrapers to Use

I didn’t just skim marketing pages—I looked at user reviews, ran hands-on tests, and compared feature sets for each tool. Here’s what mattered most:

- Beginner onboarding: How fast can a new user get started? Are there templates, wizards, or AI helpers?

- Real-world tasks: Can it handle scraping a product list, extracting emails from a directory, or monitoring prices—without a steep learning curve?

- Automation: Does it handle pagination, subpages, and scheduling? Or do you have to babysit it?

- Data export: Is it easy to get your data out, clean, and ready for use?

- Support and pricing: Are there free plans, responsive support, and clear upgrade paths?

Now, let’s dive into the top 10 easiest web scrapers to use, ranked and reviewed for real beginners.

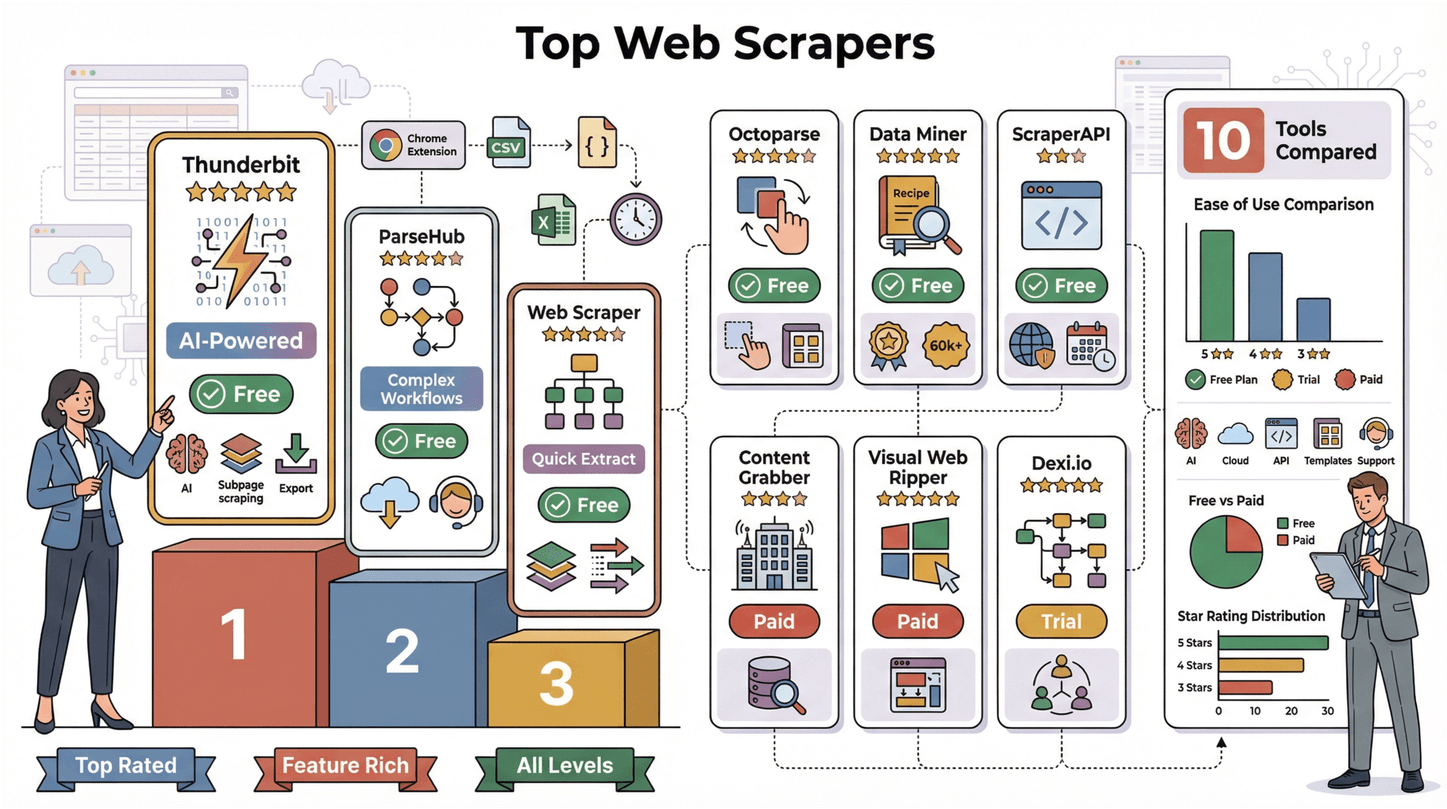

The Top 10 Easiest Web Scrapers to Use

- Thunderbit for the easiest AI-powered, 2-click web scraping

- ParseHub for visual, point-and-click workflows

- Web Scraper for free, flexible sitemap-based scraping

- Octoparse for drag-and-drop, template-driven automation

- Data Miner for recipe-based, everyday scraping

- ScraperAPI for plug-and-play API scraping (for technical teams)

- Import.io for cloud-based, business-friendly extraction

- Content Grabber for enterprise-scale, highly customizable scraping

- Visual Web Ripper for Windows users wanting offline, template-based scraping

- Dexi.io for browser-based, workflow automation and team collaboration

1. Thunderbit

Thunderbit is my top pick for the easiest web scraper to use in 2025, and not just because I helped build it. Thunderbit is all about making web scraping feel like having an AI-powered assistant in your browser. Here’s why it stands out:

- AI-Powered 2-Click Workflow: Open any webpage, click “AI Suggest Fields,” and Thunderbit’s AI reads the page, suggests what data to extract (like product names, prices, emails), and sets up your table. Click “Scrape,” and you’re done. No fiddling with selectors, no coding, no stress.

- Natural Language Prompts: You can describe what you want in plain English. Thunderbit’s AI figures out the rest—even on complex, messy, or unstructured pages.

- Subpage Scraping: Need more details? Thunderbit can automatically visit each subpage (like product details or LinkedIn profiles) and enrich your table without extra setup.

- Scrape Anything: Works on any website, PDF, or image—even if the data is buried in a document or a picture.

- Instant Export: Export your results to Excel, Google Sheets, Airtable, or Notion with one click. No paywall on exports—even on the free plan.

- Free Chrome Extension: Install from the Chrome Web Store, and you’re ready to go.

- Free Email, Phone, and Image Extraction: Extract contacts and images from any page, PDF, or image file—no extra setup.

Use cases: Sales teams use Thunderbit for lead extraction, ecommerce teams monitor competitor prices, and real estate pros collect property data. I’ve seen users go from “I need 200 product listings” to “here’s my spreadsheet” in 10 minutes flat.

User feedback: Thunderbit has over 100,000 users. People say it “feels like having an intern do the copy-pasting for you.” Even complex tasks like scraping multiple pages and sub-links are handled automatically.

Pricing: Free tier lets you scrape 6 pages (or 10 with a trial). Paid plans start at $15/month for 500 rows, and all features are included even in the base plan.

Why it’s #1: Thunderbit is the only tool that combines true AI field suggestion, natural language prompts, and subpage scraping in a 2-click workflow. It’s the closest thing to “just tell the computer what you want”—and it works for anyone, not just techies.

2. ParseHub

ParseHub is a well-known desktop app (Windows, Mac, Linux) with a visual workflow builder. It’s popular among beginners and small teams who want to scrape more complex sites without coding.

- Visual Point-and-Click: Build scraping projects by clicking elements on a page. ParseHub tries to “guess and automatically select similar data elements,” making setup easier.

- Handles Complex Workflows: Supports clicking through dropdowns, handling “Load More” buttons, and scraping content behind logins.

- Live Preview and Export: See your data as it’s scraped, then export to CSV, Excel, or JSON.

- Scheduling and Cloud Runs: Paid plans let you schedule scrapes and run them in the cloud.

Beginner experience: Basic scrapes are easy, but advanced flows (like nested data or conditional logic) take some time to learn. ParseHub’s tutorials and live training sessions are a big help.

Pricing: Free plan allows up to 5 projects (200 pages per run). Paid plans start at $99/month for more pages and scheduling.

Best for: Beginners who want to scrape complex websites and are willing to invest a little time learning the ropes.

3. Web Scraper (webscraper.io)

Web Scraper is a free Chrome extension that uses a visual “sitemap” approach. It’s flexible and powerful, but requires a bit more setup than AI-driven tools.

- Visual Sitemap Builder: Define how to navigate and what to extract by adding selectors and actions in Chrome DevTools.

- Handles Multi-Level Navigation: Scrape categories, subcategories, and detail pages by setting up parent-child relationships.

- Supports Dynamic Content: Can scroll, click, and wait for AJAX elements.

- Free and Open Source: 100% free for browser-based scraping; optional cloud service for scheduling and scale.

Beginner experience: There’s a learning curve—especially for non-techies. Setting up sitemaps can feel technical, but there are lots of tutorials and sample projects.

Pricing: Free for browser use; cloud plans start at $50/month.

Best for: Tech-savvy beginners or analysts who want a free, flexible tool and are willing to learn the sitemap logic.
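
To make the “sitemap logic” a little less abstract, here is a rough sketch of the parent-child idea in Python. The field names (`_id`, `startUrl`, `selectors`, `parentSelectors`) follow the JSON shape I remember the extension exporting, so treat them as an assumption and compare against a sitemap you export yourself. The site, selector ids, and CSS selectors are all made up for illustration.

```python
import json

# Hypothetical sitemap: category pages link to product pages,
# and each product page holds a name and a price.
sitemap = {
    "_id": "example-shop",
    "startUrl": ["https://example.com/categories"],
    "selectors": [
        {"id": "category", "type": "SelectorLink", "parentSelectors": ["_root"],
         "selector": "a.category", "multiple": True},
        {"id": "product", "type": "SelectorLink", "parentSelectors": ["category"],
         "selector": "a.product", "multiple": True},
        {"id": "name", "type": "SelectorText", "parentSelectors": ["product"],
         "selector": "h1", "multiple": False},
        {"id": "price", "type": "SelectorText", "parentSelectors": ["product"],
         "selector": ".price", "multiple": False},
    ],
}


def children_of(parent_id):
    """Return the ids of selectors that list parent_id as a parent."""
    return [s["id"] for s in sitemap["selectors"] if parent_id in s["parentSelectors"]]


def outline(parent_id="_root", depth=0):
    """Walk the parent-child links and return an indented outline of the crawl."""
    lines = []
    for child in children_of(parent_id):
        lines.append("  " * depth + child)
        lines.extend(outline(child, depth + 1))
    return lines


if __name__ == "__main__":
    print("\n".join(outline()))           # the navigation tree, top to bottom
    print(json.dumps(sitemap, indent=2))  # JSON text in the assumed sitemap shape
```

The outline shows why setup takes longer than with AI-driven tools: you describe every level of navigation yourself, starting at the root and working down to the fields you want.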

4. Octoparse

Octoparse is a drag-and-drop web scraper with both desktop and cloud versions. It’s known for its friendly UI and powerful automation.

- Drag-and-Drop Designer: Click elements to extract data, build workflows visually, and handle pagination automatically.

- Pre-Built Templates: Templates for Amazon, Twitter, Facebook, and more—just enter a URL and go.

- Cloud Automation: Run scrapes in the cloud, schedule tasks, and handle IP rotation to avoid blocks.

- Export to CSV, Excel, JSON, or API: Flexible output options for business users.

Beginner experience: Very approachable for basic tasks—users report reaching “basic proficiency in 2–3 hours.” Advanced features (like login or infinite scroll) may require more learning.

Pricing: Free plan allows up to 10,000 records per export and 2 concurrent local tasks. Paid plans start at $89/month for unlimited pages and cloud runs.

Best for: Beginners and analysts who want a balance of simplicity and power, especially for recurring or scheduled scraping.

5. Data Miner

Data Miner is a Chrome/Edge extension that uses a “recipe” system for scraping. It’s great for everyday tasks and has a huge library of pre-built extraction rules.

- 60,000+ Pre-Built Recipes: Find a recipe for your target site and run it with one click.

- Point-and-Click Recipe Builder: Create your own extraction rules by selecting elements visually.

- Handles Pagination and Form Filling: Recipes can click through pages or fill out search forms.

- Exports to CSV, Excel, or Google Sheets: Direct integration for quick data pipelines.

Beginner experience: Super easy if a recipe exists for your site. Creating custom recipes is beginner-friendly, though the UI can feel busy.

Pricing: Free for 500 pages/month. Paid plans start at $19/month for more pages and features.

Best for: Marketers, sales teams, and researchers who want quick results, especially when a recipe already exists.

6. ScraperAPI

ScraperAPI isn’t a point-and-click tool, but it’s worth mentioning for teams with some technical chops. It’s an API service that handles all the hard parts of scraping (proxies, CAPTCHAs, JavaScript rendering).

- Plug-and-Play API: Just call the API with your target URL, and ScraperAPI returns the HTML or JSON.

- Automatic Proxy Rotation and CAPTCHA Solving: No more IP blocks or anti-bot headaches.

- Geo-Targeting and Structured Data Endpoints: Scrape from different countries or get structured data for common sites.

Beginner experience: Easiest for developers or teams with some scripting skills. Can be used with Google Sheets, Zapier, or low-code platforms for non-coders.

Pricing: Free plan with 5,000 API calls/month. Paid plans start at $49/month for 100,000 requests.

Best for: Teams with light coding skills who want reliable, scalable backend scraping.
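
For a sense of what “just call the API with your target URL” looks like in practice, here is a minimal Python sketch using only the standard library. The endpoint and the parameter names (`api_key`, `url`, `render`, `country_code`) are what I understand ScraperAPI to accept, but treat them as assumptions and confirm against the current documentation. The helper names, the API key, and the target URL are placeholders.

```python
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed endpoint; check the provider's docs before relying on it.
SCRAPERAPI_ENDPOINT = "https://api.scraperapi.com/"


def build_request_url(api_key, target_url, render_js=False, country_code=None):
    """Build the GET URL that asks the service to fetch target_url on our behalf."""
    params = {"api_key": api_key, "url": target_url}
    if render_js:
        params["render"] = "true"  # assumed flag for JavaScript rendering
    if country_code:
        params["country_code"] = country_code  # assumed geo-targeting parameter
    return SCRAPERAPI_ENDPOINT + "?" + urlencode(params)


def fetch_html(api_key, target_url, timeout=70, **options):
    """Send the request and return the response body as text (needs a real key)."""
    request_url = build_request_url(api_key, target_url, **options)
    with urlopen(request_url, timeout=timeout) as response:
        return response.read().decode("utf-8", errors="replace")


if __name__ == "__main__":
    # Only prints the request URL, so this runs without a key or network access.
    print(build_request_url("YOUR_API_KEY", "https://example.com/products?page=1"))
```

The target URL is percent-encoded into a single query parameter, which is why you can pass a URL that has its own query string. Proxy rotation and CAPTCHA handling happen on the service side, so the calling code stays this small.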

7. Import.io

Import.io is a cloud-based platform with a visual extractor. It’s designed for business users who want to turn web pages into structured data, with no desktop install required.

- Point-and-Click Training: Highlight data points on a page, and Import.io generalizes the pattern for you.

- Cloud-Based Scheduling: Run extractors on a schedule, and build APIs or webhooks for data delivery.

- Data Cleaning and Transformation: Built-in tools to clean and format your data before export.

Beginner experience: Very approachable for basic extractions. The platform offers a free trial, but ongoing use is aimed at enterprise customers.

Pricing: Free trial available; paid plans start around $299/month (custom quotes for enterprise).

Best for: Business teams needing a robust, managed solution for recurring web data projects.

8. Content Grabber

Content Grabber is a desktop tool built for business automation and large-scale scraping.

- Visual Editor: Design extraction sequences by clicking through sites—no coding needed for most tasks.

- Automation and Scheduling: Run multiple agents in parallel, schedule scrapes, and integrate directly with databases or APIs.

- Enterprise Features: Error handling, notifications, and a central management console.

Beginner experience: Steep learning curve unless you have some technical background. Best for IT or operations teams ready to invest in a paid solution.

Pricing: No free version; licenses run in the thousands of dollars.

Best for: Enterprises and data teams needing highly customizable, large-scale scraping.

9. Visual Web Ripper

Visual Web Ripper is a classic Windows desktop scraper with a point-and-click interface.

- Template and Project Designer: Build scrapers by selecting data visually—handles listings, detail pages, and pagination.

- Scheduling and Automation: Run projects on a schedule and output to CSV, XML, SQL, and more.

- One-Time License: Pay once, use forever.

Beginner experience: Relatively easy for typical projects, especially if you’re comfortable with Windows software. The interface is a bit dated but logical.

Pricing: No free plan; one-time license around $349 per user.

Best for: SMBs and power users on Windows who want a reliable, offline scraper.

10. Dexi.io

Dexi.io (formerly CloudScrape) is a cloud-based platform with a browser-based visual editor and workflow automation.

- Drag-and-Drop Robot Designer: Build scraping bots in your browser with blocks and point-and-click selections.

- Workflow Automation: Chain robots, schedule runs, and integrate with Slack, Sheets, or APIs.

- Team Collaboration: User management, version control, and cloud storage for results.

Beginner experience: Basic tasks are easy, but advanced workflows (loops, conditionals) require some learning. Documentation and support are available.

Pricing: Free trial; business plans typically start at a few hundred dollars per month.

Best for: Operations and data teams who need scalable, repeatable scraping with automation.

Easiest Web Scrapers to Use: At-a-Glance Comparison Table

| Tool Name | Ease of Use Rating | Ideal Use Case | Free Plan Availability | Notable Features |
|---|---|---|---|---|
| Thunderbit | ⭐⭐⭐⭐⭐ | Unstructured web scraping | Yes | AI field suggestion, subpage scraping, instant export, free Chrome extension |
| ParseHub | ⭐⭐⭐⭐ | Complex automation workflows | Yes | Visual workflow, cloud runs, live support |
| Web Scraper | ⭐⭐⭐⭐ | Quick, flexible extraction | Yes | Visual sitemap, multi-level scraping |
| Octoparse | ⭐⭐⭐⭐ | Frequent, complex scraping | Yes | Drag-and-drop, templates, cloud scheduling |
| Data Miner | ⭐⭐⭐⭐ | Everyday tasks, recipes | Yes | 60k+ recipes, batch scraping, Sheets export |
| ScraperAPI | ⭐⭐⭐ | API-driven, technical teams | Yes | Proxy rotation, CAPTCHA bypass, JSON output |
| Import.io | ⭐⭐⭐⭐ | Cloud-based, business teams | Free trial | Visual training, scheduling, data cleaning |
| Content Grabber | ⭐⭐⭐ | Enterprise, automation | No | Visual scripting, direct DB/API integration |
| Visual Web Ripper | ⭐⭐⭐⭐ | Windows, structured data | No | Point-and-click templates, one-time license |
| Dexi.io | ⭐⭐⭐⭐ | Workflow automation, teams | Free trial | Drag-and-drop, cloud scheduling, integrations |

How to Choose the Easiest Web Scraper for Your Needs

Here’s my cheat sheet for picking the right tool:

- Absolute beginner, want instant results? Start with Thunderbit or Data Miner (especially if there’s a recipe for your site).

- Need to scrape complex or dynamic sites? Try Octoparse or ParseHub—both handle advanced flows with a visual interface.

- Comfortable with a little technical setup? Web Scraper is free and powerful, but expect a learning curve.

- Need to automate recurring jobs or work as a team? Dexi.io, Import.io, or Content Grabber are built for business automation.

- Have a developer on hand? ScraperAPI is a plug-and-play backend for custom workflows.

Always start with a free plan or trial. Try scraping a sample of your target data, and see which tool “clicks” for you. Sometimes the best fit is the one that feels most natural for your workflow.

Conclusion: Start Scraping Smarter, Not Harder

Web scraping in 2025 isn’t just for developers—it’s for anyone who needs web data, fast. The tools on this list prove that you can go from “I need this data” to “I have it in my spreadsheet” in minutes, not months. Whether you’re a sales rep, an ecommerce manager, or just someone tired of copy-pasting, there’s a beginner-friendly web scraper for you.

If you want to see what modern, AI-powered scraping feels like, give Thunderbit a try. And if Thunderbit isn’t the perfect fit, try out a few others from this list. There’s never been a better time to automate the boring stuff and focus on what really matters.

Happy scraping, and may your data always be clean, structured, and ready for action.

FAQs

1. What makes a web scraper “easy to use” for beginners?

The easiest web scrapers to use require no coding, have an intuitive visual interface, minimal setup, and handle automation (like pagination and subpages) for you. They should let you export data in one click and offer good support and documentation.

2. Is Thunderbit really the easiest web scraper for non-technical users?

Yes—Thunderbit’s AI-powered field suggestion and 2-click workflow make it uniquely simple. You just describe what you want, click “Scrape,” and get structured data—no coding or manual setup required.

3. Can I use these web scrapers for free?

Most tools on this list offer a free plan or trial. Thunderbit, ParseHub, Web Scraper, Octoparse, and Data Miner all have free tiers, though you may need to upgrade for larger or more frequent jobs.

4. Which web scraper is best for recurring or automated scraping?

For recurring jobs, look for tools with scheduling and cloud automation—like Thunderbit (scheduled scraping), Octoparse, Dexi.io, or Import.io. These let you run scrapes on a schedule and deliver data automatically.

5. How do I know which web scraper is right for my business?

Match your use case (e.g., lead generation, price monitoring) and technical comfort to the tool’s features. Start with a free trial, test a real-world task, and see which tool feels most natural. If you get stuck, look for tools with strong support and tutorials.

Ready to get started? Try Thunderbit or explore other options from this list, and join the no-code data revolution.
