Data is the new fuel for business growth, but let’s be honest—wrangling it can feel more like herding cats than filling your tank. In 2025, the sheer volume and variety of information businesses need to collect is staggering. Whether you’re running quick customer surveys, scraping competitor prices, or building a lead list from scratch, the right online data collection service can turn hours of grunt work into a few clicks and a fresh spreadsheet. I’ve spent years in SaaS and automation, and I’ve watched firsthand how the right tool can make or break a project (and sometimes your sanity).

But with so many options—survey platforms, web scrapers, form builders—how do you choose? I’ve put together this guide to the top 9 online data collection services for 2025, breaking down what each one does best, where they shine (or fall short), and how to match the tool to your business needs. Whether you’re a sales pro, a market researcher, or just tired of copy-pasting, there’s something here for you.

Why Online Data Collection Services Matter for Modern Businesses

Let’s set the stage: the volume of data the world generates keeps climbing year over year, and the businesses that harness this tidal wave stand to gain the most. But here’s the catch: collecting the right data, at the right time, from the right sources, is what separates the winners from the also-rans.

Online data collection services have become the backbone of data-driven decision-making. They automate the tedious parts (gathering customer feedback, scraping web data, building lead lists) so your teams can focus on analysis and action. For example, sales teams use web scrapers to generate thousands of leads per month, while e-commerce operators monitor competitor prices and inventory in real time. On the survey side, many businesses rely on online surveys to gather customer feedback and incorporate that feedback into new product development.

The bottom line? Whether you’re collecting structured survey responses or wrangling messy web data, the right tool can save you hours, reduce errors, and give you a competitive edge.

How We Selected the Best Online Data Collection Services

Not all data collection tools are created equal. Here’s how I narrowed down the top 9:

- Automation & AI: Does the tool minimize manual work? Can it schedule jobs, use AI to suggest fields, or adapt to changes automatically?

- User-Friendliness: Is it easy enough for non-coders or business users to get started? Drag-and-drop builders and AI guidance scored big points.

- Integration & Export: Can you get your data into Excel, Google Sheets, Notion, Airtable, or your CRM with minimal fuss?

- Scalability & Reliability: Will it handle thousands of responses or rows without choking? Is it robust enough for enterprise needs?

- Pricing & Value: Is there a free tier for small jobs? Does the pricing scale fairly as your needs grow?

- Best Use Case Fit: Some tools are survey specialists, others are web scraping wizards. I matched each to the scenarios where they shine.

I also dug into real-world user reviews, expert opinions, and recent feature updates to make sure this list is as practical as it is comprehensive.
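If you want to run a similar comparison for your own shortlist, the criteria above can be turned into a simple weighted score. The sketch below is only an illustration: the weights, the 1-5 rating scale, and the two made-up tools are placeholders I chose for the example, not the numbers behind this list.

```python
# Hypothetical weights for the six criteria above; they sum to 1.0.
WEIGHTS = {
    "automation": 0.25,
    "ease_of_use": 0.20,
    "integrations": 0.20,
    "scalability": 0.15,
    "pricing": 0.10,
    "use_case_fit": 0.10,
}


def weighted_score(ratings: dict[str, float]) -> float:
    """Combine per-criterion ratings (1-5) into a single weighted score."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)


# Placeholder ratings for two made-up tools, not real evaluations.
candidates = {
    "Tool A": {"automation": 5, "ease_of_use": 4, "integrations": 4,
               "scalability": 3, "pricing": 4, "use_case_fit": 5},
    "Tool B": {"automation": 2, "ease_of_use": 5, "integrations": 3,
               "scalability": 3, "pricing": 5, "use_case_fit": 3},
}

# Rank candidates from highest to lowest weighted score.
ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
for name in ranked:
    print(f"{name}: {weighted_score(candidates[name]):.2f}")
```

Adjust the weights to match what matters for your team; a sales team might weight automation higher, while a research team might care more about scalability.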

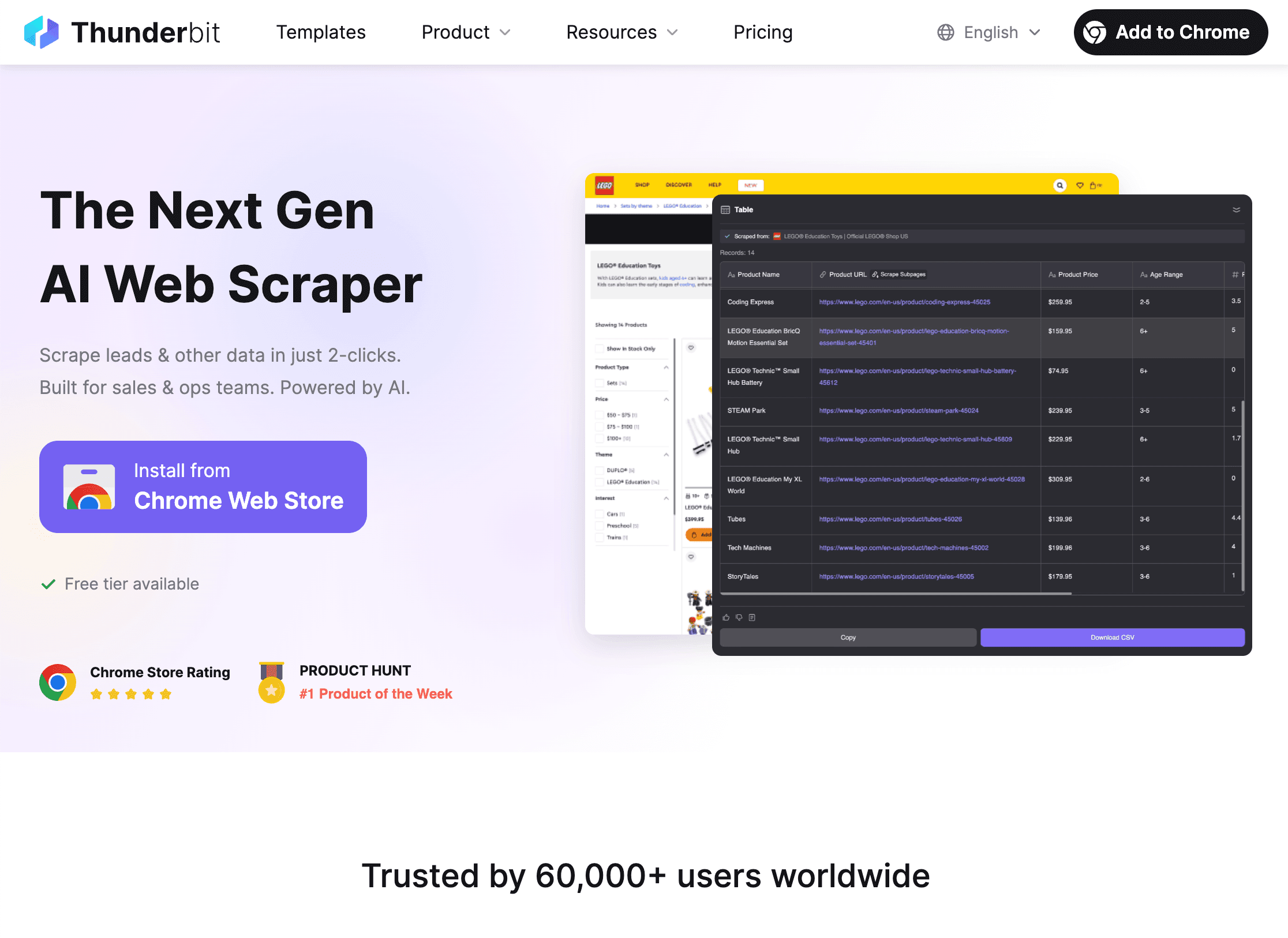

1. Thunderbit

Thunderbit is my go-to for web data collection that goes way beyond basic forms. As an AI web scraper, Thunderbit is built for business users who want to extract structured data from any website: no coding, no templates, no headaches.

Why Thunderbit Stands Out:

- AI-Guided Setup: Just click “AI Suggest Fields” and Thunderbit scans the page, suggests what to extract, and sets up the scraper for you. It’s like having an AI assistant who actually knows what you want.

- Subpage & Pagination Scraping: Need data from detail pages or across multiple pages? Thunderbit follows links, clicks “Next,” and merges everything into one table—automatically.

- Instant Export: Export your data directly to Excel, Google Sheets, Airtable, or Notion. No extra fees, no CSV gymnastics.

- Unstructured Data Support: Thunderbit isn’t just for neat tables—it can extract emails, phone numbers, images, and even parse data from PDFs or images using OCR.

- Scheduling & Cloud Scraping: Set up recurring jobs (“scrape every day at 9am”) and let Thunderbit’s cloud servers do the heavy lifting—up to 50 pages at a time.
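
To make the pagination idea concrete, here is a minimal sketch of the general technique: follow each page's "next" pointer and merge all rows into one table. This is not Thunderbit's implementation. The `collect_all_pages` function, the `FAKE_SITE` data, and the `max_pages` cap are all hypothetical names and values invented for the example, and an in-memory dictionary stands in for real web requests.

```python
from typing import Callable, Optional

# A page is modelled as (rows, next_page_id); next_page_id is None on the last page.
Page = tuple[list[dict], Optional[str]]


def collect_all_pages(
    fetch_page: Callable[[str], Page], start: str, max_pages: int = 100
) -> list[dict]:
    """Follow "next" pointers from `start` and merge every page's rows into one list."""
    rows: list[dict] = []
    seen: set[str] = set()  # guards against pagination loops
    current: Optional[str] = start
    while current is not None and current not in seen and len(seen) < max_pages:
        seen.add(current)
        page_rows, current = fetch_page(current)
        rows.extend(page_rows)
    return rows


# Stand-in for real HTTP requests: a tiny in-memory "site" with three pages.
FAKE_SITE: dict[str, Page] = {
    "page-1": ([{"name": "Widget", "price": 9.99}], "page-2"),
    "page-2": ([{"name": "Gadget", "price": 24.50}], "page-3"),
    "page-3": ([{"name": "Gizmo", "price": 4.25}], None),
}

table = collect_all_pages(FAKE_SITE.__getitem__, "page-1")
print(len(table), [row["name"] for row in table])
```

Even in a toy version, the loop guard and the page cap matter: real sites sometimes link the last page back to the first.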

Best For: Sales lead generation, competitor monitoring, e-commerce operations, market research, and anyone who needs to extract data from complex or messy websites.

Pricing: Free for up to 6 pages (or 10 with a trial boost), then paid plans start at $15/month for 500 rows. All features included—no paywalls for exports or AI.

Pro Tip: If you’re tired of copy-pasting or wrestling with broken scrapers, Thunderbit’s AI-first approach is a breath of fresh air. It’s especially good for long-tail, unstructured data—think scraping niche directories, product catalogs, or contact info from messy sites.

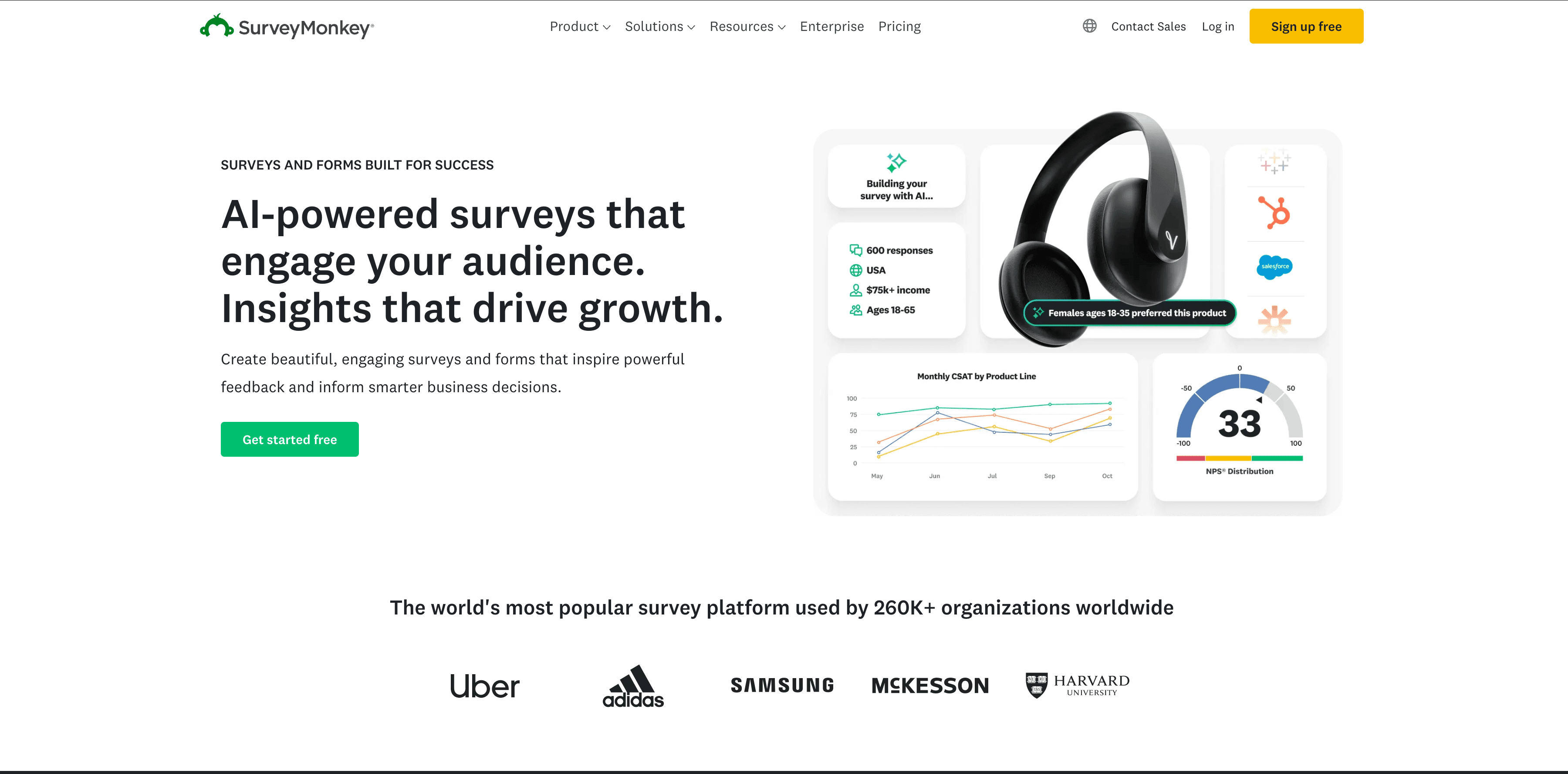

2. SurveyMonkey

SurveyMonkey is the classic online survey platform, trusted by everyone from startups to Fortune 500s for collecting customer, employee, or market feedback at scale.

Why SurveyMonkey Stands Out:

- Survey Templates & AI Builder: Over 400 pre-built templates and an AI-powered question generator make it easy to launch professional surveys in minutes.

- Advanced Logic: Supports skip logic, branching, and even A/B testing questions for more nuanced feedback.

- Distribution & Audience Panel: Share via web, email, social, or even buy targeted responses from SurveyMonkey’s global panel.

- Analytics & Integrations: Real-time dashboards, export to CSV/SPSS, and integrations with Salesforce, Mailchimp, Slack, and more.

Best For: Customer satisfaction surveys, employee engagement, market research, and any scenario where you need polished, scalable feedback collection.

Pricing: Free plan (10 questions, 25 responses per survey); paid plans start around $25/month. Some integrations and advanced features require higher tiers.

Pro Tip: SurveyMonkey’s “Audience” panel is a lifesaver if you don’t have your own list—you can buy responses from specific demographics or regions.

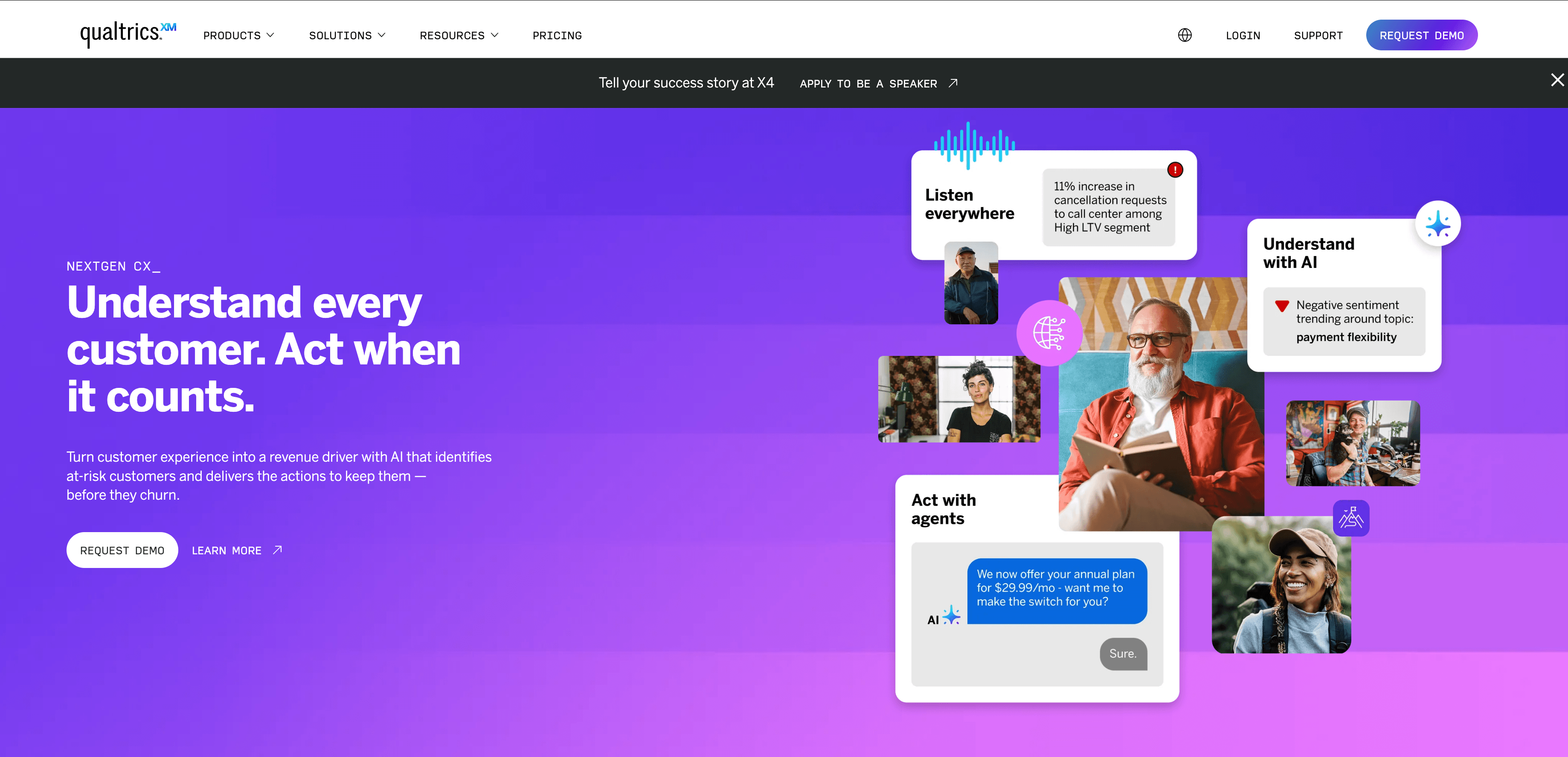

3. Qualtrics

Qualtrics is the heavyweight champion for enterprise-grade surveys, experience management, and deep analytics.

Why Qualtrics Stands Out:

- Complex Survey Logic: Dynamic branching, embedded data, randomization, and advanced question types (conjoint, max-diff, etc.).

- AI Analytics: Built-in sentiment analysis (Text iQ), predictive analytics, and Stats iQ for advanced statistical analysis—no PhD required.

- Workflow Automation: Trigger actions based on responses (e.g., create a support ticket if NPS is low), integrate with Salesforce, SAP, and more.

- Enterprise Security & Scale: Role-based access, compliance (GDPR, HIPAA), and robust support.
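
For readers new to response-triggered workflows, the sketch below shows the general idea in plain Python: compute a Net Promoter Score (percentage of promoters scoring 9-10 minus percentage of detractors scoring 0-6) and flag low-scoring responses for follow-up. It does not use the Qualtrics API. The `follow_ups` function, the ticket format, and the threshold of 6 are assumptions made up for illustration.

```python
def net_promoter_score(ratings: list[int]) -> float:
    """NPS = % promoters (9-10) minus % detractors (0-6), on a -100..100 scale."""
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    return 100.0 * (promoters - detractors) / len(ratings)


def follow_ups(responses: list[dict], threshold: int = 6) -> list[dict]:
    """Return one hypothetical ticket per response scoring at or below the threshold."""
    return [
        {"customer": r["customer"], "reason": f"Low score: {r['score']}"}
        for r in responses
        if r["score"] <= threshold
    ]


# Made-up sample responses.
responses = [
    {"customer": "a@example.com", "score": 10},
    {"customer": "b@example.com", "score": 3},
    {"customer": "c@example.com", "score": 8},
    {"customer": "d@example.com", "score": 9},
]
print(net_promoter_score([r["score"] for r in responses]))
print(follow_ups(responses))
```

In a real setup, the list returned by `follow_ups` would be handed to whatever ticketing or CRM integration your survey platform supports.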

Best For: Large organizations, universities, or research teams running complex, high-volume feedback programs or market research.

Pricing: Enterprise-only (custom quotes, typically in the tens of thousands per year).

Pro Tip: If you need boardroom-ready insights, custom workflows, or to tie survey data directly into business processes, Qualtrics is the gold standard.

4. Google Forms

Google Forms is the no-fuss, totally free solution for quick surveys, polls, or internal data collection.

Why Google Forms Stands Out:

- Simplicity: Drag-and-drop builder, real-time collaboration, and instant linking to Google Sheets.

- Unlimited Free Use: No limits on forms or responses—great for small teams or internal projects.

- Integration: Seamlessly connects with Google Workspace (Sheets, Gmail), and can be extended via Zapier or Google Apps Script.

Best For: Quick surveys, event RSVPs, internal polls, or any scenario where you need to spin up a form in minutes.

Pricing: Free for anyone with a Google account.

Pro Tip: While it’s not the most customizable or analytical, Google Forms is unbeatable for speed and ease—perfect for small businesses or ad-hoc projects.

5. Typeform

Typeform is the king of engaging, interactive surveys, designed to boost response rates with a conversational, one-question-at-a-time interface.

Why Typeform Stands Out:

- Conversational UX: Forms feel like a chat, not a chore, leading to a 47% average completion rate, double the industry average.

- Design & Customization: Beautiful templates, brandable themes, and easy embedding.

- Logic Jumps & Automation: Visual logic builder, workflow automation (send emails, sync to CRM), and integrations with HubSpot, Slack, and more.

Best For: Marketing, lead generation, user research, or any scenario where user experience and high response rates are top priorities.

Pricing: Free plan (10 responses/month); paid plans start at $25/month.

Pro Tip: If your forms look and feel great, people are more likely to finish them. Typeform is my pick for outward-facing surveys where every response counts.

6. Zoho Survey

Zoho Survey is the integrated survey tool for businesses already using Zoho’s suite, or anyone who wants solid features at a budget-friendly price.

Why Zoho Survey Stands Out:

- Zoho Ecosystem Integration: Native connections to Zoho CRM, Campaigns, Analytics, and more.

- Multilingual & Mobile: Supports surveys in multiple languages and offline data collection via mobile app.

- Automation: Skip logic, email triggers, and workflow integration (especially powerful when paired with other Zoho apps).

Best For: Small to mid-sized businesses, especially those already running on Zoho’s platform.

Pricing: Free plan (100 responses/survey); paid plans start around $8/month (annual billing).

Pro Tip: If you’re already using Zoho CRM, Zoho Survey is a no-brainer—it closes the loop between feedback and customer data.

7. JotForm

JotForm is the Swiss Army knife of online forms: great for everything from surveys to order forms, registrations, and payment collection.

Why JotForm Stands Out:

- Drag-and-Drop Builder: Over 2,000 templates and a huge library of widgets (e-signature, calculators, file uploads, and more).

- Conditional Logic & Workflows: Show/hide fields, auto-emails, approval flows, and calculated fields.

- Payment Integration: Collect payments via PayPal, Stripe, Square, and more—right inside your form.

- Integrations: 100+ direct integrations (Salesforce, Google Drive, Airtable, etc.), plus Zapier and API access.
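
If conditional logic and calculated fields sound abstract, this small sketch models both ideas in plain Python. It is not JotForm code. The `OrderForm` class, its field names, and the flat gift-wrap fee are hypothetical and exist only to show how a show/hide rule and a computed total behave.

```python
from dataclasses import dataclass


@dataclass
class OrderForm:
    quantity: int
    unit_price: float
    wants_gift_wrap: bool = False
    gift_message: str = ""

    def visible_fields(self) -> list[str]:
        """Conditional logic: show gift_message only when gift wrap is selected."""
        fields = ["quantity", "unit_price", "wants_gift_wrap"]
        if self.wants_gift_wrap:
            fields.append("gift_message")
        return fields

    def total(self, gift_wrap_fee: float = 3.0) -> float:
        """Calculated field: quantity x unit price, plus a flat fee for gift wrap."""
        subtotal = self.quantity * self.unit_price
        fee = gift_wrap_fee if self.wants_gift_wrap else 0.0
        return round(subtotal + fee, 2)


plain = OrderForm(quantity=2, unit_price=10.0)
wrapped = OrderForm(quantity=2, unit_price=10.0, wants_gift_wrap=True)
print(plain.visible_fields(), plain.total())
print(wrapped.visible_fields(), wrapped.total())
```

A form builder expresses the same rules through a visual editor, but the underlying logic is this simple: one field's value decides which other fields appear and what the total becomes.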

Best For: SMBs needing flexible forms for registrations, orders, surveys, or any data collection that goes beyond basic Q&A.

Pricing: Free plan (5 forms, 100 submissions/month); paid plans start at $34/month.

Pro Tip: JotForm is perfect if you need to collect more than just survey responses—think event sign-ups, order forms, or anything requiring calculations or payments.

8. QuestionPro

QuestionPro is a feature-rich survey platform built for researchers, academic teams, and enterprises that need advanced question types and analytics.

Why QuestionPro Stands Out:

- Advanced Question Types: Supports conjoint, max-diff, side-by-side matrices, and more—ideal for market research.

- Complex Logic: Detailed branching, looping, and quality checks (e.g., attention checks, fraud detection).

- Panel Management: Build and manage respondent panels for ongoing research.

- Integrations: Google Sheets, Tableau, Salesforce, HubSpot, and more (some require higher tiers).

Best For: Market research, academic studies, large-scale feedback programs, or any scenario needing research-grade survey features.

Pricing: Free basic version; paid plans start around $85/user/month for teams.

Pro Tip: If you need “Qualtrics-like” power without the enterprise price tag, QuestionPro is a strong contender—especially for research-heavy teams.

9. Alchemer

Alchemer (formerly SurveyGizmo) is the tool for businesses that want maximum flexibility, deep customization, and workflow automation without the enterprise bloat.

Why Alchemer Stands Out:

- Extreme Customization: Advanced branching, scripting, quotas, and custom branding (even custom HTML/CSS).

- Workflow Automation: 400+ integrations (CRM, databases, helpdesks), unlimited on all plans, and robust triggers (e.g., auto-create support tickets from survey responses).

- Enterprise Features: Role-based access, SSO, strong security, and fast go-live (most customers launch in days, not months).

- Cost-Effective: Delivers 90% of Qualtrics’ functionality at about 20% of the price.

Best For: Enterprises or teams needing highly tailored surveys, complex feedback workflows, or deep integration with business systems.

Pricing: Team and Enterprise plans (mid-range, custom quotes, generally much cheaper than Qualtrics).

Pro Tip: If you want your survey platform to mold to your business—not the other way around—Alchemer is your best bet.

Quick Comparison Table: Online Data Collection Services at a Glance

| Service | Best For | Automation & AI | Integrations | Starting Price | Unique Strength |
|---|---|---|---|---|---|
| Thunderbit | Web scraping, sales leads, e-commerce ops | AI-guided, subpage/pagination, schedule | Excel, Sheets, Airtable, Notion | Free, $15/mo | Easiest AI web scraper, 2-click setup, handles unstructured data |
| SurveyMonkey | Customer/employee feedback, market research | AI question builder, skip logic, A/B | Salesforce, Mailchimp, Slack, more | Free, $25/mo | 400+ templates, buy responses from Audience panel |
| Qualtrics | Enterprise research, CX/EX programs | Advanced logic, Text iQ, workflow | SAP, Salesforce, BI tools, APIs | $$$ (custom) | Deep analytics, workflow automation, enterprise scale |
| Google Forms | Quick surveys, internal forms | Basic skip logic, auto-link to Sheets | Google Workspace, Zapier | Free | Totally free, instant setup, unlimited responses |
| Typeform | High-engagement, marketing, lead gen | Conversational UI, logic jumps, workflow | HubSpot, Slack, Zapier, webhooks | Free, $25/mo | 47% avg completion rate, beautiful design |
| Zoho Survey | SMBs, Zoho users, multi-language | Skip logic, email triggers, mobile app | Zoho CRM, Analytics, Zapier, API | Free, $8/mo | Seamless Zoho integration, affordable for SMBs |
| JotForm | Flexible forms, orders, registrations | Conditional logic, auto-emails, payments | 100+ apps, Salesforce, Drive, API | Free, $34/mo | 2,000+ templates, widgets, payment & e-signature support |
| QuestionPro | Market research, academic, large-scale | Conjoint, branching, panel mgmt, QA | Sheets, Tableau, Salesforce, API | Free, $85/mo | Research-grade features, panel management |
| Alchemer | Custom workflows, enterprise feedback | Advanced logic, scripting, triggers | 400+ (CRM, DB, helpdesk), API | $$ (custom) | Maximum flexibility, unlimited integrations, fast deployment |

Choosing the Right Online Data Collection Service for Your Business

So, which tool is right for you? Here’s how I’d break it down:

- Need to scrape complex web data, automate lead gen, or monitor competitors? Go with Thunderbit. Its AI-guided setup and instant exports make it the easiest choice for web scraping in 2025.

- Running quick, internal surveys or collecting basic feedback? Google Forms is free, fast, and gets the job done for simple needs.

- Want to maximize survey response rates and delight your users? Typeform is the king of engaging, conversational forms.

- Already using Zoho for CRM or operations? Zoho Survey will fit right into your workflow.

- Need advanced analytics, branching, or enterprise-scale feedback? Qualtrics, QuestionPro, or Alchemer are your best bets; pick based on your budget and integration needs.

- Want flexible forms for orders, registrations, or payments? JotForm is the most versatile all-in-one builder.

- Looking for a trusted, all-purpose survey platform? SurveyMonkey is still the workhorse for most feedback needs.

Pro tip: Start with a free trial or plan, run a small pilot, and see which tool fits your workflow and team best. Don’t get seduced by features you’ll never use—pick the simplest tool that meets your needs and integrates with your existing stack.

And remember: the real value isn’t just in collecting data, but in turning it into action. The best tool is the one that helps you close the loop—analyze, share, and make smarter decisions.

FAQs

1. What’s the difference between a web scraper and a survey platform?

A web scraper (like Thunderbit) automatically extracts data from websites—great for collecting product info, prices, or contact details. Survey platforms (like SurveyMonkey or Typeform) are designed to collect structured feedback from people via forms or questionnaires.

2. Which tool is best for collecting customer feedback?

For quick, simple surveys, Google Forms or SurveyMonkey work well. For more advanced analytics or large-scale feedback, Qualtrics or Alchemer are better suited.

3. Can I export data directly to Excel or Google Sheets with these tools?

Yes—Thunderbit, SurveyMonkey, Google Forms, Zoho Survey, JotForm, and others all support direct export to Excel, Sheets, or via integrations.
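
If a tool only gives you raw records (for example through an API or webhook), turning them into a file that Excel or Google Sheets can open takes a few lines of Python. The sketch below uses only the standard library; `rows_to_csv` and the sample rows are hypothetical names and data for illustration, not part of any tool listed here.

```python
import csv
import io


def rows_to_csv(rows: list[dict]) -> str:
    """Serialize dict rows to CSV text, using the union of keys as the header."""
    if not rows:
        return ""
    # Preserve first-seen column order across rows with differing keys.
    fieldnames: list[str] = []
    for row in rows:
        for key in row:
            if key not in fieldnames:
                fieldnames.append(key)
    buffer = io.StringIO(newline="")
    writer = csv.DictWriter(buffer, fieldnames=fieldnames, restval="")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Made-up sample records with slightly different fields.
sample = [
    {"name": "Ada", "email": "ada@example.com"},
    {"name": "Bo", "phone": "555"},
]
print(rows_to_csv(sample))
```

Missing values are written as empty cells, so rows with different fields still line up under one header when the file is opened in a spreadsheet.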

4. Are there free options for online data collection?

Absolutely. Google Forms, the free tiers of SurveyMonkey, Zoho Survey, JotForm, and Thunderbit all let you get started at no cost, though paid plans unlock more features and higher limits.

5. How do I choose the right online data collection service for my business?

Start by defining your primary use case (web scraping, surveys, forms, etc.), your integration needs, and your budget. Test a few tools with a small project, and pick the one that’s easiest for your team and fits your workflow.

Ready to supercharge your data collection in 2025? Try Thunderbit for AI-powered web scraping, or explore more tips and guides on the blog. Happy data hunting!