Here's a stat that should bother every outbound team: the average campaign reply rate sits at just 4.1%. Meanwhile, well-researched, deeply personalized outreach can push into double-digit reply territory. So the formula seems obvious: just personalize more, right?

Not so fast. The problem in 2026 isn't that teams don't personalize. It's that buyers have gotten really good at spotting fake personalization. Some buyers say they'd be less likely to reply if they thought an email was AI-generated, and some now prefer brands that avoid GenAI in customer-facing content.

The real challenge isn't personalization vs. scale. It's personalization vs. credibility. This guide is about building a system that gives you both—without tripping the "this is fake" alarm.

What Is Cold Email Personalization (and Why Most Teams Still Get It Wrong)?

Cold email personalization means making every outreach message feel like it was written specifically for one person—not pulled from a blast template. But here's where most teams go sideways: they think personalization equals more merge tags. It doesn't. Personalization equals relevance.

The spectrum runs from basic token swaps ({FirstName}, {CompanyName}) all the way to context-rich references tied to a prospect's actual situation: a recent hire surge, a product launch, a pricing page overhaul. An email that nails a prospect's likely pain point with zero name-drops outperforms one stuffed with merge fields but saying nothing meaningful.

Community complaints confirm this. A Reddit commenter compared the classic "I noticed you're in the [industry] space" opener to saying "I noticed you have a face." Another sales practitioner on LinkedIn called out the "I came across your company and was impressed by…" line. The pattern is clear: recipients don't reject personalization. They reject lazy personalization that could apply to anyone.

One more thing worth stating upfront: personalization quality depends on research quality. The writing is downstream. If the data going in is thin, no template or AI prompt will save the output.

The Numbers Don't Lie: Cold Email Reply Rates by Personalization Tier

I've spent a lot of time cross-referencing vendor benchmarks, community-reported numbers, and our own observations at Thunderbit. The clearest way to frame the data is in tiers—because personalization isn't binary. It's a spectrum, and each level has a different effort-to-reward ratio.

| Personalization Tier | Effort per Email | Typical Open Rate | Typical Reply Rate | Best For |
|---|---|---|---|---|
| None (batch blast) | ~0 sec | 20–30% | <1–3% | ❌ Not recommended |
| Basic (name + company merge) | ~5 sec | 35–45% | 3–6% | Low-value, high-volume lists |
| Segment-based (ICP + pain point) | ~30 sec | 40–50% | 5–8% | Mid-market outbound at scale |
| Deep 1:1 (researched first line) | 3–5 min | 50%+ | 8–15% | Enterprise / high-ACV accounts |

A few honest caveats: these ranges shift with industry, list quality, and sending reputation. Open rates are especially noisy, because image blocking and privacy features distort tracking. And Hunter found that campaigns with open tracking actually got lower reply rates than untracked ones.

Still, the directional pattern is consistent across every dataset I've reviewed: more relevant personalization → more replies. The question is where to draw the line.

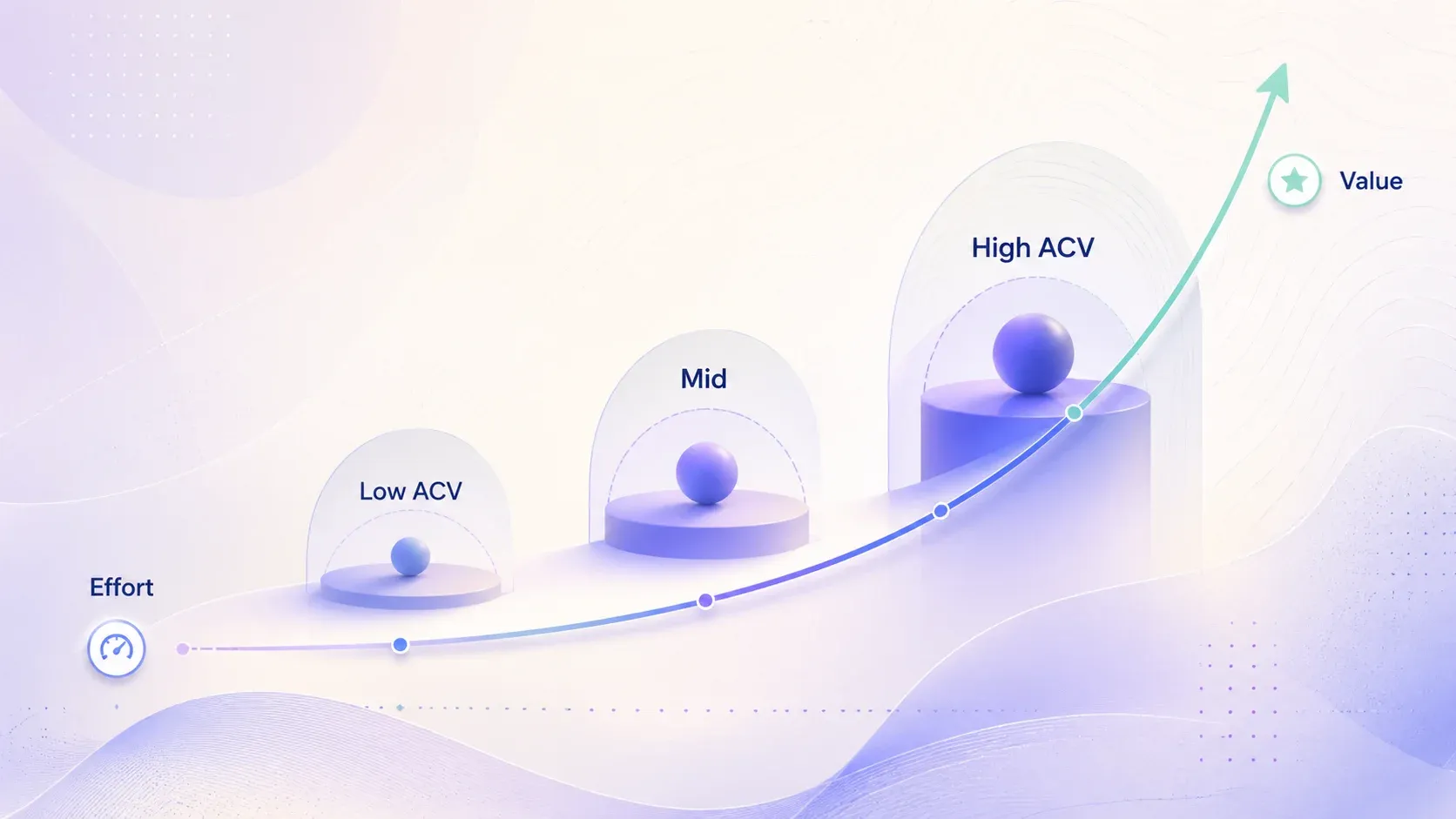

When Deeper Personalization Stops Being Worth the Extra Effort

There's a diminishing-returns curve, and it's tied to deal size. If you're selling a $500/month product, spending five minutes per prospect on custom research probably doesn't pencil out. If you're chasing a $50K+ annual contract, it absolutely does.
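
To make the diminishing-returns point concrete, here is a rough sketch that converts research time into minutes spent per reply. The per-email minutes and reply rates are midpoints I picked from the tier table, so treat them as illustrative assumptions, not measurements; the function and dictionary names are made up for this example.

```python
# Illustrative midpoints from the tier table; assumptions, not measurements.
TIERS = {
    "basic_merge": {"minutes_per_email": 5 / 60, "reply_rate": 0.045},
    "segment_based": {"minutes_per_email": 0.5, "reply_rate": 0.065},
    "deep_1to1": {"minutes_per_email": 4.0, "reply_rate": 0.115},
}


def minutes_per_reply(minutes_per_email: float, reply_rate: float) -> float:
    """Average research minutes spent to earn one reply."""
    if reply_rate <= 0:
        raise ValueError("reply_rate must be positive")
    return minutes_per_email / reply_rate


for name, tier in TIERS.items():
    print(f"{name}: {minutes_per_reply(**tier):.1f} min per reply")
```

Under these assumptions, deep 1:1 research costs several times more minutes per reply than segment-based outreach. Whether that is worth it depends on what a reply is worth, which is why deal size drives the decision.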

A practical rule of thumb:

- ACV above ~$30K–50K: Deep 1:1 personalization is justified. The payoff per reply is high enough to absorb the research cost.

- ACV $5K–$30K: Segment-based personalization is the sweet spot. Build 5–8 persona-specific templates around real pain points.

- ACV below $5K: Basic merge personalization, but only with a very clean, well-targeted list.

Published benchmarks support this framing: higher-ACV teams should benchmark against tighter reply-rate expectations and invest more per prospect.
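
If you keep ACV in your CRM, the rule of thumb is easy to encode. A minimal sketch: the function name and tier labels are hypothetical, and the hard $30K cutoff is my assumption, since the rule gives a ~$30K–50K band instead of a single line.

```python
def personalization_tier(acv_usd: float) -> str:
    """Map annual contract value (USD) to a personalization tier.

    The 30_000 cutoff is an assumption; the rule of thumb gives a
    ~$30K-50K band, so tune it to your own economics.
    """
    if acv_usd >= 30_000:
        return "deep_1to1"
    if acv_usd >= 5_000:
        return "segment_based"
    return "basic_merge"


for acv in (2_400, 12_000, 60_000):
    print(acv, personalization_tier(acv))
```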

How to Collect Personalization Signals Without Losing Your Mind

Most personalization guides jump straight to writing. That's backwards. The hardest part of personalization at scale isn't generating sentences. It's finding recent, useful, and role-relevant signals fast enough to justify the effort.

This is the data pipeline step that competitors skip—and it's where the real bottleneck lives.

What Signals to Look For (and Where to Find Them)

Not all signals are created equal. The best ones are recent and specific enough that they can't be faked. "Your company is growing" is weak. "You posted three DevOps roles in two weeks" is strong—because it implies a likely operational pressure point.

Here's what to look for and where it usually lives:

| Signal | Where to Find It |
|---|---|
| Recent funding rounds | Crunchbase, press releases, investor pages |
| Hiring surges / role clusters | Careers pages, LinkedIn Jobs, job boards |
| Tech-stack changes | Engineering blog, job descriptions, product docs |
| Pricing / packaging changes | Pricing page, changelog, product-marketing pages |
| Positioning shifts | Homepage, solution pages, company blog |
| Executive priorities | Earnings calls, podcasts, LinkedIn posts |

The key is that each signal should connect to a plausible business challenge. A funding round implies scaling pressure. A cluster of DevOps hires implies infrastructure pain. A pricing page overhaul implies competitive repositioning. You're not just collecting facts—you're building hypotheses about what the prospect cares about right now.
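
One way to keep that discipline is to store each signal next to the hypothesis it supports, so a fact never reaches a draft without a "so what." The sketch below is only an illustration: the `Signal` class, the `kind` labels, and the mapping are hypothetical names, with the three hypotheses taken from the examples in this section.

```python
from dataclasses import dataclass
from typing import Optional

# Signal kind -> likely business challenge (examples from this section).
SIGNAL_HYPOTHESES = {
    "funding_round": "scaling pressure",
    "devops_hiring_cluster": "infrastructure pain",
    "pricing_page_overhaul": "competitive repositioning",
}


@dataclass
class Signal:
    kind: str     # one of the keys above
    detail: str   # the specific, verifiable fact
    source: str   # where it was found, for later QC


def hypothesis_for(signal: Signal) -> Optional[str]:
    """Return the business challenge a signal implies, or None if unmapped."""
    return SIGNAL_HYPOTHESES.get(signal.kind)


s = Signal("devops_hiring_cluster", "3 DevOps roles posted in two weeks", "careers page")
print(s.detail, "->", hypothesis_for(s))
```

A signal that maps to `None` is a prompt to either write the hypothesis down or drop the signal.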

Speed Up Research with AI Web Scraping (Without Sacrificing Data Quality)

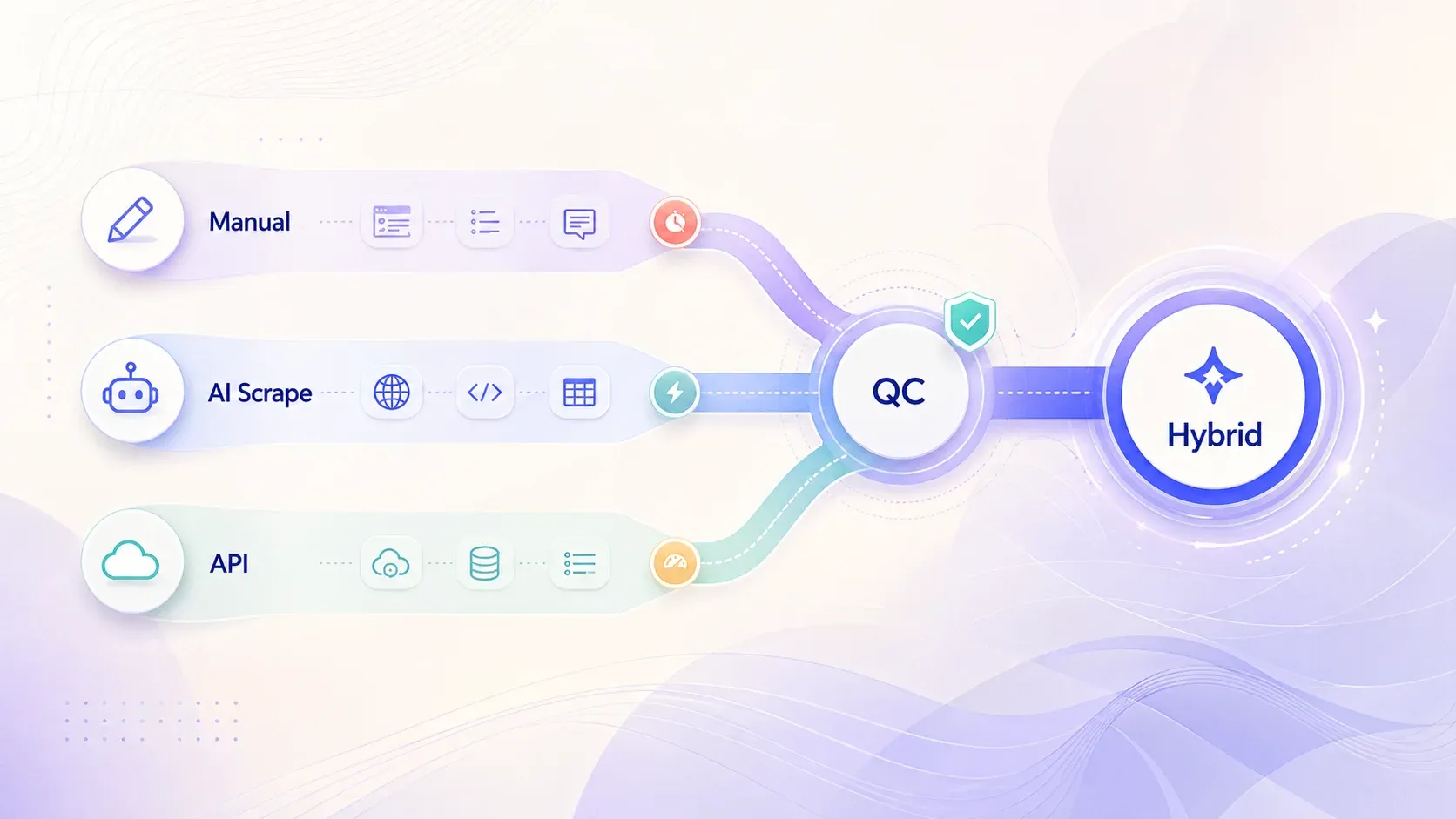

Manual research is thorough, but it's slow. In my experience, fully manual prospect research tops out around 5–10 prospects per hour—and that's with a focused SDR who knows where to look. For most outbound teams, that's not sustainable at scale.

This is where AI-powered web scraping fits naturally. At Thunderbit, we built our Chrome extension to handle exactly this workflow: visit a prospect's company website, let the AI scan team pages, product pages, careers sections, About Us details, and blog posts, then export structured data to Google Sheets or your CRM. Subpage scraping is especially useful here: you don't have to manually click through every section of a site. The scraper visits relevant subpages automatically and enriches the dataset without the tab-switching marathon.

Here's how the research methods compare in practice:

| Research Method | Prospects/Hour | Data Quality | Cost |
|---|---|---|---|
| Fully manual (Google + LinkedIn) | 5–10 | High | Free (just time) |
| AI web scraper (e.g., Thunderbit) + manual review | 40–80 | High (with QC) | Low |
| Enrichment API only (no web context) | 100+ | Medium (structured only) | Medium–High |

The hybrid approach—AI scrape plus human review—consistently hits the best balance. Enrichment APIs are fast, but they miss the nuanced, narrative signals (recent blog posts, pricing changes, leadership commentary) that make personalization feel real. Manual research catches everything but doesn't scale. The middle path is where most teams should land.

How to Personalize Each Part of a Cold Email (With Before-and-After Examples)

Once you have signals, the next step is turning them into email copy that feels specific—not scripted. Each section of a cold email serves a different function, and each needs a different kind of personalization.

Subject Lines That Get Opened

The subject line's job is to earn the open. The data here is mixed: one benchmark reported 46% opens for personalized subject lines vs. 35% without, but Lavender's research suggests that first-name personalization in subject lines can actually reduce replies by 12%. Another dataset even found non-personalized subject lines outperforming personalized ones on opens (41.87% vs. 35.78%).

The resolution is simple: contextual specificity beats cosmetic name-drops.

- Before: "Quick question for you"

- After: "Your new Kubernetes migration"

The second subject line signals that the sender knows something specific. It doesn't need a first name to feel personal.

Opening Lines That Feel Specific, Not Scripted

The opening line is the make-or-break moment. It must reference a specific, verifiable signal—not a generic compliment. Here's a quick checklist:

- Is it specific to THIS person or company?

- Could it only be true for them? (If it could apply to 100 other companies, rewrite it.)

- Does it connect to a business challenge, not just flattery?

- Before: "I noticed your company is doing great things in the SaaS space."

- After: "Saw your team just posted three DevOps roles this month. Scaling infrastructure that fast usually means deployment bottlenecks are piling up."

The first one is the cold-email equivalent of "nice shirt." The second one proves the sender did homework and has a hypothesis about the prospect's world.

Body Copy That Shows You Understand Their Workflow

The body should bridge the personalized opener to the value proposition. Don't rehash the opener. Don't list features. Use a "bridge sentence" that connects the signal to the problem you solve, then add a peer reference for credibility.

Keep it to 2–3 sentences. One benchmark shows the best-performing campaigns keep emails under 80 words. Another found 6–8 sentence emails averaging a 6.9% reply rate, but shorter and tighter generally wins in cold outreach.

- Before: "We offer a cloud infrastructure platform with auto-scaling, CI/CD pipelines, and 24/7 monitoring."

- After: "We helped [peer company]'s DevOps team cut deployment time by 40% after a similar hiring surge—without adding headcount to the ops team."

CTAs That Feel Relevant, Not Generic

Match the ask to the trust level. Cold prospects don't want to "schedule a demo." They want low-commitment next steps.

- Before: "Let me know if you'd like to schedule a demo."

- After: "Happy to share the playbook we used with [similar company]—want me to send it over?"

The second CTA offers value before asking for time. That's a much lower bar for a stranger to clear.

Cold Email Personalization by Buyer Persona: What Works for the CFO vs. the CTO vs. the VP Sales

One of the most underappreciated findings in recent cold-email research is that the same personalization quality performs very differently across roles. Lavender's benchmarking data shows:

- Finance buyers averaged 3.2% reply rate, but high-quality finance emails jumped to 5.7%—a 79% lift.

- Marketing buyers averaged 3.2%, rising to 4.2%—a 31% lift.

- Technical buyers averaged 5.2%, but stronger emails only moved them to 5.5%—about 6% lift.

The implication is clear: what counts as "relevant" is persona-dependent. A CFO cares about margin pressure and cost efficiency. A CTO cares about technical fit and engineering velocity. Using the same angle for both is lazy, and the data shows it.
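
The lift figures are relative changes, and it's worth sanity-checking them against the quoted averages. A minimal sketch using only the rounded numbers from the list above. Because those inputs are rounded, results can differ slightly from the published lifts: the finance figure comes out near 78%, not 79%.

```python
def relative_lift(baseline: float, improved: float) -> float:
    """Relative change from baseline to improved, as a percentage."""
    return (improved - baseline) / baseline * 100


# (average reply rate %, high-quality reply rate %) as quoted above
personas = {
    "finance": (3.2, 5.7),
    "marketing": (3.2, 4.2),
    "technical": (5.2, 5.5),
}

for name, (avg, best) in personas.items():
    print(f"{name}: {relative_lift(avg, best):.0f}% lift")
```

The same helper works on your own campaign data if you want to compare personas before deciding where extra research effort pays off.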

| Buyer Persona | Signals That Resonate | Personalization Angle | Example Opening Line |
|---|---|---|---|
| CFO / Finance | Revenue milestones, funding, margins | ROI & cost reduction | "Saw your Q3 report highlighted margin pressure in logistics…" |
| CTO / Engineering | Tech stack, hiring for specific roles, open-source contributions | Technical fit & efficiency | "Noticed your team's migrating to Kubernetes; we helped [peer] cut deployment time by 40%…" |
| VP Sales / CRO | Quota attainment, team growth, new market entry | Pipeline & conversion impact | "Your sales team grew 3x this year, curious if outbound infrastructure kept pace…" |
| Marketing Lead | Campaign launches, content strategy shifts, brand mentions | Awareness & demand gen | "Your recent rebrand caught my eye; the positioning shift toward enterprise is smart…" |

The practical takeaway: build 5–8 strong templates mapped to specific personas and pain points. This segment-based approach often outperforms sloppy 1:1 AI lines—because a well-crafted template with the right angle beats a poorly researched "personalized" opener every time.

The Section Every Guide Skips: How to Keep Personalization Alive Across Emails 2–5

This is the single biggest gap in cold email advice right now. I've read dozens of guides, and almost none of them address what happens after email 1. Yet most cold email campaigns run 3–5 touches, and benchmark data shows follow-ups capture 42% of all replies. By some reports, the first follow-up can get a 40% higher reply rate than the opener.

The problem? Personalization usually drops to zero after email 1. Follow-ups become generic nudges: "Just checking in," "Bumping this to the top of your inbox," "Did you get a chance to see my last email?"

That's a waste. Each follow-up is a chance to introduce new evidence that you're paying attention. Here's a framework we've found effective:

Email 1: The Deep Personalized Opener

Use the strongest researched signal as the hook. This is where you spend the most effort—it establishes credibility for the rest of the sequence.

Email 2: Reference a New, Different Signal

Don't repeat email 1's signal. Find a second one from a different source—a recent LinkedIn post, a new job listing, a company blog update. Callback to email 1's value prop: "Following up on my note about [X]—also noticed [new signal]."

Email 3: Shift Angle with Peer Proof or Competitor Insight

Use a case study or competitor insight relevant to their segment. "Teams like [peer company] in [their industry] faced the same challenge and saw [result]." This reduces perceived risk and adds social proof.

Email 4: Use a Timing Trigger

Reference a real-time event: "Noticed your team just posted a role for [X]—that usually means [Y challenge] is on your radar." This keeps the sequence feeling current, not automated.

Email 5: The Breakup Email with a Personalized Summary

Summarize why you reached out, what signals you noticed, and what value you offered. Keep it short and respectful: "I'll stop reaching out—but wanted to leave you with [resource] in case [pain point] comes up down the road."

One important caveat: benchmark data shows spam complaints rising from 0.5% on email 1 to 1.6% on email 4, and unsubscribe rates hitting 2% by round 4. So each follow-up needs to add genuine value. If you're just nudging, you're burning trust.
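
The five-email framework can be sketched as a small planner that hands each signal-dependent step its own unused signal and stops the sequence when signals run out, so it never falls back to a generic nudge. The step names and the `plan_sequence` function are hypothetical labels for this illustration, and stopping early is my design choice, not a rule from any benchmark.

```python
from typing import Optional

# (step name, needs a fresh signal?) mirroring the five emails above
STEPS = [
    ("deep_opener", True),     # strongest researched signal
    ("new_signal", True),      # a second, different signal
    ("peer_proof", False),     # case study or competitor insight
    ("timing_trigger", True),  # a recent, real-time event
    ("breakup", False),        # summary of what was already sent
]


def plan_sequence(signals: list[str]) -> list[dict]:
    """Assign one unused signal to each step that needs one.

    Stops early when no fresh signal is left for a step that needs one.
    """
    remaining = list(dict.fromkeys(signals))  # drop duplicates, keep order
    plan = []
    for step, needs_signal in STEPS:
        signal: Optional[str] = None
        if needs_signal:
            if not remaining:
                break
            signal = remaining.pop(0)
        plan.append({"email": len(plan) + 1, "step": step, "signal": signal})
    return plan


for row in plan_sequence(["3 DevOps roles posted", "Series B announced", "New SRE role"]):
    print(row)
```

With only two distinct signals, the plan ends after the peer-proof email. That is the cue to go back and research before sending a fourth touch.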

The AI Personalization Trust Problem: What Gets Flagged and How to Fix It

AI can help with personalization at scale. But unchecked AI personalization can actually hurt reply rates. The evidence is pretty damning:

- Some respondents in a 2025 Adobe Express survey said they'd received at least one brand email written by AI.

- Some had unsubscribed because they suspected an email was AI-written.

- Others say it bothers them if AI was used, unless the result still feels human and relevant.

The issue isn't that AI is involved. It's that robotic phrasing, hallucinated facts, and fake admiration are what trigger distrust. A Reddit user described the "I noticed you…" pattern as "a template pretending to be a person." That's the failure mode.

A QC Checklist for AI-Generated Personalization Lines

Before any AI-drafted email goes out, run it through these five checks:

- Is the referenced fact verifiable? Google it. If the AI fabricated a detail (hallucination risk is real—community reports suggest roughly 1 in 40 leads), you'll lose credibility instantly.

- Could this compliment apply to 100 other companies? If yes, rewrite.

- Does it use "I noticed…" or "I was impressed by…"? These are the telltale AI-default openers. Rephrase.

- Are the company name, role, and industry all correct? Check for hallucinations.

- Does it connect to a real business problem, or is it just flattery?
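
Parts of this checklist can be automated as a pre-filter before the human pass. The sketch below is a rough illustration: the phrase lists and the `qc_flags` function are hypothetical, and the "verified fact" check only confirms that a fact you already verified appears in the line. It does not verify anything itself.

```python
# Hypothetical phrase lists; extend them with the cliches your team keeps seeing.
BANNED_OPENERS = ("i noticed", "i was impressed by", "i came across")
GENERIC_PHRASES = ("doing great things", "in the saas space", "your company is growing")


def qc_flags(opener: str, verified_facts: list[str]) -> list[str]:
    """Return a list of QC flags for an opening line (empty list = passes)."""
    flags = []
    text = opener.strip().lower()
    if text.startswith(BANNED_OPENERS):
        flags.append("banned_opener")
    if any(phrase in text for phrase in GENERIC_PHRASES):
        flags.append("generic_phrase")
    # The line should mention at least one fact a human has already verified.
    if not any(fact.lower() in text for fact in verified_facts):
        flags.append("no_verified_fact")
    return flags


facts = ["three DevOps roles"]
print(qc_flags("I noticed your company is doing great things in the SaaS space.", facts))
print(qc_flags("Saw your team just posted three DevOps roles this month.", facts))
```

Anything that comes back with flags goes to a human for a rewrite. A clean result still deserves a quick read, since string matching can't judge whether the line connects to a real business problem.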

Prompt Engineering Tips for Better AI Output

The quality of AI personalization is downstream of the data you feed it. A vague prompt produces vague output. A constrained prompt with real signals produces something usable.

- Bad prompt: "Write a personalized first line for [company]."

- Better prompt: "Using this data about [prospect]: [paste scraped data from Thunderbit or CRM]. Write a 1-sentence opening line that references their [specific signal] and connects it to [pain point]. Write in a casual, direct tone. Do not start with 'I noticed' or 'I was impressed.'"

The difference is night and day. The first prompt gives the AI nothing to work with. The second gives it constraints, context, and a clear output format.
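
If your scraped data lives in a spreadsheet or CRM export, the better prompt can be assembled programmatically so every draft gets the same constraints. A minimal sketch that only builds the prompt string and makes no assumptions about which model or API you send it to; `build_opener_prompt` and the field names in the example are hypothetical.

```python
def build_opener_prompt(prospect: dict, signal: str, pain_point: str) -> str:
    """Build a constrained prompt for a one-sentence opening line."""
    data_lines = "\n".join(f"- {key}: {value}" for key, value in prospect.items())
    return (
        f"Using this data about {prospect['name']}:\n{data_lines}\n\n"
        f"Write a 1-sentence opening line that references {signal} "
        f"and connects it to {pain_point}. "
        "Write in a casual, direct tone. "
        "Do not start with 'I noticed' or 'I was impressed.'"
    )


prompt = build_opener_prompt(
    {"name": "Jane Doe", "company": "ExampleCo", "careers_page": "3 DevOps roles posted this month"},
    signal="the three DevOps roles posted this month",
    pain_point="deployment bottlenecks",
)
print(prompt)
```

Keeping the banned openers inside the prompt doesn't guarantee the model obeys, so the QC checklist still applies to whatever comes back.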

AI vs. Manual vs. Hybrid: An Honest Comparison

| Approach | Volume/Day | Quality | Hallucination Risk | Best For |
|---|---|---|---|---|
| Fully AI-generated | 200+ | Low–Medium | ⚠️ High | Only with rigorous QC layer |
| AI draft + human edit | 50–100 | High | Low (caught in edit) | Most B2B outbound teams |
| Fully manual research + writing | 10–20 | Very High | None | Enterprise ABM plays |

For most teams, the hybrid approach (AI draft plus human edit) is the sweet spot. You get the speed of automation with the judgment of a real person catching errors, removing clichés, and sharpening the angle. The message here isn't "personalize every email with AI." It's personalize strategically, and verify ruthlessly.

Tools and Approaches for Cold Email Personalization at Scale

No single tool covers the entire personalization workflow. The best stacks combine layers, each doing one job well.

| Tool Type | What It Does | Strengths | Limitations |
|---|---|---|---|
| AI web scraper (e.g., Thunderbit) | Extracts prospect data from websites in bulk | Captures unstructured signals (blogs, team pages, careers); subpage scraping | Requires human review for QC |
| Enrichment API (e.g., Apollo, Clearbit) | Appends firmographic/technographic data to leads | Fast, structured data at scale | Misses nuanced signals (recent blog posts, pricing changes) |
| AI writing assistant (e.g., Lavender) | Scores and suggests improvements to email copy | Real-time feedback, tone analysis | Still needs quality input data |
| Cold email platform (e.g., Saleshandy, Smartlead) | Sends personalized sequences with merge fields and scheduling | Automates delivery, tracks opens/replies | Personalization quality depends on what you feed it |

The workflow that makes sense for most teams:

Scrape → Normalize → Enrich → Draft → QC → Send → Track

Thunderbit handles the scrape-and-normalize step: pull structured data from company websites, export to Google Sheets or Excel, then feed that into your enrichment and sending tools. Apollo or similar handles firmographic enrichment. Lavender or ChatGPT helps with drafting. Saleshandy or Smartlead handles delivery and tracking.

The point is that these tools are complementary, not competing. A scraper without a sender is just a spreadsheet. A sender without quality data is just a spam cannon.

Step-by-Step: How to Personalize Cold Emails at Scale (Putting It All Together)

Here's the consolidated workflow, pulling every earlier section into a repeatable system. Think of it as the playbook we'd follow if we were building a cold email personalization engine from scratch today.

Step 1: Define Your ICP and Segment Your List

Before personalizing anything, segment your prospect list by persona (CFO, CTO, VP Sales, etc.) and by account tier (enterprise = deep 1:1, mid-market = segment-based). This determines how much research effort each prospect gets.

Step 2: Scrape Personalization Signals in Bulk

Use Thunderbit or a similar AI web scraping tool to pull prospect data from company websites, LinkedIn, job boards, and other public sources. Use Thunderbit's "AI Suggest Fields" to let the tool automatically identify what data to extract. Export the structured output to Google Sheets or your CRM.

Step 3: Build 5–8 Persona-Specific Templates

Write segment-based templates for each persona, each centered around a specific pain point. Leave placeholders for the personalized opener and bridge sentence. The template handles the body and CTA; the personalization layer handles the first 1–2 sentences.
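
A template with explicit placeholders can be as simple as Python's built-in `string.Template`. This is a sketch under assumptions: the two persona templates and the `render_email` helper are made-up examples, not copy we've tested. Using `substitute` means a missing opener or bridge raises an error instead of sending an email with a hole in it.

```python
from string import Template

# Hypothetical persona templates: body and CTA are fixed, opener and bridge vary.
TEMPLATES = {
    "cto": Template(
        "$opener\n\n$bridge\n\n"
        "Happy to share the playbook we used with $peer. Want me to send it over?"
    ),
    "cfo": Template(
        "$opener\n\n$bridge\n\n"
        "I can send a one-page cost breakdown from $peer if that's useful."
    ),
}


def render_email(persona: str, opener: str, bridge: str, peer: str) -> str:
    """Fill a persona template; raises KeyError for an unknown persona."""
    return TEMPLATES[persona].substitute(opener=opener, bridge=bridge, peer=peer)


print(render_email(
    "cto",
    opener="Saw your team just posted three DevOps roles this month.",
    bridge="Scaling that fast usually means deployment bottlenecks pile up.",
    peer="[peer company]",
))
```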

Step 4: Write (or AI-Draft) Personalized Openers

Using the scraped data, manually write or AI-draft opening lines for each prospect. Apply the QC checklist before anything goes out. If you're using AI, feed it the scraped signals and constrain the output format.

Step 5: Build a Multi-Touch Sequence with Fresh Signals at Each Step

Map out 3–5 emails per prospect, with a different personalization signal at each touchpoint. Email 1 gets the deepest signal. Each follow-up introduces new context—a different data point, a peer proof, a timing trigger.

Step 6: Send, Track, and Iterate

Use a cold email platform to schedule and send. Track open rates, reply rates, and positive reply rates by personalization tier and persona. Iterate on which signals and angles drive the best results. Double down on what works; cut what doesn't.
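
Most sending platforms can export a send log, and breaking reply rates down by tier and persona takes only a few lines. A minimal sketch, assuming each exported row has `tier`, `persona`, and `replied` fields. Those field names are my assumption, so map them to whatever your platform actually exports.

```python
from collections import defaultdict


def reply_rates(sends: list[dict]) -> dict:
    """Reply rate per (tier, persona) pair from a list of send records."""
    counts = defaultdict(lambda: [0, 0])  # key -> [sent, replied]
    for row in sends:
        key = (row["tier"], row["persona"])
        counts[key][0] += 1
        counts[key][1] += int(bool(row["replied"]))
    return {key: replied / sent for key, (sent, replied) in counts.items()}


log = [
    {"tier": "segment_based", "persona": "cto", "replied": False},
    {"tier": "segment_based", "persona": "cto", "replied": True},
    {"tier": "deep_1to1", "persona": "cfo", "replied": True},
    {"tier": "deep_1to1", "persona": "cfo", "replied": False},
    {"tier": "deep_1to1", "persona": "cfo", "replied": False},
]
for key, rate in reply_rates(log).items():
    print(key, f"{rate:.0%}")
```

With small samples these rates swing wildly, so wait for a meaningful number of sends per group before cutting a template.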

The whole process—from scraping to sending—can be stood up in a few days for most teams. The ongoing maintenance is mostly about refreshing signals and tuning templates based on performance data.

Key Takeaways

Cold email personalization at scale isn't about choosing between quality and volume. It's about building a system that gives you both—without faking it.

- Relevance beats flattery. A segment-based template with the right angle outperforms a sloppy AI-generated "I noticed…" opener.

- Research quality = personalization quality. The bottleneck isn't writing; it's finding recent, specific, role-relevant signals fast enough. AI web scraping (like Thunderbit) compresses that bottleneck dramatically.

- Persona matters. What moves a CFO is different from what moves a CTO. Map your templates to buyer roles, not just company names.

- Follow-ups need fresh signals. Personalization shouldn't die after email 1. Each touch in the sequence should introduce new evidence that you're paying attention.

- AI helps, but only with guardrails. The hybrid approach—AI draft plus human edit—is the most reliable method for most teams. Verify facts, ban canned phrases, and never send something you wouldn't read yourself.

A practical next step: audit your current outreach. Which personalization tier are you at today? What would it take to move up one level? Even going from "basic merge" to "segment-based" can meaningfully shift your reply rates—without requiring a massive time investment.

If you want to start building your research pipeline, try Thunderbit on a small list and see how quickly you can turn a set of prospect URLs into structured, usable signals.

FAQs

Does cold email personalization actually improve reply rates?

Yes, and the data is consistent across multiple benchmarks. Non-personalized batch blasts typically sit around 1–3% reply rate, while well-executed deep personalization can reach 8–15%. The exact numbers vary by industry, list quality, and sending reputation, but the directional lift is real.

How long should I spend researching each prospect?

It depends on account value. For enterprise deals ($50K+ ACV), 3–5 minutes per prospect is justified. For mid-market at scale, use AI web scraping tools to bring research time down to 30–60 seconds per prospect with a human QC pass. The hybrid model—AI scrape plus manual review—consistently delivers the best speed-to-quality ratio.

Can AI write personalized cold emails that don't sound fake?

AI can draft personalization, but it needs quality input data and human review. The biggest risks are hallucinated facts, generic compliments, and telltale phrasing like "I noticed…" or "I was impressed by…" The most reliable approach for most B2B teams is AI draft plus human edit—catching errors and sharpening angles before anything goes out.

How many follow-up emails should I send, and should each one be personalized?

The most defensible range is 3–5 follow-ups (4–6 total touches). Yes, each follow-up should include at least one fresh, personalized signal. Benchmark data shows follow-ups capture 42% of all replies, but it also warns that spam complaints and unsubscribe rates rise after the third follow-up unless each touch adds new value.

Is cold email personalization legal?

Cold emailing is legal when done correctly. In the U.S., the CAN-SPAM Act applies fully to B2B commercial email; there is no B2B exemption. Key requirements: accurate subject lines, clear sender identification, a valid postal address, a working opt-out mechanism, and honoring opt-outs within 10 business days. In the UK/EU, GDPR and the ePrivacy rules (PECR in the UK) are stricter and require more caution around consent and data handling.
