Walmart.com has roughly $50 billion in ecommerce-related net sales and some of the most aggressive anti-bot defenses in retail. If you've ever tried to scrape product data from Walmart (prices, stock levels, seller info), you've probably hit a wall that returned blank fields or a CAPTCHA page instead of the data you needed.

I spent weeks testing 9 different Walmart scraping tools, from no-code Chrome extensions to enterprise-grade APIs. My goal was simple: find out which ones actually return usable Walmart product data in 2026, and which ones just burn your credits. The answer depends a lot on who you are—a solo seller tracking 50 SKUs, a dev building a pipeline, or an enterprise team monitoring thousands of products daily. Below, I'll walk through what worked, what didn't, and how to pick the right tool for your situation.

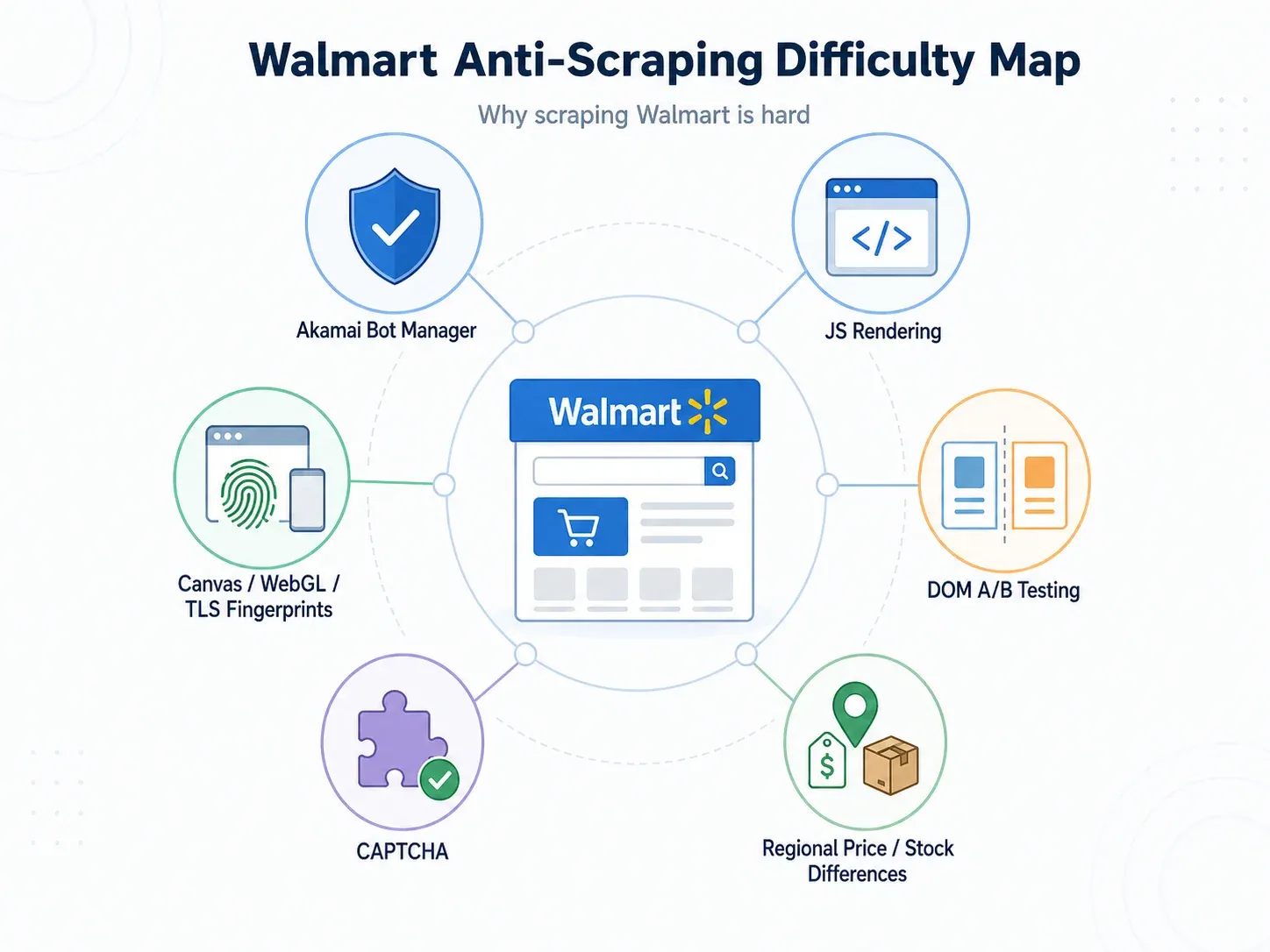

Why Scraping Walmart Is Harder Than Most Retail Sites

Most people assume scraping Walmart is like scraping any other retail site. It isn't. Walmart's anti-bot stack is commonly rated 9/10 difficulty by scraping industry sources, and for good reason.

Here's what you're actually up against:

- Akamai Bot Manager: Walmart uses Akamai Bot Manager, which scores requests using AI/ML-driven behavior analytics, browser/device fingerprinting, HTTP anomaly detection, and user-interaction signals. Akamai processes 40 billion bot requests daily and analyzes 946 TB of new security data per day.

- JavaScript-rendered content: Prices, fulfillment options, seller info, and stock status often don't appear in the initial HTML. You need a full browser render to see them.

- Canvas/WebGL/TLS fingerprinting: As one production thread put it, "Walmart fingerprints more than just your IP—canvas, WebGL, timing, TLS." Standard proxy rotation alone won't cut it.

- Frequent A/B test DOM changes: Walmart runs constant layout experiments. A CSS selector that grabbed the price on Monday can return an empty string by Wednesday—without any obvious error.

- CAPTCHA interception: Some scrapers silently ingest a CAPTCHA challenge page and treat it as "success," leaving you with garbage data.

The practical result? A scraper that "works" on most retail sites will often fail silently on Walmart—returning HTTP 200 responses with missing or incorrect data.
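One way to guard against the silent failures described above is to validate every response before storing it. The sketch below is illustrative only: the marker strings, the required field names, and the function names are assumptions, not taken from any vendor's API, and real challenge pages may use different wording.

```python
from typing import Mapping

# Hypothetical marker strings; real challenge pages may be worded differently.
CAPTCHA_MARKERS = ("captcha", "robot or human", "verify you are human")
# Assumed minimum set of fields a usable product record must carry.
REQUIRED_FIELDS = ("title", "price", "availability")


def looks_like_challenge_page(html: str) -> bool:
    """Return True if the HTML contains any of the assumed challenge markers."""
    lowered = html.lower()
    return any(marker in lowered for marker in CAPTCHA_MARKERS)


def missing_fields(record: Mapping[str, object]) -> list[str]:
    """List required fields that are absent, None, or blank strings."""
    missing = []
    for name in REQUIRED_FIELDS:
        value = record.get(name)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(name)
    return missing


def is_usable(status_code: int, html: str, record: Mapping[str, object]) -> bool:
    """Usable means: HTTP 200, not a challenge page, and no required field missing."""
    return (
        status_code == 200
        and not looks_like_challenge_page(html)
        and not missing_fields(record)
    )
```

With a check like this, an HTTP 200 that carries a challenge page or an empty price is counted as a failure rather than a success.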

Anti-Bot Challenge Matrix

| Challenge | What Happens | Tools That Handle It |
|---|---|---|
| JS rendering required | Basic HTTP returns empty HTML shell | Thunderbit, Bright Data, Oxylabs, Zyte, ScraperAPI, ScrapingBee, Decodo |
| Canvas/WebGL fingerprinting | Bot detection even with proxies | Bright Data, Decodo, Zyte, Oxylabs |
| Selector breakage (A/B tests) | Data fields return empty/wrong | Thunderbit (AI reads page fresh each time), Zyte AI, Bright Data/Oxylabs structured APIs |
| CAPTCHA interception | Parser silently ingests CAPTCHA page | ScraperAPI, Bright Data, Oxylabs, ScrapingBee |
| Regional price/inventory | Price depends on zip/store context | Bright Data geo-targeting, Oxylabs, Decodo, ScraperAPI, ScrapingBee |

What I Looked for When Testing These Walmart Scrapers

Not every Walmart scraper solves the same problem. A solo seller checking 30 prices is not the same as an enterprise team monitoring 10,000 SKUs daily. Here's what I evaluated across all 9 tools:

- Anti-bot success rate: Does it return real product data, or just HTTP 200 with empty fields?

- Field completeness: Can it extract title, price, availability, seller, rating, review count, UPC, images, fulfillment options, and specs?

- JS rendering: Does it handle Walmart's client-side rendering?

- Billing model: Pay-per-success (you don't pay for blocked requests) vs. pay-per-request (credits burn even on failures).

- Setup burden: No-code (click and go) vs. API (write code to integrate).

- Maintenance burden: Fixed selectors break often on Walmart. AI/semantic extraction or vendor-maintained endpoints reduce this.

- Export/output: Business users need Sheets/Excel/Airtable/Notion. Developers need JSON/CSV/webhooks.

- Scalability: One-off research, daily monitoring, and bulk catalog datasets are different jobs.

- Free tier: What can you actually accomplish for $0?
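The billing-model criterion in the list above can be made concrete with a little arithmetic. This is a minimal sketch under a simplifying assumption: with pay-per-request billing, every failed request costs the same as a successful one. The function name and the example numbers are illustrative, not vendor figures.

```python
def cost_per_1k_usable(price_per_1k: float, success_rate: float, pay_per_success: bool) -> float:
    """Effective price of 1,000 usable pages.

    With pay-per-success billing, failures are free, so the list price holds.
    With pay-per-request billing, failures are billed too, so the list price
    is divided by the success rate.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    if pay_per_success:
        return price_per_1k
    return price_per_1k / success_rate


# Illustrative numbers only: a $1.00/1K list price at an 80% success rate.
print(round(cost_per_1k_usable(1.00, 0.80, pay_per_success=False), 2))  # 1.25
print(round(cost_per_1k_usable(1.00, 0.80, pay_per_success=True), 2))   # 1.0
```

The lower the success rate on Walmart, the wider the gap between the two models becomes.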

Independent benchmarks helped calibrate expectations. One tested 200 URLs with 2,000 total requests and compared structured output, field coverage, and response time. Another labels Walmart as an Akamai target and compares 10 providers on success rate and speed. Bright Data's Walmart ranking article reports response times ranging from 2.31 s to 11.12 s and field counts from below 300 to 650+ per product page across reviewed tools.

The 9 Best Walmart Scrapers at a Glance

| Tool | Type | Anti-Bot Handling | Free Tier | Starting Price | Best For | Code Required? |
|---|---|---|---|---|---|---|
| Thunderbit | Chrome extension / AI scraper | Browser/cloud scraping, AI adaptive extraction | 6 pages/mo (10 with trial) | ~$9/mo | Non-technical teams | No |
| Bright Data | Walmart API / dataset / scraping browser | Managed unblocking, JS, CAPTCHA, geo | Trial/credits | ~$0.75/1K successful requests | Enterprise scale | Optional |
| Oxylabs | Web Scraper API | JS rendering, proxy/unblocking, parser | Up to 2,000 trial results | $49/mo | Data completeness | Yes |
| Decodo | eCommerce scraping API | JS, premium modes, anti-bot | 2K regular or 667 premium+JS | ~$9/mo | Best value API | Mostly yes |
| Zyte API | Generic scraping API | Automated tiering, browser requests | $5 credit | From $0.06/1K | Fast API workflows | Yes |
| ScraperAPI | Walmart endpoints / REST API | Proxy rotation, render, premium modes | 7-day / 5,000 credits | $49/mo | Budget developers | Yes |
| Apify | Actor marketplace / platform | Depends on actor/proxies | $5/mo platform credit | $49/mo + usage | Custom workflows | Optional |
| Octoparse | No-code desktop/cloud scraper | Visual selectors, cloud/proxy add-ons | Free plan (limited) | $69/mo Standard | Beginners | No |
| ScrapingBee | Walmart API / HTML API | JS, premium/stealth proxies, CAPTCHA | 1,000 credits | $49/mo | Lightweight API projects | Yes |

Pricing as of April 2026; verify before buying.

1. Thunderbit

Thunderbit is an AI-powered Chrome extension and web scraper built for business users who need structured data from Walmart without writing code, configuring selectors, or managing proxies.

The workflow is genuinely two clicks. Open a Walmart search results page or product listing, click "AI Suggest Fields," and Thunderbit reads the visible page and proposes columns: Product Name, Price, Rating, Stock Status, Seller, Review Count, Image URL, Product URL. Click "Scrape" and the table fills. Need richer data? Click "Scrape Subpages" and Thunderbit visits each product page to pull specs, UPC, detailed descriptions, and more.

The key differentiator for Walmart specifically is adaptive extraction. Traditional scrapers rely on fixed CSS selectors or XPath—which break every time Walmart runs an A/B test or updates its DOM. Thunderbit's AI reads the page structure fresh each time, understanding content semantically rather than by position. In my testing, this meant I didn't have to fix broken selectors after Walmart layout changes—a maintenance headache that plagues selector-based tools.

Key Features for Walmart Scraping

- AI Suggest Fields: Reads Walmart pages and auto-generates column names and data types—no manual selector setup.

- Subpage scraping: Scrape a listing page, then enrich each row with detailed specs from individual product pages.

- Pagination and infinite scroll: Handles Walmart's paginated search results and "load more" patterns.

- Scheduled scraping: Set up recurring runs for daily or weekly price/stock monitoring.

- Free exports: Excel, CSV, Google Sheets, Airtable, Notion—no hidden download fees.

- Browser + cloud modes: Browser scraping for logged-in/store-specific content; cloud scraping for faster public page runs (up to 50 pages at once).

- Free email and phone extractors: Useful if you're scraping Walmart Marketplace seller pages for contact info.

- 34-language support.

Pros and Cons

| Pros | Cons |
|---|---|
| Zero setup, no code | Free tier is small for heavy monitoring |
| AI adapts to layout changes (no selector maintenance) | Not a dedicated enterprise Walmart-only API |
| Free exports to Sheets, Excel, Airtable, Notion | Paid plan needed for larger subpage/pagination work |
| Subpage scraping enriches listing data | Newer tool compared to enterprise API vendors |
| Browser and cloud modes for different workflows | |

Pricing: Free tier (6 pages/month, 10 with trial). Paid plans from ~$9/month. 1 credit = 1 output row.

Best for: Non-technical teams—sales ops, ecommerce operators, VAs, small sellers—who want Walmart product data in a spreadsheet without writing code or managing infrastructure.

2. Bright Data

Bright Data is the most comprehensive enterprise Walmart data platform—not just a single API. It offers a dedicated Walmart Scraper API, pre-collected Walmart datasets (267M+ records), a Scraping Browser for JS/CAPTCHA handling, and an MCP Server for AI/LLM workflows.

In an independent Scrape.do benchmark covering 11 providers, Bright Data recorded a 98.44% success rate. Its pay-per-success model means you don't pay when Walmart blocks a request. At scale, that distinction matters a lot.

Key Features for Walmart Scraping

- Dedicated Walmart endpoint: Structured JSON output with fields like URL, final price, SKU, currency, GTIN, specifications, image URLs, and top reviews.

- Pre-collected datasets: Bulk historical access to Walmart product data.

- Scraping Browser: Handles JS rendering, CAPTCHA solving, and fingerprint evasion.

- City-level geo-targeting: Critical for regional pricing intelligence.

- Proxy network: 150M+ residential IPs.

- MCP Server: For LLM/AI agent integration.

Pros and Cons

| Pros | Cons |
|---|---|
| Highest benchmark success rate | Premium pricing and complexity |
| Pay-per-success billing | Multiple product lines can be confusing |
| Geo-targeting for regional pricing | Minimum spend for enterprise plans |
| Datasets for bulk historical access | |

Pricing: Walmart Scraper API from ~$0.75/1K successful requests. Datasets from ~$50/100K records. Enterprise plans with minimums.

Best for: Enterprise teams needing maximum reliability, geo-targeting, and structured Walmart data at scale.

3. Oxylabs

Oxylabs is a strong enterprise alternative with emphasis on data completeness. Its Web Scraper API lists Walmart targets directly: Walmart Product (59 parsed data points), Walmart Search (58 parsed data points), and Walmart URL with raw HTML or parsed output.

In benchmark summaries, Oxylabs is cited for high field depth—around 620+ fields per Walmart product page in some tests. Its free trial includes up to 2,000 results, and paid plans start at $49/month.

Key Features for Walmart Scraping

- High field count: 59 parsed data points per Walmart product page.

- Anti-bot handling: Manages Akamai and HUMAN Security layers.

- Multiple output formats: Parsed JSON and raw HTML.

- Scalable API architecture.

Pros and Cons

| Pros | Cons |
|---|---|
| Deep data extraction (59+ fields) | Higher price point |
| Reliable anti-bot handling | Code required for API integration |
| Good trial (2,000 results) | Steeper learning curve for non-technical users |
| Enterprise support | |

Pricing: Free trial up to 2,000 results. Paid from $49/month. JS rendering around $0.35/1K results.

Best for: Teams that need maximum field coverage and structured Walmart data via API.

4. Decodo

Decodo (formerly Smartproxy) is the best balance of price and performance for mid-scale Walmart scraping. Its eCommerce Scraper API supports Walmart with ready-made templates, anti-bot bypassing, and JS rendering.

The free plan gives you up to 2K regular requests or 667 premium+JS requests—enough to test whether Walmart pages return usable data before committing. Paid plans start around $9/month, with mid-tier pricing as low as $0.30/1K regular requests.

Key Features for Walmart Scraping

- Affordable per-request pricing.

- eCommerce-focused API with templates.

- CAPTCHA and anti-bot handling.

- Geolocation targeting.

- Free starter plan for testing.

Pros and Cons

| Pros | Cons |
|---|---|
| Competitive pricing | Fewer Walmart-specific features than Bright Data |
| Solid performance for the price | Code required |
| Generous free plan for testing | Mode multipliers can increase effective cost |
| Good for mid-scale projects | Smaller proxy network than enterprise leaders |

Pricing: Free plan (2K regular requests). Paid from ~$9/month.

Best for: Teams that want a capable Walmart API without enterprise pricing—especially for mid-scale monitoring or catalog building.

5. Zyte API

Zyte is the fastest option in benchmark summaries, with a reported 2.31-second median response time and 96.22% success rate on Walmart pages. Its API uses automated tiering—selecting datacenter, residential, or rendering technologies per request—so you're charged for what's needed.

New users get $5 in free credit. Pricing starts from $0.06/1K successful responses, with browser-tier requests costing more.

Key Features for Walmart Scraping

- Fast response times (~2–3 seconds median).

- AI-structured extraction for ecommerce data.

- Flexible pay-per-request pricing with auto-tiering.

- Browser requests for JS-rendered Walmart pages.

Pros and Cons

| Pros | Cons |
|---|---|
| Fastest response time in benchmarks | Smaller free tier |
| AI extraction capabilities | Less Walmart-specific tooling than Bright Data |
| Flexible pricing | Requires technical setup |
| Good for real-time monitoring | Auto-tiering makes exact costs less predictable |

Pricing: $5 free credit. From $0.06/1K successful responses; browser tiers higher.

Best for: Developers building real-time monitoring pipelines who need speed and flexible pricing.

6. ScraperAPI

ScraperAPI has one of the clearest Walmart-specific developer offerings. Its Walmart Scraper provides structured endpoints for product pages, search, categories, and reviews—with synchronous and async options.

The 7-day trial gives you 5,000 credits, and paid plans start at $49/month with 100,000 credits. But here's the catch: ScraperAPI's credit system charges 1 credit for basic requests, 10 for JS rendering, 25 for premium+render, and up to 75 for ultra premium+render. Walmart almost always requires JS rendering, so your effective page count is much lower than the raw credit number.
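The multiplier math above is easy to work out in a few lines. This sketch uses only the credit costs quoted in this section; the mode labels and the function name are made up for illustration and are not ScraperAPI parameter names.

```python
# Credit cost per request, as quoted in this article (labels are illustrative).
SCRAPERAPI_CREDITS_PER_REQUEST = {
    "basic": 1,
    "js_render": 10,
    "premium_render": 25,
    "ultra_premium_render": 75,
}


def effective_pages(credits: int, mode: str) -> int:
    """Whole pages a credit balance covers in a given mode."""
    return credits // SCRAPERAPI_CREDITS_PER_REQUEST[mode]


for label, balance in (("trial", 5_000), ("$49 plan", 100_000)):
    for mode in SCRAPERAPI_CREDITS_PER_REQUEST:
        print(f"{label:>9} {mode:<22} {effective_pages(balance, mode):>7,} pages")
```

At the JS-rendering rate, the 5,000 trial credits cover 500 pages and the 100,000-credit plan covers 10,000. At the ultra premium rate the plan drops to 1,333 pages.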

Key Features for Walmart Scraping

- Dedicated Walmart endpoints (product, search, category, reviews).

- Simple REST API integration.

- Automatic proxy rotation and CAPTCHA handling.

- JavaScript rendering.

- Geolocation targeting.

Pros and Cons

| Pros | Cons |
|---|---|
| Affordable entry price | Credits burn fast on Walmart (JS = 10+ credits/page) |
| Simple API with good docs | Lower success rate than enterprise tools on Walmart |
| Dedicated Walmart endpoints | Credits consumed even on failed requests |
| Free trial | |

Pricing: 7-day trial (5,000 credits). Paid from $49/month.

Best for: Developers who want a straightforward Walmart API at a reasonable price—but who understand the credit multiplier math.

7. Apify

Apify is a platform and actor marketplace, not a single scraper. You can find pre-built Walmart actors like automation-lab/walmart-scraper (~$0.004/product plus run fees), Axesso Walmart lookup/search actors, and others maintained by community developers.

The free plan gives $5/month in usage credits. Paid plans start at $49/month plus pay-as-you-go compute. The platform supports scheduling, batch processing, webhooks, dataset exports, and API clients.

Key Features for Walmart Scraping

- Pre-built Walmart scraper actors in the marketplace.

- Scalable cloud platform for running tasks.

- APIs for custom integrations and pipeline building.

- Scheduling and batch processing.

- Multiple export formats (JSON, CSV, Excel).

Pros and Cons

| Pros | Cons |
|---|---|
| Flexible and customizable | Actor quality varies by maintainer |
| Good marketplace with Walmart actors | Costs increase with heavy usage |
| Scalable cloud infrastructure | Requires more technical knowledge for custom actors |
| Developer-friendly APIs | Proxy/anti-bot handling depends on actor configuration |

Pricing: Free plan ($5/month credits). Starter $49/month + usage.

Best for: Teams that need custom Walmart scraping workflows with scheduling, batch processing, and API integration.

8. Octoparse

Octoparse is the classic point-and-click no-code scraper. Its visual workflow builder lets you select elements on a Walmart page, configure extraction rules, and run scrapers in the cloud or locally. It offers a Walmart template for faster setup.

The free plan includes limited local extraction and export. Paid plans start at $69/month (Standard, billed annually).

Key Features for Walmart Scraping

- Point-and-click visual workflow builder.

- Cloud-based and local scraping options.

- Scheduled scraping for recurring monitoring.

- Template library including Walmart.

- Multiple export formats (CSV, Excel).

Pros and Cons

| Pros | Cons |
|---|---|
| No coding needed | Fixed selectors break when Walmart changes layouts |
| Visual interface for beginners | Slower cloud execution |
| Generous row limits on free plan | Pricier for teams |
| Scheduled scraping | Less AI adaptation than Thunderbit |

Pricing: Free plan (limited). Paid from $69/month Standard.

Best for: Beginners who want a visual, no-code interface and are willing to maintain selectors when Walmart layouts change.

The key difference between Octoparse and Thunderbit: both are no-code, but Thunderbit uses AI to adapt to page changes automatically, while Octoparse relies on fixed selectors that need manual updates when Walmart's DOM shifts.

9. ScrapingBee

ScrapingBee is a lightweight API for developers who want simple proxy rotation and JS rendering without a heavy platform. It offers both a general HTML API and a dedicated Walmart Scraper API for product and search extraction.

The free tier gives 1,000 credits. Paid plans start at $49/month (Freelance, 250,000 credits). But ScrapingBee's credit system charges 1 credit for classic requests without JS, 5 for JS rendering, 10 for premium without JS, 25 for premium with JS, and up to 75 for stealth mode. Since Walmart requires JS rendering at minimum, your effective free tier is closer to 200 pages—or fewer if premium/stealth is needed.
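To turn those credit costs into dollars, the sketch below prices 1,000 pages on the $49/month, 250,000-credit plan at each mode. It uses only figures quoted in this section and assumes every request succeeds; the mode labels and function name are illustrative, not ScrapingBee parameter names.

```python
PLAN_PRICE_USD = 49.0
PLAN_CREDITS = 250_000

# Credit cost per request, as quoted in this article (labels are illustrative).
SCRAPINGBEE_CREDITS_PER_REQUEST = {
    "classic": 1,
    "js_render": 5,
    "premium": 10,
    "premium_js": 25,
    "stealth": 75,
}


def usd_per_1k_pages(mode: str) -> float:
    """Plan price per credit, times credits per page, times 1,000 pages."""
    usd_per_credit = PLAN_PRICE_USD / PLAN_CREDITS
    return usd_per_credit * SCRAPINGBEE_CREDITS_PER_REQUEST[mode] * 1_000


for mode in SCRAPINGBEE_CREDITS_PER_REQUEST:
    print(f"{mode:<11} ${usd_per_1k_pages(mode):.2f} per 1K pages")
```

Under these assumptions, JS rendering works out to about $0.98 per 1,000 pages, premium with JS to about $4.90, and stealth mode to about $14.70.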

Key Features for Walmart Scraping

- Simple REST API with proxy rotation.

- JavaScript rendering (required for Walmart).

- Geolocation targeting.

- CAPTCHA handling.

- Walmart-specific API endpoints.

Pros and Cons

| Pros | Cons |
|---|---|
| Simple API | Credits burn fast on Walmart (JS = 5+ credits/page) |
| Handles JS rendering | Limited free tier for Walmart |
| Geolocation support | Code required |
| Reasonable entry price | Less Walmart-specific optimization than enterprise tools |

Pricing: 1,000 free credits. Paid from $49/month.

Best for: Developers who need a lightweight, simple API for Walmart projects—and who can model the credit math before committing.

Which Walmart Scraper Fits Your Workflow

No competitor article I found clearly segments tools by use case. This is the decision table I wish existed when I started:

| Use Case | Best Tool(s) | Why |
|---|---|---|
| Quick product research (<100 items, no code) | Thunderbit, Octoparse | 2-click setup, visual interface, export to Sheets |
| Price monitoring at scale (1,000+ SKUs daily) | Bright Data, Oxylabs | Pay-per-success, structured output, high success rates |
| Dropshipping catalog building | Thunderbit, Apify | Subpage scraping enriches listings; template-based batch runs |
| Competitive intelligence (pricing + reviews) | Zyte, Decodo, Bright Data | API pipelines, structured fields, recurring analysis |
| Developer building a data pipeline | ScraperAPI, ScrapingBee, Zyte | Simple REST APIs, raw response control, code-first |
| Enterprise regional price intelligence | Bright Data, Oxylabs | Geo-targeting, infrastructure, enterprise support, datasets |

Thunderbit fits naturally for non-technical ecommerce operators and small teams who need product data without writing code. Its "AI Suggest Fields" reads Walmart pages and proposes columns automatically, and subpage scraping can enrich a listing page with detailed product specs from each item's page.

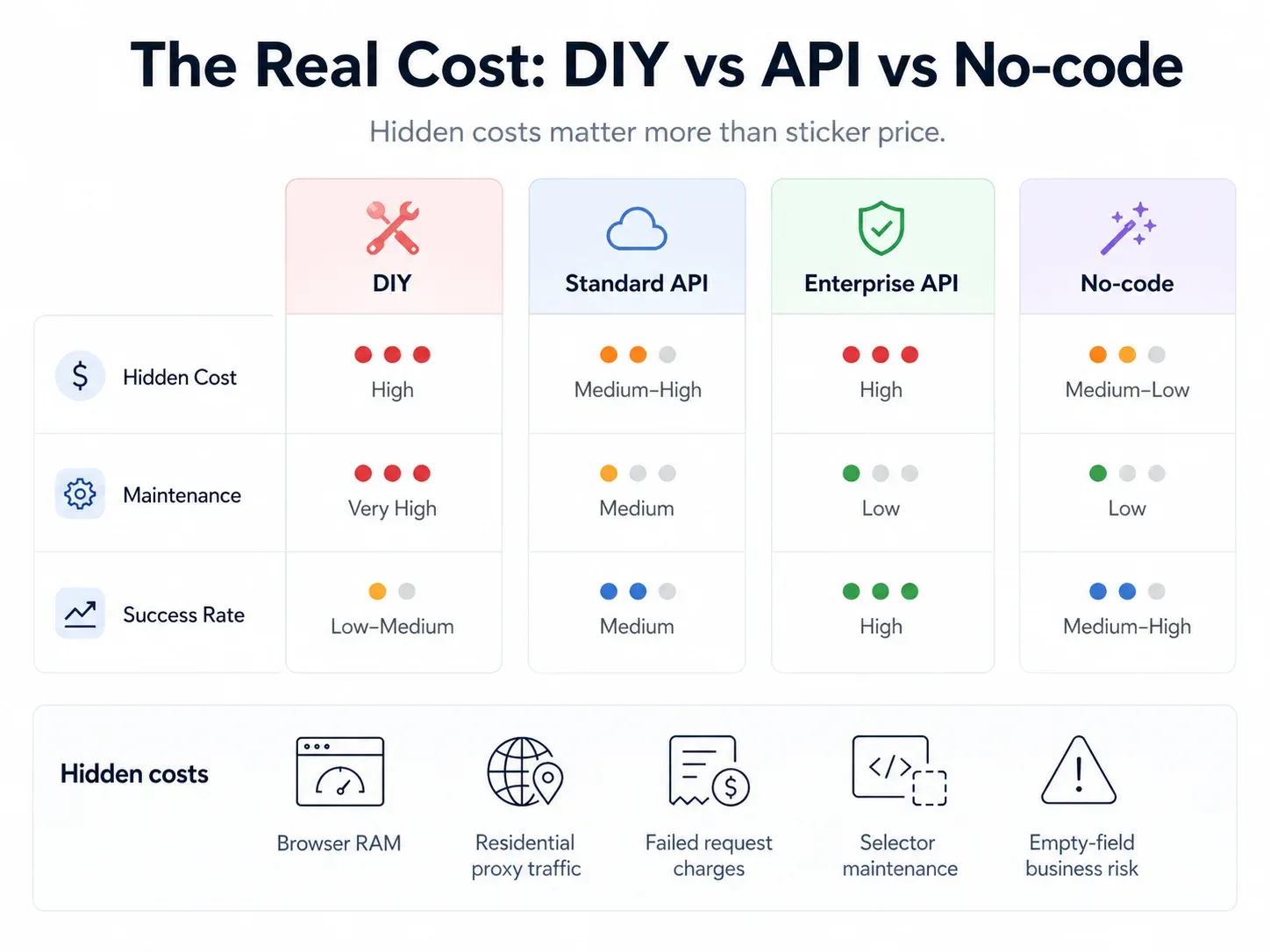

DIY Scraper vs. Scraping API vs. No-Code Tool: The Real Cost of Scraping Walmart

I see this question constantly in forums: "Should I build my own Walmart scraper or pay for a tool?" The answer depends on your real costs—not just the subscription price.

| Approach | Upfront Cost | Monthly Running Cost (1,000 pages/day) | Maintenance | Directional Success Rate |
|---|---|---|---|---|
| DIY (Playwright + residential proxies) | $0 (open source) | $200–500+ (proxies + server + browser infra) | HIGH (weekly fixes) | ~70–85% |
| Scraping API (ScraperAPI, ScrapingBee) | $0 (free tier) | $49–149/mo | LOW | ~85–95% |
| Enterprise API (Bright Data, Oxylabs) | $0 (trial) | $300–1,000+/mo | VERY LOW | ~95–99% |
| No-code tool (Thunderbit, Octoparse) | $0 (free tier) | $9–99/mo | NONE for AI tools (AI adapts) | ~85–95% |

Hidden costs users miss:

- RAM: Each Chromium instance eats ~150–300 MB of RAM. At 1,000 concurrent pages, your infrastructure bill rivals paid API costs.

- Proxy complexity: Residential proxies are billed by GB, not request. JS-heavy Walmart pages can cost more than expected.

- Failed requests: Some APIs still consume credits on blocked requests.

- Silent failures: A blank price or missing stock value is a business failure even if the scraper says "success."

- Developer time: Hours spent fixing broken selectors after Walmart layout changes have a real cost.

For most teams, the break-even point favors a paid tool unless you have dedicated scraping engineers and infrastructure already in place.
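The RAM point in the list above is worth working through. This is a rough estimate only: it multiplies the per-instance range quoted above by the number of concurrent browsers and ignores operating-system and proxy overhead. The function names and the 30-day month are assumptions.

```python
MB_PER_GB = 1000  # decimal gigabytes, good enough for a rough estimate


def chromium_ram_gb(concurrent_instances: int, mb_per_instance: float) -> float:
    """Total browser memory for a given number of concurrent Chromium instances."""
    return concurrent_instances * mb_per_instance / MB_PER_GB


def monthly_pages(pages_per_day: int, days: int = 30) -> int:
    """Pages fetched in a month at a steady daily volume (30-day month assumed)."""
    return pages_per_day * days


low = chromium_ram_gb(1_000, 150)
high = chromium_ram_gb(1_000, 300)
print(f"1,000 concurrent pages: {low:.0f}-{high:.0f} GB of RAM")
print(f"1,000 pages/day: {monthly_pages(1_000):,} pages/month")
```

At the quoted 150–300 MB per instance, 1,000 concurrent pages need roughly 150–300 GB of RAM. The 1,000 pages/day workload in the table is 30,000 pages a month, which rarely needs that much concurrency if the pages are spread across the day.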

What Extracted Walmart Data Actually Looks Like

No competitor article I reviewed shows an actual data preview. Below is what a typical Walmart product scrape returns—spreadsheet form (what Thunderbit outputs) and API JSON (what developer tools return):

Spreadsheet Output (Thunderbit)

| Product Name | Price | Availability | Seller | Rating | Reviews | Image URL | UPC | Fulfillment |
|---|---|---|---|---|---|---|---|---|
| Great Value Sparkling Water 12pk | $4.98 | In stock | Walmart.com | 4.6 | 1,284 | https://i5.walmartimages.com/...jpg | 078742000000 | Pickup / Delivery |
| onn. Wireless Earbuds | $19.88 | Available online | Walmart.com | 4.3 | 3,912 | https://i5.walmartimages.com/...jpg | 681131000000 | Shipping / Pickup |

API JSON Response (Developer Tools)

```json
{
  "title": "onn. Wireless Earbuds",
  "url": "https://www.walmart.com/ip/example",
  "price": 19.88,
  "currency": "USD",
  "availability": "In stock",
  "seller": "Walmart.com",
  "rating": 4.3,
  "review_count": 3912,
  "sku": "123456789",
  "gtin": "681131000000",
  "images": ["https://i5.walmartimages.com/...jpg"],
  "fulfillment": {
    "shipping": true,
    "pickup": true,
    "delivery": "store-dependent"
  }
}
```

Core fields supported across benchmarked APIs include title, URL, price, currency, image, review count, availability, breadcrumb, and rating.
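If you're working from API JSON but your team lives in spreadsheets, a small conversion step bridges the two. The sketch below assumes a response shaped like the example above. Real vendor schemas differ, and `ProductRow`, `parse_product`, and `to_csv` are illustrative names, not part of any tool's SDK.

```python
import csv
import io
import json
from dataclasses import astuple, dataclass, fields


@dataclass
class ProductRow:
    title: str
    price: float
    currency: str
    availability: str
    seller: str
    rating: float
    review_count: int
    gtin: str  # kept as text so leading zeros survive a spreadsheet import


def parse_product(payload: str) -> ProductRow:
    """Parse one JSON product (shaped like the example above) into a typed row."""
    data = json.loads(payload)
    return ProductRow(
        title=data["title"],
        price=float(data["price"]),
        currency=data["currency"],
        availability=data["availability"],
        seller=data["seller"],
        rating=float(data["rating"]),
        review_count=int(data["review_count"]),
        gtin=str(data["gtin"]),
    )


def to_csv(rows: list[ProductRow]) -> str:
    """Serialize rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer)
    writer.writerow([f.name for f in fields(ProductRow)])
    for row in rows:
        writer.writerow(astuple(row))
    return buffer.getvalue()


SAMPLE = """{"title": "onn. Wireless Earbuds", "price": 19.88, "currency": "USD",
"availability": "In stock", "seller": "Walmart.com", "rating": 4.3,
"review_count": 3912, "gtin": "681131000000"}"""

print(to_csv([parse_product(SAMPLE)]))
```

The parser indexes fields directly so that a missing field raises a `KeyError` instead of producing a blank cell. Keeping the GTIN as a string matters for codes such as 078742000000, which would lose their leading zero if stored as a number.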

For Thunderbit, the visual workflow is: AI Suggest Fields proposes columns → Scrape fills the table → export to Google Sheets, Excel, Airtable, or Notion. No JSON parsing required.

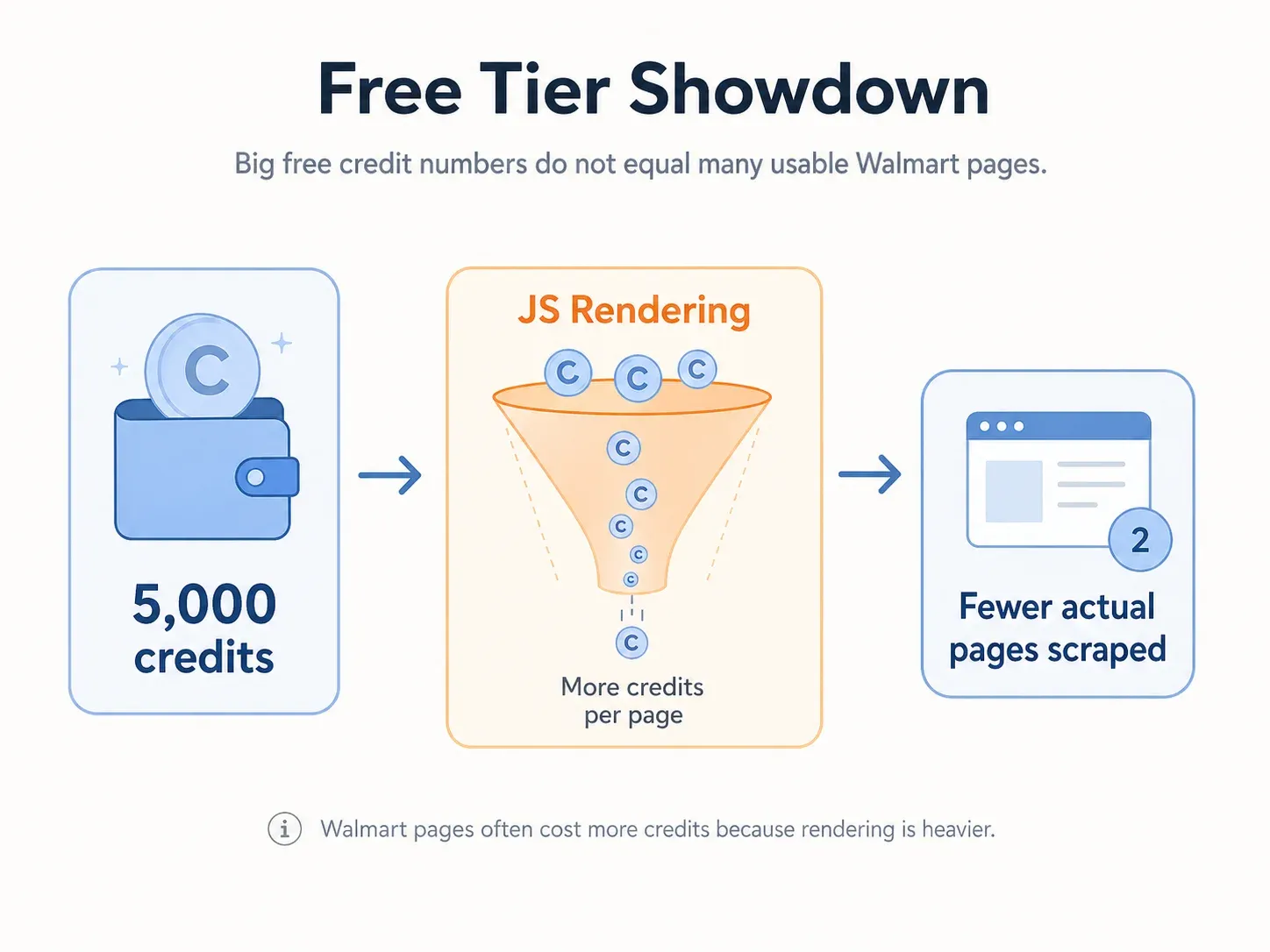

Free Tier Showdown: What Can You Actually Scrape from Walmart for $0?

If you're a student, solo seller, or just testing the waters, this is what each tool's free tier actually gets you on Walmart:

| Tool | Free Tier Limit | Works on Walmart for Free? | Output Formats | Key Limitation |
|---|---|---|---|---|
| Thunderbit | 6 pages/mo (10 with trial) | ✅ Yes (browser scraping) | Excel, CSV, Sheets, Airtable, Notion | Page count cap |
| ScraperAPI | 5,000 credits (7-day) | ⚠️ Limited (~500 pages if JS = 10 credits) | JSON | Credits burn fast |
| Apify | $5 free credits/mo | ⚠️ ~50 pages (depends on actor) | JSON, CSV, Excel | Actor run limits |
| Octoparse | Free plan (limited local) | ✅ Yes (local extraction) | CSV, Excel | Cloud/proxy features paid |
| ScrapingBee | 1,000 credits | ⚠️ ~200 pages (JS = 5 credits) | JSON, HTML | Credits burn fast |
| Decodo | 2K regular or 667 premium+JS | ✅ Yes for testing | HTML, JSON, CSV | Mode multipliers matter |
| Zyte | $5 free credit | ✅ Yes for testing | HTTP/browser responses | Auto-tiering makes page count uncertain |
| Bright Data | Trial/credits (varies) | ✅ If approved | JSON, NDJSON, CSV | Sales/trial eligibility |
| Oxylabs | Up to 2,000 trial results | ✅ For testing | Parsed JSON, raw HTML | Requires API setup |

Key insight for budget users: Thunderbit's free export (Excel, Google Sheets, Airtable, Notion) means even on the free tier you get clean output with no hidden download fees—something several API-based tools charge extra for. Plus its email and phone extractors are completely free if you're scraping seller contact info from marketplace pages.

Side-by-Side: All 9 Walmart Scrapers Compared

| Tool | Type | Anti-Bot Handling | Free Tier | Starting Price | Best For | Code Required? |
|---|---|---|---|---|---|---|
| Thunderbit | Chrome extension / AI scraper | AI adaptive, browser/cloud | 6 pages/mo | ~$9/mo | Non-technical teams | No |
| Bright Data | Walmart API / dataset / browser | Managed unblocking, geo, CAPTCHA | Trial | ~$0.75/1K success | Enterprise scale | Optional |
| Oxylabs | Web Scraper API | JS, proxy, parser | 2,000 trial results | $49/mo | Data completeness | Yes |
| Decodo | eCommerce API | JS, premium, anti-bot | 2K regular | ~$9/mo | Best value API | Mostly yes |
| Zyte | Generic API | Auto-tiering, browser | $5 credit | $0.06/1K | Fast API | Yes |
| ScraperAPI | Walmart endpoints / REST | Proxy, render, premium | 5,000 credits (7-day) | $49/mo | Budget developers | Yes |
| Apify | Actor marketplace | Actor-dependent | $5/mo credits | $49/mo + usage | Custom workflows | Optional |
| Octoparse | No-code desktop/cloud | Visual selectors | Free plan | $69/mo | Beginners | No |
| ScrapingBee | HTML/Walmart API | JS, premium, CAPTCHA | 1,000 credits | $49/mo | Lightweight API | Yes |

If you need enterprise reliability, go with Bright Data or Oxylabs. If you want the fastest no-code setup for Walmart, try Thunderbit. If you're a developer on a budget, ScraperAPI or Decodo are solid starting points.

Wrapping Up: How to Pick the Best Walmart Scraper for Your Needs

Walmart is one of the hardest retail sites to scrape reliably. The right tool depends on your use case, budget, and technical skill level. Here's my quick recommendation by persona:

- Non-technical teams who want fast results → Thunderbit. Two clicks, AI-powered, exports to Sheets/Excel/Airtable/Notion.

- Enterprise teams needing maximum reliability at scale → Bright Data or Oxylabs. Pay-per-success, geo-targeting, structured endpoints.

- Developers building data pipelines → ScraperAPI, ScrapingBee, or Zyte. Simple REST APIs, code-first.

- Budget-conscious users wanting best value → Decodo or Thunderbit's free tier.

- Custom workflow builders → Apify for actor-based composability.

My advice: start with a free tier to test whether a tool actually returns the Walmart fields you need. Don't commit to a paid plan until you've validated output quality on your specific product categories—because Walmart's defenses hit different pages differently.
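A simple way to run that validation is to scrape a small sample with each candidate tool and measure how often each field comes back filled. The helper below is a sketch: it assumes each tool's output has already been loaded as a list of dictionaries, and the field names in the example are placeholders for whatever your workflow needs.

```python
from typing import Mapping, Sequence

# Values treated as "not filled".
EMPTY_VALUES = (None, "", [])


def fill_rates(records: Sequence[Mapping[str, object]], field_names: Sequence[str]) -> dict[str, float]:
    """Fraction of records in which each field is present and non-empty."""
    total = len(records)
    if total == 0:
        return {name: 0.0 for name in field_names}
    rates = {}
    for name in field_names:
        filled = sum(1 for record in records if record.get(name) not in EMPTY_VALUES)
        rates[name] = filled / total
    return rates


# Made-up sample: four scraped rows, one with a blank price, one missing seller.
sample = [
    {"title": "A", "price": 4.98, "seller": "Walmart.com"},
    {"title": "B", "price": "", "seller": "Walmart.com"},
    {"title": "C", "price": 19.88},
    {"title": "D", "price": 7.47, "seller": "Walmart.com"},
]
print(fill_rates(sample, ["title", "price", "seller"]))
```

Running the same sample URLs through two or three free tiers and comparing the resulting rates per product category gives a more direct signal than any vendor's headline success figure.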

If you want to see what AI-powered Walmart scraping looks like without writing a line of code, give Thunderbit a try. In my experience, it's the lowest-friction way to get clean Walmart data into a spreadsheet. And if you're more of a developer, the API-based tools above give you the control and scale you need.

Happy scraping—and may your prices always be current and your fields never empty.

FAQs

1. Is it legal to scrape Walmart product data?

Scraping publicly available product data is generally considered lower risk than scraping login-gated or personal data. However, Walmart's terms of use explicitly restrict the use of robots, spiders, or automated devices to retrieve or index content without written consent. Users should respect terms of service, robots.txt, and rate limits, and avoid scraping personal or copyrighted content. For commercial use, consult legal counsel.

2. Do I need coding skills to scrape Walmart?

No. Tools like Thunderbit and Octoparse offer fully no-code interfaces—click, configure, export. API tools like ScraperAPI, ScrapingBee, and Zyte require basic coding. Enterprise platforms like Bright Data and Oxylabs offer both API access and dashboard/template options.

3. How often does Walmart change its website layout?

Frequently. Walmart runs A/B tests and updates its DOM structure regularly. Community reports consistently mention selector breakage and blank-field failures after layout changes. This is why AI-powered tools that read the page fresh each time (like Thunderbit) or vendor-maintained structured endpoints (like Bright Data, Oxylabs) have lower maintenance than fixed-selector approaches.

4. What data can I extract from Walmart product pages?

Common fields include: product name, URL, price (current and was/rollback), availability, seller, ratings, review count, image URLs, UPC/GTIN, SKU/item ID, specifications, fulfillment options (shipping, pickup, delivery), variants, breadcrumb/category, and sometimes store/aisle context when location data is available.

5. What is the best free Walmart scraper for quick tests?

For non-technical users, Thunderbit (6 free pages, 10 with trial) and Octoparse (free plan with local extraction) are the easiest to start with. For developers, ScraperAPI (5,000 credits), ScrapingBee (1,000 credits), Decodo (2K requests), and Zyte ($5 credit) all offer usable free tiers—but remember that Walmart pages consume more credits than simple static sites due to JS rendering requirements.
