A GitHub search for "amazon scraper" returns roughly . Narrow that to repos pushed in the last six months, and you're down to about — barely 20%. The rest? Abandoned tutorials, stale wrappers, and scripts that stopped working the moment Amazon tightened its defenses.

I've spent a lot of time digging through Amazon scraper repos, reading GitHub issues, and following community threads on Reddit and Stack Overflow. The pattern is consistent: someone finds a popular repo, spends an hour setting it up, runs it once, and hits a wall of CAPTCHAs or 503 errors. Amazon's anti-bot posture in 2026 is not the same as it was even two years ago — TLS fingerprinting, behavioral analysis, and aggressive CAPTCHA deployment have made the old "rotate user agents and hope for the best" playbook almost useless. This guide covers the best practices that actually matter if you want to get reliable Amazon data from a GitHub repo, and what to do when (not if) your scraper breaks.

What Is an Amazon Scraper on GitHub (and Why Do So Many Fail)?

An Amazon scraper GitHub repo is typically an open-source script — usually Python, Node.js, or Scrapy-based — that extracts structured data from Amazon pages. The data targets are familiar: product title, price, ASIN, ratings, review counts, availability, seller info, search-result cards, and review text.

The architecture is usually straightforward:

- An HTTP client or headless browser fetches the page.

- An HTML or JSON parser extracts the fields.

- The data gets saved to CSV, JSON, or a database.

Repos generally fall into four buckets:

- Lightweight Python libraries (e.g., )

- Scrapy spiders (e.g., )

- Selenium or Playwright browser automators

- API wrapper projects that are really front-ends for a commercial scraping service (e.g., )

The failure pattern is predictable. Most repos break because:

- Amazon changes its page layout or HTML fragments

- Amazon serves a 503 or CAPTCHA instead of real content

- The scraper's TLS and HTTP fingerprint no longer looks browser-like

- Locale, language, or header mismatches trigger suspicion

- The maintainer moves on after solving their original, narrow use case

High stars and "currently usable" are very different things. In the audit I ran for this article, only about three out of eight widely surfaced repos looked clearly active in 2026.

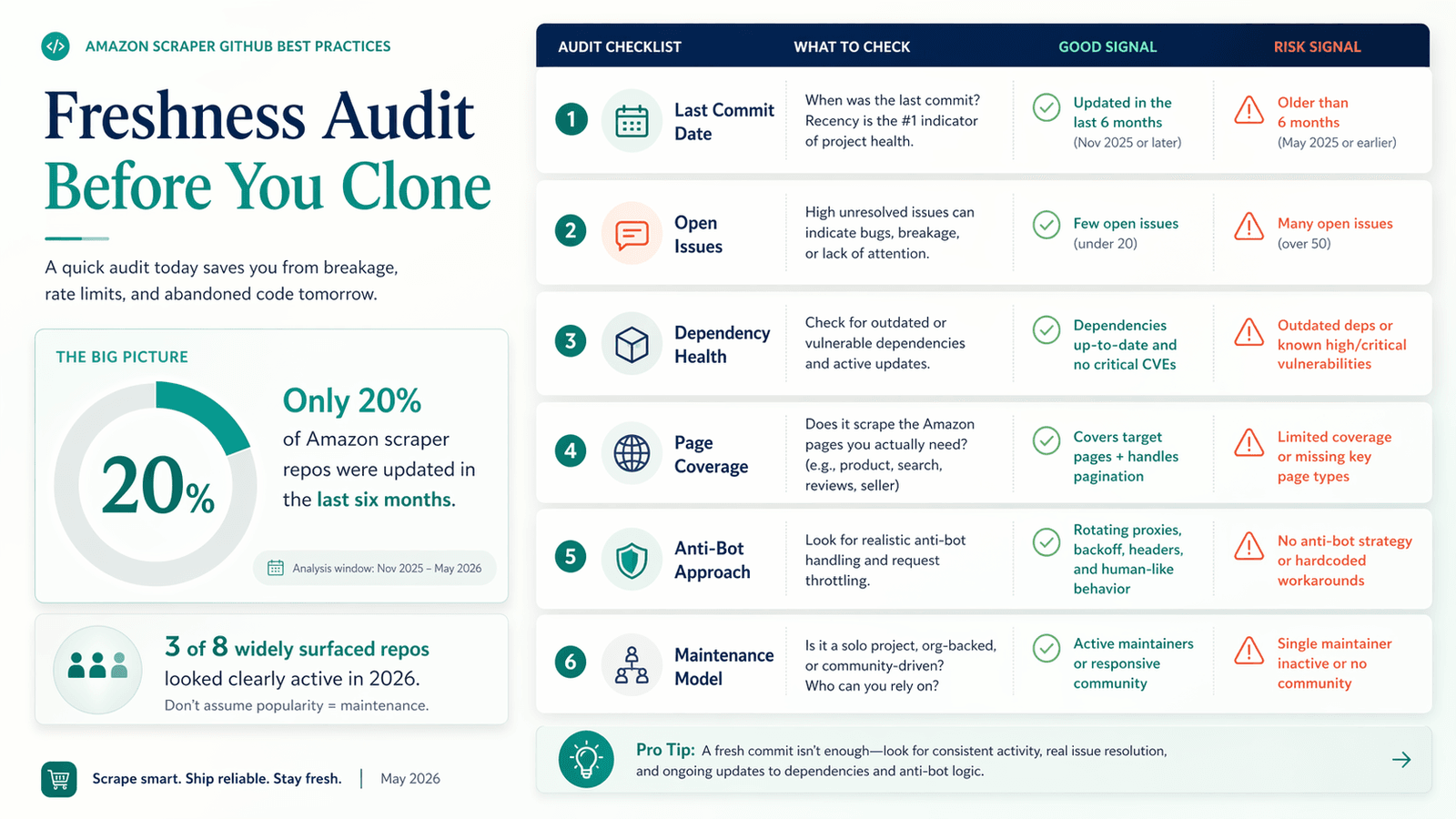

Run a 2026 Freshness Audit Before You Clone Any Amazon Scraper GitHub Repo

This step matters more for Amazon than for most other targets. Amazon's defensive posture changes faster than a typical ecommerce site, so a repo that works fine on a brochure website can become worthless on Amazon in a few weeks. Yet most "best amazon scraper github" lists recommend repos without checking if they still function. Users waste hours setting up broken tools.

How to Check If a GitHub Repo Is Still Alive

Before you git clone anything, run through these checks:

- Last commit date: Anything older than 6 months is a strong warning sign on Amazon.

- Open issues vs. response rate: Search the Issues tab for "captcha," "503," "blocked," and "not working." If those reports pile up with no maintainer replies, walk away.

- Dependency health: Open

requirements.txtorpackage.json. Deprecated libraries (e.g., oldrequestswithout modern TLS handling) are a red flag. - Amazon page-type coverage: Does the repo handle product pages, search results, AND reviews? Or just one?

- Anti-bot approach: Hardcoded headers with no proxy support is a 2023-era approach that won't survive 2026.

Amazon Scraper GitHub Freshness Checklist

| Freshness Signal | What to Check | Red Flag 🚩 |

|---|---|---|

| Last commit date | Commit feed or repo push date | Older than 6 months |

| Open issues | Issues tab — filter for "captcha," "503," "blocked" | Repeated breakage with no maintainer replies |

| Dependency health | requirements.txt / package.json | Deprecated libraries, no modern TLS strategy |

| Amazon page coverage | README + code examples | Only handles one page type (e.g., product pages but not search or reviews) |

| Anti-bot approach | Source code, proxy config | Hardcoded headers and UA strings only |

| Maintenance model | Is it a real scraper, tutorial, or commercial API wrapper? | Repo is really just a front-end for a paid service |

What the Audit Actually Found

I checked eight widely surfaced Amazon scraper repos against these criteria. The results are sobering:

| Repo / Tool | Stars | Last Commit Signal | Scope | 2026 Status | Notes |

|---|---|---|---|---|---|

| oxylabs/amazon-scraper | ~2,872 | 2026-04-02 | Managed scraper API wrapper | Alive, but not DIY | Fresh, but this is really a front-end to a managed service |

| omkarcloud/amazon-scraper | ~214 | 2026-02-25 | Managed API for search, details, reviews | Alive, but not DIY | Good coverage, but it's an API product, not a raw scraper |

| theonlyanil/amzpy | ~110 | 2026-02-26 | Lightweight Python library | Alive | The clearest direct GitHub scraper using curl_cffi |

| philipperemy/amazon-reviews-scraper | ~134 | 2024-11-21 | Reviews only | Narrow but usable | Old and very review-specific |

| python-scrapy-playbook/amazon-python-scrapy-scraper | ~74 | Last commit 2023; repo pushed 2024-08-20 | Scrapy spiders + proxy middleware | Tutorial-grade, aging | Useful for learning, not a turnkey 2026 stack |

| drawrowfly/amazon-product-api | ~744 | 2022-11-13 | Node CLI for search, details, reviews | High-risk | Broad coverage, but maintenance is too old |

| tducret/amazon-scraper-python | ~881 | 2020-10-13 | Search to CSV | Dead for 2026 | Popular historically, clearly stale |

| scrapehero-code/amazon-scraper | ~432 | 2020-06-21 | Search/product tutorial | Dead for 2026 | Effectively archival |

The public issues tell the same story. has an issue titled "All requests receive captcha response." has "Doesn't seem to be working." has "Bypass Amazon protection." These are not obscure edge cases — they're the first things users hit.

The Anti-Ban Playbook: How to Avoid Getting Blocked with an Amazon Scraper from GitHub

Getting blocked is the single biggest pain point for anyone using an amazon scraper github project. Generic advice like "use proxies and rotate user agents" is no longer sufficient. Amazon's 2025-2026 anti-bot stack includes TLS fingerprinting, behavioral analysis, and aggressive CAPTCHA deployment. You need a layered approach.

TLS Fingerprint Matching: Why Vanilla requests Gets You Banned

This is one of the most overlooked anti-ban techniques. TLS fingerprinting works like this: when your script opens a secure connection to Amazon, the server can tell a lot about the client by how it "shakes hands" — the cipher suites offered, the order of extensions, the HTTP/2 settings. Browsers use relatively fixed TLS and HTTP/2 settings, and those combinations are fingerprintable via techniques like .

Plain requests and ordinary httpx setups can copy headers, but they don't copy Chrome-like TLS and HTTP/2 behavior. Amazon can tell the difference.

addresses this directly. It provides browser impersonation — supported targets include chrome136, safari184, and firefox133 — so your HTTP client's TLS fingerprint matches a real browser. The docs explicitly warn against generating random JA3 strings: browser fingerprints are mostly fixed per version, and random nonsense is easier to detect than a copied real fingerprint.

The community data matches. A confirms the impersonate argument is useful because it rotates browser profiles and keeps headers aligned. Another notes Amazon blocks clients based on TLS fingerprint "after about a month or two." A specifically asks whether Amazon is fingerprinting python-requests (spoiler: yes).

If you're still using plain requests as your first-line Amazon client, upgrade that assumption before you upgrade anything else.

Proxy Rotation Done Right (Not Just "Use Proxies")

The point of proxies is not to rotate as much as possible. The point is to make sessions look believable.

Residential vs. datacenter: Datacenter proxies are cheaper but easier to detect. Residential proxies cost more but are much harder for Amazon to flag. starts at $4.00/GB pay-as-you-go, down to $3.50/GB on larger plans. starts at $6/GB. Amazon belongs in the "sophisticated target" bucket where residential proxies are worth the premium.

Per-request vs. per-session rotation: This is where most tutorials get it wrong. Rotating proxies on every request while keeping cookies and headers constant can look less human, not more. The safer pattern:

- Keep search → product → review traversal on the same sticky session where possible

- Switch sessions when starting a new search journey, not on every request

- Rotate between sessions, not randomly inside one browsing session

One noted that standard ISP IPs did not perform nearly as well as mobile IPs on popular ecommerce sites. Another reported getting blocked even with rotating user agents and residential proxies — a good reminder that proxies alone are not enough.

Request Pacing, Backoff, and Rate Limiting

Amazon's 503 pages are not random bad luck. They're feedback.

A about scraping more than 500 ASINs reported a 503 at the same point every time, around ASIN 101, even with sleeping. The pattern is old, but the lesson is current: raw volume from one IP or fingerprint eventually trips defenses.

Best-practice pacing for DIY GitHub scrapers:

- Randomized delays between requests (not fixed intervals, which are detectable)

- 2 to 5 seconds between public product requests for simple HTTP clients

- Exponential backoff after 503 or CAPTCHA — back off progressively instead of retrying immediately

- Lower concurrency than you think you need

- Fail-open logging instead of tight retry loops

Most amazon scraper github repos lack built-in rate limiting. You'll need to add it yourself.

Header Orchestration: More Than Just User-Agent Strings

Amazon checks the full header set, not just the User-Agent.

A realistic browser header set should include:

User-AgentAcceptAccept-LanguageAccept-EncodingSec-CH-*hints when appropriate- Connection behavior consistent with the chosen browser profile

Headers should match the marketplace locale. One found the same bot setup was detected only in some locales, with another commenter pointing at region-related headers like Accept-Language.

The rule: headers, TLS/browser profile, and proxy geography should not contradict each other. Don't send Chrome headers with a Firefox UA. Don't use a US proxy with Accept-Language: de-DE.

CAPTCHA Handling: When to Solve vs. When to Back Off

Hitting a CAPTCHA means Amazon is already suspicious. Solving it doesn't reset your trust score.

For isolated, low-frequency CAPTCHA events:

- The PyPI package is a pure-Python Amazon text CAPTCHA solver, though its latest release is from May 2023 — treat it as a tactical tool, not a durable strategy

- lists Amazon Captcha at $0.45 per 1,000 solves

For repeated CAPTCHA loops:

- Stop solving and start backing off

- Repeated CAPTCHAs mean the session is burned — solving them doesn't rebuild trust in the fingerprint, session history, or IP reputation

- If CAPTCHAs cluster by proxy subnet, the problem is the network layer, not the parser

When You Actually Need a Headless Browser (and When It's Overkill)

The wrong instinct is to run Playwright for everything.

Good browser use cases:

- Search results that depend on JavaScript rendering or locale-dependent state

- Review flows that redirect to login or sign-in pages

- Workflows where cookies and browser context matter more than raw speed

Bad browser use cases:

- Ordinary public product pages

- Static product detail extraction where a browser-like HTTP client is enough

- Large-scale bulk retrieval where compute efficiency matters

Start with the lightest client that works. One on scraping at scale described the progression: start with requests, then curl_cffi, and only go to a full browser when the lighter options fail. Headless browsers are materially slower and more resource-intensive than HTTP clients for Amazon product-page scraping.

Anti-Ban Decision Matrix for Amazon Scraper GitHub Projects

| Scenario | Recommended Approach | Why |

|---|---|---|

| Public product pages (small scale) | curl_cffi + sticky residential session | Cheapest path that still looks browser-like |

| Search results pages | curl_cffi first, Playwright only if rendering or state breaks HTTP | Search is more stateful and locale-sensitive |

| Reviews (login required) | Browser mode with real cookies/session | Login and dynamic review flows are harder to emulate with bare HTTP |

| Large-scale (5k+ daily) | Managed scraper API, unlocker, or no-code platform | DIY GitHub code alone becomes an infrastructure problem |

When Your Amazon Scraper GitHub Project Breaks: Have a No-Code Fallback Plan

Every experienced scraper keeps a Plan B.

Amazon updates will eventually break any GitHub repo at the worst possible time. For ecommerce teams, a broken scraper means missed price changes, stale competitor data, and gaps in dashboards.

Many people searching "amazon scraper github" are actually business users — ecommerce ops, marketers, FBA researchers — who tried coding solutions because they couldn't find better options. Forum data shows real frustration with Amazon's official too: restrictive access, limited data, and that many sellers can't meet.

Why GitHub Amazon Scrapers Need Constant Maintenance

The audit above makes this concrete:

- Stale repos pile up breakage reports with no fixes

- "Working" repos now talk openly about anti-bot measures in the README

- Community threads increasingly center on TLS fingerprints, CAPTCHA loops, and proxy quality — not CSS selectors

For business users, that maintenance burden is the real hidden cost. The repo is free. Your time debugging it at 2 AM is not.

Thunderbit as a Practical Amazon Scraper Alternative

offers an that extracts title, price, ASIN, ratings, brand, availability, shipping origin, and original URL — without writing code.

What that looks like in practice:

- 2-click scraping vs. setting up Python environments, dependencies, and proxy configs

- Instant Amazon template — no AI overhead, just 1-click extraction

- Browser scraping mode for pages requiring login (like review pages that frustrate GitHub scraper users)

- Cloud scraping for public product pages at speed (50 pages at a time)

- Free export to Google Sheets, Airtable, Notion, Excel — not just CSV/JSON

- Scheduled scraper for ongoing price monitoring

- AI adapts to layout changes — no maintenance burden on you

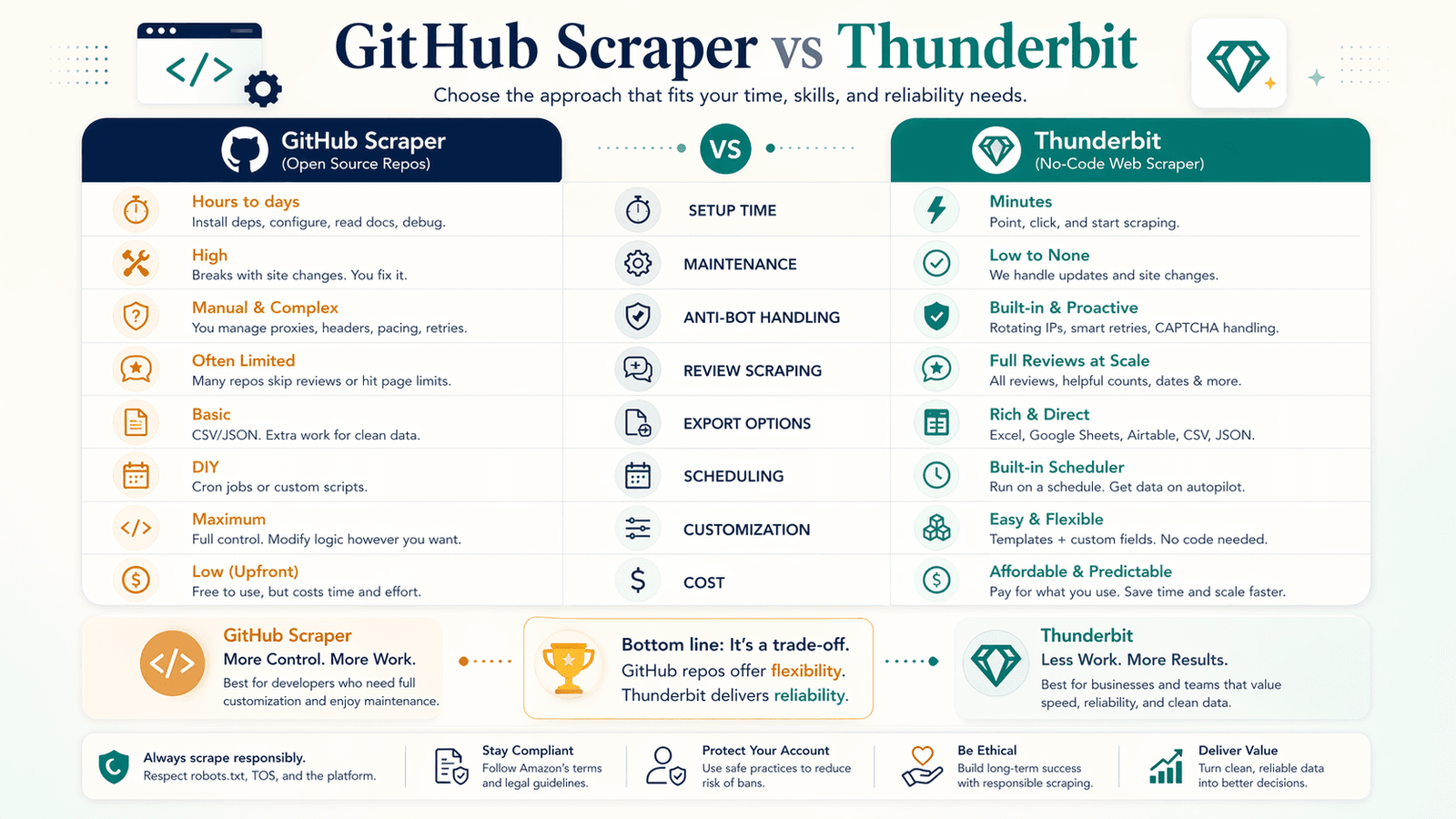

GitHub Amazon Scraper vs. Thunderbit: Honest Comparison

| Factor | GitHub Scraper (e.g., AmzPy) | Thunderbit |

|---|---|---|

| Setup time | 15–60 min (Python, dependencies, proxies) | ~2 min (install Chrome extension) |

| Maintenance | You fix breakages | AI adapts to layout changes |

| Anti-bot handling | DIY (proxies, headers, TLS) | Built-in (cloud + browser modes) |

| Review scraping (logged-in) | Complex session management | Browser scraping mode |

| Data export | CSV/JSON only | Sheets, Airtable, Notion, Excel, CSV, JSON |

| Scheduling | DIY (cron, Airflow, etc.) | Built-in scheduled scraper |

| Customization | Higher | Lower |

| Cost | Free (plus proxy costs) | Free tier available; credit-based |

The honest trade-off: GitHub repos offer more customization; Thunderbit offers more reliability. If your team cares about uptime over flexibility, the no-code path is usually the more rational choice.

Best Practices for Scheduled and Recurring Amazon Scraping

Most amazon scraper github projects are built for one-time runs, but real business use cases — price monitoring, inventory tracking, competitor analysis — require recurring scrapes. GitHub repos almost never include scheduling natively, leaving users to stitch together cron jobs, Airflow, or n8n workflows.

DIY Scheduling for GitHub Amazon Scrapers

The minimum viable recurring setup:

- Cron job on Linux or macOS to run the script on a schedule

- Append-only logs so you can debug failures after the fact

- Deduplication by ASIN + timestamp so you don't store duplicate data

- Failure alerts (even a simple email on non-zero exit) so you know when a run breaks at 3 AM

For more complex teams:

- n8n for lightweight workflow automation (mentioned frequently in community threads)

- Airflow for heavier scheduled pipelines

- Database-backed state if you need diffs and history

The key best practice is not the scheduler itself — it's state management. Track last successful run, last ASIN set, changed prices, and failed URLs.

Scheduling Made Simpler with Thunderbit

Thunderbit's lets you describe the interval in plain English, input URLs, and click "Schedule." The AI converts natural language into a cron schedule — no technical setup. For non-engineering ecommerce teams monitoring pricing or competitor product launches, that's a meaningful reduction in operational drag.

Best Practices for Recurring Amazon Scrapes

These apply no matter what tool you use:

- Deduplicate by ASIN + timestamp window — don't store the same product twice per run

- Store prices as numbers, not raw strings — saves cleanup downstream

- Append scrape timestamps to every row — you'll need them for trend analysis

- Track deltas, not just current state — "price dropped 12% since last week" is more useful than "price is $24.99"

- Alert on meaningful changes — a competitor dropping price by 15% is worth a notification; a 0.5% fluctuation is noise

- Think about data storage — flat files work for small runs; for 5k+ ASINs daily, consider a database or cloud spreadsheet

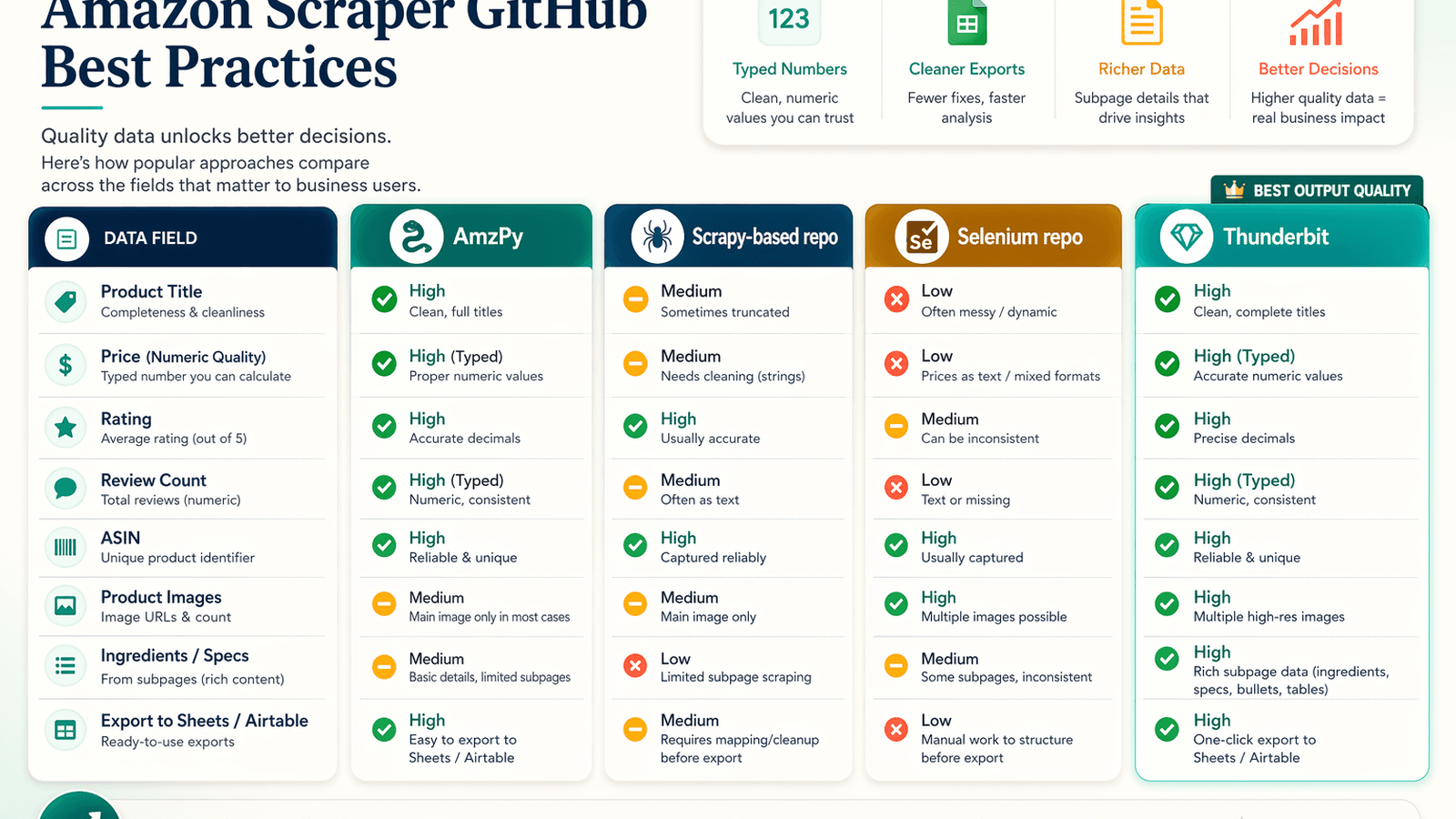

Side-by-Side Output Quality: What Each Amazon Scraper GitHub Approach Actually Returns

Nobody compares actual output quality across amazon scraper github repos. Users care deeply about data quality — "which tool gives the cleanest, most complete data" — but have to clone and test each repo themselves. This section fills that gap.

What Popular GitHub Repos Actually Extract (and Miss)

Based on README samples, public examples, and documented output formats:

| Approach | What It Clearly Extracts | Common Gaps / Trade-offs |

|---|---|---|

| amzpy | Title, price, currency, image URL, ratings, reviews, variants, ASIN | Product-page oriented; less rich on full reviews/spec sections |

| tducret/amazon-scraper-python | CSV with title, rating, review count, product URL, image URL, ASIN | Stale, listing-focused, weak anti-bot story |

| python-scrapy-playbook scraper | Search results, product pages, reviews, CSV/JSON pipelines | Tutorial-grade; relies on external proxy middleware; more cleanup likely |

| omkarcloud/amazon-scraper | Search, category, details, top reviews, many images/videos/specs | Not a raw scraper — it's a managed API service |

| Thunderbit Amazon template | Title, price, ASIN, brand, rating, reviews, availability, shipping origin, subpage enrichment | Less code-level control than custom scripts |

Output Quality Comparison Table

| Data Field | AmzPy | Scrapy-based Repo | Selenium Repo | Thunderbit |

|---|---|---|---|---|

| Product title | ✅ | ✅ | ✅ | ✅ |

| Price (numeric) | ⚠️ string | ✅ | ⚠️ string | ✅ (number type) |

| Rating | ✅ | ✅ | ✅ | ✅ |

| Review count | ❌ | ✅ | ✅ | ✅ |

| ASIN | ✅ | ✅ | ✅ | ✅ |

| Product images | ❌ | ⚠️ thumbnail only | ✅ | ✅ (full-res, exportable) |

| Ingredients/specs | ❌ | ❌ | ❌ | ✅ (via subpage scraping + AI) |

| Export to Sheets/Airtable | ❌ | ❌ | ❌ | ✅ free |

Why Data Formatting Matters for Business Users

Messy data creates hidden labor. Even a successful scraper can be an operational failure if:

- Prices are strings with currency symbols instead of clean numbers

- Missing values are inconsistent (empty string vs. null vs. "N/A")

- Images are only low-resolution thumbnails

- Review fields or specs need post-processing before analysis

For ecommerce ops teams, clean data directly impacts analysis speed and decision-making. Thunderbit's AI formats data by type — numbers as numbers, dates as dates, URLs as URLs — so it's ready to use immediately. GitHub repos vary widely on that front, and the cleanup time adds up fast.

Quick-Reference: Amazon Scraper GitHub Best Practices Checklist

- Check last commit date before cloning. Older than six months is a strong warning sign on Amazon.

- Search issues for "captcha," "503," "blocked," and "not working" before setup.

- Prefer

curl_cffior another browser-impersonating HTTP client over plainrequests. - Keep headers, TLS profile, language, and proxy geography consistent — no contradictions.

- Use sticky sessions for browsing flows; don't rotate every request blindly.

- Add randomized pacing and exponential backoff.

- Treat repeated CAPTCHA as a burned session, not a puzzle to brute-force.

- Use headless browsers only when HTTP clients can't reliably reproduce the page.

- Store checkpoints and state so failed runs can resume safely.

- Have a fallback plan — whether that's a managed API or a no-code tool like .

Legal and Ethical Considerations for Amazon Scraping in 2026

A few things worth knowing, briefly.

Amazon's posture is restrictive and getting more so. The strongest signals:

- Amazon's own help pages now return a saying: "To discuss automated access to Amazon data please contact api-services-support@amazon.com."

- Amazon's disallows a wide range of dynamic, review, profile, wishlist, and offer-listing paths.

- Amazon's explicitly objects to covert or disguised agent access, circumvention of security measures, and misidentifying an agent as Google Chrome. Amazon also about the incident.

- Amazon has against OpenAI crawlers in late 2025.

The practical risk is clearly higher when you move from public product pages to authenticated flows, disguised automation, or high-volume commercial extraction. This is not legal advice — consult your own legal team for your specific situation.

Key Takeaways: Getting Reliable Amazon Data Without Getting Banned

In order of importance:

- Audit before you clone. Assume most GitHub results are stale, tutorials, or wrappers around commercial APIs.

- Upgrade your network layer first. TLS fingerprinting and session coherence matter more than HTML selectors.

- Use sticky residential sessions, not random proxy chaos. Rotate between sessions, not inside them.

- Pace requests like a user, not a stress test. Randomized delays and exponential backoff are non-negotiable.

- Solve isolated CAPTCHAs; retire repeatedly challenged sessions. Don't brute-force a burned fingerprint.

- Have a fallback. Amazon will change something midweek, and your GitHub scraper will break. A maintained no-code tool like or a managed API can keep your data pipeline alive while you debug.

- Prioritize output quality. Clean, typed data saves more downstream time than a fast-but-messy scraper.

If you want reliability over customization, Thunderbit provides a maintained alternative — check out the or watch tutorials on the . Developers who want full control can absolutely use GitHub repos — but only with the anti-ban and maintenance practices covered in this guide.

FAQs

Is it legal to scrape Amazon product data with a GitHub scraper?

Amazon's Terms of Service restrict automated data collection, and Amazon has actively enforced this through cease-and-desist letters and technical countermeasures (especially in 2025-2026). Scraping publicly accessible product data is a gray area; scraping behind a login or disguising your bot as a real browser carries higher risk. This is not legal advice — consult your legal team for your specific use case.

How often do Amazon scraper GitHub repos break?

Frequently. Amazon changes page layouts, adds new anti-bot layers, and deprecates endpoints on a regular basis. In the audit for this article, only about 3 out of 8 widely surfaced repos were clearly functional in 2026. Even "working" repos often have open issues about CAPTCHAs and 503 errors. Expect to troubleshoot or update your setup every few weeks to months.

What is the best Amazon scraper on GitHub in 2026?

There's no single winner — it depends on your use case and technical comfort. For a lightweight, direct Python scraper, is one of the more current options. For broader coverage via a managed API, works but isn't truly DIY. Apply the freshness checklist from this article to evaluate any repo for yourself before committing.

Can Thunderbit scrape Amazon without coding?

Yes. Thunderbit's extracts product title, price, ASIN, ratings, brand, availability, and more with a single click. It supports browser scraping mode for login-required pages, cloud scraping for public pages at speed, scheduled scraping for recurring jobs, and free export to Google Sheets, Airtable, Notion, and Excel. You can get started by installing the .

How do I avoid getting my IP banned when scraping Amazon?

Use a layered approach: (1) switch from plain requests to a TLS-impersonating client like curl_cffi, (2) use residential proxies with sticky sessions instead of random datacenter rotation, (3) add randomized pacing and exponential backoff, (4) keep your full header set consistent with your browser profile and marketplace locale, and (5) treat repeated CAPTCHAs as a signal to retire the session, not a puzzle to solve indefinitely. For more detail, see the anti-ban decision matrix earlier in this article.